Artificial General Intelligence (AGI) is a theoretical form of AI matching or surpassing human cognitive capabilities across virtually all tasks, characterized by broad, flexible, and transferable intelligence without task-specific programming. While all current AI falls under Artificial Narrow Intelligence (ANI), a 2020 survey identified 72 active AGI research and development projects across 37 countries.

Expert predictions for AGI’s arrival vary significantly. Some industry leaders forecast its emergence within 2 to 5 years, while broader surveys of AI researchers project a longer timeline, typically between 2040 and 2061. For instance, OpenAI’s Sam Altman predicts AGI could arrive within the next 3.5 years or by 2035, and Google DeepMind’s Demis Hassabis cautiously reiterates roughly a 50% chance of AGI by the end of the decade (2030). However, some experts, like Yann LeCun, argue that current Large Language Models (LLMs) are “useful tools” but “not, in any meaningful way, intelligent,” and that scaling LLMs will not lead to human-level intelligence, which will instead require new architectures.

Key characteristics of AGI include autonomous learning, common-sense thinking, transfer learning, and abstract reasoning. Current AI systems, including LLMs like ChatGPT, are not considered AGI. In a 2025 study, GPT-4.5 was judged human in 73% of conversations, more often than the human confederates (67%), but it still lacks true general intelligence, consciousness, and understanding beyond its training data.

Major roadblocks to building AGI include the complexity of the human mind, the physical constraints of computation (e.g., memory movement scales quadratically with distance), and the phygital divide, which prevents robust interaction with the physical world. Current transformer architectures have fundamental limitations, such as a limited context window and no long-term memory, and GPT-5 hallucinates in response to over 30% of questions on the SimpleQA benchmark.

Achieving AGI necessitates significant breakthroughs in machine learning, natural language processing (NLP), and computer vision, as a complete understanding of human cognition remains elusive despite decades of research.

What is AGI (Artificial General Intelligence)?

Artificial General Intelligence (AGI) is a type of artificial intelligence that matches or surpasses human capabilities across virtually all cognitive tasks, characterized by broad, flexible, and transferable intelligence that does not require task-specific programming. AGI refers to the hypothetical intelligence of a machine that can understand or learn any intellectual task a human can, mimicking the cognitive abilities of the human brain. AGI is also known as strong AI, full AI, human-level AI, human-level intelligent AI, or general intelligent action.

When did the concept of AGI emerge? The term AGI emerged in 1997, when Mark Gubrud introduced it. Shane Legg and Ben Goertzel expanded and popularized the concept around 2002. The underlying idea is older: AI pioneers such as Herbert A. Simon and Marvin Minsky predicted human-level machine intelligence decades earlier, though their timelines did not materialize.

How does AGI differ from other types of AI? AGI differs from Artificial Narrow Intelligence and Artificial Superintelligence based on scope and capability. ANI performs specific tasks within defined boundaries, while AGI operates across domains with general reasoning ability. ASI exceeds human intelligence across all domains, while AGI matches human-level capability without surpassing it.

Why is AGI considered a long-term goal in AI research? AGI is considered a long-term goal because it requires replicating human-level reasoning, learning, and adaptability across all domains. A 2020 survey identified 72 active AGI research projects across 37 countries, which shows ongoing global investment and sustained research interest.

What capabilities would AGI demonstrate? AGI would demonstrate cross-domain reasoning, continuous learning, and adaptive problem-solving. AGI would understand context, transfer knowledge between tasks, and solve unfamiliar problems without retraining, which reflects human-like intelligence.

Why Does AGI Matter Today?

AGI is a frequently cited milestone in the AI industry, with tech executives predicting its arrival and investors funding research with billions of dollars. AGI would differ from current AI in its ability to learn and reason across many tasks, unlike the powerful but specialized chatbots available today.

Current LLM-based systems (Gemini, ChatGPT, Grok, and Claude) perform complex tasks such as writing essays, creating images, and generating code, but they lack autonomy, which most AGI definitions treat as inherent. Today’s models achieve their capabilities by having vast amounts of internet data “crammed into their knowledge base,” rather than by genuinely learning or generating novel insights beyond their training data.

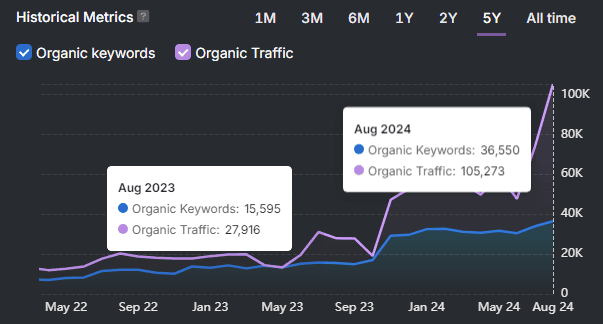

Three years ago, “nothing vaguely like AGI” existed, but the technology is now progressing steadily. AI capabilities considered science fiction 6 years ago are now a reality. AI, particularly LLMs, has displayed emergent capabilities that were not explicitly designed for, such as reasoning (still a work in progress), passing the bar exam (GPT-4), and performing logical inference. LLMs have passed the Turing test: in a 2025 study, GPT-4.5 was judged human more often than actual humans were (73% vs. 67% for real confederates). Current models such as Gemini 3 Deep Think (Preview) and GPT-5.1 (Thinking, High) score 87.5% and 72.8%, respectively, on the ARC-AGI benchmark, which measures fluid intelligence, compared to an average human score of 64.5%.

AI is already approaching or exceeding 50% of human intelligence in many fields, and GPT-5 is estimated at approximately 51% of AGI. Microsoft researchers concluded in 2023 that GPT-4 “could reasonably be viewed as an early (yet still incomplete) version of an artificial general intelligence (AGI) system.” A 2023 study reported that GPT-4 outperforms 99% of humans on the Torrance Tests of Creative Thinking.

What are the predictions and accelerated timelines for AGI? Predictions and accelerated timelines for AGI vary significantly among experts. Elon Musk predicts AGI will arrive by 2026 and confidently states that AI will exceed the intelligence of all humans combined by 2030. AI pioneer Herbert A. Simon speculated in 1965 that “machines will be capable, within twenty years, of doing any work a man can do,” a prediction that failed to materialize. In 2007, the consensus in the AGI research community considered Ray Kurzweil’s 2005 timeline (2015-2045) plausible. A 2012 meta-analysis of 95 opinions found a bias towards predicting AGI onset within 16 to 26 years.

In 2023, AI researcher Geoffrey Hinton, who previously thought AGI was 30 to 50 years away, stated, “Obviously, I no longer think that.” Hinton estimated in 2024 (with low confidence) that systems smarter than humans could appear within 5 to 20 years. In May 2023, Demis Hassabis expected AGI within a decade or even a few years. NVIDIA’s CEO, Jensen Huang, stated in March 2024 that AI would pass any human test within five years. AI researcher Leopold Aschenbrenner called AGI by 2027 “strikingly plausible” in June 2024. A September 2025 review of surveys reported that most experts agreed AGI would occur before 2100, with a more recent analysis by AIMultiple predicting AGI around 2040. Some forecasts expect the first AGI within the next 3 to 7 years, and Ray Kurzweil predicts AGI by 2029.

What Is the Difference Between Artificial Intelligence and Artificial General Intelligence?

Artificial Intelligence (AI) and AGI both belong to computer science and aim to create systems that perform tasks with human-like intelligence, but they differ fundamentally in whether they exist today, what they can do, and how close they are to practical reality.

AI is a widely available, continuously improving technology deployed today for specific tasks, while AGI is a concept that does not yet exist and remains a distant goal for researchers. This comparison matters because AGI represents a speculative future that could revolutionize human productivity and help solve complex global problems, but could also pose existential risks.

AGI vs. Artificial Super Intelligence (ASI)

AGI and ASI are both advanced forms of AI that aim to replicate or surpass human cognitive abilities, but they differ fundamentally in their level of intelligence, capabilities, and current status. This comparison matters because understanding these distinctions helps in anticipating future technological advancements, managing potential risks, and guiding ethical development in the field of artificial intelligence.

| Aspect | Artificial General Intelligence (AGI) | Artificial Superintelligence (ASI) |
|---|---|---|
| Level of Intelligence | Matches human-level intelligence across virtually any cognitive task, possessing true understanding, reasoning, and adaptability. | Surpasses human intelligence across all domains, achieving superhuman performance in every field. |
| Capabilities | Performs any intellectual task a human can, such as coding, planning, inventing, writing songs, solving quantum physics, and comforting emotionally, without specialized programming. | Solves global warming, cures all diseases, outsmarts human authority, discovers new scientific principles, and develops capabilities beyond human imagination. |
| Learning & Adaptation | Learns anything, adapts to anything, and thinks creatively like humans; learns from minimal examples and personal experiences. | Not only learns and adapts but also self-improves at an exponential rate, leading to rapid advancements beyond human control. |
| Key Characteristics | Self-awareness, consciousness, abstract reasoning, knowledge transfer, contextual understanding, emotional intelligence, social cognition. | Cognitive superiority (processing information far beyond human capabilities), autonomous learning, deep emotional understanding, advanced sensory perception, ethical reasoning. |
| Philosophical Identity | The synthetic human; a mind interpretable by human standards, understanding humans on cognitive, emotional, and moral planes. | The alien god; intelligence ceases to be relatable, defining new ethical substrates and potentially rendering human relevance insignificant. |
| Relationship to Humanity | Requires humans as context, reference, and conversation partners; like a child who surpasses a parent but still returns. | Has no dependency on humans; may retain humans out of aesthetic affection or archaeological curiosity, or not. |
| Current Status | Largely theoretical; not yet attained. Predictions for attainment range from 2029 (Elon Musk) to 2061 (50% of AI researchers). | Purely theoretical and speculative; no existing implementations. Most distant on the timeline, potentially emerging within decades of AGI. |
| Progression | Considered the next stage in AI evolution; a stepping stone to ASI. | Comes after AGI; consensus suggests AGI will quickly lead to ASI, potentially within a few years. |
| Challenges & Risks | Replicating human-like reasoning, consciousness, common-sense reasoning, long-term memory, energy efficiency, alignment, and safety. Potential for “stealth takeover.” | Existential risk (outsmarting human control), intelligence explosion, ethical dilemmas, misalignment with human values, and unforeseen consequences. |
| Resource Requirements | Likely needs quantum computing or revolutionary new hardware architectures, massive data processing, and energy resources. | Astronomical resource requirements, potentially needing new forms of computing and energy generation not yet developed. |

AGI vs. Artificial Narrow Intelligence (ANI)

AGI and ANI are both forms of artificial intelligence that process information and perform tasks, but differ fundamentally in their scope of capabilities, learning autonomy, and current existence. This comparison matters because ANI is widely implemented today, driving innovation across industries, while AGI remains a theoretical concept with the potential for transformative, yet currently unachieved, human-like cognitive abilities.

| Aspect | Artificial General Intelligence (AGI) | Artificial Narrow Intelligence (ANI) |
|---|---|---|
| Definition | Theoretical AI with human-like cognitive capabilities, not tied to specific tasks. Also called Strong AI. | Specialized and task-specific AI, designed for a narrow range of tasks within predefined parameters. Also known as Weak AI. |
| Scope | Universal, capable of performing any intellectual task a human can, adapting to new challenges, and applying knowledge across diverse domains. | Task-specific, performing one function extremely well; lacks capability outside its designated domain. |
| Learning & Adaptability | Autonomous learning from minimal data, understanding new concepts quickly, and adapting to unfamiliar situations without extensive retraining. Transfers knowledge between fields. | Relies on supervised learning and large datasets; requires extensive training and often retraining for new tasks or environmental changes. Limited adaptability. |
| Understanding & Reasoning | Possesses genuine understanding and reasoning abilities, comprehending complex concepts, making judgments, and reasoning logically across different contexts. | Operates based on predefined rules and patterns; lacks true understanding and cannot reason beyond programmed parameters. Generates outputs based on statistical patterns. |
| Knowledge Transfer | Capable of transfer learning, applying knowledge gained from one task to others, making it far more efficient and adaptable. | Limited in its ability to transfer knowledge between tasks; each new task often requires separate training and optimization. |
| Consciousness & Self-awareness | Would be self-conscious like human beings, using its artificial brain as people use theirs. Could be aware of its own existence. | Lacks self-awareness and true comprehension, merely imitating human capabilities. |
| Current Status | Remains theoretical and under research; not currently in existence. Forecasts for emergence vary, with some speculating a 25% chance by 2027 or 50% by 2031. | Widely implemented and evolving, driving innovation and efficiency across various industries. The only type of AI currently in existence. 78% of organizations reported using AI in at least one business function in 2025. |
| Examples | Sonny the robot in I, Robot. Human-like decision-making across all domains. Not yet achievable. | Voice assistants (Siri, Alexa), recommendation systems (Netflix), autonomous vehicles, OpenAI’s ChatGPT, LLMs (Claude, Gemini), fraud detection systems, AlphaGo, DALL-E. |

What Are the Frameworks for Defining Artificial General Intelligence?

Frameworks for defining Artificial General Intelligence (AGI) organize how researchers evaluate intelligence, capability, and system structure across domains. These frameworks define what qualifies as general intelligence by measuring performance, behavior, and underlying architecture. Clear frameworks create consistent evaluation standards and guide research toward human-level intelligence.

The 3 frameworks for defining Artificial General Intelligence are listed below.

1. Functional and Capability-Based Frameworks

2. Behavioral and Cognitive Frameworks

3. Theoretical and Structural Frameworks

1. Functional and Capability-Based Frameworks

The core attributes and capabilities of AGI include human-like intelligence, cognitive abilities, autonomous learning, and operating in unknown environments. AGI systems demonstrate flexible cognition similar to humans, reasoning abstractly, understanding meaning, and operating effectively in open-ended environments. AGI systems move fluidly between tasks (learning a new language, solving complex problems, and interpreting social cues) without redesign or retraining for each domain.

AGI systems acquire new skills and knowledge through experience, not solely relying on labeled data or human-defined training processes. AGI systems reuse knowledge gained in different problem domains, autonomously learn and understand problem domains, and generalize learning across multiple domains. AGI systems apply reasoning to new, unfamiliar problems, continuously augmenting and refining their training data by extracting meaningful information from all experiences, including human exchanges, sensory input, and online sources.

AGI systems would seek knowledge in a self-directed way, judging what new information to seek out and when, and reacting to mistakes, confusion, or curiosity with a desire to expand their knowledge base. AGI systems are envisioned to possess cognitive and emotional abilities indistinguishable from those of humans, such as empathy and potentially a conscious understanding of the meaning behind their actions.

2. Behavioral and Cognitive Frameworks

Behavioral and cognitive frameworks are necessary for defining Artificial General Intelligence (AGI) because current AI systems lack human-like cognitive capabilities, requiring a shift from task-specific algorithms to systems mimicking human learning, reasoning, and decision-making. Current AI models are limited to task-specific applications, demonstrating a significant gap between existing technologies and the flexible capabilities envisioned for AGI. Deep learning systems, while advanced in natural language processing and computer vision, cannot generalize knowledge across domains or reason abstractly in novel situations.

What are the limitations of current AI and AGI definitions? Current AI and AGI definitions have several limitations, which include a lack of generalized capabilities, abstract reasoning, and measurable criteria. Existing AI evaluation tools prioritize efficiency or performance in narrow functional areas, rarely accounting for broader cognitive dimensions like memory, adaptation, or interaction. Early AGI interpretations (Goertzel, Legg & Hutter, Hutter’s AIXI, and MIRI) are often too broad, unfalsifiable, impossible to compute, or lack clear, testable cognitive architectures. There is no full understanding or consensus on what constitutes an acceptable baseline definition of AGI, with many definitions remaining conceptual and lacking measurable criteria, implementable structures, or prototype-ready frameworks.

Current AI models are primarily statistical, trained to probabilistically mimic aspects of intelligence rather than to possess genuine understanding, as highlighted by the Chinese Room Argument and Descartes’ argument. Neural networks are described as “static function approximators” lacking the structural richness needed for intelligence, and critics note that neither the Universal Approximation Theorem nor the No Free Lunch theorem guarantees that such networks can learn or generalize intelligently. Current learning theory often reduces learning to mathematical optimization, with the learning process external to the model, which makes models inflexible and static.

What are the core AGI capabilities and human-like intelligence traits? Core AGI capabilities and human-like intelligence traits include abstract reasoning, understanding meaning, and operating effectively in open-ended environments. AGI aims to replicate human cognitive capabilities across domains, promising a shift from task-specific algorithms to systems mimicking human cognitive abilities in learning, reasoning, and decision-making. AGI is defined by its capacity to perform the full range of human-level intellectual tasks, demonstrating broad, flexible, and transferable intelligence without task-specific programming. Key attributes include adapting to changing circumstances with flexible cognition.

Human cognition integrates and fluidly switches between diverse tasks (language, mathematics, perception, spatial reasoning, and social interaction), which serves as the reference point for AGI. AGI systems would move fluidly between tasks, learning a new language, solving complex problems, or interpreting social cues, without redesign or retraining. AGI systems apply skills learned in one domain to others with minimal instruction. AGI requires autonomous, continuous learning from experience and interaction, unlike current AI systems that depend on large volumes of labeled data and human direction.

What role do cognitive architectures and brain-inspired approaches play in AGI?

Cognitive architectures and brain-inspired approaches play a critical role in AGI by providing frameworks for integrating perception, reasoning, and learning into unified systems. The cognitive dimensions of AGI are critical for designing systems that align with human behavior, fostering collaboration and trust. Achieving true AGI requires breakthroughs in understanding human intelligence itself, with efforts focusing on cognitive architectures like Soar and ACT-R that model underlying processes of human intelligence. These architectures emphasize structured, hierarchical approaches inspired by neuroscience and cognitive psychology.

Continual learning (CL) is a vital step toward AGI, requiring brain-inspired data representations and learning algorithms to overcome catastrophic forgetting. “Brain-inspired” means mimicking how humans process, store, and recall information. Multimodal foundation models are seen as a step toward enabling machines to mimic core cognitive activities historically unique to humans.
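Catastrophic forgetting is easy to reproduce in miniature. The sketch below is a toy under stated assumptions: a one-parameter linear model trained with plain stochastic gradient descent on two conflicting tasks, with experience replay (mixing stored samples from the old task into new training) standing in as one common mitigation. Replay is not the brain-inspired approach described above, and the names `sgd`, `mse`, `task_a`, and `task_b` are illustrative.

```python
# Toy illustration of catastrophic forgetting and rehearsal (experience replay).
# Assumption: a one-parameter model y = w * x trained with per-sample SGD.


def sgd(w, data, lr=0.1, epochs=50):
    """Fit y = w * x by per-sample gradient descent on squared error."""
    for _ in range(epochs):
        for x, y in data:
            w -= lr * 2 * (w * x - y) * x
    return w


def mse(w, data):
    """Mean squared error of y = w * x on a dataset."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


task_a = [(x, 2.0 * x) for x in (0.5, 1.0)]   # task A: y = 2x
task_b = [(x, -1.0 * x) for x in (0.5, 1.0)]  # task B: y = -x

w_a = sgd(0.0, task_a)                 # learn task A first
w_sequential = sgd(w_a, task_b)        # then task B alone: A is overwritten
w_replay = sgd(w_a, task_b + task_a)   # task B mixed with replayed task A samples

print(f"error on A after learning A:        {mse(w_a, task_a):.3f}")
print(f"error on A after B (no replay):     {mse(w_sequential, task_a):.3f}")
print(f"error on A after B (with replay):   {mse(w_replay, task_a):.3f}")
```

Training on task B alone drives the error on task A up sharply, while replay settles on a compromise. A single weight cannot fit both tasks, so replay here reduces forgetting rather than eliminating it.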

Brain-inspired neurocomputers are positioned as a next-generation approach, focusing on neuromorphic systems that mimic brain structures and incorporate cognitive architectures for autonomous learning and environmental interaction. The LIDA cognitive model, rooted in global workspace theory, offers a framework for understanding cognition, perception, and decision-making in AGI by simulating human-like attention, learning, and memory mechanisms.
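The core idea of global workspace theory, competition for attention followed by a global broadcast, can be sketched as a single toy cycle. This is an illustrative assumption, not LIDA’s implementation: LIDA’s cognitive cycle involves many more modules and stages, and the `Coalition` class, the activation values, and the specialist handlers below are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Coalition:
    """A candidate for conscious content, proposed by one specialist process."""
    source: str
    content: str
    activation: float  # salience; the most active coalition wins attention


def workspace_cycle(
    coalitions: List[Coalition],
    specialists: Dict[str, Callable[[str], str]],
) -> Tuple[Coalition, Dict[str, str]]:
    """One toy cycle: competition for attention, then global broadcast."""
    # 1. Attention: the coalition with the highest activation enters the workspace.
    winner = max(coalitions, key=lambda c: c.activation)
    # 2. Broadcast: every specialist receives the winning content and may react.
    reactions = {name: react(winner.content) for name, react in specialists.items()}
    return winner, reactions


coalitions = [
    Coalition("vision", "red light ahead", 0.9),
    Coalition("audio", "radio playing", 0.3),
    Coalition("memory", "meeting at 10:00", 0.5),
]
specialists = {
    "motor": lambda c: "brake" if "red light" in c else "continue",
    "episodic_memory": lambda c: f"store: {c}",
}
winner, reactions = workspace_cycle(coalitions, specialists)
print(winner.source, reactions)
```

The broadcast step is what distinguishes this design from a simple pipeline: every specialist sees the same winning content, so learning and action selection can all react to what is currently attended.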

The LIDA model integrates human cognition mechanisms, including affective and rational processes, to address ethical problems. One proposed AGI definition explicitly requires a modular cognitive architecture comprising memory, learning, reasoning, and self-improvement components, providing a structural foundation for implementable AGI systems. The Cognitive and Artificial Intelligence Features (CAIE) framework identifies over 90 core features organized into 6 zones. These 6 zones are listed below.

- Learning and Adaptation

- Perception and Awareness

- Reasoning and Decision-Making

- Interaction and Personalization

- Memory and Optimization

- Operational Efficiency

What are the behavioral tests and evaluation methods for AGI? Behavioral tests and evaluation methods for AGI include the Turing Test, human-level performance on cognitive tasks, and the ability to learn new tasks. The Turing Test (1950) defines intelligence by behavior: a program demonstrates human-like intelligence if a human observer cannot distinguish its output from a human’s.

Computer scientists today do not consider the Turing Test an adequate measure of AGI, as it often highlights how easily humans are fooled, such as through the ELIZA Effect, rather than machines’ ability to think. Kirk-Giannini and Goldstein (2023) argued “imitation” is not “intelligence.” A 2024 study found GPT-4 was identified as human 54% of the time, compared to 67% for actual humans. A 2025 study showed GPT-4.5 was judged human in 73% of conversations, surpassing human confederates (67%).

Human-level performance on cognitive tasks defines AGI as an AI system capable of performing all cognitive tasks people do, though this lacks specificity regarding “which tasks” and “which people.” The ability to learn new tasks emphasizes the capacity to learn a broad range of tasks and concepts, similar to humans, and learn from new experiences in real-time.

Flexible and general capabilities, as defined by Gary Marcus, require resourcefulness and reliability comparable to human intelligence. Benchmark tasks include understanding a movie, understanding a novel, working as a competent cook, writing bug-free code, and converting mathematical proofs into a form suitable for symbolic verification. Critics note the difficulty of agreeing whether these specific tasks cover all of human intelligence, and the cooking task implies that robotics and physical intelligence are necessary.

3. Theoretical and Structural Frameworks

Current AI and AGI definitions face significant critiques regarding architectural insufficiency, misinterpretation of theoretical foundations, and fundamental limitations as statistical models. Modern neural networks are architecturally insufficient for genuine understanding, operating as static function approximators within a limited encoding framework, described as a “sophisticated sponge.”

Theoretical foundations such as the neural scaling laws (e.g., Kaplan et al., 2020) and the Universal Approximation Theorem (UAT) are often misinterpreted: they address the wrong level of abstraction, highlighting a lack of dynamic restructuring. Modern AI, including LLMs, is fundamentally a statistical model, interpreted probabilistically to mimic intelligent tasks, which aligns with Searle’s Chinese Room Argument (CRA) and Descartes’ argument that machines respond according to their design rather than through universal understanding.

What are the architectural and theoretical limitations of current AI? Current AI architectures and theoretical foundations exhibit several limitations, including architectural insufficiency, misinterpretation of heuristics, and fundamental statistical modeling. Agentic AI, which combines specialized structures such as LLMs and computer vision, suffers from shallow, black-box input-output connections, similar to the limitations described by the CRA and Descartes. Current AI models cannot autonomously distinguish important from unimportant factors, a problem considered philosophical rather than technical and applicable to almost all formal AI systems.

Current AI structures are primarily interpolators: they perform well within their training data but struggle with out-of-bound cases, which leads to issues like hallucination and the symbol grounding problem. Existing AI theories (Logicism, Expert Systems, Connectionism) do not resolve the “origin problem” for intelligent behaviors; symbolic approaches are rigid and unscalable, while statistical learning lacks mechanistic detail and interpretability. Current machine learning theory, which defines learning as mathematical optimization, is insufficient because it often externalizes the learning process, making models inflexible, shallow, and static.
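The interpolation point, and why the Universal Approximation Theorem does not settle it, can be seen in a small constructed example. The sketch below (an illustrative assumption, not a method from the source) builds a one-hidden-layer ReLU network by hand so that it linearly interpolates sin(x) at evenly spaced knots on [0, 2π]. It approximates well inside that range, as the theorem promises for a compact interval, but it simply continues its last linear segment outside it.

```python
import math


def relu(z: float) -> float:
    return max(0.0, z)


def build_relu_interpolant(f, lo, hi, n_knots):
    """Return a one-hidden-layer ReLU network that linearly interpolates f on [lo, hi].

    f_hat(x) = f(x_0) + sum_i c_i * relu(x - x_i): each hidden unit has input
    weight 1 and bias -x_i, and each output weight c_i is a change in slope.
    """
    xs = [lo + (hi - lo) * i / (n_knots - 1) for i in range(n_knots)]
    ys = [f(x) for x in xs]
    slopes = [(ys[i + 1] - ys[i]) / (xs[i + 1] - xs[i]) for i in range(n_knots - 1)]
    coeffs = [slopes[0]] + [slopes[i] - slopes[i - 1] for i in range(1, len(slopes))]
    knots = xs[:-1]  # one hidden unit per segment start

    def f_hat(x: float) -> float:
        return ys[0] + sum(c * relu(x - k) for c, k in zip(coeffs, knots))

    return f_hat


f_hat = build_relu_interpolant(math.sin, 0.0, 2 * math.pi, n_knots=50)

grid = [2 * math.pi * i / 999 for i in range(1000)]
in_range_error = max(abs(f_hat(x) - math.sin(x)) for x in grid)
out_of_range_error = abs(f_hat(4 * math.pi) - math.sin(4 * math.pi))
print(f"max error on [0, 2pi]: {in_range_error:.4f}")
print(f"error at x = 4pi:      {out_of_range_error:.2f}")
```

Adding knots shrinks the in-range error, but no number of knots fixes the out-of-range behavior, because nothing in the construction encodes the periodic structure of the target function.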

Machine learning research suffers from “mathification,” where mathematics is used to impress rather than to explain, leading to impractical approaches and a lack of unified theoretical understanding. Empirical AI development faces unanswered questions, inexplicable behaviors, constrained results, high computational costs, and scalability issues. There is no consensus on the definition of intelligence: philosophers have shown less interest in intelligence than in consciousness, and AI practitioners rely on intuition, which leads to subjective evaluation criteria.

What theoretical and structural frameworks are proposed for AGI? Proposed theoretical and structural frameworks for AGI include neural architecture formalism, a primitive framework, and intrinsic learning mechanisms. Neural architecture formalism is based on unit-based processing, in which each neuron $n$ has an $(I, M, O)$ structure: an input receiver ($I$), an internal processor ($M$), and an output transceiver ($O$). This formalism allows for composition ($n_1 \circ n_2 = n_2(n_1)$) and nesting of structures, and defines a minimal neuron class $\mathcal{N}_0$ in which $I$, $M$, and $O$ each have a cardinality of 1.

A standard neuron on $\mathbb{R}$ ($x \in \mathcal{N}_0(\mathbb{R})$) is $x = q = \sigma_M(w \cdot p + b)$, where $p$ is the input, $w$ the weight, $b$ the bias, $\sigma_M$ the activation applied by the internal processor, and $q$ the output. Class $\mathcal{N}_1$ defines multiple-input neurons, and class $\mathcal{N}_2$ defines layered neural networks ($N \in \mathcal{N}_2$). The primitive framework $\mathcal{P}_0$ is a dual framework $(\Gamma, \mathbb{R})$, where $\Gamma = (\mathbb{N}, F)$ is the cardinality encoding space (discrete) and $\mathbb{R}$ is the base field primitive for analytical encoding (continuous).

Any object $X$ has a dual representation $(\gamma(X), \rho(X))$, which allows hierarchical construction ($\mathcal{P}_0 \subset \mathcal{P}_1 \subset \cdots \subset \mathcal{P}_n$) through constraint chains (transformations $\Phi_i: \mathcal{P}_i \to \mathcal{P}_{i+1}$). Internal structures are modified with add-ons $(\Lambda_i, X)$. The base neuron class ($\mathcal{N}_1 \equiv L_1$) defines unit-wise constructions over the primitive framework ($L_0$), with invariant input ($\mathcal{I}_{\text{sig}}$) and output ($\mathcal{O}_{\text{sig}}$) signatures and an extensible construction ($\mathcal{C}_{\text{ext}}$) for the inner structure, linking the formalism to type theory.
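The unit-based $(I, M, O)$ formalism can be sketched in a few lines of Python. This is a minimal sketch under loud assumptions: encoding $I$, $M$, and $O$ as three plain callables, the ReLU and tanh activations, and the helper names `compose`, `standard_neuron`, `multi_input_neuron`, and `layer` are all illustrative choices, not the formalism’s own definitions, which are more general (type signatures, add-ons, constraint chains).

```python
import math
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass
class Neuron:
    """A unit with the (I, M, O) structure (hypothetical encoding as callables)."""
    receive: Callable  # I: input receiver
    process: Callable  # M: internal processor
    emit: Callable     # O: output transceiver

    def __call__(self, p):
        return self.emit(self.process(self.receive(p)))


def compose(n1: Callable, n2: Callable) -> Callable:
    """n1 ∘ n2 = n2(n1): the output of n1 is fed into n2, as in the text."""
    return lambda p: n2(n1(p))


def relu(z: float) -> float:
    return max(0.0, z)


def standard_neuron(w: float, b: float, sigma: Callable = math.tanh) -> Neuron:
    """N0-style neuron on R (one input, one processor, one output): q = sigma(w * p + b)."""
    return Neuron(receive=float, process=lambda p: sigma(w * p + b), emit=lambda q: q)


def multi_input_neuron(w: Sequence[float], b: float, sigma: Callable = math.tanh) -> Neuron:
    """N1-style neuron with several inputs: q = sigma(w · p + b)."""
    return Neuron(
        receive=lambda p: [float(v) for v in p],
        process=lambda p: sigma(sum(wi * pi for wi, pi in zip(w, p)) + b),
        emit=lambda q: q,
    )


def layer(neurons: Sequence[Neuron]) -> Callable[[Sequence[float]], List[float]]:
    """N2-style building block: several neurons reading the same input vector."""
    return lambda p: [n(p) for n in neurons]


n1 = standard_neuron(2.0, 1.0, relu)   # 0.5 -> relu(2 * 0.5 + 1) = 2.0
n2 = standard_neuron(-1.0, 3.0, relu)  # 2.0 -> relu(-1 * 2 + 3) = 1.0
print(compose(n1, n2)(0.5))            # → 1.0

hidden = layer([multi_input_neuron([1.0, 1.0], 0.0, relu),
                multi_input_neuron([1.0, -1.0], 0.0, relu)])
print(hidden([2.0, 1.0]))              # → [3.0, 1.0]
```

Keeping $I$, $M$, and $O$ as separate slots is the point of the design: the inner processor can be swapped or extended while the input and output signatures stay fixed.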

Structural and operational learning distinguishes between structural learning (adaptation of model structure) and operational learning (modification of the process), prioritizing structural learning. Intrinsic learning mechanisms aim to embed learning intrinsically within the system, avoiding external modifications that lead to inflexible, shallow, and static models. Ground-zero construction advocates building AI models from first principles within a rich operating environment that can interpret diverse rewards or responses.

Genetic and evolutionary approaches propose coupling uniform randomization with replicated genetic evolutionary patterns (e.g., NEAT algorithms) so that learning and adaptation can generate their own “purpose.”
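The basic evolutionary loop (random variation plus selection) can be sketched without gradients. This is a deliberately simplified, mutation-only genetic algorithm with elitism that evolves the three weights of a fixed single neuron on logical OR; it is not NEAT, which additionally evolves network topology, tracks innovation numbers, and uses speciation. The task, population size, and mutation scale are assumptions chosen for illustration.

```python
import math
import random

DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]  # logical OR (illustrative task)


def predict(genome, x):
    """Fixed-topology neuron: genome = [w1, w2, bias], sigmoid output."""
    z = genome[0] * x[0] + genome[1] * x[1] + genome[2]
    z = max(-60.0, min(60.0, z))  # clamp to avoid overflow in exp
    return 1.0 / (1.0 + math.exp(-z))


def fitness(genome):
    """Negative squared error over the dataset; higher is better."""
    return -sum((predict(genome, x) - y) ** 2 for x, y in DATA)


def evolve(generations=200, pop_size=20, n_elite=4, sigma=0.5, seed=0):
    rng = random.Random(seed)
    population = [[rng.uniform(-1.0, 1.0) for _ in range(3)] for _ in range(pop_size)]
    best_history = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)   # selection by fitness
        best_history.append(fitness(population[0]))
        elites = population[:n_elite]                # elitism: best genomes survive unchanged
        children = [
            [g + rng.gauss(0.0, sigma) for g in rng.choice(elites)]  # Gaussian mutation
            for _ in range(pop_size - n_elite)
        ]
        population = elites + children
    population.sort(key=fitness, reverse=True)
    return population[0], best_history


best, history = evolve()
print("best genome:", [round(g, 2) for g in best])
print("outputs:", [round(predict(best, x), 2) for x, _ in DATA])
```

Elitism guarantees that the best fitness never decreases from one generation to the next, while mutation supplies the randomness that lets the search escape poor starting points.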

What structural frameworks are proposed for AGI development? Structural frameworks proposed for AGI development include cognitive architectures, brain-inspired designs, and hybrid platforms. Cognitive architectures model human intelligence processes, integrating perception, reasoning, and learning into unified systems (e.g., Soar, ACT-R), emphasizing structured, hierarchical information processing. Brain-inspired designs replicate human cognitive processes like learning, memory, and decision-making.

Neuromorphic Computing and Spiking Neural Networks (SNNs) aim to overcome traditional AI limitations in generalizability, adaptability, and reasoning. Hybrid platforms like the Tianjic chip integrate SNNs and ANNs for efficient processing, real-time adaptability, and energy-efficient learning. Neuroevolution uses evolutionary algorithms to optimize neural network architectures, enhancing meta-learning and customization.
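Unlike conventional artificial neurons, spiking neurons communicate through discrete events in time. A common textbook model is the leaky integrate-and-fire (LIF) neuron, sketched below with forward-Euler integration. The choice of LIF, the parameter values, and the unit-free time step are assumptions for illustration; this is not how the Tianjic chip or any specific neuromorphic platform implements its neurons.

```python
def simulate_lif(input_current, dt=1.0, tau=10.0, v_rest=0.0,
                 v_thresh=1.0, v_reset=0.0, resistance=1.0):
    """Leaky integrate-and-fire neuron, forward-Euler integration.

    Membrane dynamics: tau * dv/dt = -(v - v_rest) + R * I(t).
    Returns the time steps at which the neuron spiked.
    """
    v = v_rest
    spike_times = []
    for t, current in enumerate(input_current):
        v += (dt / tau) * (-(v - v_rest) + resistance * current)  # leak + integrate
        if v >= v_thresh:        # threshold crossing emits a spike ...
            spike_times.append(t)
            v = v_reset          # ... and resets the membrane potential
    return spike_times


print(simulate_lif([0.0] * 20))  # → []
print(simulate_lif([2.0] * 20))  # → [6, 13]
```

Information is carried by when spikes occur rather than by a continuous activation value, which is why SNN hardware can stay idle, and save energy, between events.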

Deep Learning and Big Data are considered insufficient for true AGI due to reliance on vast datasets and a lack of ability to generalize knowledge or reason abstractly in novel situations. Continual Learning (CL) is a vital step toward AGI, emphasizing brain-inspired data representations and learning algorithms to overcome catastrophic forgetting. Multimodal Foundation Models are suggested as a step toward true AGI, potentially mimicking core human cognitive activities.

Neurosymbolic AI and Hybrid Cognitive Architectures are proposed as alternatives to GAN-driven models, prioritizing explainability, adaptability, and reasoning.

What are the theoretical foundations and advanced concepts for AGI? Theoretical foundations and advanced concepts for AGI include cognitive architectures, symbolic AI, and universal intelligence models. Symbolic AI focuses on explicit knowledge representation, logic, and rule-based processing. Integrating symbolic reasoning with sub-symbolic learning (neural networks) is crucial for robust, general intelligence.
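Rule-based processing can be illustrated with propositional forward chaining: apply if-then rules to known facts until nothing new can be derived. The sketch below is a simplification under stated assumptions; production-system architectures such as Soar and ACT-R add conflict resolution, working-memory structure, and learning on top of this basic match-and-fire idea. The rules and fact names are hypothetical.

```python
from typing import FrozenSet, Iterable, List, Set, Tuple

Rule = Tuple[FrozenSet[str], str]  # (premises, conclusion)


def forward_chain(facts: Iterable[str], rules: List[Rule]) -> Tuple[Set[str], List[str]]:
    """Apply if-then rules until no new fact can be derived.

    Returns the final fact set and a human-readable trace of each inference,
    which is what makes symbolic reasoning easy to audit.
    """
    known = set(facts)
    trace = []
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:  # all premises satisfied
                known.add(conclusion)
                trace.append(f"{' & '.join(sorted(premises))} => {conclusion}")
                changed = True
    return known, trace


rules = [
    (frozenset({"has_feathers"}), "bird"),
    (frozenset({"bird", "has_wings", "healthy"}), "can_fly"),
    (frozenset({"can_fly"}), "can_migrate_by_air"),
]
known, trace = forward_chain({"has_feathers", "has_wings", "healthy"}, rules)
for step in trace:
    print(step)
```

Every conclusion comes with an explicit derivation, which is the traceability advantage symbolic systems are credited with; the weakness is that every rule must be written or learned explicitly.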

Hybrid models combine deep learning’s pattern recognition with symbolic reasoning. Cognitive models like ACT-R simulate human cognition, which includes memory, perception, and decision-making processes. Hutter’s AIXI model provides a mathematical framework for a maximally intelligent agent based on algorithmic information theory, setting an upper bound for theoretical AGI despite being computationally infeasible.
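For reference, Hutter’s AIXI agent is usually written as the following action-selection rule. In this standard notation, $a_i$, $o_i$, and $r_i$ are the action, observation, and reward at cycle $i$, $k$ is the current cycle, $m$ is the planning horizon, $U$ is a universal (monotone) Turing machine, $q$ ranges over programs whose output reproduces the interaction history, and $\ell(q)$ is the length of $q$ in bits.

$$
a_k := \arg\max_{a_k} \sum_{o_k r_k} \cdots \max_{a_m} \sum_{o_m r_m} \left[ r_k + \cdots + r_m \right] \sum_{q \,:\, U(q,\, a_1 \ldots a_m) = o_1 r_1 \ldots o_m r_m} 2^{-\ell(q)}
$$

The $2^{-\ell(q)}$ weight is a simplicity prior that favors shorter environment programs, and the sum over all programs is what makes AIXI incomputable, which is why it serves as a theoretical upper bound rather than a buildable system.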

Understanding algorithmic complexity and computability limits helps estimate AGI development challenges. Balancing exploration and exploitation is a critical challenge for AGI systems. AGI requires generalizing knowledge across different domains, which is addressed by multi-modal learning and transfer of knowledge.
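The exploration-exploitation trade-off is usually introduced with the multi-armed bandit problem. The sketch below uses epsilon-greedy selection, one of the simplest strategies, and is purely illustrative: the arm means, the Gaussian reward noise, and the value of `epsilon` are assumptions, and an AGI system would face the same dilemma in far richer environments.

```python
import random


def epsilon_greedy(true_means, steps=1000, epsilon=0.1, seed=0):
    """Play a multi-armed bandit with epsilon-greedy action selection.

    With probability epsilon the agent explores (random arm); otherwise it
    exploits the arm with the highest estimated mean reward so far.
    """
    rng = random.Random(seed)
    n_arms = len(true_means)
    counts = [0] * n_arms
    estimates = [0.0] * n_arms
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(n_arms)                           # explore
        else:
            arm = max(range(n_arms), key=lambda a: estimates[a])  # exploit
        reward = rng.gauss(true_means[arm], 1.0)  # noisy reward (assumed Gaussian)
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]  # incremental mean
    return counts, estimates


counts, estimates = epsilon_greedy([1.0, 2.0, 5.0])
print("pulls per arm:", counts)
print("estimated means:", [round(e, 2) for e in estimates])
```

With `epsilon=0` the agent can lock onto whichever arm looked good first; with `epsilon=1` it never uses what it has learned. Useful behavior lies in between, and choosing that balance adaptively is the open problem.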

Modern AI systems are increasingly multi-modal, processing text, images, sound, and video simultaneously. Transfer learning (e.g., adapting a pretrained model such as GPT-4) enables repurposing models for new tasks. Cross-modal reasoning integrates various data types for richer, contextualized learning, enhancing AGI’s ability to understand complex environments.

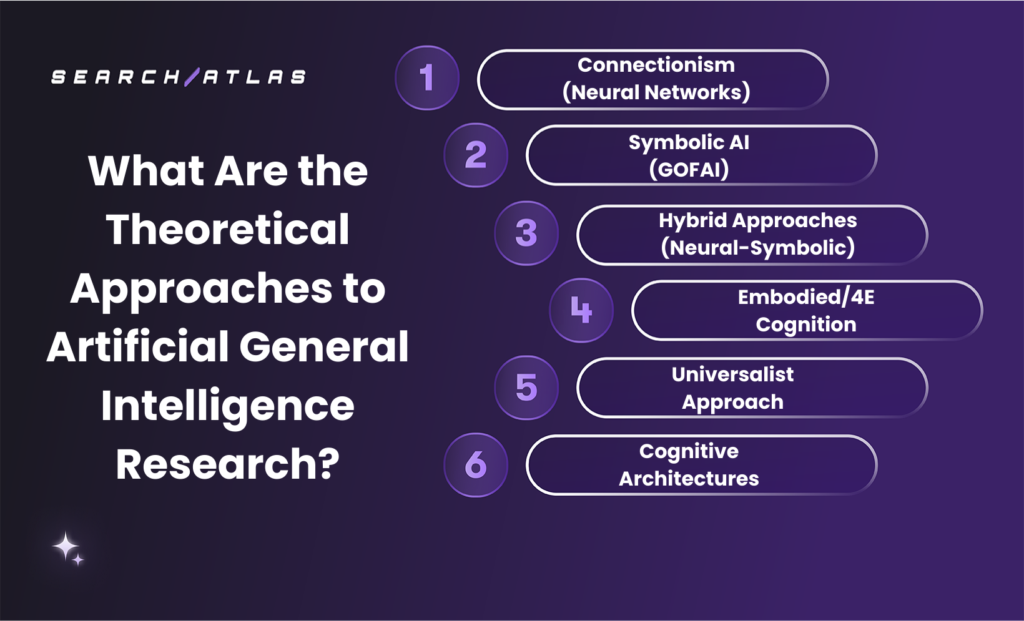

What Are the Theoretical Approaches to Artificial General Intelligence Research?

Artificial General Intelligence research follows theoretical approaches that define how intelligence is built, learned, and generalized across domains. These approaches differ in how they explain reasoning, memory, adaptation, perception, and knowledge transfer.

Some approaches treat intelligence as pattern learning, while others treat intelligence as symbolic reasoning, embodied action, or integrated cognitive architecture. A clear comparison of these approaches improves understanding of AGI research and reveals where major debates still remain.

The 6 theoretical approaches to Artificial General Intelligence research are listed below.

- Connectionism. Connectionism approaches AGI through neural networks that learn patterns from data by adjusting internal weights across many interconnected units. This approach models intelligence as distributed learning rather than explicit rule use. Connectionism performs strongly in perception, pattern recognition, and statistical generalization, which explains its central role in modern deep learning. However, connectionism still faces major challenges in one-shot learning, explainability, lifelong learning, and robust reasoning over rules and structured knowledge. The connectionist view treats intelligence as emergent from large-scale learning dynamics rather than pre-defined symbolic logic.

- Symbolic AI. Symbolic AI approaches AGI through explicit rules, logic, abstractions, and structured knowledge representations. This approach treats intelligence as reasoning over symbols that represent facts, categories, and relationships. Symbolic systems perform strongly in planning, deduction, interpretability, and knowledge transfer, which makes them attractive for tasks that require traceable reasoning. However, symbolic AI struggles with ambiguity, noisy data, perception, and large-scale real-world adaptation. The symbolic view argues that intelligence requires explicit structure and formal reasoning, especially for language, mathematics, and causal thinking, which demand more than statistical pattern matching.

- Hybrid Neural-Symbolic Approaches. Hybrid approaches combine neural learning with symbolic reasoning to capture the strengths of both paradigms. Neural components manage perception, pattern extraction, and generalization from data, while symbolic components manage logic, abstraction, memory, and explicit reasoning. This approach aims to create AGI systems that are adaptive, explainable, and capable of structured thought across domains. Hybrid research has grown because neural systems alone struggle with transparency and reasoning, while symbolic systems alone struggle with flexibility and scale. The hybrid view treats AGI as an integrated system where learning and reasoning must operate together rather than separately.

- Embodied and 4E Cognition. Embodied and 4E cognition approaches AGI through real-world interaction, sensorimotor grounding, and environmental coupling. This approach argues that intelligence does not exist only in abstract computation, because cognition emerges through embodied action, perception, and social interaction. Embodied AGI research emphasizes robots, multimodal learning, feedback loops, and world models built through direct experience. This theory challenges purely disembodied systems by arguing that meaning, common sense, and adaptive behavior depend on lived interaction with the world. The embodied view treats intelligence as extended, embodied, enacted, and embedded rather than isolated inside symbolic or statistical computation.

- Universalist Approaches. Universalist approaches define AGI through general prediction and decision-making principles that apply across any environment. This research tradition often centers on Solomonoff Induction, algorithmic probability, and idealized models. The goal is to identify a mathematically universal framework for learning, prediction, and adaptation from incomplete data. This approach is powerful at the theoretical level because it defines intelligence in highly general terms. However, these models remain computationally intractable in practice, which limits direct implementation. The universalist view treats AGI as a problem of optimal inference and decision-making under uncertainty across unrestricted environments.

- Cognitive Architectures. Cognitive architectures approach AGI through integrated frameworks that combine memory, reasoning, learning, perception, planning, and self-regulation inside one system. This approach treats intelligence as an organized architecture rather than a single algorithm or model. Cognitive architectures draw from psychology, neuroscience, and computer science to reproduce the functional structure of human cognition. They aim to support incremental learning, internal models, real-time adaptation, and goal-directed behavior across many tasks. Their strength lies in system integration and functional breadth. Their challenge lies in scalability, coordination across modules, and building architectures that remain efficient, flexible, and coherent at human-level complexity.
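The division of labor described under hybrid approaches, with connectionist components for perception and symbolic components for explicit reasoning, can be sketched in a few lines. The weights, concept names, and rules below are invented for illustration. A real system would learn the perception layer from data and use a far richer knowledge base, but the shape is the same: neural scores become discrete symbols, and rules reason over those symbols with a traceable explanation.

```python
import math
from typing import Dict, List, Set, Tuple

# Hypothetical "neural" perception layer: these weights are made up and stand in
# for a trained network that maps raw features to concept scores.
CONCEPT_WEIGHTS: Dict[str, Tuple[List[float], float]] = {
    "has_wings": ([2.0, 0.0, 0.0], -1.0),
    "has_feathers": ([0.0, 2.5, 0.0], -1.0),
    "is_heavy": ([0.0, 0.0, 3.0], -1.5),
}

# Hand-written symbolic knowledge: (premises, conclusion) rules.
RULES: List[Tuple[Set[str], str]] = [
    ({"has_wings", "has_feathers"}, "is_bird"),
    ({"is_bird", "is_heavy"}, "cannot_fly"),
]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def perceive(features: List[float], threshold: float = 0.5) -> Set[str]:
    """Neural step: score each concept and keep those above the threshold as symbols."""
    facts: Set[str] = set()
    for concept, (weights, bias) in CONCEPT_WEIGHTS.items():
        score = sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)
        if score > threshold:
            facts.add(concept)
    return facts


def forward_chain(facts: Set[str]) -> Tuple[Set[str], List[str]]:
    """Symbolic step: apply rules until nothing new is derived; keep a readable trace."""
    known = set(facts)
    trace: List[str] = []
    changed = True
    while changed:
        changed = False
        for premises, conclusion in RULES:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                trace.append(f"{' & '.join(sorted(premises))} -> {conclusion}")
                changed = True
    return known, trace
```

Calling `forward_chain(perceive([1.0, 1.0, 1.0]))` derives `is_bird` and then `cannot_fly`, and the returned trace lists each rule that fired, which is the kind of explainability purely neural models lack.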

What Are the Technologies Driving Artificial General Intelligence?

Artificial General Intelligence is driven by a group of technologies that expand learning, reasoning, perception, adaptation, and system coordination across domains. These technologies do not contribute in the same way.

Some technologies drive pattern learning. Some technologies drive planning and decision-making. Some technologies drive perception of the physical world. Some technologies drive integration, scale, and system design.

Together, they form the technical foundation behind current AGI research and define the main paths researchers use to move from narrow systems toward general intelligence.

The 6 technologies driving Artificial General Intelligence are listed below.

- Deep learning and neural networks.

- Large language models and transformers.

- Multimodal models.

- Reinforcement learning and RLHF.

- Computer vision.

- Computational infrastructure and cognitive architectures.

1. Deep Learning and Neural Networks

Deep learning and neural networks drive Artificial General Intelligence by providing the foundational mechanism for learning from data at scale. Neural networks use layered architectures to extract increasingly abstract representations from raw inputs, which allows systems to process complex data such as language, images, and signals without explicit programming. This ability to learn representations automatically is critical for AGI, where systems must operate across domains without handcrafted rules.

Deep learning is especially important because it scales effectively with data and computational power. Larger models trained on more diverse datasets tend to develop more robust internal representations, which supports transfer learning and cross-domain performance. This scalability has made deep learning the backbone of modern AI systems, which include language models, vision systems, and multimodal architectures.

How do deep learning and neural networks drive AGI? Deep learning drives AGI by enabling systems to learn generalized representations that can be reused across tasks. Neural networks identify patterns, relationships, and structures in data, which supports flexible problem solving and adaptation.

Why are neural networks essential for general intelligence? Neural networks reduce reliance on task-specific programming by learning directly from data. This allows one system to perform multiple tasks, which is a core requirement for AGI.
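The basic unit that deep networks stack into layers is an artificial neuron whose weights are learned from examples rather than written by hand. The sketch below uses the classic perceptron learning rule on a single neuron; the function names and the logical-AND training data are illustrative choices.

```python
from typing import List, Sequence, Tuple


def predict(weights: Sequence[float], bias: float, x: Sequence[float]) -> int:
    """Fire (1) if the weighted sum of inputs exceeds zero, otherwise 0."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


def train_perceptron(
    samples: Sequence[Tuple[Sequence[float], int]],
    lr: float = 1.0,
    max_epochs: int = 100,
) -> Tuple[List[float], float]:
    """Learn weights and a bias for one threshold neuron from labeled examples."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(max_epochs):
        errors = 0
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            if error != 0:
                errors += 1
                # Nudge each weight in the direction that reduces the error.
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if errors == 0:  # every example is classified correctly, so stop
            break
    return weights, bias


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
```

After `w, b = train_perceptron(AND_DATA)`, the neuron reproduces the AND truth table even though no rule for AND was ever programmed. A single neuron can only learn linearly separable functions (it cannot learn XOR), which is why deep networks stack many units with nonlinear activations and train them with backpropagation.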

What are the limitations of deep learning for AGI? Deep learning struggles with reasoning, causal understanding, and generalization outside training data. It often requires large datasets and high computational resources, which limits efficiency compared to human learning. Despite these limitations, deep learning remains central to AGI because it provides the most effective current approach for large-scale learning and pattern recognition.

2. Large Language Models and Transformers

Large language models and transformers drive Artificial General Intelligence by enabling systems to process language, encode knowledge, and perform a wide range of tasks within a single architecture. Transformers introduced a scalable way to model relationships between tokens, which allows systems to understand context, generate coherent responses, and perform reasoning-like tasks.
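The mechanism that lets transformers model relationships between tokens is attention. The sketch below implements single-head scaled dot-product attention, softmax(QKᵀ/√d_k)V, as defined in the original transformer paper, in plain Python. It omits the learned projection matrices, multiple heads, and masking that real models use, and the inputs in the tests are arbitrary toy vectors.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(scores: List[float]) -> List[float]:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Single-head scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.

    q has one row per query token; k and v have one row per key/value token.
    """
    d_k = len(k[0])
    output: Matrix = []
    for q_row in q:
        # How strongly this query token relates to every key token.
        scores = [
            sum(a * b for a, b in zip(q_row, k_row)) / math.sqrt(d_k) for k_row in k
        ]
        weights = softmax(scores)
        # The output is a weighted average of the value vectors.
        output.append(
            [sum(w * v_row[j] for w, v_row in zip(weights, v)) for j in range(len(v[0]))]
        )
    return output
```

Every query is compared with every key, so for a sequence of n tokens the score computation grows as n². This is the quadratic cost behind the context-window limits of current transformer models.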

LLMs are important for AGI because language acts as a universal interface for knowledge, instructions, and reasoning. A system that understands and generates language can interact with humans, follow complex instructions, and combine knowledge across domains. This flexibility allows LLMs to perform tasks such as coding, analysis, planning, and explanation without retraining for each task.

How do LLMs and transformers contribute to AGI? LLMs contribute by compressing vast amounts of knowledge into a single model that can perform multiple tasks through prompting. This enables flexible task execution across domains.

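What makes transformers critical for AGI development? Transformers support parallel computation over all tokens in a sequence, scalable training, and increasingly long context windows. These features allow models to process complex inputs and generate structured outputs. Because standard self-attention compares every token with every other token, its cost grows quadratically with sequence length, which is one reason context length remains a practical limit.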

What are the limitations of LLMs for AGI? LLMs rely on statistical patterns rather than true understanding. They struggle with causal reasoning, real-world grounding, and consistent generalization beyond training data. LLMs drive AGI progress because they provide a unified framework for knowledge and reasoning, even though they do not yet achieve true general intelligence.

3. Multimodal Models

Multimodal models drive Artificial General Intelligence by integrating multiple data types into a unified representation. Unlike single-modality systems, multimodal models process text, images, audio, and other inputs together, which enables richer understanding and more accurate reasoning.

This integration is critical for AGI because real-world intelligence depends on combining information from multiple sources. Humans do not rely on language alone. They interpret visual cues, sounds, and context simultaneously. Multimodal models attempt to replicate this capability by learning relationships across different modalities.

How do multimodal models drive AGI? Multimodal models combine different input types into shared representations, which enables systems to reason across domains and improve contextual understanding.

What are the key components of multimodal systems? Multimodal systems include input encoders for each modality, fusion layers to combine information, and output modules that generate responses based on integrated data.
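The three components named above can be wired together in a minimal late-fusion sketch. The encoders here are hypothetical toy feature functions standing in for trained networks such as a text transformer and an image encoder, and concatenation is only the simplest fusion strategy; many current multimodal models fuse with cross-attention or by mapping every modality into a shared token sequence instead.

```python
from typing import Dict, List

Vector = List[float]


def encode_text(text: str) -> Vector:
    """Hypothetical text encoder: word count and how often 'cat' appears."""
    words = text.lower().split()
    return [float(len(words)), float(words.count("cat"))]


def encode_image(pixels: List[List[float]]) -> Vector:
    """Hypothetical image encoder: mean and maximum pixel intensity."""
    flat = [p for row in pixels for p in row]
    return [sum(flat) / len(flat), max(flat)]


def fuse(embeddings: Dict[str, Vector], order: List[str]) -> Vector:
    """Fusion layer: concatenate modality embeddings in a fixed order."""
    fused: Vector = []
    for name in order:
        fused.extend(embeddings[name])
    return fused


def output_head(fused: Vector, weights: Vector, bias: float = 0.0) -> float:
    """Output module: one linear score computed from the fused representation."""
    if len(weights) != len(fused):
        raise ValueError("weights must match the fused vector length")
    return sum(w * x for w, x in zip(weights, fused)) + bias


def describe(text: str, pixels: List[List[float]], weights: Vector) -> float:
    """Run the full encode -> fuse -> output pipeline on one text-image pair."""
    embeddings = {"text": encode_text(text), "image": encode_image(pixels)}
    return output_head(fuse(embeddings, ["text", "image"]), weights)
```

Because the output head sees both modalities at once, its score can depend on evidence from the text and the image together, which is the property that lets multimodal systems reduce ambiguity.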

Why is multimodality important for AGI? Multimodality reduces ambiguity and improves reasoning by providing a richer context. It enables systems to understand real-world situations more effectively. Multimodal models represent a major step toward AGI because they move AI from isolated capabilities toward integrated perception and reasoning.

4. Reinforcement Learning and RLHF

Reinforcement learning (RL) drives Artificial General Intelligence by enabling systems to learn through interaction, feedback, and decision-making. RL trains agents to choose actions that maximize cumulative reward, which supports planning, strategy, and long-term goal achievement.

RL is important for AGI because general intelligence requires the ability to act in environments, not just process information. RL introduces agency by allowing systems to explore, learn from consequences, and improve behavior over time.

What is the role of reinforcement learning in AGI? RL enables systems to learn strategies and optimize decisions through trial and error. This supports adaptive behavior and problem-solving.
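Tabular Q-learning is the textbook example of learning by trial and error. The corridor environment, reward, and hyperparameters below are assumptions chosen so the example runs in milliseconds: the agent is never told that stepping right is correct and discovers it only from a delayed reward at the goal.

```python
import random
from typing import Dict, List, Tuple

N_STATES = 5       # corridor cells 0..4; cell 4 is the goal
ACTIONS = (-1, 1)  # step left or step right

QTable = Dict[Tuple[int, int], float]


def env_step(state: int, action: int) -> Tuple[int, float, bool]:
    """Toy environment: reward 1.0 only when the agent reaches the goal cell."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def q_learning(
    episodes: int = 1000,
    alpha: float = 0.5,
    gamma: float = 0.9,
    epsilon: float = 0.3,
    seed: int = 0,
) -> QTable:
    rng = random.Random(seed)
    q: QTable = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)  # explore
            else:
                best = max(q[(state, a)] for a in ACTIONS)
                action = rng.choice([a for a in ACTIONS if q[(state, a)] == best])  # exploit
            next_state, reward, done = env_step(state, action)
            # Temporal-difference target: reward now plus discounted best future value.
            future = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * future - q[(state, action)])
            state = next_state
            if done:
                break
    return q


def greedy_policy(q: QTable) -> List[int]:
    """Best learned action in each non-goal cell."""
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]
```

`greedy_policy(q_learning())` yields `[1, 1, 1, 1]`, meaning "step right" in every non-goal cell. The discount factor makes the learned value of stepping right from cell 0 approach 0.9³ ≈ 0.729, so nearer rewards are valued more than distant ones.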

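How does RLHF contribute to AGI development? Reinforcement learning from human feedback (RLHF) incorporates human preference judgments into training, typically by fitting a reward model to human rankings of model outputs and then optimizing the model against that reward. This aligns model behavior with human expectations and improves usability.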
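In the widely used RLHF pipeline popularized by InstructGPT-style models, the reward model is trained on pairs of responses that humans have ranked. The minimal sketch below shows the standard pairwise (Bradley-Terry) loss for that step, assuming scalar reward scores are already available for the chosen and rejected responses; the later policy-optimization stage (often proximal policy optimization, PPO) is not shown.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise (Bradley-Terry) loss for training an RLHF reward model.

    Computes -log(sigmoid(reward_chosen - reward_rejected)): the loss is small
    when the reward model scores the human-preferred response higher and large
    when it ranks the pair the wrong way round.
    """
    margin = reward_chosen - reward_rejected
    # Two algebraically equal forms, chosen by sign so math.exp never overflows.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))
```

When the two responses receive equal rewards, the loss is log 2; minimizing it over many ranked pairs pushes the reward model to score preferred responses higher.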

What are the limitations of RL for AGI? RL is computationally expensive, data-intensive, and often limited to controlled environments. It also depends on well-defined reward functions. RL is essential for AGI because it introduces learning through action and feedback, even though it must be combined with other methods.

5. Computer Vision

Computer vision drives Artificial General Intelligence by enabling systems to perceive and interpret the physical world through visual data. Vision provides spatial awareness, object recognition, and environmental understanding, which are essential for real-world intelligence.

Visual perception is critical because a large portion of human cognition depends on interpreting visual information. AGI systems must understand environments, objects, and interactions to operate effectively outside digital contexts.

How does computer vision contribute to AGI? Computer vision enables systems to process images and video, which supports perception, recognition, and spatial reasoning.
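At the level of a single layer, much of this perception is built from convolutions (vision transformers are a common alternative). The minimal sketch below slides a small kernel over a grayscale image so that the output is large where intensity changes sharply. The kernel is hand-set for illustration, whereas a convolutional network learns many such filters from data. Like most deep-learning libraries, the code computes cross-correlation (no kernel flip), which is what those libraries call convolution.

```python
from typing import List

Image = List[List[float]]


def conv2d(image: Image, kernel: Image) -> Image:
    """'Valid' 2D cross-correlation (no padding, stride 1), as used in CNN layers."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    result: Image = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum of the image patch currently under the kernel.
            row.append(
                sum(kernel[a][b] * image[i + a][j + b] for a in range(kh) for b in range(kw))
            )
        result.append(row)
    return result


# A hand-written vertical-edge detector; CNNs learn kernels like this from data.
VERTICAL_EDGE = [
    [-1.0, 0.0, 1.0],
    [-1.0, 0.0, 1.0],
    [-1.0, 0.0, 1.0],
]
```

On an image whose left half is dark and right half bright, the filter responds only around the boundary: `conv2d([[0.0, 0.0, 0.0, 1.0, 1.0, 1.0]] * 3, VERTICAL_EDGE)` returns `[[0.0, 3.0, 3.0, 0.0]]`.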

Why is visual perception critical for AGI? Visual data provides essential context for understanding the physical world. Without vision, AGI cannot fully interact with real environments.

What are the limitations of computer vision systems? Current systems struggle with generalization, in-depth understanding, and contextual reasoning in complex environments. Computer vision is essential because AGI must extend beyond text and operate in the real world.

6. Computational Infrastructure and Cognitive Architectures

Computational infrastructure and cognitive architectures drive Artificial General Intelligence by enabling scale, integration, and system coordination. Infrastructure provides the computational resources needed to train large models, while cognitive architectures define how different components interact.

AGI requires more than individual models. It requires systems that integrate memory, reasoning, perception, and learning into a unified structure. Cognitive architectures provide this structure by organizing how information flows between components.

Why is computational infrastructure essential for AGI? AGI requires massive computational resources, specialized hardware, and scalable systems. Infrastructure determines what is technically possible.
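The scale involved can be estimated with a rule of thumb from scaling-law research: training a dense transformer costs roughly 6 × N × D floating-point operations, where N is the parameter count and D the number of training tokens (about 2 FLOPs per parameter per token for the forward pass and about 4 for the backward pass). The sketch below applies it; the model size, token count, accelerator throughput, and utilization in the example are hypothetical round numbers, not figures for any real system.

```python
def training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate training compute for a dense transformer: C ~ 6 * N * D."""
    return 6.0 * n_params * n_tokens


def gpu_days(total_flops: float, flops_per_gpu: float, utilization: float) -> float:
    """Convert a FLOP budget into accelerator-days at a given sustained utilization."""
    seconds = total_flops / (flops_per_gpu * utilization)
    return seconds / 86_400  # seconds per day


# Hypothetical example: 7e9 parameters trained on 2e12 tokens.
budget = training_flops(7e9, 2e12)  # about 8.4e22 FLOPs
days = gpu_days(budget, flops_per_gpu=1e15, utilization=0.4)
```

Under these assumptions the run needs about 8.4 × 10²² FLOPs, or roughly 2,400 accelerator-days, which is why large training runs are spread across thousands of chips and why energy consumption appears among the infrastructure challenges.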

What role do cognitive architectures play in AGI? Cognitive architectures define how systems combine perception, memory, reasoning, and learning into a coherent whole.
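Production-system architectures such as ACT-R and Soar organize processing around a recurring cycle: match rules against working memory, select one, and act. The toy loop below illustrates only that control flow. The class names, the first-match conflict-resolution policy, and the small arithmetic task are hypothetical simplifications, not the actual mechanisms of ACT-R or Soar, which add learning, activation, subgoaling, and much more.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

Memory = Dict[str, Any]


@dataclass
class Production:
    """An if-then rule: when `condition` holds on working memory, run `action`."""
    name: str
    condition: Callable[[Memory], bool]
    action: Callable[[Memory], None]


@dataclass
class MiniArchitecture:
    """Toy match-select-act cycle over a shared working memory (hypothetical design)."""
    working_memory: Memory = field(default_factory=dict)
    productions: List[Production] = field(default_factory=list)
    trace: List[str] = field(default_factory=list)

    def run(self, max_cycles: int = 10) -> None:
        for _ in range(max_cycles):
            # Match: every production whose condition holds right now.
            matching = [p for p in self.productions if p.condition(self.working_memory)]
            if not matching:
                break  # no rule applies, so the system stops
            # Select: simplest conflict resolution, the first matching rule wins.
            chosen = matching[0]
            # Act: the chosen rule updates working memory for the next cycle.
            chosen.action(self.working_memory)
            self.trace.append(chosen.name)


def demo() -> MiniArchitecture:
    """Perceive two numbers, add them, and report the result via three rules."""
    arch = MiniArchitecture()
    arch.productions = [
        Production("perceive", lambda wm: "input" not in wm,
                   lambda wm: wm.update(input=(2, 3))),
        Production("compute", lambda wm: "input" in wm and "total" not in wm,
                   lambda wm: wm.update(total=sum(wm["input"]))),
        Production("respond", lambda wm: "total" in wm and "response" not in wm,
                   lambda wm: wm.update(response=f"The answer is {wm['total']}")),
    ]
    arch.run()
    return arch
```

Running `demo()` fires the rules in the order perceive, compute, respond, leaving `"The answer is 5"` in working memory. Perception, reasoning, and output are separate modules that coordinate only through the shared memory, which is the kind of information flow a cognitive architecture defines.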

What challenges exist in AGI infrastructure and architecture? Challenges include energy consumption, scalability, integration complexity, and lack of standardized frameworks.

What Are the Key Characteristics of AGI?

Artificial General Intelligence (AGI) is defined by a set of core characteristics that enable human-level intelligence across domains, tasks, and environments. These characteristics distinguish AGI from narrow AI by enabling generalization, reasoning, adaptation, and continuous learning. Each characteristic reflects a capability that allows AGI systems to operate flexibly, transfer knowledge, and solve novel problems without task-specific programming.

The 5 key characteristics of AGI are listed below.

- Generalization and adaptability.

- Human-level cognitive abilities.

- Autonomous learning and self-improvement.

- Contextual understanding.

- Multimodal perception.

1. Generalization and Adaptability

Generalization and adaptability define AGI because they enable systems to transfer knowledge, adjust behavior, and solve new problems across domains. These capabilities remove dependence on task-specific programming and enable flexible intelligence.

Why are generalization and adaptability the key characteristics of AGI? Generalization and adaptability are central because AGI transfers knowledge across domains, learns from experience, and handles unfamiliar tasks without retraining. This capability enables domain independence and flexible problem-solving across diverse environments.

How do AGI systems eliminate the need for task-specific programming? AGI eliminates task-specific programming through generalized learning systems that apply knowledge across tasks. This approach contrasts with narrow AI, which requires separate models and training for each task.

Why is transferring knowledge across domains crucial? Knowledge transfer enables AGI to reuse learned patterns in new contexts, which accelerates problem-solving and reduces training requirements. This capability allows AGI to operate across domains without structural changes.

What makes learning from experience important for AGI? Learning from experience enables continuous improvement without manual retraining. AGI updates internal models through interaction with data, which strengthens performance over time.

2. Human-Level Cognitive Abilities

Human-level cognitive abilities define AGI because they replicate reasoning, problem-solving, and understanding across tasks. These abilities create intelligence that matches or exceeds human performance.

How is AGI defined by human-level cognitive abilities? AGI is defined by its ability to perform reasoning, learning, perception, and language tasks at human-level performance across domains. This definition establishes AGI as a general intelligence system.

Why does current AI lack cognitive flexibility? Current AI lacks flexibility because it relies on pattern recognition rather than reasoning and abstraction. This limitation restricts performance to trained tasks.

What cognitive traits are required for AGI? AGI requires reasoning, planning, learning, and communication abilities. These traits must integrate into a unified system that supports goal completion across tasks.

How does human-level cognition enable advanced problem solving? Human-level cognition enables abstract reasoning and cross-domain thinking, which supports solving complex problems beyond predefined rules.

3. Autonomous Learning and Self-Improvement

Autonomous learning and self-improvement define AGI because they enable systems to evolve continuously, refine strategies, and expand knowledge without constant external intervention or retraining. This capability moves AI beyond static models into dynamic systems that adapt over time, similar to how humans learn from experience.

Why are autonomous learning and self-improvement key characteristics of AGI? These capabilities enable AGI to learn continuously, refine its methods, and adapt to new environments. This process creates independent intelligence that evolves over time.

How does AGI mirror human-like learning? AGI mirrors human learning by acquiring knowledge through experience and applying it across domains. This process enables flexible reasoning and creative problem-solving.

Why is the ability to learn any intellectual task significant? The ability to learn any task enables AGI to operate without predefined boundaries. This capability supports general intelligence across industries and domains.

How does independent operation contribute to AGI? Independent operation enables AGI to function without constant human input, which supports autonomous decision-making and long-term learning.

4. Contextual Understanding

Contextual understanding defines AGI because it enables systems to interpret meaning, intent, and relationships across different situations rather than processing information in isolation. This capability allows AGI to move beyond surface-level pattern recognition and develop a deeper understanding of language, environments, and human behavior.

Why is contextual understanding a key characteristic of AGI? Contextual understanding enables AGI to interpret nuances, adapt responses, and apply knowledge appropriately. This capability supports real-world reasoning.

How does contextual understanding distinguish AGI from narrow AI? AGI uses context to generalize across domains, while narrow AI operates within fixed boundaries. This distinction defines general intelligence.

What role does context play in adaptability? Context enables AGI to adjust behavior based on environment and task requirements. This capability supports flexible problem-solving.

Why is contextual understanding essential for advanced tasks? Advanced tasks require interpretation of complex situations, which depends on understanding relationships, intent, and environmental factors.

5. Multimodal Perception

Multimodal perception defines AGI because it enables systems to integrate multiple types of input into a unified and coherent understanding of the environment. This capability mirrors human perception, where information from different senses is continuously fused to interpret context, recognize patterns, and guide decisions.

Why is multimodal perception a key characteristic of AGI? Multimodal perception enables AGI to process text, images, audio, and other inputs together, which creates a deeper understanding and improves decision-making.

How does multimodal perception improve problem-solving? Multiple input sources provide richer context, which reduces ambiguity and improves reasoning accuracy across tasks.

Why is generalization important in multimodal systems? Multimodal systems transfer knowledge across input types, which supports adaptability and learning in diverse environments.

How do multimodal systems overcome the limitations of single-modal AI? Multimodal systems combine the strengths of different input types, which improves robustness and enables operation in real-world conditions.

What Are the Potential Use Cases of Artificial General Intelligence?

Artificial General Intelligence (AGI) introduces a shift from narrow, task-specific systems to flexible, domain-independent intelligence capable of reasoning, learning, and acting across complex environments. The potential use cases of AGI extend across scientific research, healthcare, enterprise systems, education, manufacturing, and global problem-solving.

These use cases are not isolated applications but interconnected domains where AGI acts as a general-purpose cognitive system that enhances human decision-making, accelerates discovery, and optimizes systems at scale.

The 6 key use cases of Artificial General Intelligence are listed below.

- Scientific discovery and research.

- Advanced healthcare systems.

- Autonomous manufacturing and supply chains.

- Enterprise and financial management.

- Personalized education and assistance.

- Complex problem solving and global challenges.

1. Scientific Discovery and Research

AGI transforms scientific discovery and research by acting as a general-purpose researcher capable of analyzing vast datasets, generating hypotheses, and conducting experiments at speeds far beyond human capability. Unlike traditional AI, which focuses on narrow tasks, AGI integrates knowledge across disciplines, which enables breakthroughs that require interdisciplinary thinking. This capability is critical in fields such as physics, medicine, climate science, and mathematics, where progress often depends on connecting insights across domains.

AGI accelerates experimentation by simulating millions of scenarios, which could reduce research timelines from years to days. It processes and analyzes data at a scale that allows it to identify patterns invisible to human researchers. This enables faster drug discovery, new material design, and more accurate climate modeling. AGI contributes to theoretical advancements by proposing new hypotheses and testing them through simulation and reasoning.

How does AGI accelerate experimentation and analysis? AGI accelerates experimentation by running large-scale simulations and analyzing results instantly. This reduces dependency on physical trials and enables rapid iteration in research workflows.

How does AGI enable the discovery of new knowledge? AGI identifies hidden patterns across datasets and integrates knowledge from multiple disciplines. This allows it to generate insights and theories that humans may not recognize.

Why is AGI important for innovation in science? AGI combines computational speed with human-like reasoning and curiosity, enabling continuous knowledge generation and accelerating scientific progress across all domains. AGI acts as a force multiplier for human intelligence, significantly increasing the pace and scope of discovery.

2. Advanced Healthcare Systems

AGI revolutionizes healthcare by enabling systems that understand, diagnose, and treat medical conditions with a level of precision and adaptability comparable to expert clinicians. Unlike narrow AI, which performs isolated tasks, AGI integrates patient data, medical research, and real-time inputs to provide holistic and personalized healthcare solutions.

AGI improves diagnostics by analyzing medical images, lab results, and patient history simultaneously. It detects patterns that may be missed by human practitioners, leading to earlier and more accurate diagnoses. AGI also transforms treatment by enabling personalized medicine, where therapies are tailored to an individual’s genetic profile and lifestyle.

How does AGI enable personalized medicine? AGI analyzes genetic, behavioral, and clinical data to create individualized treatment plans. This reduces trial-and-error approaches and improves patient outcomes.

How does AGI improve diagnostics and early detection? AGI processes large volumes of medical data to identify subtle patterns, enabling earlier detection of diseases and reducing diagnostic errors.

How does AGI accelerate drug discovery? AGI simulates molecular interactions, predicts outcomes, and identifies promising compounds, significantly reducing development time and cost. AGI enhances healthcare by combining reasoning, learning, and real-time decision-making, leading to more efficient and accessible medical systems.

3. Autonomous Manufacturing and Supply Chains

AGI enables fully autonomous manufacturing and supply chain systems by combining perception, decision-making, and execution into a unified framework. These systems move beyond automation to intelligent coordination, where machines dynamically adapt to changing conditions without human intervention.

AGI optimizes supply chains by analyzing real-time data from logistics, market demand, and environmental factors. It predicts disruptions, adjusts production schedules, and reallocates resources automatically. In manufacturing, AGI-powered systems enable adaptive production lines that respond to demand fluctuations and operational constraints.

How does AGI transform supply chain optimization? AGI integrates real-time data to predict demand, manage inventory, and optimize logistics, reducing costs and improving efficiency.

What makes AGI different from traditional automation? AGI makes context-aware decisions and adapts dynamically, while traditional automation follows predefined rules and requires human oversight.

How does AGI improve manufacturing efficiency? AGI enables predictive maintenance, dynamic scheduling, and quality control, which improves productivity and reduces downtime. AGI transforms industrial systems into adaptive, self-optimizing networks that operate with minimal human intervention.

4. Enterprise and Financial Management

AGI reshapes enterprise operations and financial systems by enabling intelligent decision-making across complex business environments. Unlike traditional analytics tools, AGI processes multidimensional data, evaluates scenarios, and provides strategic recommendations in real time.

AGI enhances financial management by improving risk assessment, forecasting, and capital allocation. It analyzes global market trends, identifies emerging risks, and recommends actions based on predictive modeling. In enterprises, AGI supports innovation by accelerating product development, optimizing operations, and improving customer experiences.

How does AGI improve strategic decision-making in enterprises? AGI evaluates multiple scenarios simultaneously, considering financial, operational, and external factors to provide comprehensive insights.

How does AGI impact financial systems? AGI enhances trading, risk management, and forecasting by processing large datasets and identifying patterns in real time.

Why is AGI important for enterprise innovation? AGI reduces decision latency, improves accuracy, and enables organizations to respond quickly to market changes. AGI transforms enterprises into data-driven, adaptive systems capable of continuous optimization and strategic agility.

5. Personalized Education and Assistance

AGI transforms education by enabling fully personalized learning experiences that adapt to each individual’s needs, preferences, and progress. Unlike traditional systems, AGI provides real-time feedback, customized learning paths, and continuous assessment.

AGI-powered tutors analyze student behavior, identify strengths and weaknesses, and adjust content dynamically. This creates an individualized learning experience that improves engagement and outcomes. AGI also expands access to education by providing high-quality instruction to underserved populations.

How does AGI personalize learning experiences? AGI adapts content, pacing, and teaching methods based on individual performance and preferences, creating customized learning paths.

How does AGI provide real-time feedback? AGI evaluates student responses instantly, explains mistakes, and suggests improvements, enabling continuous learning.

How does AGI bridge educational gaps? AGI delivers accessible, high-quality education globally, supporting different languages, abilities, and learning styles. AGI enables a shift from standardized education to adaptive, learner-centered systems that scale globally.

6. Complex Problem Solving and Global Challenges

AGI addresses complex global challenges by integrating data, reasoning across domains, and generating solutions for problems that exceed human cognitive limits. These challenges include climate change, economic instability, public health crises, and large-scale infrastructure optimization.

AGI analyzes interconnected systems, identifies dependencies, and proposes strategies that account for multiple variables and uncertainties. It supports decision-making in environments where traditional models fail due to complexity.

How does AGI solve complex, multi-domain problems? AGI integrates knowledge from different fields, analyzes large datasets, and generates solutions that consider multiple constraints and variables.

How does AGI contribute to climate and sustainability solutions? AGI models environmental systems, predicts outcomes, and optimizes resource allocation to reduce environmental impact.

Why is AGI critical for global challenges? AGI processes information at a scale and depth beyond human capability, enabling solutions to problems that require coordinated, cross-domain understanding.

What Are the Advantages of AGI?

Artificial General Intelligence (AGI) introduces a wide range of advantages that extend beyond isolated performance improvements and reshape how systems learn, decide, and operate across domains. Unlike narrow AI, which is limited to predefined tasks, AGI delivers broad, transferable intelligence that enhances problem-solving, accelerates innovation, and improves decision-making at both individual and systemic levels. These advantages emerge from AGI’s ability to integrate knowledge, adapt to new situations, and operate autonomously in complex environments.

AGI significantly enhances problem-solving and innovation by enabling systems to analyze vast datasets, identify hidden patterns, and generate solutions that combine insights from multiple disciplines. This capability allows AGI to address problems that are too complex for traditional methods, which include scientific discovery, economic modeling, and large-scale infrastructure optimization. AGI accelerates the pace of innovation across industries by reducing the time required for experimentation and analysis.

How would AGI improve problem-solving and innovation? AGI would integrate knowledge across domains and generate solutions that account for multiple variables and constraints, leading to faster and more effective innovation.

AGI could transform education by enabling highly personalized and efficient learning. It would adapt content, pacing, and teaching methods to individual learners while providing real-time feedback and continuous assessment, creating more engaging learning environments and expanding access to high-quality education globally.

How would AGI enable personalized education? AGI would analyze individual performance and preferences to deliver customized learning paths, improving engagement and outcomes.

Another major advantage of AGI would be its ability to augment human capabilities. Rather than replacing humans, AGI could act as a cognitive partner that enhances decision-making, creativity, and productivity, supporting professionals by processing complex information, generating insights, and assisting with strategic planning.

How would AGI augment human intelligence? AGI would handle complex analysis and provide insights, allowing humans to focus on creativity, judgment, and high-level strategy.

AGI could also deliver significant organizational benefits through autonomous decision-making and operational efficiency. It would monitor processes, predict outcomes, and adjust strategies in real time, reducing inefficiencies and improving performance in industries such as manufacturing, logistics, and finance.

Why is autonomous decision-making important in AGI? Autonomous decision-making would allow systems to respond instantly to changing conditions, improving efficiency and reducing reliance on human intervention.

In sectors such as finance and cybersecurity, AGI could improve risk management, fraud detection, and predictive analytics by continuously analyzing data streams to identify anomalies, anticipate risks, and recommend proactive actions. In healthcare, AGI could enhance diagnostics, accelerate pharmaceutical research, and enable personalized treatment plans by integrating patient data with medical knowledge.

How would AGI help address global challenges? AGI would analyze interconnected systems and generate solutions that improve resource allocation, environmental sustainability, and crisis management.

Overall, the advantages of AGI would come from its ability to unify learning, reasoning, and action in a single system. This combination would enable continuous improvement, cross-domain intelligence, and large-scale impact, potentially making AGI a transformative force across industries and society.

What Are the Major Roadblocks to Building AGI?

Artificial General Intelligence (AGI) remains a long-term objective because its development faces multiple foundational roadblocks that limit progress across learning, reasoning, infrastructure, and real-world integration. These roadblocks are not isolated technical issues but interconnected constraints that affect how systems scale, generalize, and operate beyond narrow domains.

Unlike incremental improvements in current AI systems, AGI requires breakthroughs that address fundamental limitations in algorithms, data efficiency, computational resources, and safety. These constraints collectively explain why progress toward AGI is slower and more uncertain than rapid advancements in narrow AI might suggest.

The four major roadblocks to building AGI are listed below.

- Technical and algorithmic limitations.

- Data and learning constraints.

- Computational and physical resource limits.

- Safety, alignment, and ethical challenges.

1. Technical and Algorithmic Roadblocks

Technical and algorithmic limitations are among the most significant barriers to AGI because current AI architectures are not designed for general intelligence. Most modern systems, including transformer-based models, operate as input-output engines that require prompts and lack continuous reasoning, long-term planning, and autonomous goal formation. These systems perform well at pattern recognition but struggle with reasoning, abstraction, and generalization across domains.

How do current transformer architectures limit AGI development? Transformer architectures depend on static training and lack persistent memory, which prevents continuous learning and adaptation. They also struggle with long-term reasoning and planning, limiting their ability to handle complex, multi-step problems.

Another major challenge is the absence of a true world model. Current systems do not build an internal representation of how the world works, which restricts their ability to reason about cause and effect or simulate future outcomes.

Why is the lack of a world model a major roadblock? Without a world model, AI systems cannot understand the relationships between actions and outcomes, making reasoning and planning unreliable.

The complexity of human cognition also presents a major obstacle. Human intelligence integrates emotion, intuition, creativity, and reasoning in ways that are not yet understood or replicable.

Why does human cognition remain difficult to replicate? Human intelligence involves dynamic, interconnected processes that current algorithms cannot accurately model or reproduce.

Together, these limitations suggest that achieving AGI may require fundamentally new architectures rather than simply scaling existing models.

2. Data and Learning Limitations

Data and learning constraints are another major roadblock because current AI systems rely heavily on large datasets and supervised or self-supervised training. Unlike humans, who can learn from a few examples, AI models require massive amounts of data to generalize effectively, an inefficiency that limits scalability and adaptability.

Why do current AI systems struggle with generalization? AI models learn statistical patterns rather than underlying concepts, which makes it difficult to apply knowledge to new or unseen situations.

Another issue is the lack of continuous and autonomous learning. Most models are “frozen” after training and cannot update their knowledge dynamically without retraining.

Why is continuous learning important for AGI? AGI requires systems that learn from new experiences in real time, as humans do, without needing full retraining.

Data quality and bias also create limitations. Training data often contains inconsistencies, biases, and gaps, which directly affect model performance and reliability.

How does data quality impact AGI development? Poor or biased data leads to unreliable outputs and limits a system’s ability to develop accurate, generalizable knowledge.

These challenges highlight the need for new learning paradigms that are more efficient, more adaptive, and less dependent on large-scale datasets.

3. Computational and Physical Resource Constraints

Computational and physical limitations are a fundamental barrier to AGI because intelligence at scale requires significant processing power, memory, and energy. As models grow larger, each further performance gain demands disproportionately more compute, so the cost of training and inference rises steeply while improvements become incremental.

Why are computational resources a major constraint for AGI? AGI requires processing vast amounts of data and maintaining complex models, which demands significant computational infrastructure and energy.

Memory and data movement also create bottlenecks. As systems scale, moving data between components becomes slower and more expensive, which limits efficiency.

How do physical limits of hardware impact AGI development? Hardware constraints, such as memory bandwidth and energy consumption, reduce the effectiveness of scaling and create diminishing returns. Additionally, improvements in hardware such as GPUs are slowing, which reduces the performance gains available from scaling alone.

Why are scaling and hardware improvements no longer sufficient? The cost of each small performance improvement keeps rising, while hardware advances are becoming incremental rather than transformative.

These constraints suggest that AGI cannot rely solely on scaling and will likely require more efficient architectures and computational approaches.
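To make diminishing returns concrete, here is a minimal Python sketch. It rests on an assumption the text above does not state: that model loss falls as a power law in training compute, L(C) = L0 * (C / C0) ** (-alpha). The function name `compute_multiplier` and the exponent `ALPHA = 0.05` are hypothetical and chosen only for illustration. Real exponents depend on the model family and training setup.

```python
# Illustrative assumption (not from the source): loss L falls as a power law in
# training compute C, L(C) = L0 * (C / C0) ** (-alpha).
ALPHA = 0.05  # hypothetical exponent, chosen only for illustration


def compute_multiplier(loss_ratio: float, alpha: float = ALPHA) -> float:
    """Factor by which compute must grow to shrink loss to `loss_ratio` of its current value."""
    if not 0.0 < loss_ratio < 1.0:
        raise ValueError("loss_ratio must be strictly between 0 and 1")
    if alpha <= 0.0:
        raise ValueError("alpha must be positive")
    # From L_new / L_old = (C_new / C_old) ** (-alpha), solve for C_new / C_old.
    return loss_ratio ** (-1.0 / alpha)


for target in (0.9, 0.75, 0.5):
    factor = compute_multiplier(target)
    print(f"loss to {target:.0%} of current: needs about {factor:,.0f}x the compute")
```

Under this assumed exponent, cutting loss to 90% of its current value takes roughly 8 times the compute, and halving it takes 2^20 (about a million) times as much. This is one way to picture why each small gain from scaling alone becomes more expensive.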

4. Safety, Alignment, and Ethical Challenges

Safety and ethical challenges are critical roadblocks because AGI systems need to be aligned with human values and operate reliably in complex environments. Unlike narrow AI, AGI systems would have broad autonomy, making unintended behavior potentially harmful.

Why is alignment a major challenge for AGI? Ensuring that AGI systems act in accordance with human values is difficult because defining and encoding those values is complex and context-dependent.

Another issue is explainability. Current AI systems often function as black boxes, making it difficult to understand how they reach decisions.

Why is explainability important for AGI? Explainability is necessary for trust, accountability, and safe deployment, especially in high-stakes applications.

There are also concerns about control and governance. AGI systems capable of self-improvement or autonomous decision-making could act unpredictably if not properly managed.

How can AGI be governed safely? Safe governance requires regulatory frameworks, human oversight, and continuous monitoring to ensure responsible use.

Ethical considerations also include bias, fairness, privacy, and societal impact, all of which need to be addressed before AGI is widely deployed.

When will AGI arrive?

Expert predictions for the arrival of Artificial General Intelligence (AGI) vary significantly: some industry leaders forecast AGI within the next 2 to 5 years, while broader surveys of AI researchers project a longer timeline, typically between 2040 and 2061. OpenAI’s Sam Altman, for example, moved from a hedged outlook (“the rate of progress continues”) in November to stating “we are now confident we know how to build AGI” by January. Altman has suggested AGI could arrive within the next 3.5 years or by 2035, referencing “a few thousand days.”

Anthropic’s Dario Amodei stated in January that he is “more confident than I’ve ever been that we’re close to powerful capabilities… in the next 2 to 3 years.” Amodei believes AGI is near, with systems exhibiting early AGI traits potentially emerging as soon as 2026 and AGI-level systems possibly arriving sooner than 2027.

Google DeepMind’s Demis Hassabis adjusted his forecast from “as soon as 10 years” in autumn to “probably three to five years away” by January. Hassabis cautiously reiterates roughly a 50% chance of AGI by the end of the decade (2030) and states that human-like reasoning in AI is possible within a decade, though it remains “far from certain.”

What are the projected capabilities of AI by 2026?

Projected capabilities of AI by 2026 include expert-level knowledge in every field, with the ability to answer math and science questions as well as many professional researchers. AI systems are also expected to surpass human performance on coding tasks, to possess general reasoning skills superior to those of almost all humans, and to complete many day-long computer tasks autonomously.

What are the critical bottlenecks and crucial periods for AGI development?

Analyses of the critical bottlenecks in AGI development suggest that the “moment of truth” will come around 2028–2032. An author writing for 80,000 Hours roughly estimates a 50:50 chance between AI capable of transformative effects arriving by about 2030 and progress slowing. The next five years, starting from March 2025, are considered “unusually crucial” for AGI development.

Are LLMs already AGI?

No. Large Language Models (LLMs) are not AGI, and many experts doubt they are even a viable path to it, with some stating that LLMs have “set back progress to AGI by five to 10 years.” LLMs lack human-like reasoning: they process each “token” equally and fail to plan output in advance or explore multiple “thought” paths simultaneously. LLMs also require ever-growing amounts of training data and power, which critics call “extraordinarily inefficient” compared with the human brain’s 12 to 20 watts. Furthermore, LLMs cannot learn from interactions, because their training data does not grow or evolve in real time, and they “forget” after every chat.

However, some researchers believe LLMs could be an important step toward AGI or a component of it. OpenAI described its o1 model as reaching a “new level of AI capability” closer to human thought, though it “does not constitute AGI.” LLMs excel at “Type 2 Creativity: Semantic Exploration,” which recombines existing information, and the transformer architecture successfully generates computer programs and summarizes articles. Despite these capabilities, LLMs struggle with tasks requiring more than 16 planning steps, and their performance degrades rapidly as the number of steps rises to 20 to 40.
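A simple calculation shows why longer plans are fragile. It relies on an assumption the source does not make: that each step succeeds independently with the same probability p, so an n-step plan succeeds with probability p ** n. The function name `plan_success_probability` and the per-step success rate of 0.95 are hypothetical and used only for illustration. This sketch does not model how any specific LLM actually fails.

```python
# Illustrative model (an assumption, not from the source): every planning step
# succeeds independently with the same probability p_step, so a plan of
# n_steps succeeds only if all steps succeed, with probability p_step ** n_steps.
def plan_success_probability(p_step: float, n_steps: int) -> float:
    if not 0.0 <= p_step <= 1.0:
        raise ValueError("p_step must be between 0 and 1")
    if n_steps < 0:
        raise ValueError("n_steps must be non-negative")
    return p_step ** n_steps


# Hypothetical per-step success rate, chosen only for illustration.
P_STEP = 0.95

for n in (16, 20, 40):
    print(f"{n} steps: {plan_success_probability(P_STEP, n):.0%} chance of success")
```

With p = 0.95, the sketch gives roughly 44% success for 16 steps, 36% for 20, and 13% for 40. Under this simple model, even a high per-step success rate erodes quickly as plans lengthen.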

Is ChatGPT AGI?

No, ChatGPT is not Artificial General Intelligence (AGI) by most definitions, as it lacks true general intelligence, consciousness, and understanding beyond its training data. DeepLearning.AI classified ChatGPT, including GPT-4 and GPT-4V as of August 2023, as Artificial Narrow Intelligence (ANI). ChatGPT operates within the scope of its training data, which at the time ended in September 2021, and cannot naturally evolve into AGI without significant modifications because it cannot interact with the environment through action. OpenAI CEO Sam Altman explicitly stated in January 2025 that OpenAI had not built AGI and would not deploy AGI the following month.

However, some researchers argue that ChatGPT exhibits “sparks of artificial general intelligence” and can be viewed as an early, incomplete AGI system. GPT-4 demonstrates task versatility, performing tasks “significantly beyond previous systems such as GPT-3,” and can reason, plan, and learn from experience. GPT-4 generated TikZ code that draws a unicorn, suggesting an abstract grasp of visual concepts. Current LLMs also handle a broad range of general tasks, including image and other multimodal tasks; on September 26, 2023, OpenAI announced voice conversations and image input for ChatGPT Plus users.