Regular expression (regex) patterns are structured text-matching rules that define how specific sequences of characters are identified, extracted, and validated across datasets, which makes regex patterns essential for artificial intelligence (AI) workflows. In AI systems, regular expressions use symbolic syntax to convert unstructured text into structured formats, which prepares the clean, consistent text that Large Language Models (LLMs) and other language models consume. Regex patterns matter because AI pipelines require consistent input structures for tasks such as regex NLP, regex data cleaning, and regex machine learning.

Regex pattern matching works by applying predefined rules composed of metacharacters, character classes, quantifiers, anchors, groups, and lookarounds to scan and manipulate text. These components control how patterns match position, frequency, and structure, which enables precise extraction and validation. This mechanism matters because regex text preprocessing standardizes data before model training, which improves accuracy and reduces noise in AI systems.

Regular expression (regex) patterns perform key functions across AI domains, including NLP text preprocessing, data cleaning, tokenization, feature engineering, data validation, web scraping, and log parsing. Regex NLP workflows remove noise and normalize tokens, while regex data cleaning validates formats such as email addresses or URLs. Regex pattern matching also supports feature extraction and external data ingestion, which helps AI systems operate on reliable and structured inputs.

Regex patterns provide deterministic, rule-based extraction, while AI-based detection provides probabilistic, context-aware interpretation, which defines their complementary roles in AI systems. Regex patterns perform best for fixed and predictable structures, while AI models handle ambiguity and semantic variation. Tools such as Python's re module and JavaScript's RegExp object implement regex throughout preprocessing pipelines, which positions regex as a foundational layer in modern AI workflows.

What Are Regular Expressions (Regex)?

Regular expressions (regex) are sequences of characters that define search patterns used to match, extract, validate, and manipulate text within strings, which makes regex a foundational text-processing language across programming and AI systems. The core idea is pattern definition: each element in a regex is either a literal character that matches itself or a metacharacter that applies a special rule such as repetition, grouping, or position matching. In practice, regex is a deterministic system, meaning the same pattern applied to the same text always produces the same matches, which makes it reliable for transforming unstructured text into structured outputs.

How do regular expressions (regex) function as a pattern-matching language across programming environments? Regular expressions (regex) function as a cross-language pattern-matching system supported in programming environments such as Python, JavaScript, Java, and R, which allows consistent text processing across platforms. Regex engines interpret patterns using algorithms such as finite automata and backtracking, which evaluate whether text satisfies defined rules. Python uses the re module as the primary interface for regex operations, which enables AI practitioners to perform regex NLP, regex data cleaning, and regex text preprocessing directly inside machine learning pipelines.

What is the core concept behind how regex interprets characters and symbols? The core concept of regular expressions (regex) is that each character in a pattern represents either a literal value or a metacharacter with predefined operational meaning. Metacharacters such as . or * control matching logic, while character classes, quantifiers, anchors, and groups define structure, frequency, and position. This distinction matters because regex pattern matching depends on interpreting symbolic instructions rather than plain text comparison, which enables precise extraction and validation.

What is the historical origin and standardization of regular expressions? Regular expressions originated in formal language theory in 1951 through Stephen Cole Kleene’s concept of regular languages and evolved into practical tools through Unix systems and Perl-based implementations. Ken Thompson built regex matching into the QED and ed text editors and the Unix grep tool. POSIX later standardized basic and extended regex syntax, while Perl’s richer syntax, reimplemented in the Perl Compatible Regular Expressions (PCRE) library, became a widely adopted de facto standard. This evolution matters because regex transitioned from theoretical computation models into universal tools used in modern data processing and AI workflows.

Why are regular expressions (regex) important for modern data processing and AI workflows? Regular expressions (regex) are important because they enable efficient, scalable, and precise text processing across the data validation, extraction, and preprocessing tasks that AI systems depend on. Regex patterns reduce complexity by replacing multi-step parsing logic with compact rules, which improves performance and consistency. This capability makes regular expressions a critical preprocessing layer in AI workflows, preparing structured inputs for downstream models and analytics systems.

What Are Metacharacters in Regex?

Metacharacters in Regular Expressions (Regex) are special characters with predefined meanings that control how regex engines interpret patterns, which makes metacharacters the foundation of regex pattern construction.

Metacharacters define operations such as matching position, repetition, or grouping instead of representing literal text, which enables precise and flexible regex pattern matching across AI and data processing workflows.

The 8 core metacharacters in Regular Expressions (Regex) are listed below.

- . (dot) — matches any single character except newline (unless a flag such as Python's re.DOTALL is set), which makes the dot the most general pattern matcher.

- \ (backslash) — escapes metacharacters to match them literally, so \. matches an actual period instead of any character.

- ^ (caret) — anchors the pattern to the start of the string, or to the start of each line in multiline mode, which controls positional matching.

- $ (dollar) — anchors the pattern to the end of the string, or to the end of each line in multiline mode, which supports boundary-based validation.

- | (pipe) — acts as an alternation operator, so cat|dog matches either “cat” or “dog.”

- () (parentheses) — create capturing groups that extract subpatterns and enable backreferences.

- [] (square brackets) — define character classes, so [a-z] matches any lowercase letter.

- {} (curly braces) — specify repetition counts for the preceding element, either exact, as in {3}, or ranged, as in {2,5}.

The full set varies by regex flavor; Python's re documentation lists 14 metacharacters: . ^ $ * + ? { } [ ] \ | ( ). Escaping any of them with a backslash makes the engine match it as a literal character.
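A minimal sketch with Python's re module shows several of the metacharacters above in action. The sample strings are invented for illustration, and each expected result is noted in a comment.

```python
import re

# "." matches any character; "\." matches only a literal period
any_char = re.findall(r"c.t", "cat cot c.t")      # ['cat', 'cot', 'c.t']
literal_dot = re.findall(r"c\.t", "cat cot c.t")  # ['c.t']

# "^" and "$" anchor to the start and end of the string
first_word = re.findall(r"^\w+", "start middle end")  # ['start']
last_word = re.findall(r"\w+$", "start middle end")   # ['end']

# "|" is alternation; "[]" defines a character class
pets = re.findall(r"cat|dog", "cat, dog, cow")  # ['cat', 'dog']
low_letters = re.findall(r"[a-c]", "abcxyz")    # ['a', 'b', 'c']

# "()" captures groups that a replacement can reuse as \1 and \2
swapped = re.sub(r"(\w+)@(\w+)", r"\2 at \1", "ana@home")  # 'home at ana'
```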

What Are Character Classes and Shorthand Sequences in Regex?

Character classes in Regular Expressions (Regex) define which specific characters are allowed at a given position, while shorthand sequences provide compact notation for commonly used character sets, which makes them essential for regex NLP and text preprocessing. Character classes reduce complexity by grouping multiple characters into a single rule, which improves efficiency in regex data cleaning and AI pipelines.

The 8 main character classes and shorthand sequences in Regular Expressions (Regex) are listed below.

- [abc] — matches any single character “a,” “b,” or “c.”

- [^abc] — matches any character that is not “a,” “b,” or “c.”

- [a-z] — matches any lowercase letter from “a” to “z.”

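- \d — matches any digit; in Python 3 string patterns this includes all Unicode decimal digits, and the re.ASCII flag restricts it to 0-9.

- \w — matches any word character, including letters, digits, and underscore (Unicode-aware by default in Python 3).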

- \s — matches whitespace characters such as space, tab, or newline.

- \D, \W, \S — match non-digit, non-word, and non-whitespace characters respectively.

- [^\w\s] — matches any character that is not a word character or whitespace, which is widely used to remove punctuation in NLP preprocessing.

Character classes are critical for regex text preprocessing because patterns such as r'[^\w\s]' standardize input data by removing noise before AI model ingestion.
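The character classes above can be tried directly in Python. This sketch uses an invented sample string and also shows the effect of the re.ASCII flag, since Python 3 treats \w as Unicode-aware by default.

```python
import re

text = "Order #A12, café 2024!"

vowels = re.findall(r"[aeiou]", "regex")          # ['e', 'e']
non_vowels = re.findall(r"[^aeiou]", "regex")     # ['r', 'g', 'x']
digits = re.findall(r"\d", text)                  # ['1', '2', '2', '0', '2', '4']
words = re.findall(r"\w+", text)                  # ['Order', 'A12', 'café', '2024']
ascii_words = re.findall(r"\w+", text, re.ASCII)  # ['Order', 'A12', 'caf', '2024']

# Remove everything that is neither a word character nor whitespace
no_punct = re.sub(r"[^\w\s]", "", text)           # 'Order A12 café 2024'
```

With re.ASCII, the accented "é" no longer counts as a word character, so "café" is cut short; for multilingual NLP the Unicode default is usually what you want.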

What Are Quantifiers in Regex?

Quantifiers in Regular Expressions (Regex) define how many times a character, group, or pattern must appear, which allows regex patterns to control repetition and frequency in text matching. Quantifiers extend basic pattern matching by specifying optional, exact, or ranged occurrences, which improves flexibility in regex machine learning and data validation workflows.

The 6 main quantifiers in Regular Expressions (Regex) are listed below.

- * — matches 0 or more occurrences of the preceding element.

- + — matches 1 or more occurrences of the preceding element.

- ? — matches 0 or 1 occurrence, which makes the element optional.

- {n} — matches exactly n occurrences of the preceding element.

- {n,} — matches n or more occurrences.

- {n,m} — matches between n and m occurrences.

Quantifiers are essential in regex pattern matching because they control pattern flexibility, which enables scalable extraction, validation, and normalization across AI-driven text processing systems.
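Each quantifier from the list can be checked with re.findall(). This is a small sketch on invented strings; note that quantifiers are greedy by default, which is why "4444" yields "444" under {2,3}.

```python
import re

star = re.findall(r"ab*", "a ab abbb")                # ['a', 'ab', 'abbb']
plus = re.findall(r"ab+", "a ab abbb")                # ['ab', 'abbb']
optional = re.findall(r"colou?r", "color colour")     # ['color', 'colour']
exact = re.findall(r"\b\d{4}\b", "12 2024 123456")    # ['2024']
at_least = re.findall(r"\d{3,}", "7 42 512 65536")    # ['512', '65536']
ranged = re.findall(r"\d{2,3}", "1 22 333 4444")      # ['22', '333', '444']
```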

What Are Anchors and Word Boundaries in Regex?

Anchors and word boundaries in Regular Expressions (Regex) are positional tokens that match locations within text rather than actual characters, which enables precise control over where a pattern must occur. Anchors such as ^ and $ define the start and end of a string or line, while word boundaries such as \b and \B define transitions between word and non-word characters. This behavior matters because regex pattern matching often requires validation of entire inputs or exact positions instead of partial matches.

What do anchors (^ and $) do in regex pattern matching? Anchors ^ and $ enforce position-based matching by restricting patterns to the beginning or end of a string or line. The caret ^ matches the start position, while the dollar $ matches the end position, which ensures strict validation. For example, the pattern ^hello$ matches only the exact word “hello” and rejects “hello world.” This positional control matters because regex data validation relies on full-string matching to prevent incorrect or partial matches.
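A short Python sketch of the same anchor behavior, including how the re.MULTILINE flag makes ^ match at the start of every line rather than only at the start of the string:

```python
import re

exact = re.search(r"^hello$", "hello")          # Match object
partial = re.search(r"^hello$", "hello world")  # None: extra text after "hello"

text = "first line\nsecond line"
single = re.findall(r"^\w+", text)                    # ['first']
per_line = re.findall(r"^\w+", text, re.MULTILINE)    # ['first', 'second']
```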

What are word boundaries (\b and \B) and how do they work? Word boundaries \b match the position between a word character and a non-word character, while \B matches positions that are not word boundaries. A word character includes letters, digits, and underscores, which means \bcat\b matches the standalone word “cat” but not “concatenate.” This distinction matters because regex NLP workflows require accurate token-level matching to avoid false positives during text preprocessing.
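The difference between \b and \B shows up clearly on a sentence where "cat" appears both as a standalone word and inside longer words. The sample text is invented for illustration.

```python
import re

text = "cat concatenate cat's bobcat"

# \b on both sides: "cat" must start and end at a word boundary
whole = re.findall(r"\bcat\b", text)   # ['cat', 'cat']  (standalone "cat" and "cat's")

# \B on both sides: "cat" must sit strictly inside a word
inner = re.findall(r"\Bcat\B", text)   # ['cat']  (from "concatenate")
```

The apostrophe in "cat's" is a non-word character, so \b matches there; a tokenizer that should treat "cat's" differently needs an extra rule.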

What Are Groups and Capturing in Regex?

Groups and capturing in Regular Expressions (Regex) are mechanisms that organize subpatterns and store matched segments for reuse, extraction, or reference, which enables structured data retrieval from text. Groups use parentheses () to define logical units within a pattern, while capturing stores the matched content for later use in processing or substitution. This structure matters because regex machine learning and data extraction workflows depend on isolating meaningful components from raw text.

What are capturing groups, and how do they function? Capturing groups in regex are parentheses-based subpatterns that store matched text segments for reuse through indexing or naming. For example, the pattern (\d{3})-(\d{3})-(\d{4}) captures parts of a phone number into separate groups. Captured values can be retrieved by group number or name, and they can be reused inside the same pattern through backreferences such as \1 and \2. Named groups such as (?P<area>\d{3}) (Python syntax) are referenced with (?P=area) inside a pattern or \g<area> in a replacement string. This capability matters because regex data extraction requires structured outputs that can be reused in transformations or analysis.
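The following sketch uses Python's named-group syntax on a US-style phone format, which is assumed here only for illustration. It shows access by number and by name, a numbered backreference inside a pattern, a named backreference, and a named group reused in a replacement.

```python
import re

# Named groups for a phone number in the assumed NNN-NNN-NNNN format
PHONE = re.compile(r"(?P<area>\d{3})-(?P<exchange>\d{3})-(?P<line>\d{4})")

m = PHONE.search("Call 555-867-5309 today")
area = m.group(1)        # '555'  (by number)
line = m.group("line")   # '5309' (by name)
parts = m.groupdict()    # {'area': '555', 'exchange': '867', 'line': '5309'}

# \1 reuses group 1 inside the pattern: find a doubled word
doubled = re.search(r"\b(\w+) \1\b", "this is is a typo").group(1)  # 'is'

# (?P=d) is the named backreference: find a repeated digit
repeat_digit = re.search(r"(?P<d>\d)(?P=d)", "1233").group()  # '33'

# \g<name> reuses a named group in a replacement string
formatted = PHONE.sub(r"(\g<area>) \g<exchange>-\g<line>", "555-867-5309")  # '(555) 867-5309'
```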

What are non-capturing groups, and why are they used? Non-capturing groups (?:…) group patterns without storing matches, which keeps group numbering simple and can slightly reduce matching overhead. Non-capturing groups are used when grouping is required for logic but not for extraction. This distinction matters because unnecessary capturing adds overhead and complicates outputs, such as the results of re.findall(), in large-scale regex text preprocessing pipelines.
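One practical consequence in Python: when a pattern contains a capturing group, re.findall() returns the group's content instead of the whole match, and a repeated group keeps only its last repetition. A non-capturing group avoids both effects, as this small sketch shows.

```python
import re

text = "ababab x ab"

captured = re.findall(r"(ab)+", text)   # ['ab', 'ab']  (only the last repetition of the group)
grouped = re.findall(r"(?:ab)+", text)  # ['ababab', 'ab']  (whole matches)

# Grouping for alternation without shifting group numbers: the name is still group 1
name = re.match(r"(?:Mr|Ms)\. (\w+)", "Ms. Lee").group(1)  # 'Lee'
```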

What Are Lookahead and Lookbehind Assertions in Regex?

Lookahead and lookbehind assertions in Regular Expressions (Regex) are zero-width conditions that check for patterns before or after a position without consuming characters, which enables conditional matching in complex text structures. These assertions allow regex engines to validate context while preserving the original match boundaries. This functionality matters because regex pattern matching often requires context-sensitive validation in AI data processing workflows.

What is lookahead in regex, and how does it work? Lookahead assertions verify that a specific pattern follows the current position without including it in the match. Positive lookahead (?=…) ensures the pattern exists ahead, while negative lookahead (?!…) ensures it does not. For example, \d+(?= USD) matches numbers only if followed by “USD.” This conditional logic matters because regex NLP and financial data parsing require context-aware extraction without altering the matched output.
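A minimal Python sketch of positive and negative lookahead on an invented price string. The \b tokens in the negative case matter: without them, \d+(?! USD) would backtrack and match "10" inside "100 USD".

```python
import re

prices = "Pay 100 USD or 90 EUR"

# Positive lookahead: digits followed by " USD"; " USD" itself is not part of the match
usd = re.findall(r"\d+(?= USD)", prices)          # ['100']

# Negative lookahead: whole numbers NOT followed by " USD"
not_usd = re.findall(r"\b\d+\b(?! USD)", prices)  # ['90']
```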

What is lookbehind in regex and how does it work? Lookbehind assertions verify that a specific pattern precedes the current position without including it in the match. Positive lookbehind (?<=…) ensures a preceding pattern exists, while negative lookbehind (?<!…) ensures it does not. For example, (?<=\$)\d+ matches numbers only if preceded by a dollar sign. This backward validation matters because regex data cleaning and extraction workflows often depend on identifying values based on prior context.
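The same idea in the backward direction, sketched in Python on invented text. Python's built-in re module requires lookbehind patterns to have a fixed width, so a lookbehind such as (?<=\$\d*) is rejected with an error.

```python
import re

text = "Total $45, tax 5, tip $10"

# Positive lookbehind: digits preceded by a dollar sign
dollars = re.findall(r"(?<=\$)\d+", text)  # ['45', '10']

# Negative lookbehind plus \b: whole numbers NOT preceded by a dollar sign
plain = re.findall(r"(?<!\$)\b\d+", text)  # ['5']
```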

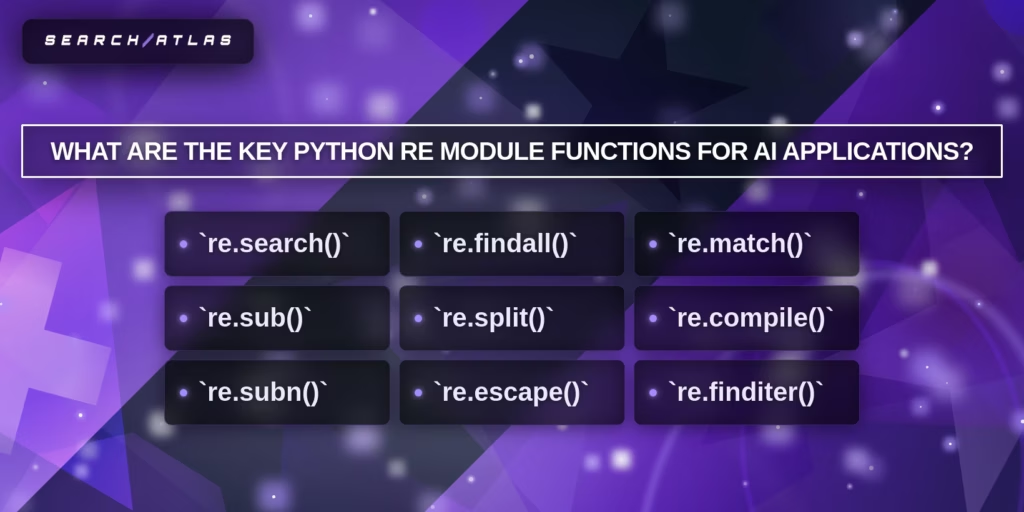

What Are the Key Python re Module Functions for AI?

The key Python re module functions for AI are built-in methods that enable pattern matching, extraction, transformation, and validation of text data, which makes them essential for regex NLP, regex data cleaning, and regex machine learning workflows. Python re module functions operate as execution interfaces for regex pattern matching, which allows AI systems to process unstructured text into structured, model-ready inputs.

The 9 key Python re module functions for AI applications are listed below.

- re.search() — scans the string and returns a Match object for the first match, or None if nothing matches.

- re.findall() — returns all non-overlapping matches as a list of strings, or of tuples when the pattern has multiple groups.

- re.match() — checks for a match only at the beginning of the string.

- re.sub() — replaces matched patterns with specified values.

- re.split() — splits text based on regex-defined delimiters.

- re.compile() — compiles a regex pattern into a reusable Pattern object for repeated use and cleaner code.

- re.subn() — performs replacement and returns a tuple of the new string and the number of substitutions.

- re.escape() — escapes special characters to match them literally.

- re.finditer() — returns an iterator of match objects with detailed metadata.

What does re.search() do in AI text processing?

re.search() is a regex function that scans a string to locate the first occurrence of a pattern at any position, which makes it essential for flexible information extraction in unstructured text. re.search() returns a Match object, whose methods such as .group(), .start(), .end(), and .span() expose the matched text, its captured groups, and its position, or None when no match exists. This flexibility matters because regex NLP workflows require pattern detection regardless of position in large datasets such as logs or documents.
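A minimal sketch of re.search() on an invented log line, showing group access, position offsets, and the None result that calling code should always check for:

```python
import re

log = "2024-05-01 12:00:01 ERROR timeout on db-2 after 30s"

m = re.search(r"(\d+)s\b", log)  # first number followed by "s", anywhere in the line
if m is not None:
    duration = m.group(1)        # '30'
    start, end = m.span()        # offsets of '30s' within log

missing = re.search(r"WARN", log)  # None: the pattern does not occur
```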

What does re.findall() do in AI data extraction?

re.findall() is a regex function that retrieves all non-overlapping matches of a pattern, which enables comprehensive data extraction across large text corpora. re.findall() always returns a list: of matched strings when the pattern has no capturing groups, of group contents when it has one group, and of tuples when it has several, which supports downstream processing. This function matters because regex machine learning pipelines often require every instance of an entity, such as dates, prices, or keywords.
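The three return shapes of re.findall() can be seen side by side in this sketch; the invoice text is invented for illustration.

```python
import re

text = "Invoices dated 2024-01-15 and 2024-02-20 total $120 and $75."

dates = re.findall(r"\d{4}-\d{2}-\d{2}", text)        # no groups: full matches
amounts = re.findall(r"\$(\d+)", text)                # one group: group strings
parts = re.findall(r"(\d{4})-(\d{2})-(\d{2})", text)  # several groups: tuples
```

Here dates is ['2024-01-15', '2024-02-20'], amounts is ['120', '75'], and parts is [('2024', '01', '15'), ('2024', '02', '20')].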

What does re.match() do in AI validation tasks?

re.match() is a regex function that checks for a pattern only at the beginning of a string, which makes it useful for validating structured prefixes. Because re.match() does not require the pattern to reach the end of the string, strict validation of formats such as IDs or timestamps should end the pattern with $ or use re.fullmatch(). This behavior matters because regex data validation requires controlled input structures before AI model ingestion.
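The gap between re.match() and re.fullmatch() is easy to miss in validation code. This sketch uses a hypothetical ID format of three capital letters, a hyphen, and four digits.

```python
import re

ID_RE = re.compile(r"[A-Z]{3}-\d{4}")  # hypothetical ID format

starts_ok = ID_RE.match("ABC-1234-extra")   # Match: only the start is checked
wrong_start = ID_RE.match("x ABC-1234")     # None: pattern must begin at position 0
strict = ID_RE.fullmatch("ABC-1234-extra")  # None: the whole string must match
valid = ID_RE.fullmatch("ABC-1234")         # Match
```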

What does re.sub() do in regex data cleaning?

re.sub() is a regex function that replaces matched patterns with specified values, which makes it essential for data cleaning and normalization in AI workflows. re.sub() supports dynamic replacements using functions and capturing groups, which enables advanced transformations such as anonymization or formatting. This function matters because regex text preprocessing requires consistent and standardized inputs.
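Both replacement styles mentioned above can be sketched briefly: a group reference in the replacement string, and a function that computes each replacement. The masking rules here are illustrative assumptions, not a complete anonymization scheme.

```python
import re

# Group reference in the replacement: hide the user part, keep the domain
masked_emails = re.sub(r"[\w.-]+@([\w.-]+)", r"<user>@\1", "Mail ana@example.com or bo@test.org")
# 'Mail <user>@example.com or <user>@test.org'

def mask_digits(match: re.Match) -> str:
    # Keep only the last four digits of a long number
    digits = match.group(0)
    return "*" * (len(digits) - 4) + digits[-4:]

masked_card = re.sub(r"\d{8,}", mask_digits, "Card 4111111111111111 on file")
# 'Card ************1111 on file'
```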

What does re.split() do in regex NLP pipelines?

re.split() is a regex function that divides text based on pattern-defined delimiters, which enables flexible tokenization and segmentation. re.split() supports complex delimiters, and when the pattern contains capturing groups, the matched delimiters are included in the resulting list, which improves parsing of semi-structured data. This function matters because tokenization is a foundational step in regex NLP and machine learning pipelines.
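A short sketch of both behaviors: splitting on a mixed set of delimiters, and keeping the delimiters by wrapping them in a capturing group.

```python
import re

fields = re.split(r"[,;\s]+", "alpha, beta;gamma  delta")  # ['alpha', 'beta', 'gamma', 'delta']
with_delims = re.split(r"([,;])", "a,b;c")                 # ['a', ',', 'b', ';', 'c']
```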

What does re.compile() do for regex performance in AI systems?

re.compile() is a regex function that converts patterns into reusable Pattern objects, which improves maintainability and can improve performance in large-scale AI applications. A compiled pattern can be reused without re-parsing and carries its flags with it. Python's re module also caches recently used patterns internally, so the speed gain over module-level calls is usually modest compared with the gain in clarity. This function matters because AI systems process large datasets where efficiency and consistency are critical.
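A minimal sketch of the compile-once, reuse-everywhere style over a small invented document list. The IGNORECASE flag is attached to the compiled pattern, so each call site does not need to repeat it.

```python
import re

# Compile once at module level and reuse across every document
DATE_RE = re.compile(r"\b(\d{4})-(\d{2})-(\d{2})\b")
REGEX_WORD_RE = re.compile(r"\bregex\b", re.IGNORECASE)  # flag stored with the pattern

docs = [
    "Regex notes from 2023-11-02",
    "No dates here, just REGEX and regex",
    "Logs: 2024-01-05, 2024-02-09",
]

years = [m.group(1) for doc in docs for m in DATE_RE.finditer(doc)]  # ['2023', '2024', '2024']
mentions = [len(REGEX_WORD_RE.findall(doc)) for doc in docs]         # [1, 2, 0]
```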

What does re.subn() do in AI preprocessing pipelines?

re.subn() is a regex function that performs pattern replacement and returns both the modified string and the number of substitutions, which enables monitoring and evaluation of preprocessing steps. This dual output supports tracking data transformations and detecting anomalies. This function matters because AI pipelines require measurable preprocessing operations.
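A small sketch of using re.subn() counts to monitor a preprocessing step across a batch. The function name normalize_batch is hypothetical; in a real pipeline the total might be logged or compared against an expected range.

```python
import re

WS_RE = re.compile(r"\s+")

def normalize_batch(texts):
    """Collapse whitespace runs and report how many replacements were made."""
    cleaned, total = [], 0
    for text in texts:
        new_text, count = WS_RE.subn(" ", text.strip())
        cleaned.append(new_text)
        total += count
    return cleaned, total

cleaned, total = normalize_batch(["too   many    spaces", "ok", " tab\there "])
# cleaned == ['too many spaces', 'ok', 'tab here'], total == 3
```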

What does re.escape() do in regex-based AI systems?

re.escape() is a regex function that escapes all special characters in a string, which ensures literal matching and prevents unintended pattern behavior. re.escape() is critical when handling user input or dynamic patterns. This function matters because regex machine learning systems must avoid errors and security risks such as regex injection.
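A minimal illustration of why user input should be escaped before it becomes part of a pattern. The query "3.5" is an invented example: unescaped, its dot matches any character.

```python
import re

user_query = "3.5"
text = "versions 345 and 3.5"

unsafe = re.findall(user_query, text)            # ['345', '3.5']: "." matched "4"
safe = re.findall(re.escape(user_query), text)   # ['3.5']
```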

What does re.finditer() do in advanced AI text analysis?

re.finditer() is a regex function that returns an iterator of match objects with positional metadata, which enables detailed and memory-efficient text analysis. re.finditer() provides start and end indices for each match, which supports contextual analysis and annotation. This function matters because advanced regex NLP tasks, such as entity recognition and n-gram generation, require both content and position awareness.
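A short sketch of re.finditer() producing annotation-style spans for mentions and hashtags in an invented sentence. Each Match object supplies both the text and its offsets, and the iterator yields matches one at a time instead of building a full list first.

```python
import re

text = "Contact @ana or @bo_92 about #NLP"

annotations = [(m.group(), m.start(), m.end()) for m in re.finditer(r"[@#]\w+", text)]
# [('@ana', 8, 12), ('@bo_92', 16, 22), ('#NLP', 29, 33)]
```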

How Are Regex Patterns Used for NLP Text Preprocessing?

Regex patterns are used for NLP text preprocessing to clean, normalize, and structure raw text data, which directly improves model accuracy and consistency in artificial intelligence systems. Regex NLP workflows apply rule-based pattern matching to remove noise, standardize formats, and prepare text for tokenization and feature extraction, which ensures that machine learning models receive high-quality input data.

The 8 main ways regex patterns are used for NLP text preprocessing are listed below.

- Removing punctuation and special characters. Regex patterns such as [^\w\s] match any character that is neither a word character nor whitespace, so replacing those matches removes punctuation and symbols from text data. This step matters because punctuation can distort token distributions and reduce model performance in NLP tasks.

- Normalizing whitespace. Regex patterns such as \s+ replace multiple spaces, tabs, or newlines with a single space, which standardizes text formatting. This normalization matters because inconsistent spacing affects tokenization and downstream processing.

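- Converting text to lowercase. Case normalization is usually done with a string method such as Python's str.lower() before the regex steps, or handled during matching with the re.IGNORECASE flag, to ensure consistent token representation. This process matters because many tokenizers and vectorizers treat “Word” and “word” as different tokens without normalization.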

- Removing numbers or filtering digits. Regex patterns such as \d+ remove or extract numeric values depending on the use case. This step matters because numbers may introduce noise or require separate feature handling in NLP models.

- Tokenizing text into words. Regex patterns such as \b\w+\b extract individual tokens from text, which supports lexical analysis. This tokenization matters because NLP models require structured input units for processing.

- Extracting specific entities. Regex patterns such as @\w+ for mentions or #\w+ for hashtags identify entities within text. This extraction matters because entity recognition supports feature engineering and classification tasks.

- Removing stopwords or unwanted patterns. Regex patterns filter predefined terms or patterns, which reduces irrelevant content. This filtering matters because removing low-value tokens improves signal quality in NLP datasets.

- Standardizing text formats. Regex patterns transform text into consistent formats, such as replacing contractions or normalizing URLs. This standardization matters because consistent structure improves model interpretability and performance.

Regex text preprocessing forms the first layer of NLP pipelines because it converts raw, inconsistent text into structured, normalized input, which enables accurate tokenization, feature engineering, and model training in AI systems.
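Several of the steps above can be chained into one function. This is a minimal sketch that assumes a task where URLs and numbers count as noise; the function name preprocess, the step order, and the choice to drop rather than tag entities are illustrative assumptions.

```python
import re

URL_RE = re.compile(r"https?://\S+")
PUNCT_RE = re.compile(r"[^\w\s]")
DIGIT_RE = re.compile(r"\d+")
SPACE_RE = re.compile(r"\s+")

def preprocess(text: str) -> str:
    text = text.lower()                     # case normalization (string method, not regex)
    text = URL_RE.sub(" ", text)            # drop URLs before punctuation removal splits them
    text = PUNCT_RE.sub(" ", text)          # remove punctuation and special characters
    text = DIGIT_RE.sub(" ", text)          # remove numbers (task-dependent)
    return SPACE_RE.sub(" ", text).strip()  # normalize whitespace last

cleaned = preprocess("Check https://example.com NOW!!  Price: 42   dollars.")
# 'check now price dollars'
```

Order matters: removing punctuation before URLs would break "https://example.com" into fragments that the URL pattern no longer recognizes.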

How Does Regex Enable Custom Tokenization?

Regex enables custom tokenization by defining precise rules that split text into meaningful units based on patterns rather than fixed delimiters, which allows AI systems to control how language is segmented. Regex tokenization uses patterns such as \b\w+\b, \w+|[^\w\s], or domain-specific rules to extract words, symbols, or mixed tokens depending on the task. This flexibility matters because simple whitespace or punctuation splitting handles irregular structures such as hashtags, code snippets, or multilingual content poorly.

How does regex tokenization improve NLP processing accuracy? Regex tokenization improves NLP processing accuracy by allowing selective inclusion or exclusion of characters, which ensures that tokens match the intended linguistic or analytical structure. For example, the pattern \w+ly\b extracts words ending in “ly,” a rough heuristic for adverbs that also catches words such as “family,” while #\w+ captures hashtags as single tokens. This control matters because regex NLP pipelines require consistent token boundaries to maintain semantic integrity and improve downstream model performance.
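A minimal custom tokenizer in this style is sketched below. Alternation is tried left to right, so listing #\w+ and @\w+ before \w+ keeps hashtags and mentions whole; the token rules are illustrative assumptions for social-media text.

```python
import re

# Hashtags and mentions first, then words, then single non-space symbols
TOKEN_RE = re.compile(r"#\w+|@\w+|\w+|[^\w\s]")

tokens = TOKEN_RE.findall("Loving #NLP, thanks @ana!")
# ['Loving', '#NLP', ',', 'thanks', '@ana', '!']

ly_words = re.findall(r"\w+ly\b", "She quickly and quietly left the family home")
# ['quickly', 'quietly', 'family']  (the heuristic also catches "family")
```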

How Does Regex Normalize Entities for Feature Engineering?

Regex normalizes entities for feature engineering by transforming variable text patterns into consistent, structured representations, which enables machine learning models to process equivalent data uniformly. Regex normalization replaces variations of the same entity with standardized formats using patterns such as \d{4}-\d{2}-\d{2} for dates or [\w.-]+@[\w.-]+ for emails. This normalization matters because inconsistent representations reduce feature reliability and introduce noise into model training.
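A minimal sketch of rewriting one date variant into the \d{4}-\d{2}-\d{2} form with group references. It assumes month-first MM/DD/YYYY input; day-first data would need the groups swapped, and real pipelines often handle several input formats.

```python
import re

# Assumes US-style month-first dates such as 03/14/2024
US_DATE = re.compile(r"\b(\d{2})/(\d{2})/(\d{4})\b")

def to_iso_dates(text: str) -> str:
    return US_DATE.sub(r"\3-\1-\2", text)

normalized = to_iso_dates("Due 03/14/2024, paid 04/01/2024")
# 'Due 2024-03-14, paid 2024-04-01'
```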

How does entity normalization improve feature consistency in AI systems? Entity normalization improves feature consistency by converting diverse inputs into unified tokens or placeholders, which simplifies feature extraction and reduces dimensionality. For example, replacing all URLs with <URL> or all numbers with <NUM> ensures that models focus on semantic structure rather than irrelevant variation. This process matters because regex machine learning pipelines depend on stable and comparable features for accurate predictions.
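Placeholder normalization can be sketched as a sequence of substitutions. The placeholder names and patterns here are assumptions for illustration, and the order is deliberate: URLs and emails go first so their digits and dots are not turned into <NUM> tokens.

```python
import re

def normalize_entities(text: str) -> str:
    text = re.sub(r"https?://\S+", "<URL>", text)              # URLs first (may contain digits)
    text = re.sub(r"[\w.-]+@[\w.-]+", "<EMAIL>", text)         # loose email pattern
    text = re.sub(r"\b\d{1,2}/\d{1,2}/\d{4}\b", "<DATE>", text)
    text = re.sub(r"\b\d+(?:\.\d+)?\b", "<NUM>", text)         # remaining integers and decimals
    return text

result = normalize_entities(
    "Email bob@example.com by 03/14/2024 or visit https://example.com/p/42 for 3 offers"
)
# 'Email <EMAIL> by <DATE> or visit <URL> for <NUM> offers'
```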

How Are Regex Patterns Used for Data Validation in AI Systems?

Regex patterns are used for data validation in AI systems by enforcing strict input formats and detecting invalid or inconsistent data before processing, which ensures data quality and reliability. Regex data validation applies rules such as ^[\w.-]+@[\w.-]+\.\w+$ for email validation or ^\d{3}-\d{3}-\d{4}$ for phone numbers to verify structural correctness. This validation matters because AI systems rely on clean, structured data to produce accurate outputs.

How does regex validation improve data integrity in AI workflows? Regex validation improves data integrity by rejecting malformed inputs and standardizing accepted formats, which reduces error propagation in machine learning pipelines. Anchors such as ^ and $ enforce full-string validation (in Python, $ also matches just before a trailing newline, so re.fullmatch() or \Z is stricter), while quantifiers and character classes define exact constraints. This precision matters because regex data cleaning and validation act as gatekeeping layers that protect AI systems from unreliable or noisy data inputs.
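The two validation patterns from this section can be wrapped in a small helper. This sketch drops the ^ and $ anchors in favor of Pattern.fullmatch(), and it also demonstrates the trailing-newline case that the anchored form lets through. The email pattern is the section's own loose rule, not a full RFC-compliant validator.

```python
import re

EMAIL_RE = re.compile(r"[\w.-]+@[\w.-]+\.\w+")
PHONE_RE = re.compile(r"\d{3}-\d{3}-\d{4}")

def is_valid(pattern: re.Pattern, value: str) -> bool:
    # fullmatch() requires the whole value to fit the pattern
    return pattern.fullmatch(value) is not None

email_ok = is_valid(EMAIL_RE, "ana@example.com")  # True
email_bad = is_valid(EMAIL_RE, "ana@localhost")   # False: no dot in the domain
phone_ok = is_valid(PHONE_RE, "555-123-4567")     # True
phone_bad = is_valid(PHONE_RE, "555-1234")        # False

# The ^...$ form accepts a trailing newline; fullmatch() does not
loose = re.match(r"^\d{3}-\d{3}-\d{4}$", "555-123-4567\n") is not None  # True
strict = is_valid(PHONE_RE, "555-123-4567\n")                           # False
```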

How Is Regex Used for Web Scraping and Log Parsing?

Regex is used for web scraping and log parsing by extracting, structuring, and validating specific data patterns from unstructured or semi-structured sources such as HTML pages, JSON responses, and system logs, which enables AI systems to convert raw data into usable datasets. Regex pattern matching scans large text inputs and isolates entities such as URLs, IP addresses, timestamps, and identifiers, which supports automated data pipelines in AI workflows.

How does regex extract data during web scraping in AI workflows? Regex extracts data in web scraping by matching predefined patterns within raw HTML or API responses, which allows AI systems to identify and collect targeted information at scale. Patterns such as https?://\S+ extract URLs, while [\w.-]+@[\w.-]+ captures email addresses from web content. Regex integrates with HTTP request libraries to process responses and isolate relevant fields, which matters because AI systems rely on structured datasets for tasks such as threat intelligence, sentiment analysis, and data aggregation. For deeply nested HTML, a dedicated HTML parser is usually more robust, and regex works best on flat, predictable fragments.
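The sketch below runs the section's patterns on an invented HTML fragment; fetching the page with an HTTP library is left out. It shows that \S+ happily runs past the closing quote of an href attribute, so a tighter character class that stops at quotes and angle brackets is usually needed inside markup.

```python
import re

# Invented HTML fragment; a real scraper would take this from a response body
html = '<a href="https://example.com/docs">Docs</a> Contact: team@example.org'

broad = re.findall(r"https?://\S+", html)           # over-matches into the markup
tight = re.findall(r"https?://[^\s\"'<>]+", html)   # stops at quotes and angle brackets
emails = re.findall(r"[\w.-]+@[\w.-]+", html)
```

Here broad is ['https://example.com/docs">Docs</a>'], while tight is ['https://example.com/docs'] and emails is ['team@example.org'].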

How do regex filters ensure data accuracy in web scraping pipelines? Regex filters ensure data accuracy by validating and sanitizing extracted data against strict format rules before it enters AI systems. Patterns enforce constraints such as valid IP formats or domain structures, which reduces noise and prevents malformed data from propagating. This validation matters because regex data cleaning improves the reliability of downstream AI models and enhances detection accuracy in applications such as cybersecurity and analytics.
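A regex checks shape, not numeric range, so IP filtering typically pairs a pattern with a small value check. This is a minimal sketch on an invented list of scraped candidates; the function name is_valid_ipv4 is hypothetical, and Python's standard ipaddress module is a stricter alternative.

```python
import re

IPV4_RE = re.compile(r"(\d{1,3})\.(\d{1,3})\.(\d{1,3})\.(\d{1,3})")

def is_valid_ipv4(candidate: str) -> bool:
    m = IPV4_RE.fullmatch(candidate)
    # The regex checks the dotted-quad shape; the range check rejects octets above 255
    return m is not None and all(int(octet) <= 255 for octet in m.groups())

scraped = ["192.168.1.10", "999.1.1.1", "10.0.0", "8.8.8.8"]
clean = [ip for ip in scraped if is_valid_ipv4(ip)]
# ['192.168.1.10', '8.8.8.8']
```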

How is regex used for log parsing in AI systems? Regex is used for log parsing by defining patterns that extract structured fields such as timestamps, error codes, IP addresses, and user actions from large log files, which enables automated analysis and monitoring. For example, patterns such as \d{4}-\d{2}-\d{2} extract dates, while \b\d{1,3}(?:\.\d{1,3}){3}\b identifies IPv4-shaped addresses; the non-capturing group keeps re.findall() returning whole addresses, and values above 255 still need a separate range check. This automation matters because AI systems process large-scale logs to detect anomalies, monitor performance, and support incident response workflows.
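A minimal log-parsing sketch with named groups, assuming a hypothetical space-separated format of date, time, level, IP address, and message. Lines that do not fit the assumed format are skipped rather than raising errors. The last line shows why the IP group should be non-capturing when re.findall() is used.

```python
import re

# Hypothetical log format: "YYYY-MM-DD HH:MM:SS LEVEL IP message"
LOG_RE = re.compile(
    r"(?P<date>\d{4}-\d{2}-\d{2}) (?P<time>\d{2}:\d{2}:\d{2}) "
    r"(?P<level>[A-Z]+) (?P<ip>\d{1,3}(?:\.\d{1,3}){3}) (?P<msg>.*)"
)

def parse_logs(lines):
    records = []
    for line in lines:
        m = LOG_RE.match(line)
        if m:  # skip lines that do not fit the assumed format
            records.append(m.groupdict())
    return records

records = parse_logs([
    "2024-05-01 12:00:01 ERROR 10.0.0.5 Login failed for user admin",
    "2024-05-01 12:00:07 INFO 10.0.0.8 Login succeeded",
    "malformed line",
])

# With a capturing group, findall returns only the last repetition of that group
pitfall = re.findall(r"\b\d{1,3}(\.\d{1,3}){3}\b", "10.0.0.5")  # ['.5']
```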

How do advanced regex techniques improve log analysis in AI workflows? Advanced regex techniques such as lookaheads, lookbehinds, and named groups enable context-aware pattern matching, which improves precision in complex log environments. Lookarounds filter entries on specific conditions by checking surrounding context without consuming it, and extracted fields such as IP addresses or session IDs can then be used to correlate related events. This capability matters because AI-driven log analysis requires granular pattern detection for threat hunting, anomaly detection, and forensic investigation.
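Lookaheads can combine several line-level conditions into one pattern. This sketch, on invented log lines, keeps lines that contain ERROR but do not mention timeout; re.MULTILINE makes ^ and $ apply per line, and . does not cross newlines.

```python
import re

log = """\
2024-05-01 ERROR db timeout after 30s
2024-05-01 ERROR disk full on /var
2024-05-01 INFO job finished
"""

# Positive lookahead requires ERROR; negative lookahead excludes timeout
ERROR_NO_TIMEOUT = re.compile(r"^(?=.*\bERROR\b)(?!.*\btimeout\b).*$", re.MULTILINE)

matches = ERROR_NO_TIMEOUT.findall(log)
# ['2024-05-01 ERROR disk full on /var']
```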

How do regex and AI work together in hybrid data processing systems? Regex and AI work together by combining deterministic pattern matching with probabilistic model inference, which improves both accuracy and explainability in data processing workflows. Regex provides transparent, rule-based validation, while AI models detect patterns that are not explicitly defined. This hybrid approach matters because it balances precision and adaptability in applications such as security monitoring and large-scale data ingestion.

How can regex performance be optimized for large-scale scraping and log parsing? Regex performance is optimized by using efficient pattern design, compiled expressions, and constrained matching rules, which reduce processing time and prevent excessive backtracking. Techniques include using anchors (^, $), non-capturing groups, and specific character classes instead of broad patterns such as .*. This optimization matters because large-scale AI systems process very large volumes of log and text data, where inefficient regex patterns can cause latency or, in extreme backtracking cases, stalled processing.
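A small sketch of two of these techniques together: a specific pattern compiled once, and Pattern.match(), which only tries position 0. A line that does not start with a date fails within a few characters instead of being scanned end to end as search() would do. The function name dated_lines is hypothetical.

```python
import re

DATE_PREFIX = re.compile(r"\d{4}-\d{2}-\d{2}")  # compiled once, reused for every line

def dated_lines(lines):
    # match() is implicitly anchored at the start of each line
    return [line for line in lines if DATE_PREFIX.match(line)]

kept = dated_lines(["2024-05-01 ok", "no date here 2024-05-01", "2024-06-02 ERROR"])
# ['2024-05-01 ok', '2024-06-02 ERROR']
```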

How do poorly designed regex patterns affect parsing performance? Poorly designed regex patterns cause excessive backtracking and matching ambiguity, which leads to performance degradation and potential parsing failures. Patterns such as .* can expand unpredictably and increase computation time, especially in long logs. This issue matters because reliable regex pattern matching is critical for maintaining stability and scalability in AI-driven data pipelines.
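The unpredictability of .* is easy to see on a single invented log field: the greedy dot runs to the last quote in the line, while a negated character class stops at the first one.

```python
import re

line = 'user="ana" role="admin"'

greedy = re.search(r'user="(.*)"', line).group(1)      # 'ana" role="admin'
specific = re.search(r'user="([^"]*)"', line).group(1)  # 'ana'

# Nested quantifiers such as (a+)+$ can backtrack exponentially on inputs like
# "aaaa...ab"; prefer patterns with a single, specific quantifier (not executed here).
```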

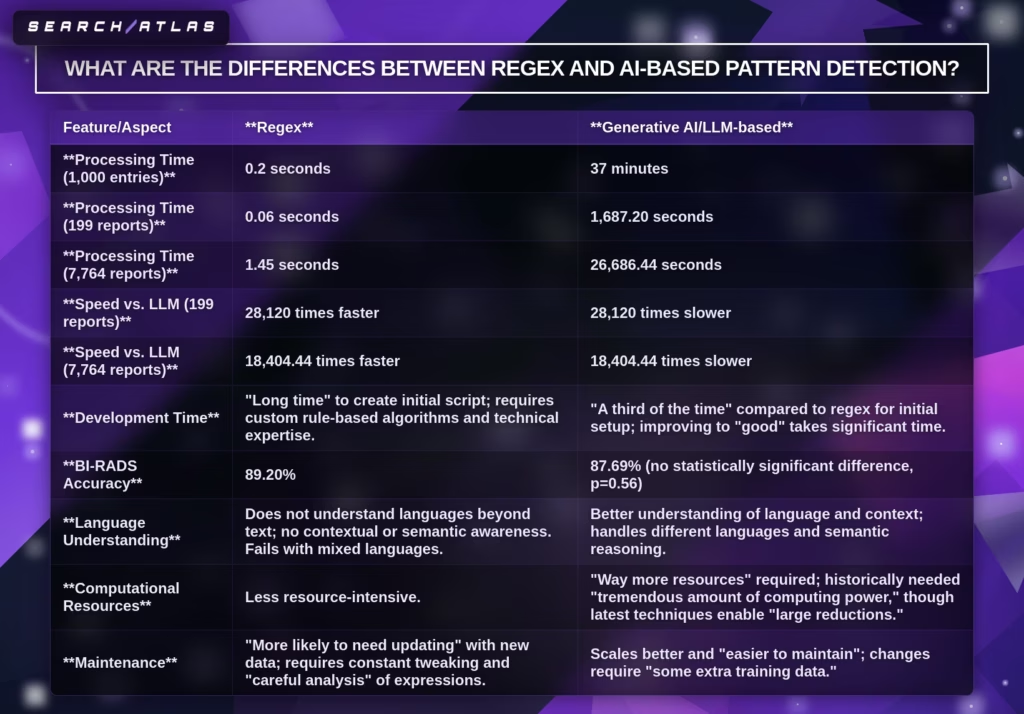

What Are the Differences Between Regex and AI-Based Detection?

Regex and AI-based detection are two pattern extraction approaches that differ in processing method, speed, flexibility, and resource requirements, which determine how each method performs in AI data workflows. Regular expressions (regex) use deterministic, rule-based pattern matching, while AI-based detection uses probabilistic models such as large language models (LLMs) to interpret context and semantics. This difference matters because the choice impacts accuracy, scalability, cost, and suitability for structured versus unstructured data.

The key differences between regex and AI-based detection are listed below.

- Processing approach. Regex uses explicit rule-based pattern matching, while AI-based detection uses machine learning models that infer patterns from data. Regex pattern matching follows predefined syntax, while AI systems learn from examples and context.

- Processing speed. Regex executes very fast, often processing thousands of entries in milliseconds, while AI-based detection requires significantly more time due to model inference. Depending on the pattern, model, and hardware, regex can be several orders of magnitude faster in large-scale text processing tasks.

- Language understanding. Regex does not understand semantics or context and only matches text patterns, while AI-based detection interprets meaning, intent, and linguistic variation. AI systems handle ambiguity, synonyms, and multilingual inputs more effectively.

- Accuracy for structured data. Regex provides very high precision for well-defined patterns such as emails, IDs, or numeric formats, provided the pattern matches the format exactly, while AI-based detection may introduce variability. Regex cannot hallucinate because it only returns text that is present in the input and satisfies its rules.

- Performance on unstructured data. Regex struggles with inconsistent, messy, or context-dependent text, while AI-based detection performs better by adapting to variations and incomplete data. AI systems handle typos, missing fields, and natural language complexity.

- Computational resources. Regex requires minimal computational resources and runs efficiently on standard hardware, while AI-based detection requires significantly more compute power, including GPUs or cloud infrastructure.

- Development time. Regex requires longer initial development due to manual rule creation, while AI-based detection can produce initial results faster using pre-trained models. However, refining AI systems requires additional training and tuning.

- Maintenance and scalability. Regex requires ongoing updates when patterns change, while AI-based systems adapt through retraining and scale better with evolving data. Regex maintenance depends on rule updates, while AI maintenance depends on data updates.

- Explainability. Regex provides transparent, explainable logic because every pattern is explicitly defined, while AI-based detection often behaves as a black box whose individual decisions are hard to trace. This difference matters for auditing and compliance.

- Cost structure. Regex is cost-efficient due to low computational requirements and fast execution, while AI-based detection incurs higher operational costs due to longer processing times and infrastructure demands.

Regex is best suited for structured or semi-structured data with clearly defined patterns, high-speed requirements, and low-resource environments. AI-based detection is best suited for unstructured, context-heavy data that requires semantic understanding and adaptability.

Regex and AI-based detection work most effectively in hybrid systems where deterministic pattern matching and probabilistic reasoning complement each other, which improves both precision and flexibility. Hybrid workflows use regex for strict validation and extraction, while AI models handle ambiguity and contextual interpretation. This combination matters because modern AI pipelines often require both exact pattern control and adaptive intelligence for optimal performance.
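A minimal Python sketch of this hybrid pattern, using the standard re module: regex handles the exact email format first, a model is consulted only when no exact match exists, and the model's answer is re-validated by the same rule. The toy_model function is a hypothetical stand-in for an LLM or NER call, and all names here are illustrative rather than a real library API.

```python
import re
from typing import Callable, Optional, Tuple

# Deterministic rule for a well-defined format: the email pattern used in this
# article, without ^/$ anchors so it can find an address inside longer text.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def hybrid_extract_email(
    text: str, model_fallback: Callable[[str], Optional[str]]
) -> Tuple[Optional[str], str]:
    """Return (value, source): regex first, model only when regex finds nothing."""
    match = EMAIL_RE.search(text)
    if match:
        # Exact format present: cheap, explainable, no model call needed.
        return match.group(0), "regex"
    # No exact match: ask the probabilistic component (hypothetical here).
    guess = model_fallback(text)
    # Validate the model's answer with the same strict rule before trusting it.
    if guess is not None and EMAIL_RE.fullmatch(guess):
        return guess, "model"
    return None, "none"


def toy_model(text: str) -> Optional[str]:
    """Hypothetical stand-in for an LLM/NER call: undoes ' at ' / ' dot ' obfuscation."""
    candidate = text.replace(" at ", "@").replace(" dot ", ".")
    return next((word for word in candidate.split() if "@" in word), None)


print(hybrid_extract_email("Contact: jane@example.com", toy_model))         # ('jane@example.com', 'regex')
print(hybrid_extract_email("Contact: jane at example dot com", toy_model))  # ('jane@example.com', 'model')
```

Keeping the regex as the final gate means the model can widen recall without letting a malformed value into the pipeline.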

What Are the Most Common Regex Patterns for AI Practitioners?

The most common regex patterns for AI practitioners are reusable pattern templates that validate, extract, split, and normalize structured text entities such as emails, URLs, IP addresses, dates, phone numbers, and identifiers. These regex patterns matter because AI workflows depend on reliable text preprocessing, data validation, feature extraction, and data cleaning before machine learning models process inputs.

Common regex patterns give AI practitioners fast, deterministic control over repetitive text structures, which makes regex pattern matching a foundational layer in regex NLP, regex data cleaning, and regex machine learning workflows.

The most common regex patterns every AI practitioner should know are listed below.

- Email validation. Email validation patterns such as ^[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}$ verify whether an email address follows a valid structural format. This regex pattern is common because email fields appear in user records, CRM systems, and web forms, which makes validation important for input quality and downstream segmentation.

- URL matching. URL matching patterns such as https?://\S+ or stricter protocol-based variants extract and validate web addresses from text. This regex pattern matters because AI systems scrape pages, process links, analyze sources, and normalize URL-based features during data collection and content analysis.

- IPv4 address validation. IPv4 validation patterns such as ^(?:(?:25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)\.){3}(?:25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)$ verify four-octet IP structures. This regex pattern is important in cybersecurity, log parsing, and infrastructure analytics because AI workflows often process IP-based entities in network and threat datasets.

- Strong password validation. Strong password patterns such as ^(?=.*[a-z])(?=.*[A-Z])(?=.*\d)(?=.*[@$!%*?&]).{8,}$ enforce minimum complexity rules. This regex pattern is widely used because authentication systems require structured password validation before account creation or security checks.

- International phone number (E.164 format). E.164 patterns such as ^\+[1-9]\d{1,14}$ validate international phone numbers with a leading plus sign and up to 15 digits. This regex pattern matters because AI systems process global customer records, call data, and messaging workflows that require standardized telephone formats.

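- Date format (YYYY-MM-DD). Date patterns such as ^\d{4}-(0[1-9]|1[0-2])-(0[1-9]|[12]\d|3[01])$ validate the shape of ISO-style calendar dates. The pattern checks format, not calendar validity: it accepts impossible dates such as 2024-02-31, so a date-parsing step is still needed when correctness matters. This regex pattern is common because standardized dates improve sorting, filtering, feature engineering, and consistency across AI datasets.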

- Hex color code. Hex color patterns such as ^#?([A-Fa-f0-9]{6}|[A-Fa-f0-9]{3})$ validate 3-digit and 6-digit color values. This regex pattern appears in design systems, UI generation, styling tools, and content workflows where structured color values must be validated.

- Extract HTML tags (simple). HTML tag extraction patterns such as <[^>]+> identify basic HTML tag structures inside text. This regex pattern is useful for simple content cleaning, markup removal, and preprocessing of scraped or exported web content before AI analysis.

- Credit card number (basic detection). Credit card detection patterns such as \b(?:\d[ -]*?){13,16}\b identify card-like numeric strings. This regex pattern matters in compliance, security, and sensitive-data detection because AI workflows often need to flag or redact financial information before processing.

- Slug generator (URL-safe string). Slug-related patterns such as [^a-z0-9]+ help replace unsafe characters and normalize text into URL-safe strings. This regex pattern is useful in content management, SEO workflows, and AI-assisted publishing because titles often need conversion into standardized slugs.

- Digits. Digit patterns such as \d+ extract or validate numeric sequences. This regex pattern is one of the most fundamental because AI preprocessing often isolates counts, IDs, prices, codes, or measurements from unstructured text.

- Alphanumeric characters. Alphanumeric patterns such as ^[A-Za-z0-9]+$ validate strings that contain only letters and digits. This regex pattern matters for usernames, IDs, codes, and field-level validation where symbols must be excluded.

- Time. Time patterns such as ^(?:[01]\d|2[0-3]):[0-5]\d$ validate 24-hour time strings. This regex pattern is common in scheduling data, logs, event records, and structured temporal datasets that AI systems use for sequence analysis.

- Match duplicates. Duplicate-matching patterns such as \b(\w+)\s+\1\b identify repeated words or adjacent duplicate tokens. This regex pattern helps AI practitioners detect noisy text, duplicated entries, or repeated linguistic structures during preprocessing and quality checks.

- Identification (Social Security Number). SSN patterns such as ^\d{3}-\d{2}-\d{4}$ or stricter constrained variants validate United States Social Security Number formats. This regex pattern is important in privacy, redaction, and compliance workflows that must detect or mask sensitive identifiers.

- Zip codes. ZIP code patterns such as ^\d{5}(?:-\d{4})?$ validate 5-digit and ZIP+4 United States postal codes. This regex pattern matters for address normalization, location-based analytics, and customer data validation.

- IBAN. IBAN patterns such as ^[A-Z]{2}\d{2}[A-Z0-9]{11,30}$ validate the general structure of International Bank Account Numbers. This regex pattern is common in financial systems because international banking data requires strict format validation before storage or analysis.

- Percentage (1 to 100). Percentage patterns such as ^(100|[1-9]?\d)%?$ accept values from 0 to 100, with or without a trailing percent sign; when the business rule excludes 0, ^(100|[1-9]\d?)%?$ restricts the range to 1 to 100. This regex pattern matters in reporting, scoring systems, dashboards, and model outputs that express percentages as formatted text.

- Splitting full names. Name-splitting regex patterns help separate structured components such as first name, middle name, and last name from a full-name string. This regex pattern is useful in data cleaning and entity normalization, although complex name structures often require additional logic beyond regex alone.

- Splitting phone numbers (area code, prefix, and line number). Phone-splitting patterns such as ^\(?(\d{3})\)?[-\s]?(\d{3})[-\s]?(\d{4})$ capture the three parts of a 10-digit US-style phone number into separate groups. This regex pattern matters because AI systems often need area code, prefix, and line number as individual structured fields.

- Splitting addresses (house number, street, city). Address-splitting regex patterns capture components such as house number, street name, and city from semi-structured address lines. This regex pattern is useful for location normalization, CRM cleanup, delivery data processing, and fraud analysis, although address parsing often benefits from hybrid logic because real-world addresses vary heavily.
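Several of the validation patterns above can be compiled once and applied with Python's re fullmatch(), as in the sketch below. The dictionary keys and function names are illustrative assumptions. Two details are worth noting: the percentage entry uses [1-9]\d? so that 0 is rejected under a 1 to 100 rule, and the date check pairs the regex with datetime.date.fromisoformat, because the pattern alone accepts impossible dates such as 2024-02-31.

```python
import re
from datetime import date

# Several validation patterns from the list above, compiled once and reused.
VALIDATORS = {
    "email": re.compile(r"^[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}$"),
    "ipv4": re.compile(
        r"^(?:(?:25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)\.){3}"
        r"(?:25[0-5]|2[0-4]\d|1\d\d|[1-9]?\d)$"
    ),
    "e164": re.compile(r"^\+[1-9]\d{1,14}$"),
    "iso_date": re.compile(r"^\d{4}-(0[1-9]|1[0-2])-(0[1-9]|[12]\d|3[01])$"),
    "zip": re.compile(r"^\d{5}(?:-\d{4})?$"),
    # 1 to 100: [1-9]\d? rejects 0, unlike the 0-to-100 form [1-9]?\d.
    "percent_1_100": re.compile(r"^(100|[1-9]\d?)%?$"),
}


def is_valid(kind: str, value: str) -> bool:
    """Format check only. fullmatch() also rejects a trailing newline,
    which a bare $ would tolerate with match() or search()."""
    return VALIDATORS[kind].fullmatch(value) is not None


def is_real_date(value: str) -> bool:
    """Regex checks the shape; the datetime module checks calendar rules."""
    if not is_valid("iso_date", value):
        return False
    try:
        date.fromisoformat(value)
    except ValueError:
        return False
    return True


print(is_valid("iso_date", "2024-02-31"), is_real_date("2024-02-31"))  # True False
```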

These regex patterns form a practical baseline for AI practitioners because they cover the most common validation, extraction, and normalization tasks across text preprocessing, security, analytics, and structured data preparation. AI workflows need deterministic handling of these repetitive entities before probabilistic models interpret context, which makes common regex patterns a durable skill across NLP, machine learning, web scraping, and log analysis.
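The extraction and normalization patterns from the list (simple HTML tags, duplicate words, slugs, and phone splitting) map naturally onto re.sub and capture groups. The following is a minimal sketch assuming Python's standard re module; the function names and the dictionary keys returned by split_phone are hypothetical.

```python
import re
from typing import Dict, Optional

TAG_RE = re.compile(r"<[^>]+>")                              # simple HTML tags
DUP_WORD_RE = re.compile(r"\b(\w+)\s+\1\b", re.IGNORECASE)   # "the the"
SLUG_UNSAFE_RE = re.compile(r"[^a-z0-9]+")                   # runs of non-slug chars
PHONE_RE = re.compile(r"^\(?(\d{3})\)?[-\s]?(\d{3})[-\s]?(\d{4})$")


def strip_tags(html: str) -> str:
    """Remove simple tags and collapse whitespace (not a full HTML parser)."""
    return " ".join(TAG_RE.sub(" ", html).split())


def collapse_duplicates(text: str) -> str:
    """Replace an adjacent repeated word with its first occurrence."""
    return DUP_WORD_RE.sub(r"\1", text)


def slugify(title: str) -> str:
    """Lowercase first, because the class only whitelists a-z and 0-9."""
    return SLUG_UNSAFE_RE.sub("-", title.lower()).strip("-")


def split_phone(number: str) -> Optional[Dict[str, str]]:
    """Split a US-style 10-digit number into its three captured groups."""
    m = PHONE_RE.fullmatch(number)
    if m is None:
        return None
    area_code, prefix, line_number = m.groups()
    return {"area_code": area_code, "prefix": prefix, "line_number": line_number}


print(slugify("Regex Patterns for AI: 2026 Guide!"))  # regex-patterns-for-ai-2026-guide
print(split_phone("(555) 123-4567"))
```

For nested or malformed markup, an HTML parser is safer than the simple tag pattern used in strip_tags.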

What Are the Best Regex Tools and Libraries for AI Development?

The best regex tools and libraries for AI development are platforms and engines that support regex pattern matching, automation, validation, and high-performance text processing across AI workflows such as NLP, data cleaning, and large-scale data extraction. These tools range from AI-powered regex generators to high-performance regex engines and debugging environments, enabling AI practitioners to build, test, optimize, and deploy regex patterns efficiently within regex NLP, regex machine learning, and regex data preprocessing pipelines.

The best regex tools and libraries for AI development include:

- ChatGPT / LLMs (AI-powered regex generation). LLMs generate, explain, and optimize regex patterns from natural-language prompts, making regex creation faster and more accessible. They are widely used in AI workflows because they reduce manual effort, assist debugging, and speed up the development of text preprocessing and data cleaning patterns, although their output still needs testing.

- RegexGPT. RegexGPT converts plain English instructions into complete regex solutions with explanations and code examples. This tool is valuable for AI practitioners because it bridges human intent and regex pattern matching logic, improving usability and learning.

- RegexAI. RegexAI generates multiple candidate regex patterns based on user intent and explores the solution space. Its ability to refine patterns using contextual understanding makes it useful for complex extraction tasks in regex NLP workflows.

- AutoRegex. AutoRegex automates regex creation using AI, which can significantly reduce development time and help improve pattern accuracy. It is particularly useful for regex data cleaning and feature extraction tasks in AI pipelines.

- RightBlogger AI Regex Generator. This tool simplifies regex creation through a user-friendly interface and supports multiple programming languages. It helps AI developers quickly generate validation and extraction patterns without deep regex expertise.

- AI-powered documentation tools. These tools automatically generate explanations and documentation for regex patterns, improving maintainability and knowledge transfer in AI teams working with complex regex systems.

- Hyperscan (High-performance regex engine). Hyperscan is a high-throughput regex matching library capable of scanning large datasets at extremely high speeds. It is ideal for AI systems processing massive text streams such as logs, cybersecurity data, and real-time analytics.

- RE2 (Safe and scalable regex engine). RE2 provides guaranteed linear-time execution and prevents catastrophic backtracking, making it reliable for production AI systems handling untrusted input and large-scale data processing.

- JPCRE2 (PCRE2 wrapper with JIT support). JPCRE2 offers advanced regex features with just-in-time compilation for faster execution. It is suitable for complex pattern matching in multilingual and large-scale AI datasets.

- Rust regex library. Rust’s regex engine uses finite automata for predictable linear performance. It is highly efficient for large-scale regex machine learning workflows and integrates well with high-performance systems.

- Boost.Xpressive (C++ regex library). Boost.Xpressive provides template-based regex construction in C++, useful for integration-heavy environments, though less optimal for high-performance AI workloads.

- Lightgrep (forensics-focused regex tool). Lightgrep specializes in multi-pattern matching over large binary datasets. It is valuable for AI use cases like anomaly detection, forensic analysis, and large-scale data mining.

- Super Expressive (JavaScript regex builder). Super Expressive allows developers to build regex patterns using readable, composable syntax. This improves maintainability and reduces complexity in AI-driven web applications.

- Regex+ (JavaScript regex tool). Regex+ provides programmatic regex construction, although it is less documented for AI-specific use cases than the other libraries listed here.

- Python re module (core AI regex library). Python’s built-in regex library is the most widely used interface for regex in AI, supporting text preprocessing, tokenization, and data cleaning in NLP pipelines.

- regex.rip (ReDoS checker). regex.rip identifies performance vulnerabilities in regex patterns, helping AI systems avoid inefficient or unsafe expressions.

- recheck (ReDoS detection library). recheck analyzes regex patterns for denial-of-service vulnerabilities, ensuring safe deployment in AI systems handling external inputs.

- vuln-regex-detector. This tool detects catastrophic backtracking and validates regex safety, which is critical for secure AI data pipelines.

- SafeRegex. SafeRegex tests regex patterns for performance risks, supporting secure and stable regex deployment in production AI environments.

- Regex Crossword (learning tool). Regex Crossword helps developers learn regex concepts interactively, supporting foundational skill-building for AI practitioners.

- Regex Learn. Regex Learn provides structured tutorials and exercises, helping practitioners understand regex pattern matching for NLP and preprocessing tasks.

- Regulex (regex visualizer). Regulex visualizes regex patterns as diagrams, making it easier to understand complex AI-generated regex.

- RegexBuddy (professional regex tool). RegexBuddy offers advanced debugging, testing, and multi-engine support, making it useful for refining regex in production AI systems.

- RegexMagic (regex generator). RegexMagic generates regex patterns from examples, simplifying pattern creation for validation and extraction tasks.

- BuildRegex (GUI builder). BuildRegex provides a graphical interface for constructing regex, useful for beginners and rapid prototyping.

- Regular-Expressions.info (learning resource). This site offers comprehensive tutorials and references, supporting a deeper understanding of regex concepts used in AI.

- RexEgg (advanced regex guide). RexEgg focuses on optimization and advanced regex techniques, helping AI practitioners write efficient patterns.

- RegExr (testing and learning tool). RegExr provides interactive regex testing and explanations, useful for debugging and refining patterns.

- Debuggex (visual debugging tool). Debuggex helps visualize regex execution flow, aiding in debugging complex patterns used in AI workflows.

- Regex101 (testing and debugging platform). Regex101 is one of the most widely used tools for testing, debugging, and explaining regex patterns, especially for validating AI-generated regex outputs.

In practice, AI practitioners use a combination of these tools rather than a single solution. AI-powered generators accelerate regex creation, high-performance engines such as Hyperscan and RE2 handle large-scale processing, and testing tools such as Regex101 help confirm correctness and safety. This combination reflects how regex pattern matching integrates into modern AI pipelines for preprocessing, validation, and scalable text analysis.
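Dedicated checkers such as recheck or vuln-regex-detector analyze patterns far more thoroughly, but the idea behind static ReDoS screening can be shown with a deliberately crude Python sketch. The heuristic below is an assumption for illustration, not the algorithm any of the listed tools uses: it only flags a parenthesized group that contains + or * and is itself quantified, the classic (a+)+ shape, and it misses other risky constructs.

```python
import re

# Toy heuristic (illustrative only): a group containing + or * that is itself
# followed by +, * or {n,m}, i.e. a nested quantifier such as (a+)+.
# It does not detect alternation-based cases such as (a|aa)+ or nested parentheses.
NESTED_QUANTIFIER_RE = re.compile(r"\((?:[^()\\]|\\.)*[+*](?:[^()\\]|\\.)*\)[+*{]")


def looks_redos_prone(pattern: str) -> bool:
    """Cheap pre-screen; a False result does NOT prove the pattern is safe."""
    return NESTED_QUANTIFIER_RE.search(pattern) is not None


for candidate in [r"^(a+)+$", r"^(\w+\s?)*$", r"^\d{3}-\d{2}-\d{4}$"]:
    print(candidate, looks_redos_prone(candidate))
```

A screen like this can run in CI before patterns reach a full ReDoS analyzer or a linear-time engine such as RE2.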

What Are the Best Practices for Using Regex in AI Projects?

The best practices for using regex in AI projects are designing readable patterns, avoiding common regex failures, testing and debugging every pattern, and optimizing performance for scale. These best practices matter because regex pattern matching directly affects data quality, preprocessing reliability, system safety, and execution speed in regex NLP, regex data cleaning, and regex machine learning workflows.

The 4 best practices for using regex in AI projects are listed below.

- Pattern design and readability. Pattern design and readability improve maintainability, reduce implementation errors, and make regex patterns easier to verify across evolving AI workflows. Regex is difficult to read and troubleshoot when expressions become large, so AI practitioners should keep patterns focused, use explicit subpatterns, and document complex logic with comments or verbose syntax where supported. This practice matters because well-structured regex is easier to debug, safer to review, and more reliable when used with AI-assisted regex generation.

- Avoiding common pitfalls. Avoiding common pitfalls prevents catastrophic backtracking, regex denial of service (ReDoS), and unstable behavior on unconstrained inputs. AI practitioners should avoid overly broad wildcards such as .* when more specific character classes or lazy quantifiers can be used, escape literal metacharacters correctly, and prefer validated patterns for sensitive entities such as URLs, IP addresses, and identifiers. This practice matters because weak regex design can cause production failures, security issues, and unreliable preprocessing.

- Testing and debugging. Testing and debugging ensure that regex patterns behave correctly across expected, edge-case, and adversarial inputs. AI practitioners should validate every pattern against passing and failing examples, compare outputs after each update, and use deterministic tools such as Regex101 or equivalent test frameworks to verify intended behavior. This practice matters because regex and AI-generated regex both introduce risk, and only systematic testing confirms correctness.

- Performance optimization. Performance optimization reduces execution time, limits memory overhead, and prevents regex bottlenecks in high-volume AI systems. AI practitioners should use anchors such as ^ and $ when full-string matching is required, pre-compile reusable patterns, reduce backtracking with specific character classes and, where the engine supports them, atomic groups or possessive quantifiers, and use linear-time engines such as RE2 when scale and safety matter. This practice matters because inefficient regex can exhaust CPU and memory resources in large-scale log parsing, scraping, and preprocessing pipelines.
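The four practices can be combined in one short Python sketch: a pattern written with re.VERBOSE for readability, compiled once for performance, applied with fullmatch() when the whole field must match (the equivalent of ^ and $ anchors), and checked against a small table of passing and failing examples. The timestamp format and all names are illustrative assumptions.

```python
import re
from typing import List, Tuple

# Readability: re.VERBOSE ignores whitespace and allows # comments in the
# pattern. Performance: the pattern is compiled once and reused.
TIMESTAMP_RE = re.compile(
    r"""
    (?P<date>\d{4}-\d{2}-\d{2})            # YYYY-MM-DD (shape only)
    [T\ ]                                  # 'T' or an escaped literal space
    (?P<time>(?:[01]\d|2[0-3]):[0-5]\d)    # 24-hour HH:MM
    """,
    re.VERBOSE,
)


def find_timestamps(text: str) -> List[Tuple[str, str]]:
    """Extraction inside free text: unanchored finditer()."""
    return [(m["date"], m["time"]) for m in TIMESTAMP_RE.finditer(text)]


def is_timestamp_field(value: str) -> bool:
    """Validation of a whole field: fullmatch() acts like ^...$ anchors."""
    return TIMESTAMP_RE.fullmatch(value) is not None


# Testing: a small table of passing and failing examples, rerun on every change.
SHOULD_MATCH = ["2026-01-15T09:30", "2026-01-15 23:59"]
SHOULD_NOT_MATCH = ["2026-01-15T24:00", "2026-01-15T09:30 extra", "15/01/2026 09:30"]

for sample in SHOULD_MATCH:
    assert is_timestamp_field(sample), sample
for sample in SHOULD_NOT_MATCH:
    assert not is_timestamp_field(sample), sample
```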

These best practices work together because a readable design improves verification, pitfall avoidance improves safety, testing improves correctness, and optimization improves scalability. Regex projects in AI perform best when patterns are treated as production logic rather than quick text hacks, which keeps preprocessing deterministic, explainable, and efficient.

What Are the Regex Examples and Use Cases in 2026?

The regex examples and use cases in 2026 include validation, extraction, cleaning, routing, filtering, log analysis, SEO segmentation, and AI preprocessing tasks that require deterministic text pattern control. Regex remains widely used because regex patterns solve repetitive text-processing problems faster and with lower resource cost than AI-based systems when data is structured or semi-structured.

The main regex examples and use cases in 2026 are listed below.

- Validating user input. Regex validates inputs such as email addresses, phone numbers, passwords, and dates. This use case improves data integrity and prevents malformed values from entering AI systems and applications.

- Parsing log files. Regex extracts timestamps, status codes, IP addresses, and error messages from logs. This use case supports observability, debugging, anomaly detection, and security monitoring.

- Performing search-and-replace. Regex standardizes repeated text transformations across datasets, scripts, editors, and ETL pipelines. This use case supports normalization, reformatting, and bulk cleanup.

- Extracting data from strings. Regex captures structured entities such as product names, dates, URLs, identifiers, and contact information from unstructured text. This use case supports database population, reporting, and feature engineering.

- Input sanitization and text cleaning. Regex removes HTML tags, special characters, duplicated spaces, and unwanted noise from raw text. This use case is central to regex text preprocessing and NLP preparation.

- Automating repetitive tasks. Regex powers scripting tasks such as file renaming, format conversion, content cleanup, and repetitive reporting. This use case reduces manual effort and improves consistency.

- Filtering and grouping data. Regex segments URLs, query types, campaigns, or content groups based on pattern rules. This use case supports analytics, reporting, and SEO categorization.

- Identifying and fixing issues. Regex detects broken links, 404 patterns, crawl leaks, and formatting errors in websites and datasets. This use case supports site health and operational quality control.

- Removing duplicate content. Regex identifies repeated tokens, duplicate phrases, and structurally repeated strings. This use case improves content cleanliness and reduces redundancy in datasets.

- Powering AI tools. Regex prepares clean, structured input for AI systems through entity extraction, normalization, and rule-based validation. This use case improves model input quality and lowers preprocessing noise.

- URL routing in web frameworks. Regex defines flexible path rules for request routing in applications. This use case supports dynamic URL handling and structured application logic.

- SEO-specific use cases. Regex filters Search Console data, groups analytics paths, extracts page elements in crawlers, and isolates bots or error patterns in logs. This use case supports search analysis and technical SEO workflows.
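For the log-parsing use case, named groups turn each line into a structured record that can be counted or filtered. This sketch assumes Apache-style Common Log Format lines; the sample lines and function names are illustrative, and a different log format would need its own pattern.

```python
import re
from collections import Counter
from typing import Dict, Iterable, List

# Named groups for an Apache-style Common Log Format line (assumed format).
LOG_RE = re.compile(
    r'(?P<ip>\d{1,3}(?:\.\d{1,3}){3}) \S+ \S+ '
    r'\[(?P<timestamp>[^\]]+)\] '
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<size>\d+|-)'
)


def parse_log(lines: Iterable[str]) -> List[Dict[str, str]]:
    """Return one dict per well-formed line; malformed lines are skipped."""
    records = []
    for line in lines:
        m = LOG_RE.match(line)
        if m:
            records.append(m.groupdict())
    return records


sample = [
    '127.0.0.1 - - [10/Oct/2000:13:55:36 -0700] "GET /apache_pb.gif HTTP/1.0" 200 2326',
    '10.0.0.7 - - [10/Oct/2000:13:56:01 -0700] "POST /api/login HTTP/1.1" 500 -',
    "not a log line",
]
records = parse_log(sample)
status_counts = Counter(r["status"] for r in records)          # status code histogram
server_errors = [r["path"] for r in records if r["status"].startswith("5")]
print(status_counts, server_errors)
```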

Regex use cases in 2026 remain strong because regex handles exact formats with speed, transparency, and low infrastructure cost. AI systems add value for ambiguity and semantics, but regex continues to dominate fixed-format validation, extraction, and filtering tasks.

When Is Regex Faster Than AI-Based Extraction?

Regex is faster than AI-based extraction when the data follows predictable, structured, or semi-structured patterns that can be defined explicitly. Regex executes deterministic rule matching in milliseconds or seconds, while AI-based extraction requires model inference, which is slower and more resource-intensive. This difference matters most in high-volume workflows such as log parsing, structured medical extraction, form validation, and large-scale preprocessing.

How much faster is regex than AI-based extraction on structured tasks? Regex can be thousands to tens of thousands of times faster than AI-based extraction on structured datasets. In the reported examples, regex processed 1,000 entries in 0.2 seconds while an AI-based method required 37 minutes (2,220 seconds, roughly 11,100× slower), and regex processed 7,764 reports in 1.45 seconds while an AI-based method required 26,686.44 seconds (roughly 18,400× slower). This speed difference matters because regex supports near real-time throughput at much lower compute cost.
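A simple way to check regex throughput on a given machine is to time one pass over synthetic data, as in this Python sketch. It does not reproduce the benchmarks above: the entries are made up, the timing depends on hardware, and the names are illustrative.

```python
import re
import time
from typing import List, Tuple

DATE_RE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")  # compiled once, reused


def time_extraction(entries: List[str]) -> Tuple[int, float]:
    """Return (number of dates found, elapsed seconds) for one pass."""
    start = time.perf_counter()
    found = [m.group(0) for entry in entries for m in DATE_RE.finditer(entry)]
    return len(found), time.perf_counter() - start


# 1,000 synthetic entries, matching the size of the first reported example.
entries = [f"Entry {i}: visit on 2026-01-{i % 28 + 1:02d}, status ok" for i in range(1000)]
count, seconds = time_extraction(entries)
print(f"{count} dates extracted in {seconds * 1000:.2f} ms")
```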

Why does regex outperform AI on predictable text patterns? Regex outperforms AI on predictable text patterns because regex matches explicit structures directly without semantic reasoning overhead. Patterns such as BI-RADS scores, phone numbers, dates, p-values, gene names, and ingredient lists are well-suited to deterministic matching. This advantage matters because AI systems add latency and cost without improving outcomes when the text structure is already well defined.
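Two of the predictable formats named above, BI-RADS scores and p-values, can be sketched as follows. The patterns assume common phrasings such as "BI-RADS 4B" or "p < 0.05", and BI-RADS categories 0 to 6 with 4A/4B/4C subcategories; the sample report is made up, and real clinical text would need patterns validated against local reporting conventions.

```python
import re

# BI-RADS assessment categories 0-6, with optional 4A/4B/4C subcategory.
BIRADS_RE = re.compile(
    r"\bBI-?RADS(?:\s+category)?\s*[:\-]?\s*([0-6][ABC]?)\b", re.IGNORECASE
)
# p-values written as "p < 0.05", "p=.001", "P >= 0.1" (longest operators first).
P_VALUE_RE = re.compile(r"\bp\s*(<=|>=|<|>|=)\s*(0?\.\d+)", re.IGNORECASE)

report = (
    "Irregular mass, left breast. BI-RADS 4B. "
    "Difference was significant (p < 0.05); secondary endpoint p=.12."
)
print(BIRADS_RE.findall(report))   # ['4B']
print(P_VALUE_RE.findall(report))  # [('<', '0.05'), ('=', '.12')]
```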

When should AI-based extraction be preferred instead of regex? AI-based extraction should be preferred when the text is unstructured, inconsistent, context-dependent, or difficult to define through explicit pattern rules. AI handles semantic variation, fuzzy phrasing, and evolving formats more effectively than regex. This tradeoff matters because regex provides speed and precision for fixed patterns, while AI provides adaptability for messy language.

Does Regex Work with Large Language Models?

Yes, regex works with Large Language Models (LLMs) because regex and LLMs solve different parts of the text-processing pipeline and complement each other. Regex provides deterministic validation, extraction, and formatting, while LLMs provide contextual understanding, generation, and semantic interpretation. This combination matters because AI systems perform better when probabilistic reasoning is constrained or verified by exact rules.

How do regex and LLMs work together in practice? Regex and LLMs work together by letting the LLM interpret or locate relevant content and letting regex extract or validate the structured parts. For example, an LLM can identify the relevant section of an invoice or report, while regex extracts dates, totals, IDs, phone numbers, or URLs from that section. This workflow matters because it combines semantic flexibility with deterministic precision.
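The invoice workflow can be sketched in Python with the model step replaced by a placeholder. fake_llm_locate is a hypothetical stand-in for an LLM call that returns the relevant section, and the INV- identifier format is an assumption for illustration; the regex step is what guarantees that extracted values have exact formats.

```python
import re
from typing import Callable, Dict, Optional

# Deterministic field extractors; the INV- prefix is a hypothetical ID format.
FIELD_PATTERNS = {
    "invoice_id": re.compile(r"\bINV-\d{4,}\b"),
    "date": re.compile(r"\b\d{4}-\d{2}-\d{2}\b"),
    "total": re.compile(r"\bTotal:\s*\$?(\d{1,3}(?:,\d{3})*\.\d{2})\b"),
}


def extract_fields(
    document: str, locate_section: Callable[[str], str]
) -> Dict[str, Optional[str]]:
    """Model picks the relevant section; regex pulls exact values from it."""
    section = locate_section(document)
    fields: Dict[str, Optional[str]] = {}
    for name, pattern in FIELD_PATTERNS.items():
        m = pattern.search(section)
        # Use capture group 1 when the pattern defines one, else the whole match.
        fields[name] = (m.group(1) if pattern.groups else m.group(0)) if m else None
    return fields


def fake_llm_locate(document: str) -> str:
    """Hypothetical stand-in for an LLM: return the first block mentioning a total."""
    blocks = document.split("\n\n")
    return next((b for b in blocks if "Total:" in b), "")


doc = (
    "Acme newsletter: our sale ends 2025-12-01.\n\n"
    "Billing summary\nInvoice INV-20931 issued 2026-02-03\nTotal: $1,234.50"
)
print(extract_fields(doc, fake_llm_locate))
```

Because the newsletter date never reaches the regex step, the section choice (the model's job) and the exact value extraction (the regex's job) stay cleanly separated.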

Can Large Language Models generate regex patterns? Yes, Large Language Models (LLMs) can generate regex patterns, explain regex logic, and help refine expressions from natural-language instructions. LLMs are useful for fast prototyping, translation between regex flavors, and debugging support. This capability matters because regex is difficult to write manually, and LLMs reduce development time for many common regex tasks.

Why do LLM-generated regex patterns still require verification? LLM-generated regex patterns still require verification because Large Language Models (LLMs) are probabilistic systems and do not guarantee correctness. A generated pattern can include unintended side effects, miss edge cases, or overmatch data. This limitation matters because deterministic tools such as Regex101 or test suites must confirm that the pattern behaves exactly as intended before production use.
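A minimal verification harness in the spirit of a test suite: compile the candidate pattern, then require it to fully match every positive example and reject every negative one. The candidate below is a hypothetical LLM suggestion for ZIP and ZIP+4 codes that looks plausible but overmatches; function and variable names are illustrative.

```python
import re
from typing import List


def verify_pattern(pattern: str, must_match: List[str], must_not_match: List[str]) -> List[str]:
    """Return human-readable failures; an empty list means every check passed."""
    try:
        compiled = re.compile(pattern)
    except re.error as exc:
        return [f"does not compile: {exc}"]
    failures = []
    for s in must_match:
        if compiled.fullmatch(s) is None:
            failures.append(f"should match but did not: {s!r}")
    for s in must_not_match:
        if compiled.fullmatch(s) is not None:
            failures.append(f"should NOT match but did: {s!r}")
    return failures


MUST_MATCH = ["12345", "12345-6789"]
MUST_NOT_MATCH = ["1234", "123456", "12345-", "12345-67"]

# Hypothetical LLM suggestion for "US ZIP or ZIP+4": looks plausible, overmatches.
llm_candidate = r"^\d{5}-?\d{0,4}$"
print(verify_pattern(llm_candidate, MUST_MATCH, MUST_NOT_MATCH))
# The ZIP pattern from the common-patterns list passes the same checks.
print(verify_pattern(r"^\d{5}(?:-\d{4})?$", MUST_MATCH, MUST_NOT_MATCH))
```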

When is regex better than LLMs inside AI systems? Regex is better than Large Language Models (LLMs) when the task requires exact matching of deterministic formats such as phone numbers, emails, URLs, dates, or other strict identifiers. Regex provides fast, explainable, low-cost control for these patterns, while LLMs are better for fuzzy or contextual extraction. This distinction matters because robust AI systems use regex for structure and LLMs for meaning, not as interchangeable tools.