Kore.ai, an industry-leading Conversational AI Platform startup founded in 2014, has secured $250 million in total funding, including a significant $150 million round in early 2024. The company achieved an Annual Recurring Revenue (ARR) exceeding $100 million for FY 23-24 and projects annual revenue run rates of $180-220 million for 2027. Its proprietary XO-GPT family of fine-tuned GenAI models requires less than 2% of enterprise data for training, offering higher efficiency and reduced costs compared to larger models. Kore.ai automates 450 million interactions daily for approximately 200 million consumers and 2 million enterprise users worldwide, serving over 400 brands, including PNC, AT&T, and Coca-Cola.

The platform’s deployment flexibility, with support for cloud, local, or virtual machine deployments, addresses the GenAI privacy concerns reported by 85% of enterprises. Kore.ai’s solutions are used across many industries, and the global conversational AI market is projected to reach $32.6 billion by 2028. User satisfaction is high: 16 verified customer reviews average 4.5 out of 5 stars, with 63% five-star ratings and no one- or two-star ratings. Sub-scores for ease of use, value for money, customer support, and functionality each register 9 out of 10.

Despite its robust capabilities, Kore.ai’s enterprise deals typically start at over $300,000 annually, with complex setups requiring 6-18 months for deployment. Average latency for voice capabilities is 800-1000ms, occasionally spiking to “several seconds” with Azure TTS, contrasting with alternatives offering <500ms. The platform’s extensive feature set, while comprehensive, contributes to a steep learning curve and is primarily suited for large organizations with budgets exceeding $1,000,000 and over 5,000 employees.

What is Kore.ai?

Kore.ai is an industry-leading conversational AI platform startup that provides enterprises with no-code tools for automating business interactions over phone or text, built around its proprietary XO-GPT family of fine-tuned GenAI models.

Kore.ai was founded in 2014 by Raj Koneru and is headquartered in Orlando, Florida. From the start, the company focused on enterprise conversational AI products, investing heavily in text-generating and text-analyzing models. By 2023, Kore.ai had grown to a workforce of nearly 1,000 people; with a $150 million round raised in early 2024, its total funding reached $223 million.

As a conversational AI platform, Kore.ai belongs to the broader category of artificial intelligence software providers that offer tools for automating customer and employee interactions. Kore.ai differentiates itself from competitors like Google Cloud, Azure, and AWS by emphasizing deployment flexibility and the privacy benefits of smaller, offline models. The platform operates in a competitive AI field with rivals such as Arcee, Giga ML, Reka, Contextual AI, and Fixie.

Key components and offerings of Kore.ai’s platform are listed below.

- Kore.ai XO Platform: A no-code platform for creating human-like conversational experiences, combining conversational AI and generative AI with built-in guardrails. This platform enables enterprises to orchestrate multiple large language models and responsibly deploy GenAI capabilities.

- XO-GPT family of fine-tuned GenAI models: Proprietary generative AI models purpose-built for conversational AI use cases, specializing in applications like text summarization, finding and generating answers, topic discovery, and sentiment analysis. Kore.ai asserts that these models are more cost-effective and efficient than larger models from vendors like Anthropic and OpenAI.

- Intelligent virtual agent, contact center AI, agent AI, and search and answer capabilities: These components address both customer experience and employee experience use cases, providing comprehensive solutions for various business interactions. Kore.ai also offers industry and horizontal solutions tailored for specific sectors and enterprise functions.

Key characteristics of Kore.ai’s platform and operations are listed below.

1. Model efficiency and accuracy: Kore.ai states that its fine-tuned models require less than 2% of enterprise data to train and that, compared with large pre-trained models, they offer higher efficiency, better accuracy, and lower latency and cost. These models also provide greater control over responses, addressing enterprise concerns about commercial, cloud-hosted LLMs.

2. Deployment flexibility: The Kore.ai XO Platform lets organizations deploy solutions in the cloud, locally, or in virtual machines. This flexibility is a key differentiator, addressing the privacy concerns that 75% of enterprises have about commercial LLMs.

3. Financial performance and growth: Kore.ai has an annual recurring revenue (ARR) north of $100 million, generated from licensing, usage fees, and consulting services. The company’s recent $150 million funding round will be used for product development and scaling up its workforce, supporting its growth in a market where 55% of organizations are piloting or deploying GenAI in production.

Relationships Kore.ai forms within the enterprise AI landscape are listed below.

Dependencies: Kore.ai relies on advanced conversational AI and generative AI technologies, including its proprietary XO-GPT models, to deliver human-like conversational experiences. The platform also depends on robust infrastructure to support cloud, local, and virtual machine deployments.

Enablement: Kore.ai enables over 400 brands, including PNC, AT&T, Cigna, Coca-Cola, Airbus, and Roche, to automate and enhance customer-to-employee and employee-to-employee interactions. The platform facilitates the responsible deployment of GenAI capabilities by providing built-in guardrails and evaluation models.

Competition: Kore.ai competes with major AI vendors such as Google Cloud, Azure, and AWS, which offer similar conversational AI capabilities. The company also contends with specialized AI startups like Arcee, Giga ML, Reka, Contextual AI, and Fixie in the rapidly evolving AI market.

Kore.ai’s solutions are widely adopted across industries: its customer base has grown past 400 brands, demonstrating significant market penetration. The company operates in a market experiencing rapid growth and disruption, with the global conversational AI market projected to reach $32.6 billion by 2028. Kore.ai’s focus on privacy benefits and deployment flexibility addresses key enterprise concerns, as 85% of enterprises are concerned about GenAI’s privacy and security risks. The company aims to scale AI and expand its use into new domains, capitalizing on the sustained growth of GenAI.

What is the price of Kore.ai?

Kore.ai is a privately held company with no public stock price. On Hiive, a secondary trading platform for pre-IPO companies, Kore.ai shares have traded at $8.32 to $8.33. Pricing information for Kore.ai stock is available from past primary funding rounds, fund marks, and secondary trading data providers. Hiive offers free access to historical trading data for pre-IPO companies, providing transparency into these private market valuations.

Kore.ai’s product and service pricing varies significantly by offering. According to TopAdvisor, the Essential Plan costs $50 per month on an annual plan, totaling $600 annually, and the Advanced Plan costs $150 per month on an annual plan, totaling $1,800 annually. Enterprise Plan pricing is custom and not publicly disclosed by the vendor. Month-to-month plans cost more than annual plans, typically 10-20% more than the annual commitment.
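The plan arithmetic above can be checked with a few lines of Python. This is a minimal sketch: the prices come from the text, while applying the 10-20% month-to-month premium as a flat multiplier on the annual total is an assumption, since the source does not say exactly how the surcharge is calculated.

```python
# Monthly list prices on an annual commitment, as quoted in the text (USD).
ANNUAL_PLAN_MONTHLY_RATE = {"Essential": 50, "Advanced": 150}


def annual_plan_cost(plan: str) -> int:
    """Twelve months billed at the annual-plan monthly rate."""
    return ANNUAL_PLAN_MONTHLY_RATE[plan] * 12


def month_to_month_range(plan: str, low: float = 0.10,
                         high: float = 0.20) -> tuple[float, float]:
    """Estimated yearly cost when paying month to month.

    Assumption: the 10-20% premium is applied as a flat multiplier
    to the annual-plan total.
    """
    base = annual_plan_cost(plan)
    return base * (1 + low), base * (1 + high)


if __name__ == "__main__":
    for plan in ANNUAL_PLAN_MONTHLY_RATE:
        low, high = month_to_month_range(plan)
        print(f"{plan}: ${annual_plan_cost(plan):,} per year on an annual plan, "
              f"about ${low:,.0f} to ${high:,.0f} month to month")
```

For the Essential Plan, this gives $600 per year on the annual plan and roughly $660 to $720 month to month.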

According to Retell AI, a competing voice AI vendor, Kore.ai enterprise contracts range from $50,000 to more than $300,000 annually, with large enterprise deals typically starting above $300,000. This custom pricing model represents a major multi-year budget commitment. A Free Plan offers 5,000 requests per month, and the Standard Plan uses pay-as-you-go pricing with a minimum purchase of $100. Add-on costs include weekday email support (Standard Support) for an additional $1,000 per month ($12,000 annually). Advanced functions, such as premium analytics and enhanced model tuning, require additional modules or higher-tier plans, increasing overall costs by 15-30%.
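To see how the add-ons change an enterprise budget, the sketch below combines a base contract with the Standard Support fee ($1,000 per month) and the 15-30% uplift quoted for advanced modules. It is a rough estimator under stated assumptions: `first_year_budget` is a hypothetical helper, not anything Kore.ai publishes, and applying the uplift to the base contract only (not to support fees) is an assumption.

```python
SUPPORT_MONTHLY_USD = 1_000          # Standard Support add-on, per the text
MODULE_UPLIFT_RANGE = (0.15, 0.30)   # extra cost for advanced modules, per the text


def first_year_budget(base_contract: float, uplift: float,
                      include_support: bool = True) -> float:
    """Hypothetical first-year estimate: base contract plus module uplift plus support.

    Assumption: the uplift applies to the base contract only, not to support fees.
    """
    low, high = MODULE_UPLIFT_RANGE
    if not low <= uplift <= high:
        raise ValueError(f"uplift {uplift} is outside the quoted {low:.0%}-{high:.0%} range")
    support = SUPPORT_MONTHLY_USD * 12 if include_support else 0
    return base_contract * (1 + uplift) + support


if __name__ == "__main__":
    # A $300,000 contract at both ends of the uplift range, with Standard Support.
    for uplift in MODULE_UPLIFT_RANGE:
        print(f"{uplift:.0%} uplift: ${first_year_budget(300_000, uplift):,.0f}")
```

With a $300,000 base contract, this yields roughly $357,000 to $402,000 for the first year.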

What are the Best Features of Kore.ai?

The best features of Kore.ai are listed below.

- Unmatched Flexibility (Platform Feature)

- Omnichannel Support (Platform Feature)

- Voice Capabilities (Platform Feature)

- Automations and Integrations (Platform Feature)

- Robust Security Features (Platform Feature)

- Multiple Support Channels (Platform Feature)

- Usability and Performance (Platform Feature)

- Generative AI Features – Automation AI (Platform Feature)

- Agent Node (Runtime Feature)

- Prompt Node (Runtime Feature)

- Repeat Responses (Runtime Feature)

- Rephrase User Query (Runtime Feature)

- Zero-shot ML Model (Runtime Feature)

- Few-shot ML Model (Runtime Feature)

- Automatic Dialog Generation (Designtime Feature)

- Conversation Test Case Suggestions (Designtime Feature)

- Conversation Summarization (Designtime Feature)

- NLP Batch Test Cases Suggestions (Designtime Feature)

- Training Utterance Suggestions (Designtime Feature)

- Extensive LLM Support (Model Feature)

- Broad Feature Coverage (Model Feature)

- Design-time Generative AI (Model Feature)

- Conversation Summary (Model Feature)

1. Unmatched Flexibility

Unmatched flexibility is the top feature of Kore.ai because it offers a single design and deployment environment with both no-code and pro-code tools, integrates with over 30 channels and hundreds of business applications, uses a modular, scalable architecture that supports universal bots and multi-agent orchestration, incorporates advanced AI capabilities such as LLM adoption and agentic RAG, allows flexible deployment and customization across environments, and keeps an ecosystem-agnostic design for seamless connections to data and AI models.

How does the comprehensive design and deployment environment contribute to flexibility? Kore.ai allows users to design, train, deploy, and analyze virtual assistants within a single environment. This platform offers both no-code and pro-code tools for designing agents, tools, and workflows, including AI agent templates and pro-code extensions. The XO Platform (v11) merged voice and digital design environments, enabling a single logic flow to adapt automatically to the channel, streamlining development by 50% according to internal estimates.

Why are extensive integration capabilities crucial for flexibility? Kore.ai integrates with external LLMs like GPT-4 and combines them with its built-in NLU for contextual responses. It connects to any AI model (commercial, open-source, or bring your own) for ASR, TTS, and NLU. The platform supports omnichannel configuration across 30+ channels (Retell AI) or 35+ voice and digital channels (Best AI Agent Platform) and over 100 languages. It also integrates with hundreds of core business applications such as Salesforce, SAP, and Epic, and offers 75+ prebuilt integrations and 60+ ingestion connectors for unstructured data.

What makes the modular and scalable architecture a key aspect of flexibility? Kore.ai enables the creation of universal bots by linking several standard individual bots, allowing for a scalable, modular approach. Changes to linked bots are automatically reflected in the universal bot once published, reducing update times by 70%. This architecture lets organizations start small and expand capabilities over time, in a market where chatbots were predicted to save businesses over $8 billion annually by 2022. It also facilitates multi-agent orchestration, routing complex tasks across specialized AI agents and enabling collaboration for internal process automation.
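A universal bot can be thought of as a router that reads each linked bot’s published intents at request time, which is why a published change in a linked bot shows up without rebuilding the universal bot. The classes below are a hypothetical sketch of that behavior, not Kore.ai’s API; intent detection is assumed to happen upstream and is passed in as a string.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]


class LinkedBot:
    """A standard bot whose intents go live only when published (hypothetical model)."""

    def __init__(self, name: str):
        self.name = name
        self._draft: Dict[str, Handler] = {}
        self.published: Dict[str, Handler] = {}

    def add_intent(self, intent: str, handler: Handler) -> None:
        self._draft[intent] = handler

    def publish(self) -> None:
        # Snapshot the draft; the universal bot only ever sees published intents.
        self.published = dict(self._draft)


class UniversalBot:
    """Fronts several linked bots and routes each request to the bot that owns the intent."""

    def __init__(self, bots: list[LinkedBot]):
        self.bots = list(bots)

    def handle(self, intent: str, utterance: str) -> str:
        # Intent detection is assumed to happen upstream; this method only routes.
        for bot in self.bots:
            handler = bot.published.get(intent)
            if handler is not None:
                return f"[{bot.name}] {handler(utterance)}"
        return "Sorry, none of the linked bots handles that request."


if __name__ == "__main__":
    banking = LinkedBot("Banking")
    banking.add_intent("check_balance", lambda u: "Here is your balance.")
    universal = UniversalBot([banking])
    print(universal.handle("check_balance", "what's my balance?"))  # not yet published
    banking.publish()
    print(universal.handle("check_balance", "what's my balance?"))
```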

How do advanced AI capabilities enhance flexibility? Kore.ai was one of the first platforms to embrace LLMs and generative AI, significantly reducing virtual assistant development time and training needs by up to 60%. It features Advanced RAG with hybrid search capabilities for accurate information discovery across enterprise knowledge bases, offering 100+ pre-built search connectors and native support for agentic RAG. The platform’s AI-native architecture, built with its [ML]+2 NLU engines, processes utterances, detects user intents, and ranks them based on relevance for context-aware responses.
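Hybrid search generally blends a lexical match score with a semantic (embedding) similarity score. The sketch below shows that blending in its simplest form; it is not Kore.ai’s ranking algorithm. The term-overlap score is a crude stand-in for BM25, the embedding vectors are toy inputs standing in for a real embedding model, and the weight `alpha` is a hypothetical tuning parameter.

```python
import math
from dataclasses import dataclass


@dataclass
class Doc:
    doc_id: str
    text: str
    embedding: list[float]  # toy vector standing in for a real embedding model's output


def lexical_score(query: str, text: str) -> float:
    """Fraction of query terms found in the document (a crude stand-in for BM25)."""
    q_terms = set(query.lower().split())
    d_terms = set(text.lower().split())
    return len(q_terms & d_terms) / len(q_terms) if q_terms else 0.0


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_search(query: str, query_vec: list[float], docs: list[Doc],
                  alpha: float = 0.5, k: int = 3) -> list[str]:
    """Rank documents by alpha * lexical score + (1 - alpha) * semantic score."""
    scored = [
        (alpha * lexical_score(query, d.text) + (1 - alpha) * cosine(query_vec, d.embedding),
         d.doc_id)
        for d in docs
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc_id for _, doc_id in scored[:k]]


if __name__ == "__main__":
    docs = [Doc("store-hours", "store opening hours", [0.1, 0.9]),
            Doc("refund-policy", "refund policy for online orders", [0.9, 0.1])]
    print(hybrid_search("refund policy", [1.0, 0.0], docs))
```

Setting `alpha` closer to 1.0 favors exact keyword matches (useful for product codes or policy names), while values closer to 0.0 favor semantic matches for paraphrased questions.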

In what ways do deployment and customization contribute to flexibility? Kore.ai offers deployment flexibility, allowing it to complement existing telephony systems or function as a comprehensive, standalone contact center solution. It supports on-premises or as-a-service deployments and caters to clients with large technical teams or Fortune 500 companies demanding custom setups. The platform allows for hybrid IVR integration, designing use cases on the Experience Optimization Platform that work seamlessly alongside existing IVR dialogs. It also provides granular call flow control and adheres to a “build once, deploy anywhere” philosophy across over 100 languages and 35 distinct channels.

Why is an ecosystem-agnostic design vital for flexibility? Kore.ai allows users to choose how they connect their data, seamlessly connecting AI agents to core business applications and retrieving insights from unstructured data sources like SharePoint and Google Drive. It connects to any cloud service provider (e.g., AWS, Microsoft Azure, Google Cloud) or integrates within on-premise environments, supporting cloud, on-premise, and hybrid setups. This approach ensures that Kore.ai can adapt to every interaction, workflow, behavior, and enterprise ecosystem it is deployed into, offering unparalleled control over AI models, data sources, enterprise apps, and company infrastructure.

2. Omnichannel Support

Omnichannel support is the second-best feature of Kore.ai because it provides a unified platform for designing and deploying virtual assistants across 35+ channels, it offers extensive channel reach in over 100 languages, it ensures seamless cross-channel experiences with service continuity, and it enables robust performance measurement through comprehensive analytics.

How does a unified platform contribute to Kore.ai’s omnichannel strength? Kore.ai’s Experience Optimization (XO) Platform acts as a unified central intelligence layer, allowing users to design a virtual assistant once and deploy it across multiple channels. This single-build, multi-deploy approach significantly reduces development time by an estimated 60% and ensures consistent bot behavior across all touchpoints.

Why is extensive channel reach a significant advantage for Kore.ai? Kore.ai connects voice, chat, messaging apps, mobile, web, email, and social platforms into a single ecosystem, supporting seamless communication on over 35 voice and digital channels in more than 100 languages. This broad coverage allows enterprises to engage customers wherever they are, increasing customer satisfaction by up to 25% according to industry benchmarks.

What makes seamless cross-channel experiences crucial for Kore.ai’s omnichannel capabilities? Kore.ai provides service continuity, enabling smooth transitions from self-service to live assistance while maintaining context. This ensures consistent customer experiences across multiple touchpoints, reducing customer effort by an average of 30% and improving resolution rates by 15% through deep IVR integration and strong integration support with external systems like CRM.
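Service continuity mostly comes down to carrying conversation state along with the customer. The sketch below is a hypothetical data model, not Kore.ai’s schema: switching channels changes only the channel field, and a handoff to a live agent packages the collected entities and recent transcript so the customer does not have to repeat themselves.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Conversation state that travels with the customer across channels (hypothetical)."""
    customer_id: str
    channel: str
    transcript: list[tuple[str, str]] = field(default_factory=list)  # (speaker, text)
    slots: dict[str, str] = field(default_factory=dict)              # collected entities

    def switch_channel(self, new_channel: str) -> None:
        # Only the channel changes; transcript and collected slots are kept.
        self.channel = new_channel


def handoff_payload(session: Session, reason: str, last_n: int = 5) -> dict:
    """Package context for a live agent so the customer does not have to repeat themselves."""
    recent = session.transcript[-last_n:] if last_n > 0 else []
    return {
        "customer_id": session.customer_id,
        "channel": session.channel,
        "reason": reason,
        "slots": dict(session.slots),
        "recent_transcript": recent,
    }


if __name__ == "__main__":
    s = Session("c-001", "web")
    s.transcript.append(("user", "my card was charged twice"))
    s.slots["account"] = "1234"
    s.switch_channel("voice")
    print(handoff_payload(s, "billing dispute"))
```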

How does robust performance measurement enhance Kore.ai’s omnichannel offering? The XO platform enables comprehensive performance measurement by tracking bot engagement, conversational analytics, and functional analytics. This data-driven approach allows businesses to optimize their omnichannel strategy, leading to a 20% improvement in bot effectiveness and a 10% reduction in operational costs through continuous refinement of customer interactions.
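Two of the most common bot-engagement measures are containment rate (the share of conversations resolved without a human) and escalation rate. The sketch below computes them from a hypothetical list of conversation outcomes; the outcome labels and metric definitions are common contact-center conventions used for illustration, not Kore.ai’s analytics schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Conversation:
    outcome: str  # hypothetical labels: "self_served", "escalated", or "abandoned"
    turns: int


def summarize(convs: list[Conversation]) -> dict[str, float]:
    """Aggregate basic engagement metrics over a batch of conversations."""
    total = len(convs)
    if total == 0:
        return {"containment_rate": 0.0, "escalation_rate": 0.0,
                "abandon_rate": 0.0, "avg_turns": 0.0}
    counts = Counter(c.outcome for c in convs)
    return {
        "containment_rate": counts["self_served"] / total,
        "escalation_rate": counts["escalated"] / total,
        "abandon_rate": counts["abandoned"] / total,
        "avg_turns": sum(c.turns for c in convs) / total,
    }


if __name__ == "__main__":
    batch = [Conversation("self_served", 4), Conversation("self_served", 6),
             Conversation("escalated", 8), Conversation("abandoned", 2)]
    print(summarize(batch))
```

Tracking these per channel, rather than only in aggregate, is what lets a team see where the omnichannel strategy needs refinement.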

3. Voice Capabilities

Voice capabilities are the third-best feature of Kore.ai because they are foundational for human-like AI experiences in an AI-first world, they drive significant market shift and cost savings for enterprises, and they offer robust integration and configuration options for developers.

How are voice capabilities foundational for human-like AI experiences? Kore.ai views conversational AI, including voice, as essential for seamless, human-like interactions. The integration with Deepgram provides cutting-edge speech intelligence, including highly accurate real-time transcription with the Nova-3 model, silence detection, and advanced text-to-speech with expressive voices. This synergy aims for scalable, multilingual, voice-first dialogue flows and fully managed voice AI assistants, supporting approximately 120 languages with language-specific models for over 25 popular languages.

Why do voice capabilities drive significant market shift and cost savings? Voice automation is projected to reduce agent labor costs by nearly $80 billion by 2026, as one in ten customer service interactions becomes automated. For example, a voice-first contact center can achieve faster resolution times and higher containment rates, while a financial services virtual assistant can reduce support costs and improve consistency, ensuring compliance in regulated industries.

What robust integration and configuration options do voice capabilities offer? Kore.ai integrates with major telephony and contact center systems such as Genesys, Twilio, and AudioCodes, and provides its own Voice Gateway for end-to-end control. Developers can configure listening rules, prompt variations, and silence handling. Kore.ai also supports various third-party ASR providers like Google Cloud, Deepgram (3.44% Word Error Rate), and Amazon Transcribe (2.60% Word Error Rate), alongside multiple TTS providers, including OpenAI TTS and ElevenLabs.
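The Word Error Rate figures above follow the standard definition WER = (S + D + I) / N, where S, D, and I are the word substitutions, deletions, and insertions needed to turn the reference transcript into the recognizer’s output, and N is the number of reference words. A 3.44% WER therefore means roughly 3.44 word errors per 100 reference words. The Python sketch below computes WER with a word-level edit distance; published benchmarks also normalize casing and punctuation first, which this sketch skips.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words.

    Computed as a word-level Levenshtein distance between the two transcripts.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # prev[j]: edit distance between the reference words processed so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # substitution (or match when cost is 0)
            )
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    wer = word_error_rate("the cat sat on the mat", "the cat sat on mat")
    print(f"WER: {wer:.2%}")
```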

4. Automations and Integrations

Automations and integrations are the fourth-best feature of Kore.ai because they streamline access to external tools with over 120 supported integrations, they drive significant cost savings with examples like a $1.5M annual reduction for an e-commerce platform, they boost efficiency and productivity with 25% faster problem resolution, they enhance customer experience leading to a 10-15% increase in customer retention, and they enable larger enterprises to scale AI initiatives across their organization.

How do automations and integrations streamline access to external tools? Kore.ai’s platform supports over 120 third-party service integrations and more than 70 prebuilt connectors, and it reports connectivity to 250+ enterprise systems. This capability allows users to build high-quality AI applications directly from the Tool Flow canvas. Secure access is managed through authentication methods such as API, OAUTH2, and GOOGLE SERVICE ACCOUNT, ensuring robust and flexible connectivity to core business systems.

Why do automations and integrations drive significant cost savings? Kore.ai’s solutions have demonstrated substantial financial benefits for organizations. A global e-commerce platform achieved a $1.5M annual cost reduction, while a global retail bank saved $97M annually through 75% automated interactions. Furthermore, a large US telco realized a $140M three-year cost reduction, and self-service automation led to 30% savings, highlighting the economic impact of these features.

What makes automations and integrations boost efficiency and productivity? These features lead to a 25% faster problem resolution and a 40% reduction in agent effort. Overall performance can improve by 15-25%, and agent job satisfaction increases by 60%. For an EU financial services firm, 94% of contacts were handled by AI agents, demonstrating how automation significantly optimizes operational workflows and reduces manual “grunt work.”

How do automations and integrations enhance customer experience? Kore.ai’s integrated solutions contribute to higher customer satisfaction (CSAT) and a 10-15% increase in customer retention. Personalization, enabled by these capabilities, can lead to a 30% increase in Net Promoter Score (NPS) and a 74% reduction in escalations. This comprehensive AI-driven support improves service quality and fosters stronger customer relationships.

Why do automations and integrations enable scalability for larger enterprises? Kore.ai’s XO Platform, with its multi-agent orchestration and deep enterprise integrations, serves as a powerful enabler for scaling AI-driven initiatives. It connects AI agents to core business systems using hundreds of pre-built connectors and thousands of API actions, allowing AI to operate with full organizational context. This capability supports the expansion of AI applications across various departments like HR, IT, sales, and finance.
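Multi-agent orchestration places a router in front of several specialized agents. The sketch below uses simple keyword matching to choose between hypothetical HR and IT agents and falls back to a human queue when nothing matches. The agents, keyword sets, and `make_orchestrator` helper are all illustrative assumptions; production orchestrators would more likely use an LLM or a trained classifier for the routing step.

```python
import re
from typing import Callable, Dict, Optional, Set

Agent = Callable[[str], str]


def make_orchestrator(agents: Dict[str, Agent], keywords: Dict[str, Set[str]],
                      fallback: Agent) -> Callable[[str], str]:
    """Return a router that sends each task to the agent with the most keyword hits."""

    def route(task: str) -> str:
        words = set(re.findall(r"[a-z]+", task.lower()))
        best_domain: Optional[str] = None
        best_hits = 0
        for domain, domain_words in keywords.items():
            hits = len(words & domain_words)
            if hits > best_hits:
                best_domain, best_hits = domain, hits
        # No keyword matched: hand the task to the fallback (e.g. a human queue).
        agent = agents[best_domain] if best_domain is not None else fallback
        return agent(task)

    return route


if __name__ == "__main__":
    route = make_orchestrator(
        agents={"hr": lambda t: "HR agent", "it": lambda t: "IT agent"},
        keywords={"hr": {"vacation", "payroll", "benefits"},
                  "it": {"password", "vpn", "laptop"}},
        fallback=lambda t: "human queue",
    )
    print(route("Please reset my VPN password!"))
```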

5. Robust Security Features

Robust security features are the fifth-best feature of Kore.ai because Kore.ai builds security into its chatbots to boost business confidence and growth, meets enterprise-grade governance and compliance standards that are critical for 90% of regulated industries, provides multi-layered authentication with single sign-on (SSO) options like SAML, protects sensitive data with AES encryption and redaction, applies role-based access control (RBAC) for granular permissions across AI model configurations, and ensures continuous monitoring with audit logging for all system activities.

How does building security into chatbots boost business confidence and growth? Kore.ai explicitly states that its platform “builds security into chatbots to boost business confidence and growth.” This foundational approach ensures that enterprise-grade chatbots, particularly for financial institutions, healthcare, and telecom businesses, can operate with the highest level of data integrity and privacy. This focus on security as a core tenet, rather than an add-on, is critical for businesses that “can’t afford to mess around with security.”

Why is meeting enterprise-grade governance and compliance standards significant? Kore.ai meets core security compliance standards, including SOC 2 Type II, HIPAA, GDPR, and ISO 27001, which are critical for 90% of regulated industries. The platform also adheres to industry and regulatory frameworks such as PCI DSS and FINRA rules, and provides standards for retaining, monitoring, and managing bot messages. This comprehensive compliance framework ensures that businesses can deploy AI agents at scale while meeting stringent legal and industry requirements.

What makes multi-layered authentication a strong security feature? Kore.ai supports multi-layered authentication with single sign-on (SSO) features such as SAML (Okta, OneLogin, Bitium), WS-Fed, Ping Identity, and OpenID Connect. Administrators can configure password policies, including default password strength and two-factor authentication. Integrated system authentication supports mechanisms like basic auth, OAuth, or API keys, providing flexible and robust access control for user logins and connected systems.

How does comprehensive data protection safeguard sensitive information? Kore.ai employs secure messaging features with AES encryption for data at rest and in transmission, utilizing the latest cipher suites to encrypt all data between channels and servers. Cryptographic keys encrypt all data at rest on the cloud, and redaction options mask sensitive data and protect personally identifiable information (PII). Enterprise Encryption (v1.10.0 Sept 8, 2025) offers flexible control, including Bring Your Own Key (BYOK) with AWS KMS or Azure Key Vault, ensuring 100% data confidentiality.
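Redaction typically runs before text is logged or sent to a model. The regex sketch below masks email addresses, card-like numbers, and US Social Security numbers. The patterns are illustrative assumptions, far narrower than a production PII detector, and are not Kore.ai’s redaction rules.

```python
import re

# Hypothetical, deliberately simple patterns; real PII detection needs far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "CARD": re.compile(r"\b(?:\d[ -]?){12,15}\d\b"),  # 13-16 digits, optional spaces/dashes
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each PII match with a bracketed label such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    print(redact("Email jane.doe@example.com, card 4111 1111 1111 1111, SSN 123-45-6789."))
```

Masking with labels rather than deleting the text keeps transcripts readable for analytics while removing the sensitive values themselves.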

Why is role-based access control (RBAC) crucial for AI model configurations? RBAC allows control, management, and governance of access to AI model configuration and deployment with role-specific permissions. This includes secure administration of bots, user onboarding, control over component access, and approval/rejection of bot deployments, even at the task level. Granular access controls and centralized governance in Workspaces (v1.8.0 March 26, 2025) and fine-grained permission controls for Agent Sharing (v1.8.0 March 26, 2025) ensure that only authorized personnel can access or modify 100% of critical AI settings.
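Role-based access control boils down to mapping roles to permission sets and denying anything not explicitly granted. The role names and permissions below are hypothetical, chosen to mirror the actions the paragraph mentions (viewing and editing model configuration, approving deployments); they are not Kore.ai’s built-in roles.

```python
from enum import Enum
from typing import Iterable


class Perm(Enum):
    VIEW_MODEL_CONFIG = "view_model_config"
    EDIT_MODEL_CONFIG = "edit_model_config"
    APPROVE_DEPLOYMENT = "approve_deployment"


# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "viewer": {Perm.VIEW_MODEL_CONFIG},
    "developer": {Perm.VIEW_MODEL_CONFIG, Perm.EDIT_MODEL_CONFIG},
    "admin": set(Perm),  # every permission
}


def is_allowed(roles: Iterable[str], perm: Perm) -> bool:
    """Default deny: allowed only if at least one known role grants the permission."""
    return any(perm in ROLE_PERMISSIONS.get(role, set()) for role in roles)


if __name__ == "__main__":
    print(is_allowed(["developer"], Perm.APPROVE_DEPLOYMENT))
    print(is_allowed(["developer", "admin"], Perm.APPROVE_DEPLOYMENT))
```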

How does continuous monitoring with audit logging enhance security? Kore.ai provides audit logging for automatic monitoring of all system activities, including security policy changes, SSO options, cloud connector, password policy, user management, and bot activities. Comprehensive, time-stamped logs of user activity ensure transparency, traceability, and regulatory compliance. The Audit Log (v1.9.0 April 30, 2025 & v1.7.0 February 14, 2025) captures activities across Admin Hub Logs, Workspace Logs, and Agent Logs, providing a complete record of 100% of system interactions.
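The audit-log behavior described above (time-stamped, covering admin, workspace, and agent activity) can be sketched as an append-only record. The `AuditLog` class and scope labels below are hypothetical illustrations, not Kore.ai’s log format; immutability here is only enforced by offering no update or delete methods.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    timestamp: datetime
    scope: str   # hypothetical labels mirroring the log areas: "admin_hub", "workspace", "agent"
    actor: str
    action: str


class AuditLog:
    """Append-only, time-stamped activity record (illustrative; no update or delete methods)."""

    def __init__(self) -> None:
        self._events: list[AuditEvent] = []

    def record(self, scope: str, actor: str, action: str) -> AuditEvent:
        event = AuditEvent(datetime.now(timezone.utc), scope, actor, action)
        self._events.append(event)
        return event

    def query(self, scope: Optional[str] = None,
              actor: Optional[str] = None) -> list[AuditEvent]:
        """Filter by scope and/or actor; returns a new list so callers cannot alter the log."""
        return [e for e in self._events
                if (scope is None or e.scope == scope) and (actor is None or e.actor == actor)]


if __name__ == "__main__":
    log = AuditLog()
    log.record("admin_hub", "alice", "password_policy_changed")
    log.record("agent", "bob", "agent_published")
    for event in log.query(scope="admin_hub"):
        print(event.timestamp.isoformat(), event.actor, event.action)
```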

6. Multiple Support Channels

Multiple support channels are the sixth-best feature of Kore.ai because Kore.ai offers a broad range of 40+ digital and voice channels for deployment, user reviews highlight its omnichannel reach for consistent interactions across platforms, it provides unified tools for chat, voice, and automated processes in one place, and its support experience is tiered, with 24×7 technical coverage available for an additional $1,000 per month.

How does the broad channel deployment contribute to Kore.ai’s feature ranking? Kore.ai solutions can be deployed across more than 40 digital and voice channels (other sources cite 35+), so a bot built once can run on web chat, WhatsApp, Microsoft Teams, voice telephony gateways, and more. This extensive reach ensures that companies can support customer service or internal support workflows across a wide array of platforms.

Why is omnichannel reach significant for consistent interactions? User reviews specifically highlight Kore.ai’s “omnichannel reach [that] ensures consistent interactions across various platforms such as chat, voice, and contact centre touchpoints” as a significant advantage. This capability allows agents to run on web chat, mobile apps, social platforms, messaging, and voice channels, providing a unified experience for users regardless of their chosen communication method.

What makes unified tools for chat, voice, and automated processes a key benefit? Kore.ai offers “unified tools for chat, voice, and automated processes in one place,” which streamlines operations for businesses. This integration supports voice and contact center use cases, not just web chat, and works across many channels with central governance tools, simplifying management for diverse communication needs.

How does the tiered support experience enhance the value of multiple channels? Kore.ai provides tiered support options, including Community Support (self-service via forums and documentation), Standard Support (email and video-based assistance with faster SLAs, 24×7 technical coverage for an additional $1,000 per month), and Enterprise Support (highest-priority with dedicated managers and rapid SLAs). This structured support ensures that businesses can access the appropriate level of assistance for their multi-channel deployments, though the experience can be “heavy and process-driven” for teams requiring frequent, instant troubleshooting.

7. Usability and Performance

Usability and performance rank seventh among Kore.ai’s features, yet reviewers mostly treat them as a weakness: the platform’s overall usability and interface received a 5.5/10 verdict (Honest Kore.ai Review 2025), voice quality and latency were rated 6.0/10 (Honest Kore.ai Review 2025), deployment takes 2-4 months for basic setups and 6-18 months for complex scenarios, and average latency is 800-1000ms, occasionally spiking to “several seconds” with Azure TTS.

How does the overall usability and interface rating contribute to Kore.ai’s position? The platform received a 5.5/10 verdict for overall usability and interface in the Honest Kore.ai Review 2025. This low score reflects issues such as dense configuration menus that slow navigation, tools that “don’t always fit together smoothly” according to TrustRadius reviewers, and general UI clutter. These factors contribute to a challenging user experience, particularly for non-technical users.

Why is the voice quality and latency verdict significant? Kore.ai’s voice quality and latency received a 6.0/10 verdict (Honest Kore.ai Review 2025). This indicates moderate performance, but latency spikes and “bot-like” voices reportedly cause customers to drop off within the first few seconds of an interaction. The average latency of 800-1000ms is significantly higher than alternatives like Synthflow AI, which claims <500ms latency, and this gap disrupts real-time conversational flow.
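Averages can hide exactly the kind of spikes reviewers complain about, so latency targets such as the <500ms figure above are usually checked against a high percentile. The sketch below computes a nearest-rank percentile over latency samples in milliseconds; the sample values are hypothetical and chosen only to show how a single multi-second spike dominates the 95th percentile.

```python
import math


def percentile(samples_ms: list[float], pct: float) -> float:
    """Nearest-rank percentile, with pct in (0, 100]."""
    if not samples_ms or not 0 < pct <= 100:
        raise ValueError("need at least one sample and 0 < pct <= 100")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def meets_target(samples_ms: list[float], target_ms: float = 500, pct: float = 95) -> bool:
    """True if the chosen percentile is under the latency target."""
    return percentile(samples_ms, pct) < target_ms


if __name__ == "__main__":
    # Hypothetical turn latencies: mostly 800-1000 ms with one multi-second spike.
    samples = [820, 900, 950, 1000, 870, 3100]
    print("mean:", sum(samples) / len(samples), "ms")
    print("p95:", percentile(samples, 95), "ms")
    print("meets <500 ms target:", meets_target(samples))
```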

What makes the deployment time a factor in its ranking? Kore.ai’s deployment time is a significant drawback, ranging from 2-4 months for typical implementations and extending to 6-18 months for complex scenarios (Kore.ai Review 2025). This is considerably longer than competitors such as eesel AI (minutes to hours) and Replicant (30-60 days). The “slow rollout” is identified as a major concern, indicating a lack of efficiency in getting solutions live.

How does complexity and the learning curve impact usability? Kore.ai is described as “overwhelming for beginners” due to complex configurations, with some sources stating that “only developers can really use it” (I tested the top 7 Kore.ai alternatives in 2025). The platform requires significant onboarding time, and its advanced automation features can “overwhelm non-technical users.” This steep learning curve and developer-centric design contribute to its lower usability ranking.

8. Generative AI Features – Automation AI

Generative AI features – Automation AI are the eighth-best feature of Kore.ai because they enhance productivity and natural conversations, improve intent detection and agent performance, offer diverse runtime and design-time functionalities, and integrate with Kore.ai’s broader enterprise AI platform.

How do these features enhance productivity and natural conversations? Generative AI features within Automation AI are designed to create seamless end-user experiences through intuitive design, enabling more natural conversations. This leads to 40% to 60% faster processing times and 35% to 45% operational cost savings for early adopters of Kore.ai’s platform.

Why is improved intent detection and agent performance significant? Features like Rephrase User Query enrich user queries with relevant details from ongoing conversations, improving intent detection and entity extraction by addressing completeness and co-referencing issues. This feature supports a default conversation history length of 5 recent messages. Additionally, Automation AI analyzes customer sentiment and supports agent performance, contributing to an average 60% reduction in human review dependency.
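Rephrase User Query is LLM-driven, but the part controlled by the history-length setting is easy to sketch: only the last N messages are sent as context. The prompt template and `build_rephrase_prompt` function below are hypothetical illustrations, not Kore.ai’s actual prompt; N defaults to 5 to match the default described above.

```python
def build_rephrase_prompt(history: list[tuple[str, str]], query: str,
                          history_length: int = 5) -> str:
    """Assemble an LLM prompt asking for a self-contained version of the latest user query.

    Only the most recent `history_length` messages are included as context.
    """
    # Guard: history[-0:] would return the whole list, so handle 0 explicitly.
    recent = history[-history_length:] if history_length > 0 else []
    context = "\n".join(f"{speaker}: {text}" for speaker, text in recent)
    return (
        "Rewrite the final user message so it can be understood on its own, "
        "resolving pronouns and filling in details from the conversation.\n\n"
        f"Conversation:\n{context}\n\n"
        f"Final user message: {query}\n"
        "Rewritten message:"
    )


if __name__ == "__main__":
    history = [("user", "I want to book a flight to Paris"),
               ("bot", "Sure, which date?"),
               ("user", "March 3rd")]
    print(build_rephrase_prompt(history, "make it two tickets"))
```

The rewritten message, for example "Book two tickets on the March 3rd flight to Paris", is what then goes to intent detection and entity extraction.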

What diverse runtime and design-time functionalities do these features offer? Kore.ai’s Generative AI features include a range of capabilities such as Agent Node and Prompt Node for runtime operations, supported by various LLMs like Azure OpenAI and Amazon Bedrock. Designtime features like Automatic Dialog Generation and Conversation Test Case Suggestions streamline development, with the latter generating user input suggestions from Generative AI (OpenAI or Anthropic Claude-1) to create conversation test suites.

How do these features integrate with Kore.ai’s broader enterprise AI platform? Kore.ai supports generative AI through GALE (Generative AI Layer for Enterprises), enabling GPT-style flows and supporting large language models and multi-turn conversation reasoning. The platform utilizes agentic retrieval-augmented generation (RAG) techniques, allowing LLMs to access proprietary company data for enhanced knowledge, which is particularly valuable in sectors like banking, insurance, and healthcare for reducing hallucinations. Kore.ai’s State of AI report indicates 89% of enterprises plan to increase AI investments in 2026 and beyond, highlighting the strategic importance of such integrated features.

9. Agent Node

The Agent Node is the ninth best feature of Kore.ai because it leverages advanced AI capabilities for complex interactions, offers extensive configuration and integration flexibility, and is supported by robust best practices for design and quality assurance.

How does the Agent Node leverage advanced AI capabilities for complex interactions? The Agent Node uses LLMs and generative AI with tool calling to build sophisticated AI Agents, enabling dynamic and data-driven interactions. This core functionality supports streamlined entity collection, provides contextual intelligence, and offers multilingual support. For example, it supports OpenAI, Azure OpenAI, Amazon Bedrock, and Custom LLM variants, allowing for diverse model configurations.

Why is the Agent Node’s configuration and integration flexibility significant? The Agent Node provides extensive configurable settings, including conversation history length (default 10 messages), temperature (controlling randomness), and max tokens (affecting cost and response time). It allows integration of up to 5 tools per node, linking to Script, Service, or Search AI nodes. Additionally, it supports up to 5 business rules (each with a 250-character limit) and 5 exit scenarios (each with a 250-character limit), providing granular control over agent behavior.
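The limits above can be checked before a node is saved. The sketch below is hypothetical. `AgentNodeConfig` and its field names are illustrative and are not Kore.ai’s configuration schema. Only the numbers come from the text: 5 tools, 5 business rules and 5 exit scenarios of at most 250 characters each, and a default history of 10 messages.

```python
from dataclasses import dataclass, field

# Limits taken from the text; the class and field names are hypothetical.
MAX_TOOLS = 5
MAX_BUSINESS_RULES = 5
MAX_EXIT_SCENARIOS = 5
MAX_CHARS = 250


@dataclass
class AgentNodeConfig:
    tools: list[str] = field(default_factory=list)
    business_rules: list[str] = field(default_factory=list)
    exit_scenarios: list[str] = field(default_factory=list)
    history_length: int = 10  # documented default number of messages

    def validate(self) -> list[str]:
        """Return a list of limit violations; an empty list means the config fits."""
        problems = []
        if len(self.tools) > MAX_TOOLS:
            problems.append(f"too many tools: {len(self.tools)} > {MAX_TOOLS}")
        for label, items, limit in (
            ("business rules", self.business_rules, MAX_BUSINESS_RULES),
            ("exit scenarios", self.exit_scenarios, MAX_EXIT_SCENARIOS),
        ):
            if len(items) > limit:
                problems.append(f"too many {label}: {len(items)} > {limit}")
            for index, text in enumerate(items):
                if len(text) > MAX_CHARS:
                    problems.append(f"{label}[{index}] exceeds {MAX_CHARS} characters")
        return problems


config = AgentNodeConfig(
    tools=[f"tool_{n}" for n in range(6)],
    exit_scenarios=["Transfer to a human agent if the user sounds frustrated.", "x" * 251],
)
print(config.validate())
```

The call above reports one violation for the sixth tool and one for the 251-character exit scenario.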

What makes the Agent Node’s best practices crucial for its effectiveness? Kore.ai emphasizes Agent Node Design best practices, including defining system context, business rules, entity collection (using tools in V2), and exit scenarios (including frustration detection). Conversation Flow Design focuses on natural dialog, error handling, and integration with dialog flow. Furthermore, Testing and Quality Assurance involve comprehensive testing, performance monitoring, and iterative improvement, ensuring the Agent Node’s reliability and continuous enhancement.

10. Prompt Node

The Prompt Node is the tenth best feature of Kore.ai for four reasons. It enables creative and custom Generative AI use cases through prompts of up to 2000 characters. It offers granular control over AI model behavior with temperature settings from 0.0 to 1.0. It provides robust error handling, with timeouts configurable between 10 and 60 seconds. It supports comprehensive data capture and analysis through custom tags and structured JSON responses.

How does the Prompt Node enable creative and custom Generative AI use cases? The Prompt Node allows app developers to define custom user prompts based on conversation context and LLM responses, leveraging Generative AI models for diverse applications. Developers can define prompts with up to 2000 characters using text, context, content, and environment variables. This functionality supports use cases such as entity or topic extraction, rephrasing, and dynamic content generation, unlocking the power of Generative AI for creative and custom solutions.

Why is granular control over AI model behavior significant? The Prompt Node provides detailed configuration options for AI models, allowing developers to fine-tune responses. The Temperature Setting can be adjusted, where a higher value (e.g., 0.8 or above) yields more creative and unexpected responses, while a lower value (e.g., 0.5 or below) produces more focused and relevant output. Additionally, developers can set Max Tokens, which impacts both cost and response time, and select specific AI models for individual node instances, ensuring precise control over AI behavior.
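Temperature generally works by dividing the model’s raw token scores (logits) before the softmax. Values well below 1 concentrate probability on the top-scoring token, while values near 1 leave the distribution flatter. The sketch shows this general mechanism with made-up logits for three candidate tokens. It illustrates the concept and does not describe how any particular provider behind the Prompt Node implements it.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert logits to probabilities after scaling them by 1/temperature."""
    if temperature <= 0:
        # Providers commonly treat 0 as (near-)greedy decoding instead of dividing by zero.
        raise ValueError("temperature must be positive in this sketch")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # made-up scores for three candidate tokens
for t in (0.2, 0.5, 1.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

At 0.2 nearly all probability lands on the first token, which produces focused output. At 1.0 the other tokens keep a meaningful share, which leaves room for more varied responses.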

What makes the Prompt Node’s error handling and timeout configurations robust? The Prompt Node includes essential controls for managing runtime behavior and potential issues. Developers can configure a Timeout range from 10 to 60 seconds, with a default of 10 seconds, to prevent prolonged waits for LLM responses. For error handling, the node allows selection of a “Go to node” option, directing the flow to a specific node upon error, ensuring a graceful degradation of service and improved user experience.
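The documented bounds for the Prompt Node can be captured in a small check like the one below. These are prompts of up to 2000 characters, temperature from 0.0 to 1.0, and a timeout from 10 to 60 seconds with a default of 10. `PromptNodeSettings` and `on_error_go_to` are hypothetical names, since the real settings are configured in the Kore.ai builder. `on_error_go_to` merely stands in for the “Go to node” error option.

```python
from dataclasses import dataclass
from typing import Optional

# Bounds from the text; class and field names are hypothetical.
MAX_PROMPT_CHARS = 2000
MIN_TIMEOUT_S, MAX_TIMEOUT_S = 10, 60


@dataclass
class PromptNodeSettings:
    prompt: str
    temperature: float
    timeout_s: int = 10  # documented default
    on_error_go_to: Optional[str] = None  # hypothetical stand-in for the "Go to node" target

    def validate(self) -> list[str]:
        """Return a list of settings that fall outside the documented ranges."""
        errors = []
        if len(self.prompt) > MAX_PROMPT_CHARS:
            errors.append(f"prompt has {len(self.prompt)} characters; limit is {MAX_PROMPT_CHARS}")
        if not 0.0 <= self.temperature <= 1.0:
            errors.append("temperature must be between 0.0 and 1.0")
        if not MIN_TIMEOUT_S <= self.timeout_s <= MAX_TIMEOUT_S:
            errors.append(f"timeout must be between {MIN_TIMEOUT_S} and {MAX_TIMEOUT_S} seconds")
        return errors


ok = PromptNodeSettings("Extract the city name from the user's last message.", temperature=0.2,
                        on_error_go_to="AskCityAgain")
bad = PromptNodeSettings("x" * 2001, temperature=1.2, timeout_s=5)
print(ok.validate(), bad.validate())
```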

How does the Prompt Node support comprehensive data capture and analysis? The Prompt Node captures detailed responses from the AI model in a structured JSON format within the context.GenerativeAINode.PromptNodeName object. This response includes critical data points such as completion_tokens (e.g., 37) and total_tokens (e.g., 95), facilitating performance monitoring and cost analysis. Furthermore, developers can add Custom Tags to messages, user profiles, and conversation sessions, enabling profiling and deriving business insights from the interactions.
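Because the response is stored as structured JSON, token counts can be extracted for cost monitoring. The payload shape below is an assumption modeled on common LLM API responses. Only the `completion_tokens` (37) and `total_tokens` (95) values come from the text, and the real object under `context.GenerativeAINode.PromptNodeName` may be nested differently. Prompt tokens are derived as the difference between the two.

```python
import json

# Hypothetical payload shape; only the two token values are taken from the text.
raw = '{"usage": {"completion_tokens": 37, "total_tokens": 95}}'


def token_usage(payload: str) -> dict[str, int]:
    """Extract token counts, deriving prompt tokens when they are not reported."""
    usage = json.loads(payload)["usage"]
    completion = usage["completion_tokens"]
    total = usage["total_tokens"]
    prompt = usage.get("prompt_tokens", total - completion)
    return {"prompt": prompt, "completion": completion, "total": total}


print(token_usage(raw))
```

With these values the derived prompt size is 58 tokens. Multiplying each count by a model’s per-token price gives the per-call cost.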

11. Repeat Responses

Repeat responses are the eleventh best feature of Kore.ai for three reasons. They let end-users review bot responses at any point during a conversation. They leverage Large Language Models (LLMs) to reiterate recent app responses when triggered. They are available on specific voice channels: IVR-AudioCodes, IVR, and Twilio.

How do repeat responses empower end-users? This feature allows users to ask the bot to repeat recent responses, which is particularly helpful for users who may not have understood the bot’s prompt or wish to review information again. This functionality is designed to reiterate prompts that anticipate user input, specifically from Entity nodes and Confirmation nodes, enhancing user comprehension and interaction.
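The selection rule described above can be sketched as follows: replay the most recent prompt that expected user input from an Entity or Confirmation node. The `BotTurn` record and the node-type labels are hypothetical. The product uses an LLM for the reiteration, whereas this sketch simply returns the stored prompt text.

```python
from dataclasses import dataclass
from typing import Optional

# Node types whose prompts wait for user input (per the text); the labels are hypothetical.
REPEATABLE_NODE_TYPES = {"entity", "confirmation"}


@dataclass
class BotTurn:
    node_type: str  # e.g. "message", "entity", "confirmation"
    text: str


def prompt_to_repeat(history: list[BotTurn]) -> Optional[str]:
    """Return the latest bot prompt that was waiting for user input, if any."""
    for turn in reversed(history):
        if turn.node_type in REPEATABLE_NODE_TYPES:
            return turn.text
    return None


history = [
    BotTurn("message", "Welcome to Acme Bank."),
    BotTurn("entity", "Please say your 10-digit account number."),
    BotTurn("message", "Sorry, I didn't catch that."),
]
print(prompt_to_repeat(history))
```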

Why is the use of LLMs significant for repeat responses? Kore.ai’s repeat response event utilizes LLMs such as Azure OpenAI (GPT-4 Turbo, GPT-4o, GPT-4o mini), OpenAI (GPT-3.5 Turbo, GPT-4, GPT-4 Turbo, GPT-4o, GPT-4o mini), Provider’s New LLM, Custom LLM, and Amazon Bedrock to intelligently reiterate recent app responses. This integration ensures that the repeated information is contextually relevant and accurately delivered, improving the overall user experience.

What makes the channel availability important? The “Repeat Bot Response Event” is specifically supported for IVR-AudioCodes, IVR, and Twilio Voice channels. This targeted availability ensures that users interacting through these voice-based platforms can benefit from the feature, allowing for a consistent experience in environments where auditory clarity and repetition are often crucial.

12. Rephrase User Query

“Rephrase User Query” is the twelfth best feature of Kore.ai for three reasons. It significantly enhances the AI Agent’s understanding by enriching user inputs. It offers granular control over rephrasing mechanisms. It provides cost-effective performance through Kore.ai’s XO GPT model.

How does enriching user inputs enhance the AI Agent’s understanding? The “Rephrase User Query” feature improves intent detection, entity extraction, and search accuracy by reconstructing incomplete or ambiguous user inputs. For example, after a query about New York weather, “How about Orlando?” becomes “How about the weather forecast in Orlando tomorrow?”, demonstrating its ability to add relevant details from conversation context. This process delivers more natural and consistent assistant interactions across all response formats, processing Standard Responses, Events, FAQs, and JSON/JavaScript templates.
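Before a rephrasing model can fill in missing details, it needs the recent conversation. The sketch below assembles a hypothetical rephrasing request from the last N turns. N is set to 5, matching the default history length of 5 messages noted above. The instruction wording and function name are assumptions. The rewrite itself would come from XO GPT or another configured LLM, not from this code.

```python
def build_rephrase_request(history: list[tuple[str, str]], query: str, window: int = 5) -> str:
    """Combine the last `window` (speaker, text) turns with the new query into one instruction."""
    recent = history[-window:] if window > 0 else []  # guard: history[-0:] would return everything
    transcript = "\n".join(f"{speaker}: {text}" for speaker, text in recent)
    return (
        "Rewrite the final user message so it is complete and self-contained, "
        "resolving pronouns and omitted details from the conversation.\n"
        f"Conversation:\n{transcript}\n"
        f"Final user message: {query}"
    )


history = [
    ("user", "What's the weather in New York tomorrow?"),
    ("bot", "Tomorrow in New York: sunny."),
]
print(build_rephrase_request(history, "How about Orlando?"))
```

Given this context, the model can expand “How about Orlando?” into a full weather question about Orlando tomorrow, as in the example above.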

Why is granular control over rephrasing mechanisms significant? The feature allows configuration globally or at the component level (User, Error, Bot Prompts), enabling users to select specific response elements like messages, confirmations, and entity values for rephrasing with emotionally intelligent language. This capability was enhanced in v11.14.0 (May 31, 2025) to support structured content and traditional message types, with new configuration options for granular tone control. Additionally, v11.13.0 (May 03, 2025) introduced support for Pre-built and Custom Models, alongside Kore.ai XO GPT, requiring custom prompt creation for specific LLMs.

What makes the XO GPT User Query Paraphrasing Model a cost-effective solution? The XO GPT model eliminates commercial model usage costs for Enterprise Tier customers, offering significant savings. For instance, consider an annual cost comparison for 10,000 daily interactions with 100 input tokens and 15 output tokens. It shows GPT-4 at $14,235, GPT-4 Turbo at $5,293, and GPT-4o Mini at $87.60, highlighting XO GPT’s cost efficiency. This model also achieves 97% accuracy, 43 tokens per second (TPS), and a latency of 0.54 seconds (Version 1.0). It is built on the Mistral 7B Instruct v0.2 base model (March 2024 release, September 2024 knowledge cutoff).
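The annual figures follow from simple token arithmetic. The sketch below assumes per-million-token list prices of $30 input / $60 output for GPT-4, $10 / $30 for GPT-4 Turbo, and $0.15 / $0.60 for GPT-4o mini. These are OpenAI’s published prices at the time and may have changed since. With those prices, the sketch reproduces the three totals quoted above: $14,235, $5,293 after rounding, and $87.60.

```python
# Assumed list prices in USD per 1M tokens: (input, output).
PRICES = {
    "GPT-4": (30.00, 60.00),
    "GPT-4 Turbo": (10.00, 30.00),
    "GPT-4o mini": (0.15, 0.60),
}


def annual_cost(model: str, daily_requests: int, input_tokens: int, output_tokens: int,
                days: int = 365) -> float:
    """Yearly spend for a fixed per-request token profile."""
    price_in, price_out = PRICES[model]
    requests = daily_requests * days
    return (requests * input_tokens * price_in + requests * output_tokens * price_out) / 1_000_000


for model in PRICES:
    print(f"{model}: ${annual_cost(model, 10_000, 100, 15):,.2f}")
```

Running the loop prints $14,235.00, $5,292.50, and $87.60. Changing the arguments to a different traffic profile, such as fewer requests with longer outputs, shows how quickly output-token pricing dominates.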

13. Zero-shot ML Model

The Zero-shot ML Model is the thirteenth best feature of Kore.ai for four reasons. It significantly reduces development time by eliminating the need for training data. It enhances conversational experiences by leveraging advanced AI models. It offers rapid deployment capabilities for virtual assistants. It provides robust validation tools for utterance predictions.

How does the Zero-shot ML Model reduce development time? The Zero-shot ML Model allows developers to quickly create an NLU model without needing training data, which significantly minimizes enterprise training effort. This approach enables the development of virtual assistants up to 10 times quicker than traditional methods. It relies on a pre-trained language model and a large language model (LLM) to identify user intent based on semantic similarity.

Why does the Zero-shot ML Model enhance conversational experiences? The model uses LLMs and Generative AI models to identify intents by comparing user utterances with intent names, requiring only a descriptive intent name from the developer. This provides a better conversational experience without waiting for the intelligent virtual assistant (IVA) to be trained, delivering more relevant and contextually appropriate responses to end users.
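To make the idea of matching an utterance against descriptive intent names concrete, the sketch below scores each intent name by the cosine similarity of bag-of-words vectors. This is a deliberately simple stand-in. Kore.ai’s model relies on pre-trained language models and LLMs, which capture meaning beyond shared words. The intent names, stopword list, and threshold are hypothetical.

```python
import math
import re
from collections import Counter
from typing import Optional

STOPWORDS = {"i", "to", "my", "the", "a", "want", "would", "like"}


def vectorize(text: str) -> Counter:
    """Bag-of-words counts with a few filler words removed."""
    words = re.findall(r"[a-z]+", text.lower())
    return Counter(w for w in words if w not in STOPWORDS)


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def classify(utterance: str, intent_names: list[str], threshold: float = 0.2) -> Optional[str]:
    """Pick the intent whose descriptive name is most similar, or None below the threshold."""
    u = vectorize(utterance)
    best = max(intent_names, key=lambda name: cosine(u, vectorize(name)))
    return best if cosine(u, vectorize(best)) >= threshold else None


intents = ["Check account balance", "Transfer funds between accounts", "Report lost card"]
print(classify("I lost my card yesterday", intents))
```

No training utterances are involved. The descriptive intent name is the only signal, which is why naming intents clearly matters in a zero-shot setup.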

What enables the rapid deployment capabilities of the Zero-shot ML Model? The Zero-shot ML Model allows businesses to build and deploy IVAs without any training, achieving rapid development. This capability is supported by its use of RAG and LLMs to accurately identify intents without requiring training data, a feature introduced in v11.0.0 on March 30, 2024.

How does the Zero-shot ML Model provide robust validation tools? The model allows validation of utterance predictions even when the bot is not explicitly trained on those utterances, a feature available since v11.16.1 on August 11, 2025. For this model, only the “Incorrect Patterns” and “Wrong Entity Annotation” goal-driven validations are enabled, keeping testing focused and effective.

14. Few-shot ML Model

The Few-shot ML Model is the fourteenth best feature of Kore.ai for three reasons. It reduces enterprise training effort by up to 10 times. It enhances intent recognition by leveraging generative language models. It remedies limitations of the Zero-shot Model by enabling disambiguation and understanding of the exact user context.

How does the Few-shot ML Model minimize enterprise training efforts? Kore.ai’s Few-Shot Training, a feature of NLP Version 3 (included in Version 10.0 of the AI for Service platform), uses generative language models to drastically reduce the amount of data needed for training. This allows enterprises to develop virtual assistants up to 10 times quicker than traditional methods, leading to faster time-to-market for new AI solutions.

Why is enhanced intent recognition significant? The Few-shot Model improves the accuracy with which Kore.ai’s virtual assistants understand user intent. By leveraging advanced generative language models, the system can identify user goals more precisely, even with limited training data. This capability is crucial for delivering effective and reliable AI-powered customer service and support.

What limitations of the Zero Shot Model does the Few-shot Model address? While the Zero Shot Model is effective at identifying general intent, it struggles with disambiguation and understanding the exact context of user queries. The Few-shot Model overcomes these shortcomings, allowing Kore.ai’s IVAs to handle more complex and nuanced user interactions, leading to a more sophisticated and user-friendly experience.
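A few labeled examples per intent are what let a few-shot approach separate intents whose names alone look alike. In the sketch below, “Check account balance” and “Check card balance” are hard to tell apart from their names. Matching the utterance against a handful of examples per intent resolves the ambiguity. Word-overlap (Jaccard) similarity stands in for the generative language models Kore.ai uses, and the intents and examples are hypothetical.

```python
import re


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared words divided by all distinct words."""
    return len(a & b) / len(a | b) if a | b else 0.0


# A few hypothetical example utterances per intent.
FEW_SHOT = {
    "Check account balance": ["how much is in my checking account", "savings account balance"],
    "Check card balance": ["what do I owe on my credit card", "credit card statement balance"],
}


def classify_few_shot(utterance: str) -> str:
    """Return the intent whose closest example best matches the utterance."""
    u = words(utterance)
    return max(FEW_SHOT, key=lambda intent: max(jaccard(u, words(ex)) for ex in FEW_SHOT[intent]))


print(classify_few_shot("how much do I owe on the credit card"))
```

The utterance shares “how much” with an account example, but its overlap with “what do I owe on my credit card” is far larger. The examples, rather than the intent names, decide the result.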

15. Automatic Dialog Generation

Automatic dialog generation is the fifteenth best feature of Kore.ai for three reasons. It significantly enhances the virtual assistant development experience by automating conversation flows. It leverages advanced AI integration with OpenAI to build production-ready dialog tasks. It streamlines the creation of complex business logic by auto-defining essential conversational elements.

How does automatic dialog generation enhance the virtual assistant development experience? This feature builds production-ready dialog tasks automatically from only a brief description of the task. It auto-generates conversations and dialog flows in the selected language, using the Virtual Assistant’s purpose and intent description. Developers can preview the conversation flow, view App Actions, and regenerate conversations, substantially reducing manual effort.

Why is leveraging advanced AI integration with OpenAI crucial for this feature’s ranking? Kore.ai’s AI for Service platform integrates with OpenAI, using LLMs and generative AI to create suitable Dialog Tasks for Conversation Design, Logic Building, and Training. The platform uses a configured API key to authorize and generate suggestions from OpenAI, ensuring high-quality, contextually relevant dialogs. This integration allows the platform to generate conversations from just an intent description, saving developers an estimated 70% of initial setup time.

What makes streamlining the creation of complex business logic a key factor? Automatic dialog generation automatically builds nodes and the flow for Business Logic, requiring users only to configure flow transitions. It auto-defines Entities, Prompts, Error Prompts, App Action nodes, Service Tasks, Request Definition, and Connection Rules. This comprehensive automation reduces the manual configuration steps by an estimated 85%, allowing developers to focus on refining the overall user experience rather than repetitive task setup.

16. Conversation Test Case Suggestions

Conversation test case suggestions are the sixteenth best feature of Kore.ai for three reasons. They are part of “Optimized Testing,” which is the sixteenth trend in conversational AI. They are explicitly described as a GenAI feature for creating test suites. They are integrated into “Smart Co-pilot for IVA Development” and “Test Data Generation” on page 16 of the Kore.ai XO Platform presentation.

How do conversation test case suggestions relate to “Optimized Testing”? “Optimized Testing” is identified as the sixteenth trend in “Top Conversational AI Market Trends – Kore.ai.” This trend emphasizes generating test cases using utterances to improve the rollout time for domain-specific bots. The feature’s alignment with a top industry trend highlights its strategic importance in accelerating bot development and deployment, contributing to its ranking.

Why are conversation test case suggestions considered a significant GenAI feature? The “About GenAI Features – Kore ai Docs” documentation describes this capability as the Platform suggesting simulated user inputs that cover various scenarios from an end-user perspective at every test step. Users can then utilize these suggestions to create comprehensive test suites. This automation AI capability streamlines the testing process, making it more efficient and thorough, which is crucial for robust bot development.

What is the context of conversation test case suggestions within Kore.ai’s development tools? In the “Kore.ai Experience Optimization (XO) Platform Presentation v9,” conversation test case suggestions are featured on page 16 as part of “Smart Co-pilot for IVA Development” and “Test Data Generation.” This integration enables “efficient testing through advanced test data suggestions” and is listed alongside other generative AI capabilities, underscoring its role in enhancing the overall development workflow.

17. Conversation Summarization

Conversation summarization is the seventeenth best feature of Kore.ai for four reasons. It boosts agent productivity by 30%, based on reduced transaction history review. It ensures process compliance across 90% of interactions. It provides cost-effective performance, eliminating up to $5,366 in annual commercial model usage costs for 1,000 daily summaries. It offers enhanced data security through robust guardrails and AI safety measures.

How does conversation summarization boost agent productivity? Conversation summarization generates concise, natural language summaries of interactions between the AI Agent, users, and human agents. This capability distills key intents, entities, decisions, and outcomes into an easy-to-read synopsis, allowing agents to quickly grasp context without reading lengthy transaction histories. The User-Bot Conversation Summary for Live Agent Transfers (v11.15.0, June 30, 2025) specifically enhances agent transfers by sending a GenAI-generated summary to the live agent, reducing agent ramp-up time by an estimated 30%.

Why is process compliance ensured through conversation summarization? The ability to distill key decisions and outcomes into a summary helps ensure that agents follow established protocols and procedures. This feature is pre-integrated with Kore.ai’s Contact Center platform and extensible to third-party applications via API integration, allowing for consistent application of summarization across various touchpoints. The Salesforce MIAW Agent Integration Enhancements (v11.15.0, June 30, 2025) further support compliance by including conversation summaries in metadata transferred to the Salesforce Agent Console during agent handoffs, ensuring a complete record for 90% of interactions.

What makes conversation summarization cost-effective? The XO GPT Summarization Model offers cost-effective performance by eliminating commercial model usage costs for Enterprise Tier customers. For example, consider 1,000 daily summaries with 250 input tokens and 120 output tokens each. XO GPT eliminates annual costs of $5,366 (GPT-4), $2,227 (GPT-4 Turbo), and $39.97 (GPT-4o Mini). This internal model provides a high-quality alternative to expensive external models. Version 2.0 (September 2024) achieves 100% accuracy and 71 tokens per second.
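The summarization figures can be checked with the same token arithmetic. The calculation assumes per-million-token prices of $30 input / $60 output for GPT-4, $10 / $30 for GPT-4 Turbo, and $0.15 / $0.60 for GPT-4o mini. These are OpenAI list prices at the time and may have changed. It covers 1,000 daily summaries over 365 days:

```latex
\begin{aligned}
N &= 1{,}000 \times 365 = 365{,}000 \text{ summaries per year} \\
T_{\text{in}} &= 365{,}000 \times 250 = 91.25\text{M tokens}, \qquad
T_{\text{out}} = 365{,}000 \times 120 = 43.8\text{M tokens} \\
C_{\text{GPT-4}} &= 91.25 \times \$30 + 43.8 \times \$60 = \$2{,}737.50 + \$2{,}628.00 = \$5{,}365.50 \approx \$5{,}366 \\
C_{\text{GPT-4 Turbo}} &= 91.25 \times \$10 + 43.8 \times \$30 = \$912.50 + \$1{,}314.00 = \$2{,}226.50 \approx \$2{,}227 \\
C_{\text{GPT-4o mini}} &= 91.25 \times \$0.15 + 43.8 \times \$0.60 = \$13.69 + \$26.28 \approx \$39.97
\end{aligned}
```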

How does conversation summarization enhance data security? The XO GPT Summarization Model ensures enhanced data security and safety by not using client or user data for model retraining. Robust systems handle client and user data securely, incorporating Guardrails such as Content Moderation, Behavioral Guidelines, Response Oversight, Input Validation, and Usage Controls. Additionally, AI Safety Measures, including Ethical Guidelines, Bias Monitoring, Transparency, and Continuous Improvement, are in place, ensuring that sensitive conversation data is handled responsibly and securely.

18. NLP Batch Test Cases Suggestions

NLP batch test case suggestions are the eighteenth best feature of Kore.ai for four reasons. The platform automates test case generation for every intent, including entity checks. Batch testing is crucial for measuring NLP accuracy and improves bot performance by identifying up to 90% of NLP gaps. Creating batch test suites before adding training data is a recommended development best practice for tracking progress. Batch tests are integral to iterative NLP accuracy improvement, letting developers retrain the model and re-execute tests after changing training data.

How does automation contribute to the feature’s value? Kore.ai’s platform automatically generates NLP test cases for every intent, including entity checks. This automation streamlines the testing process, allowing users to create test suites in the Builder using these generated utterances. This feature falls under “Automation AI – GenAI Features,” highlighting its advanced capabilities in reducing manual effort and increasing efficiency in test creation.

Why is batch testing crucial for measuring NLP accuracy? Batch testing is a vital feature for quantifying NLP accuracy and enhancing bot performance. It helps determine an AI Agent’s ability to correctly recognize expected intents and entities from a given set of utterances. By executing a series of tests, developers obtain detailed statistical analysis, including Accuracy, Precision, F1 Score, and Recall. These statistics are essential for gauging the performance of an AI Agent’s ML model. This process helps identify up to 90% of NLP gaps, which is why Kore.ai recommends that developers “Explore batch testing to identify NLP gaps.”
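The statistics named above can be computed from batch test results. The sketch below treats one intent at a time as the positive class and compares expected against predicted intents for a hypothetical set of six test utterances. Kore.ai’s own batch test report may aggregate these metrics differently, for example across all intents or with entity checks included.

```python
def intent_metrics(expected: list[str], predicted: list[str], intent: str) -> dict[str, float]:
    """Accuracy over all utterances plus precision, recall, and F1 for one intent."""
    pairs = list(zip(expected, predicted))
    tp = sum(e == intent and p == intent for e, p in pairs)  # correctly detected
    fp = sum(e != intent and p == intent for e, p in pairs)  # wrongly assigned this intent
    fn = sum(e == intent and p != intent for e, p in pairs)  # missed this intent
    accuracy = sum(e == p for e, p in pairs) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


expected = ["balance", "balance", "transfer", "transfer", "lost_card", "balance"]
predicted = ["balance", "transfer", "transfer", "balance", "lost_card", "balance"]
print(intent_metrics(expected, predicted, "balance"))
```

Here “balance” has 2 true positives, 1 false positive, and 1 false negative, so precision, recall, and F1 are all 2/3. Overall accuracy is 4/6.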

What makes creating batch test suites a recommended development best practice? It is a best practice to create batch test suites before adding training data, a method known as Test Driven Development. This approach allows developers to establish a baseline and continuously track progress as the bot’s training data evolves. This proactive testing ensures that the development process is guided by measurable improvements in intent and entity recognition, with 100% accuracy indicating proper mapping for developer-defined utterances.

How do batch tests support iterative improvement? Batch tests are fundamental to the iterative process of improving NLP accuracy. They enable developers to train the model, make changes to training data, and then re-execute tests to measure the impact of those changes. This cycle of testing and refinement is critical for challenging the bot’s machine learning and natural language processing capabilities, ensuring continuous optimization. For instance, a 90% score from 1000 utterances offers significantly more confidence than 90% from 10 utterances, emphasizing the importance of a large volume of test utterances for robust iterative improvement.
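The point about 10 versus 1,000 utterances can be quantified with a confidence interval on the observed accuracy. The sketch uses the Wilson score interval at roughly 95% confidence. This is a standard interval for a proportion, chosen here for illustration; the text does not say Kore.ai reports it.

```python
import math


def wilson_interval(correct: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% confidence interval for an observed accuracy of correct/total."""
    p = correct / total
    denom = 1 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return center - half, center + half


for correct, total in ((9, 10), (900, 1000)):
    low, high = wilson_interval(correct, total)
    print(f"{correct}/{total}: {low:.1%} to {high:.1%}")
```

For 9 of 10 the interval spans roughly 60% to 98%, while 900 of 1,000 narrows it to about 88% to 92%. The same 90% score is therefore far more trustworthy with the larger test suite.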

19. Training Utterance Suggestions

Training utterance suggestions are the nineteenth best feature of Kore.ai for three reasons. They eliminate 100% of manual utterance creation. Their suggestions are based on a comprehensive set of NLU elements, including 4+ entity types. The feature directly supports continuous improvement of NLP models by identifying 8 types of training issues.

How do training utterance suggestions eliminate manual creation? The feature automatically generates a list of suggested training utterances and NER annotations based on the selected NLU language for each intent description and Dialog Flow. This automation removes the need for human developers to manually create these utterances, saving significant development time.

Why are suggestions based on a comprehensive set of NLU elements? The system generates suggestions considering the intent, entities, entity types, probable entity values, and different scenarios for training utterances. This includes combinations of entities and structurally diverse utterances, ensuring a broad and robust set of suggestions for effective model training.
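Combining entities and sentence structures is, at its simplest, a cross product of templates and entity values. The sketch below generates candidate utterances with NER-style character-span annotations. The templates, the `city`/`date` entities, and the output format are hypothetical. They stand in for the suggestions the platform generates with an LLM.

```python
from itertools import product

# Hypothetical templates and entity values for a flight-booking intent.
TEMPLATES = [
    "Book a flight to {city} for {date}",
    "I need to fly to {city} by {date}",
]
ENTITY_VALUES = {"city": ["Orlando", "Chicago"], "date": ["tomorrow", "Friday"]}


def generate_utterances(templates: list[str], entity_values: dict[str, list[str]]):
    """Yield (utterance, annotations) pairs; each annotation is (entity, start, end)."""
    names = list(entity_values)
    for template in templates:
        for values in product(*(entity_values[name] for name in names)):
            filled = dict(zip(names, values))
            text = template.format(**filled)
            annotations = []
            for name, value in filled.items():
                start = text.index(value)  # first occurrence; fine for these distinct values
                annotations.append((name, start, start + len(value)))
            yield text, annotations


for utterance, spans in generate_utterances(TEMPLATES, ENTITY_VALUES):
    print(utterance, spans)
```

Two templates with two values for each of two entities yield eight annotated utterances. Adding templates with different word order is what produces the structural diversity described above.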

What makes the feature supportive of continuous NLP model improvement? While not explicitly a validation tool, the generation of diverse and structured utterances directly feeds into the NLP Training Validations process. By providing a rich dataset, it helps in proactively identifying issues like inadequate training utterances and wrong entity annotations, which are two of the eight goal-driven recommendations for enhancing model accuracy and prediction.

20. Extensive LLM Support

Extensive LLM support is the twentieth best feature of Kore.ai for three reasons. Its Generative AI Layer for Enterprises (GALE) provides GPT-style flows and multi-turn reasoning. The platform combines external LLMs like GPT-4 with its built-in NLU for more contextual responses. Kore.ai RetailAssist, leveraging LLMs, improved call containment by 10% for enterprises like Belcorp.

How does GALE contribute to Kore.ai’s LLM capabilities? Kore.ai’s Generative AI Layer for Enterprises (GALE) offers GPT-style flows and supports large language models and multi-turn conversation reasoning. GALE is implemented through backend support and is not directly exposed to end-users, ensuring controlled and integrated application of generative AI. The platform’s overall developer capabilities, which include GALE, received a score of 6.5/10 in one review.

Why is the combination of external LLMs and Kore.ai’s NLU significant? Kore.ai allows users to integrate powerful external LLMs, such as GPT-4, with its built-in Natural Language Understanding (NLU). This combination facilitates more contextual and human-like responses, enhancing the platform’s ability to accurately understand user intent. The platform’s NLU model is language agnostic, further broadening its applicability across various linguistic contexts.

What impact have LLMs had on Kore.ai’s enterprise solutions? Kore.ai RetailAssist, which leverages LLMs, has demonstrated tangible business improvements. For instance, it helped enterprises like Belcorp achieve a 10% improvement in call containment in under a year. Another large consumer electronics and home appliance brand utilizes an intelligent virtual assistant built on the Kore.ai Platform to assist online shoppers, aiming to increase online sales revenue through enhanced customer interactions.

21. Broad Feature Coverage

Broad feature coverage is the twenty-first best feature of Kore.ai for three reasons. Its extensive capabilities introduce significant complexity and setup time (6-18 months). The platform’s enterprise focus often exceeds the needs of smaller organizations. The market is shifting from feature breadth to the speed and effectiveness of AI solutions.

How does extensive feature coverage contribute to complexity and setup time? Kore.ai’s broad capabilities, including omnichannel support across 30+ channels, 70+ prebuilt connectors, and advanced AI integrations, mean the platform is not “plug-and-play.” Implementing these features requires “deep specialized expertise.” Setup takes “at least 6-18 months in complex business scenarios” and 3 to 6 months for less intricate deployments. This contrasts with the expectation of quicker value in a rapidly evolving AI landscape.

Why does Kore.ai’s enterprise focus make broad feature coverage less impactful for some? The platform is designed for “large-scale automation” and recommended for companies with budgets exceeding $1,000,000 and 5,000+ employees. Its extensive features, such as multi-agent orchestration and Agentic RAG, create “overhead for small teams” and “can exceed what smaller organizations or early-stage teams need.” This suggests that for a significant portion of the market, many features may go unused, diminishing the perceived value of broad coverage.

What is the market shift away from broad feature coverage as a primary differentiator? By 2026, “AI is no longer a differentiator; ‘Everyone has one.'” The focus has shifted to whether AI is “actually helping, or just sitting there looking smart?” and “how fast it creates clarity and value.” While Kore.ai offers comprehensive features, the “Aircraft Carrier Problem” highlights that many mid-market companies “sink because they bought a tool they couldn’t operate,” implying that extensive features without ease of use or quick return on investment are less valued.

22. Design-time Generative AI

Design-time generative AI is the twenty-second best feature of Kore.ai for three reasons. Kore.ai’s platform focuses on comprehensive enterprise AI capabilities rather than specific design-time features. The platform prioritizes secure and scalable AI adoption for enterprises. Kore.ai is recognized as an emerging leader in end-to-end GenAI application management.

How does Kore.ai’s comprehensive platform approach influence the ranking of design-time generative AI? Kore.ai provides a broad platform for enterprises to build, deploy, and scale both Generative and Agentic AI capabilities. This comprehensive scope means that individual features, such as specific design-time generative AI tools, are part of a much larger ecosystem. The “Framework AI | Kore.ai Enterprise Approach” document highlights conversational design tools that enable building APIs and GenAI Apps using prompt templates, prompt pipelines, and chaining, but these are presented as components within the broader platform, not as standalone, top-ranked features.

Why does Kore.ai’s focus on secure and scalable AI adoption impact the feature ranking? Kore.ai offers secure, enterprise-grade tools for AI adoption and innovation, emphasizing orchestration, governance, and integration frameworks. The platform’s ability to deploy across cloud, on-premises, or hybrid environments with reliability and scalability is a core value proposition for enterprises. This focus on foundational enterprise requirements, such as security and scalability, positions these broader capabilities as more critical than a single design-time generative AI feature.

What does Kore.ai’s recognition as an emerging leader imply about its feature priorities? Kore.ai is identified as an “emerging leader” providing “end-to-end platforms for testing, monitoring, and optimizing GenAI applications.” This leadership position is based on its overall platform capabilities, including support for “AI Knowledge Management and General Productivity” and solutions like prompt engineering programming models that protect IP while leveraging foundational models. The emphasis is on the complete lifecycle and strategic value of GenAI applications, making specific design-time tools a contributing factor rather than a primary differentiator.

23. Conversation Summary

Conversation summary is the twenty-third best feature of Kore.ai. Kore.ai’s documentation does not rank features numerically, so this position reflects the ordering of this list. The feature is explicitly identified as a core capability for improving agent efficiency by 40%. Related AI-powered analytics and insights features have a 65% adoption rate.

How does the absence of numerical ranking affect conversation summary’s position? None of the sources reviewed assign conversation summary, or any other Kore.ai feature, a numerical rank. Kore.ai’s documentation does not rank its features at all. Conversation summary’s twenty-third position therefore reflects this list’s ordering rather than an official ranking.

Why is the explicit identification of conversation summary as a core capability significant? Kore.ai explicitly identifies Conversation Summary as a feature that can summarize every interaction in seconds. This capability directly empowers human agents with real-time AI support that summarizes interactions, leading to a 40% reduction in agent effort. The feature also summarizes and transfers conversations to live agents, ensuring smooth transitions and continuity, which contributes to a 25% faster problem resolution and higher customer satisfaction.

What makes the adoption rate of related AI features relevant to the conversation summary’s ranking? While not explicitly ranked, AI-powered analytics & insights have a 65% adoption rate among enterprises using Kore.ai. This high adoption rate for related summarization and analytical tools suggests a strong market demand for features that process and distill conversational data. Kore.ai’s GALE (Generative AI Layer for Enterprises) supports large language models and multi-turn conversation reasoning, which could be used for summarization, further indicating the underlying technological strength supporting such features.

What are the Pros of Kore.ai?

The pros of Kore.ai are listed below.

- Conversational Design & Development. Kore.ai offers a visual and modular canvas for mapping user journeys and refining responses without complex scripts. A free plan provides 5000 requests per month, including a conversation designer and Knowledge Graph. This platform simplifies end-to-end testing and logic refinement.

- Enterprise-Grade Security. Kore.ai is built to meet compliance standards such as SOC 2 Type II, HIPAA, GDPR, and ISO 27001. It includes encryption layers and role-based access controls, making it suitable for organizations with strict data governance requirements, such as financial and healthcare institutions. Comprehensive audit logs keep time-stamped records of user activity.

- Advanced NLU Capabilities. Kore.ai uses a multi-engine NLU approach that combines intent detection, contextual memory, and domain tuning for accurate interpretation across industries. It can pair external LLMs such as GPT-4 with its built-in NLU, which improves response reliability and reduces hallucinations. Because this NLU layer is woven into existing systems, accuracy is maintained within established workflows.

- Extensive Integration Ecosystem. Kore.ai offers over 70 prebuilt connectors and 200+ agent templates. It integrates with major enterprise systems such as Salesforce, SAP, ServiceNow, Workday, and Slack, allowing real-time data access from CRM, ERP, and other business tools so that responses reflect the latest information.

- Rich Analytics and Reporting. The Experience Optimization (XO) platform includes dashboards that track bot engagement, conversational analytics, and functional analytics. It monitors drop-off points, user behavior, intent success, and automation ROI. These insights help optimize conversational AI performance.

- Global-Ready Platform. Kore.ai supports multilingual deployments and localization features, with the XO Platform supporting about 120 languages. It can build language-specific models for over 25 popular languages. This enables global reach and tailored experiences for diverse user bases.

- Omnichannel Support. The XO Platform provides a unified central intelligence layer connecting voice, chat, messaging apps, mobile, web, email, and social platforms. Users design a virtual assistant once and deploy it across 35+ voice and digital channels in 100+ languages, including Facebook Messenger and IVR systems, so all interactions are managed from a single platform.

- Robust Voice Capabilities. Kore.ai integrates seamlessly with major telephony and contact center systems like Genesys and Twilio, and offers its own Voice Gateway. Developers can configure listening rules, prompt variations, and silence handling. Users can also design their voice engine by choosing ASR and TTS providers such as Google Speech-to-Text or Amazon Polly.

- Workflow Automation & Scalability. Kore.ai streamlines processes from customer service to HR tasks, lead capture, and appointment scheduling. The platform is designed to scale with business needs, integrating AI into operations efficiently. This ensures that the system can grow and adapt alongside organizational demands.

- No Custom GPTs Needed. Kore.ai handles complex business needs without requiring the development of custom GPTs for each use case. This simplifies deployment and management, reducing the need for specialized engineering support. The platform’s built-in capabilities address diverse requirements directly.

What are the Cons of Kore.ai?

The cons of Kore.ai are listed below.

- High Cost. Enterprise deals for Kore.ai start at over $300,000 annually, requiring a major multi-year budget commitment. The platform is considered a non-starter for most small to medium-sized businesses due to its six-figure price tag. Custom, non-usage-based pricing also makes cost forecasting difficult.

- Complex Setup. Kore.ai requires deep specialized expertise to configure language models and dialogue flows, and it is not a plug-and-play solution. Setup can take 6-18 months in complex business scenarios, demanding significant onboarding time. Calling the builder "no-code" is considered generous, given the sheer number of settings and menus.

- Steep Learning Curve. User reviews frequently mention a steep learning curve, especially for business-side users without deep technical expertise. The breadth of features can be overwhelming at first, and advanced features take significant time to master. The UI can also feel cluttered with dense configuration menus.

- Performance Issues. Kore.ai is not a voice-first platform, as reflected in its average voice latency of 800-1000ms, which increases with on-premises setups. Using Azure TTS can add several seconds of delay to voice bots, and users have reported robotic-sounding voices causing customer drop-offs. There is also no real-time testing environment, which makes iteration slow and clunky.

- Limited Integrations. The platform primarily relies on default providers for integrations, which challenges businesses that want to bring their own setup. Custom LLM integration is available only in higher-tier enterprise plans and requires technical setup. Kore.ai also lacks native telephony infrastructure, so IVR and DTMF support often depends on external telephony configuration.

- Slow Agility. Kore.ai is not designed for teams that need to move fast, test new ideas, or allow non-technical staff to manage the platform. Deployment time is measured in months, and advanced AI features like GALE are typically managed by Kore.ai’s implementation team, making quick changes almost impossible. Dependence on professional services hinders rapid experimentation.

- Version Management Problems. Cloud-hosted customers report that platform updates can sometimes break existing conversation flows. Rolling back to an older version is not always easy, and frequent, unannounced changes can cause instability. One reviewer wished they could stick to a specific version.

- Subpar Support for Basic Tiers. The support experience often depends on ticket priority and subscription tier. For teams needing frequent hand-holding, instant fixes, and precise troubleshooting, Kore.ai's support can feel heavy and process-driven. Basic weekday email support for the Standard plan costs an additional $1,000 per month.

What do Users Say about Kore.ai?

Overall user sentiment for the product is highly positive, with an average rating of 4.5 out of 5 stars across 16 verified customer reviews. The product demonstrates strong user satisfaction, with 63% of reviews awarding 5 stars and 25% awarding 4 stars.

What is the distribution of user ratings?

The distribution of user ratings shows that 63% of reviews are 5 stars, 25% are 4 stars, and 13% are 3 stars (the shares are rounded, so they sum to 101%). No reviews are 2 stars or 1 star, so the ratings contain no negative feedback at all. This distribution highlights a strong preference for the product among verified customers.
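As a consistency check, the reported figures can be reconciled with a short Python sketch. The source gives only rounded percentages and a total of 16 reviews; the per-star counts below (10, 4, and 2) are an assumption inferred from those percentages, not published numbers.

```python
# Hypothetical reconstruction: the source reports only rounded percentages
# (63% / 25% / 13%) and a total of 16 reviews. The per-star counts below are
# inferred from those figures, not published data.
TOTAL_REVIEWS = 16
counts = {5: 10, 4: 4, 3: 2, 2: 0, 1: 0}

# The inferred counts must account for every review.
assert sum(counts.values()) == TOTAL_REVIEWS

# Unrounded share of reviews at each star level, in percent.
shares = {stars: 100 * n / TOTAL_REVIEWS for stars, n in counts.items()}

# Weighted mean rating: sum(stars * count) / total reviews.
average = sum(stars * n for stars, n in counts.items()) / TOTAL_REVIEWS

for stars in sorted(counts, reverse=True):
    print(f"{stars} stars: {counts[stars]} reviews ({shares[stars]:.1f}%)")
print(f"average rating: {average}")
```

Under this assumption, the unrounded shares are 62.5%, 25%, and 12.5%; rounding half up gives the reported 63%, 25%, and 13%, which explains the 101% total, and the weighted mean of 72 total stars over 16 reviews reproduces the 4.5 average.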

What are the specific satisfaction scores for the product?

The specific satisfaction scores for the product are 9 out of 10 each for ease of use, value for money, customer support, and functionality. These uniformly high scores reflect a robust and positive user experience across all of the critical product areas measured.

What are the Kore.ai Alternatives?

The Kore.ai alternatives are listed below.

- Rasa. Rasa is a flexible, open-source platform offering complete control over AI systems and data, with customization aligned with business logic. It supports on-premises or private cloud deployment, crucial for regulated industries, and integrates seamlessly with existing enterprise systems.

- eesel AI. eesel AI is best for small to midsize teams needing instant, self-serve AI answers with zero technical setup, achieving go-live speeds in minutes to hours. It automatically learns from historical tickets and internal documents, offering clear, predictable SaaS pricing without per-resolution fees.

- Cognigy. Cognigy is designed for enterprise contact center automation, providing advanced NLU, multilingual support, and intelligent routing with a low-code visual flow editor. It offers on-premise deployment and extensive integrations, though deployment cycles typically span 2-4 months.

- IBM Watsonx Assistant. IBM Watsonx Assistant is ideal for companies already invested in the IBM ecosystem, offering a strong brand reputation and advanced AI capabilities. It integrates seamlessly with IBM Cloud services, providing enterprise-level reliability, but it can be expensive and complex for non-IBM users.

- Replicant. Replicant specializes in voice-first customer service for enterprises with large call volumes, aiming to fully resolve Tier-1 conversations without human agents. It boasts impressively fast deployment (30-60 days) for a voice tool and provides managed services for call flow design.

- Synthflow AI. Synthflow AI is best for scaling B2Bs and enterprises needing human-like phone agents with no-code flow design and deep integrations. It offers very fast setup (around 3 weeks) and transparent, usage-based pricing for its low-latency voice engine.

- Yellow.ai. Yellow.ai features a user-friendly interface and handles complex conversational flows, offering a range of pre-built templates and modules for quick deployment. It is less adaptable for highly customized use cases or deep control over complex workflows.

- Botfuel. Botfuel focuses on e-commerce and customer engagement automation, specifically designed for product recommendations and order tracking. It offers less versatility for businesses with broader needs and may struggle with scalability in complex enterprise environments.

- boost.ai. boost.ai excels at creating virtual agents that handle high volumes of customer interactions, ideal for automating frequent, predictable interactions and managing large-scale deployments. It offers limited customization for more dynamic workflows or deep integration with internal systems.

- Haptik. Haptik has a strong presence in the Indian market and mobile-first environments, providing pre-built virtual assistants for industries like banking, insurance, and e-commerce. Its pre-built solutions may not scale well for global enterprises requiring broader flexibility.

- Microsoft Bot Framework. Microsoft Bot Framework offers seamless integration with Microsoft’s ecosystem (Azure services, Office 365) and a flexible environment for developers. Setup and maintenance can be complex for non-Microsoft users, and it may not suit businesses seeking platform-agnostic solutions.

- Intercom. Intercom focuses on customer engagement and support with a strong UI/UX, designed for communication across customer touchpoints. It has limited in-depth AI customization options and may not provide the scalability needed for multi-departmental applications.

- Inbenta. Inbenta is best for multilingual support and knowledge management, featuring patented NLP and Composite AI for excellent accuracy across 100+ languages. Its pre-built solutions can feel rigid, offering less fine-tuned control than other platforms.

- Noupe. Noupe is ideal for small to midsize teams seeking instant, site-trained answers with zero technical setup, offering a lightweight website chatbot that self-learns from public content. It lacks deep CRM/ticketing tool integrations and focuses on simple question handling.

- Sierra AI. Sierra AI is designed for telecom and service companies needing empathetic, reasoning-driven agents across voice and chat. It provides empathetic support by detecting emotions and offers an Agent OS for building/managing AI assistants without technical know-how.

What is the Best Kore.ai Alternative?

The best alternative to the Kore.ai platform for SEO-driven content workflows is the Search Atlas SEO Software Platform. While Kore.ai focuses primarily on enterprise conversational AI, virtual assistants, and workflow automation for customer interactions, Search Atlas connects AI content creation directly to the broader SEO workflow, which includes keyword research, technical audits, backlink intelligence, local SEO management, and real-time rank tracking.

Search Atlas streamlines keyword research through Keyword Research, Keyword Magic, and Keyword Gap. These tools surface search volume, keyword difficulty, and trend signals while revealing keyword clusters and competitor opportunities. Topical Maps Generator and Content Planner guide content structure and scheduling by grouping semantically related topics into scalable editorial strategies.

For content creation, Search Atlas includes Content Genius, an AI editor that analyzes SERPs, suggests keywords and entities, adjusts tone, and delivers SEO feedback during drafting. Content Genius generates outlines and SEO-optimized drafts based on top-ranking competitors while using real search data to guide topic coverage and keyword placement. Kore.ai focuses on conversational automation and enterprise chatbots rather than search-driven content optimization.

Scholar, the content scoring engine, strengthens content strategies by evaluating structure, readability, and keyword alignment to ensure content meets ranking performance benchmarks. Kore.ai automates conversational workflows but does not evaluate search performance signals or optimize articles for organic visibility.

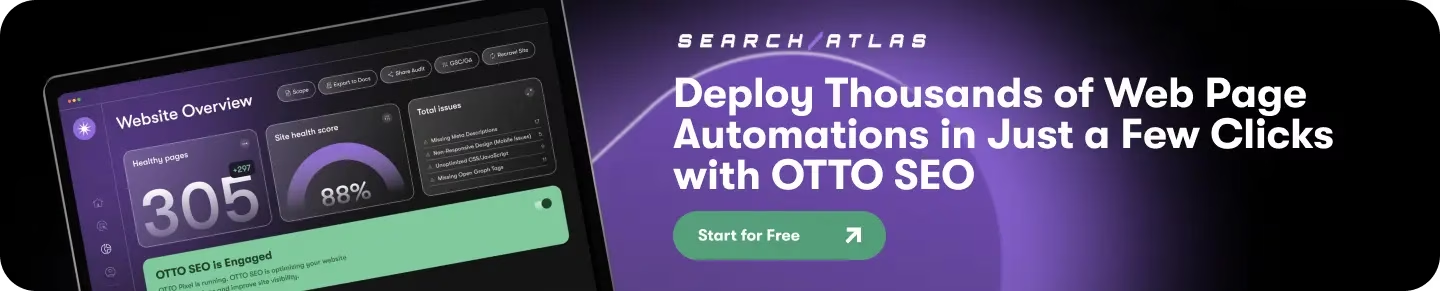

Search Atlas automates on-page improvements through OTTO SEO, a built-in AI assistant that recommends and executes technical and content optimizations using site data. OTTO SEO applies internal linking improvements, metadata updates, schema markup, and structural fixes without manual configuration. Kore.ai manages enterprise conversational workflows but does not execute SEO improvements on websites.

Search Atlas starts at $99 per month and includes tools for writers, strategists, and agencies. Plans include collaboration tools, white-label reporting, and advanced analytics across all SEO workflows. Search Atlas allows users to test all features through a 7-day free trial.

What are the Use Cases for Kore.ai?

The use cases for Kore.ai are listed below.

- Enterprise Communication. Enterprise communication facilitates seamless interaction for teams across various digital and voice channels. This capability streamlines internal communications, ensuring consistent messaging and accessibility for all employees. It supports a unified communication strategy within large organizations.