Optimizing context windows in large language models (LLMs) involves structuring input so the model processes the most relevant information within its token limits. A context window defines the maximum amount of text, measured in tokens, that an LLM can process at one time. It determines how much conversation history, instructions, retrieved documents, and tool outputs the model can use to generate responses. Early transformer models processed a few thousand tokens, while modern systems process hundreds of thousands or even millions of tokens, enabling analysis of extensive documents and complex tasks.

Context window optimization focuses on maximizing information value while minimizing unnecessary tokens. Longer contexts increase computational cost and reduce attention efficiency because transformer models evaluate relationships between every token in a sequence. As context length grows, models experience phenomena such as attention dilution and “lost in the middle,” where relevant information buried in long inputs becomes harder to retrieve accurately. Effective optimization prioritizes concise, structured inputs that preserve essential information and remove redundancy.

Practical context optimization strategies include selective context injection, summarization, compression, and Retrieval-Augmented Generation (RAG). These approaches retrieve or generate only the information required for a specific query instead of passing entire documents into the prompt. Context engineering expands this process by managing system prompts, conversation history, external knowledge retrieval, and tool outputs to deliver the right information at the right time. Properly optimized context windows improve response quality, operational efficiency, and reliability in complex multi-step tasks.

What is a Context Window?

A context window is a fundamental parameter of a large language model (LLM) that defines the maximum amount of text, measured in tokens, that the model can process and reference during a single interaction. A context window determines how much information the model can “see” at one time, including prompts, instructions, conversation history, retrieved documents, and tool outputs. The concept of a context window is central to transformer-based AI systems because it directly controls how much information the model can analyze before generating a response.

How did context windows emerge in modern AI models? The context window concept originates from the transformer architecture introduced in the 2017 paper “Attention Is All You Need.” Transformer models rely on self-attention mechanisms that evaluate relationships between tokens within a sequence. Early large language models, such as GPT-3 in 2020, supported a context window of 2,048 tokens, equivalent to roughly 1,500 words. By 2023, newer systems expanded this capacity dramatically, with models capable of processing 100,000 tokens or more, enabling AI systems to analyze significantly longer inputs and conversations.

What does the context window measure, and why are tokens used? A context window is measured in tokens because tokens represent the smallest units of language that AI models process. Tokens can represent full words, word fragments, punctuation marks, or numbers, depending on the tokenizer used by the model. On average, 1 token equals approximately 0.75 English words (about 1.3 tokens per word), meaning a context window of 100,000 tokens corresponds to about 75,000 words of text. Token measurement allows AI systems to process language consistently across different languages, structures, and formatting patterns.
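The token-to-word relationship can be sketched as a quick estimator. This is a rough illustration only: it assumes about 0.75 words per token (the ratio implied by 100,000 tokens being about 75,000 words), and the names `WORDS_PER_TOKEN`, `estimate_tokens`, and `estimate_words` are hypothetical. A real tokenizer will give different counts, especially for code or non-English text.

```python
import math

# Assumption: roughly 0.75 words per token for English prose.
# Real tokenizers vary by model, language, and formatting.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(text: str) -> int:
    """Rough token estimate based on a whitespace word count."""
    words = len(text.split())
    return math.ceil(words / WORDS_PER_TOKEN)


def estimate_words(token_limit: int) -> int:
    """Approximate number of words that fit inside a token limit."""
    return int(token_limit * WORDS_PER_TOKEN)
```

For example, `estimate_words(100_000)` gives 75,000 under this assumption. Use the model provider's own tokenizer whenever exact counts matter.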

Why is the context window often compared to AI working memory? The context window functions as a working memory system for an LLM because it determines how much information the model can actively reference while generating responses. Everything inside the context window can influence the model’s reasoning process, while information outside the window is effectively invisible to the model. This limitation explains why long conversations may lose earlier details when the token limit is exceeded.

What information is typically stored inside a context window? A context window contains multiple information layers that guide model behavior and response generation. These layers commonly include system instructions that define the model’s behavior, conversation history from earlier dialogue turns, retrieved documents supplied through Retrieval-Augmented Generation (RAG), and outputs from external tools or APIs. Each of these components consumes tokens, meaning the available context must be shared between instructions, input data, and generated responses.

How does context window length affect LLM capabilities? The size of a context window directly influences what tasks a large language model can perform effectively. Larger context windows allow models to analyze long documents, maintain coherent multi-turn conversations, and reason across multiple sources of information. For example, modern LLMs, such as Gemini 1.5 Pro, support context windows up to 2 million tokens, enabling analysis of entire codebases, research datasets, or lengthy legal documents within a single interaction.

Why Does Context Window Optimization Matter?

Context window optimization matters because it improves LLM performance, reduces operational costs, preserves critical information in long workflows, and prevents failures caused by context overflow.

Effective context window optimization ensures models maintain reasoning continuity, avoid silent information loss, and deliver accurate outputs during complex AI workflows. These improvements directly support LLMO (large language model optimization), which focuses on optimizing content and systems for how large language models retrieve, interpret, and generate responses.

How does context window optimization impact agentic workflow performance? Context window optimization prevents failures in agent-based systems by ensuring critical information remains inside the model’s active context. AI agents often perform multi-step workflows that repeatedly call a language model. For example, a 50-step workflow using 20,000 tokens per step accumulates about 1 million tokens, quickly exceeding most context limits. When limits are reached, the API may reject the request, or the surrounding framework may silently discard earlier tokens, which may contain instructions or constraints. This silent truncation causes agents to perform incorrect actions while appearing confident, making debugging difficult because the failure occurs inside the context management layer rather than as a visible error.
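One way to avoid silent truncation is to track accumulated tokens and fail loudly. The sketch below is a minimal illustration under stated assumptions: `ContextOverflowError` and `check_budget` are hypothetical names, and the per-step token counts are assumed to be known in advance.

```python
class ContextOverflowError(RuntimeError):
    """Raised when accumulated context would exceed the window."""


def check_budget(step_tokens: list[int], limit: int) -> int:
    """Sum tokens step by step and raise as soon as the limit is exceeded."""
    total = 0
    for step, tokens in enumerate(step_tokens, start=1):
        total += tokens
        if total > limit:
            raise ContextOverflowError(
                f"step {step}: {total} tokens exceeds limit of {limit}"
            )
    return total
```

With 50 steps of 20,000 tokens each, a 1,000,000-token window is exactly filled, while a 200,000-token window overflows at step 11. Raising an explicit error gives the system a chance to summarize or offload context instead of dropping it.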

Why does context window optimization significantly influence operational costs? Context window optimization reduces operational costs because token usage directly determines API expenses and computational requirements. Transformer-based models process tokens using attention mechanisms whose cost scales roughly with the square of the input length. For example, 2,000 tokens require about 4 times the attention compute of 1,000 tokens, increasing latency and infrastructure demand. At production scale, inefficient prompts can dramatically increase expenses. A system processing 1 million AI requests per month may incur $1–3 million in monthly costs using large-context models, compared with $100,000–$300,000 when optimized with smaller or efficient models.
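The arithmetic behind these claims can be sketched in a few lines. This is an illustration, not a pricing model: the function names are hypothetical, the quadratic ratio covers only the attention component of compute, and any price passed in is an assumed value rather than a real provider rate.

```python
def attention_cost_ratio(tokens_a: int, tokens_b: int) -> float:
    """Relative attention compute of two input lengths, assuming O(n^2) scaling."""
    return (tokens_a / tokens_b) ** 2


def monthly_input_cost(
    requests: int, tokens_per_request: int, price_per_million_tokens: float
) -> float:
    """Monthly input-token spend for a given request volume and assumed price."""
    return requests * tokens_per_request / 1_000_000 * price_per_million_tokens
```

`attention_cost_ratio(2_000, 1_000)` evaluates to 4.0. With an assumed price of $10 per million input tokens, 1 million requests of 100,000 tokens each cost $1,000,000 per month, while trimming each request to 10,000 tokens brings that to $100,000.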

Why is enhanced LLM understanding and memory crucial for complex tasks? Context window optimization improves model understanding by allowing LLMs to maintain relevant information across longer interactions and complex tasks. Larger context windows enable models to process detailed instructions, user goals, system rules, and external data simultaneously. This capability is essential for tasks such as software development automation, research analysis, and document summarization, where the model must reason across hundreds of pages or multiple data sources without losing critical context.

How does context window optimization mitigate performance degradation in long contexts? Context window optimization mitigates performance degradation caused by uneven use of information within long inputs. Research shows that language models exhibit a U-shaped performance curve, where information near the beginning and end of a prompt is used more reliably than content located in the middle. This phenomenon, often called “lost in the middle,” means relevant information can become difficult for the model to retrieve even when it technically remains within the context window. Optimization techniques such as prompt structuring, summarization, and retrieval-based context injection help keep critical information near high-attention regions.

Why does context window optimization enable deeper conversations with AI agents? Context window optimization enables deeper conversations by preserving conversation history and user-specific information across multiple interactions. When models maintain a structured dialogue history within the context window, they can reference past questions, user preferences, and previous results. Modern systems such as Gemini 1.5 Pro support context windows of up to 1–2 million tokens, enabling extended conversations that incorporate large datasets, documents, or conversation history while maintaining coherent responses.

How does context window optimization address the inherent limitations of large language models? Context window optimization addresses inherent LLM limitations because language models do not process long contexts with perfect memory or uniform attention. Even when models support 100,000 tokens or more, performance declines as prompts grow longer and more complex. Studies show that models can miss relevant details buried within large contexts and exhibit reduced extraction accuracy as prompt length increases. Advanced optimization approaches such as recursive language models (RLMs) and hierarchical context management improve accuracy by structuring information into smaller, prioritized segments, enabling smaller models to outperform larger models on long-document reasoning tasks.

What is Context Engineering?

Context engineering is the discipline of designing dynamic systems that provide AI models with the right information, in the right format, at the right time to complete tasks effectively. Context engineering focuses on managing everything inside the context window of a large language model, including system instructions, conversation history, retrieved knowledge, tool outputs, and user input.

Andrej Karpathy describes context engineering as “the delicate art and science of filling the context window with just the right information for the next step.” The goal of context engineering is to ensure that a model receives the exact data required to solve a task without overwhelming the context window with unnecessary tokens.

How is context engineering different from prompt engineering? Context engineering differs from prompt engineering because prompt engineering focuses on phrasing instructions, while context engineering designs the entire information architecture surrounding the model. Prompt engineering optimizes the wording of prompts to guide model behavior. Context engineering expands beyond instructions to control what information enters the context window, including external knowledge retrieval, system rules, conversation memory, and tool outputs. This broader scope allows developers to build AI systems that dynamically assemble context based on the specific task being performed.

What information components are managed within context engineering systems? Context engineering systems manage multiple structured information layers that determine how an LLM interprets and completes tasks. These layers commonly include system prompts that define behavior rules, user prompts that define the current task, conversation history that preserves dialogue continuity, retrieved information supplied through Retrieval-Augmented Generation (RAG), tool definitions that explain available capabilities, and outputs from executed tools. Together, these components form the active context state used by the model during inference.

Why is context engineering critical for modern AI applications and agents? Context engineering is critical because modern AI applications depend on dynamic context assembly rather than static prompts. Complex systems such as AI agents, copilots, and enterprise automation tools require models to access changing information sources, including databases, APIs, documents, and memory systems. Context engineering orchestrates these inputs so the model receives only relevant data at the correct moment, improving reasoning accuracy, reducing token usage, and enabling reliable multi-step workflows.

How does context engineering improve reliability and performance in LLM systems? Context engineering improves reliability by ensuring models operate with accurate, relevant, and structured information inside the context window. Many AI system failures occur because models lack access to critical context rather than because of model limitations. Research suggests 70 to 80% of agent failures originate from context problems, such as missing data, poorly structured prompts, or irrelevant information. By dynamically managing context retrieval, summarization, and formatting, context engineering improves output accuracy, reduces hallucinations, and increases the reliability of AI-driven systems.

What Are the Core Strategies for Context Window Optimization?

Context window optimization relies on four foundational strategies that control how information enters, persists, and moves within an LLM context. These strategies (write, select, compress, and isolate) form the core framework used in production AI systems to manage token limits while preserving the most relevant information for model reasoning.

Each strategy solves a different constraint of context management, including persistent memory, targeted information retrieval, token efficiency, and workflow compartmentalization, as explained below.

1. Write

Writing is a context window optimization strategy that persists information outside the active context so it can be retrieved later instead of remaining inside the context window. Writing stores summaries, notes, and structured data in external memory systems such as databases, scratchpads, or file-based memory. This approach prevents context windows from filling with historical information while ensuring important knowledge remains accessible for future steps.

How does hierarchical summarization support the write strategy? Hierarchical summarization reduces token usage by converting large documents or conversations into structured summaries before storing them in memory. Instead of passing entire documents into the context window, systems extract key sections such as abstracts, conclusions, or critical findings. For example, reducing an 80,000-token research document to a 15,000-token structured summary significantly improves model focus and output quality while preserving essential knowledge.

How does rolling context improve context efficiency? Rolling context improves optimization by keeping only the most recent interactions inside the active context window. Systems typically maintain the last 3–5 conversation turns while archiving older exchanges in external memory. This strategy removes outdated or irrelevant tokens while ensuring the model continues to operate with the most relevant information.
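A rolling context can be sketched with a bounded queue plus an archive. This is a minimal illustration: the `RollingContext` class is hypothetical, the default of 4 turns is an assumption within the 3–5 range mentioned above, and the archive is a plain list standing in for an external memory store.

```python
from collections import deque


class RollingContext:
    """Keep the last few turns active and archive everything older."""

    def __init__(self, max_turns: int = 4) -> None:
        self.max_turns = max_turns
        self.active: deque[tuple[str, str]] = deque()
        self.archive: list[tuple[str, str]] = []  # stand-in for external memory

    def add_turn(self, user: str, assistant: str) -> None:
        """Append a turn, moving the oldest turns to the archive when full."""
        self.active.append((user, assistant))
        while len(self.active) > self.max_turns:
            self.archive.append(self.active.popleft())
```

Only `active` would be rendered into the prompt. Archived turns stay retrievable, so an important early detail can still be pulled back in when needed.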

How do explicit token budgets improve LLM responses? Explicit token budgets guide the model to produce concise outputs that respect context limitations. System instructions can include directives such as “You have 4,000 tokens remaining for this response.” These constraints encourage structured and efficient responses, preventing models from generating unnecessarily long outputs that consume the remaining context window.

How does compaction maintain long-running conversations? Compaction summarizes conversation history when context windows approach their limits, allowing a new context window to start with a distilled summary. This process preserves critical information such as decisions, constraints, and unresolved tasks while removing redundant tool outputs or intermediate reasoning steps.
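Compaction can be sketched as a threshold check followed by a summarization step. The sketch below makes several assumptions: `count_tokens` and `summarize` are hypothetical callables supplied by the application (in practice, a tokenizer and an LLM summarization call), and the 80% threshold is an illustrative default.

```python
from typing import Callable


def compact(
    messages: list[str],
    count_tokens: Callable[[str], int],
    summarize: Callable[[list[str]], str],
    limit: int,
    threshold: float = 0.8,
    keep_recent: int = 2,
) -> list[str]:
    """Replace older messages with a summary once usage nears the limit."""
    used = sum(count_tokens(m) for m in messages)
    if used < threshold * limit or len(messages) <= keep_recent:
        return messages  # still comfortably inside the window

    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    # The summary should retain decisions, constraints, and open tasks.
    return [summarize(older)] + recent
```

The most recent messages are kept verbatim because they usually carry the details the next step depends on.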

How does structured note-taking enable persistent AI memory? Structured note-taking enables persistent memory by storing task-relevant notes outside the context window for later retrieval. AI agents can maintain task lists, structured logs, or strategic notes that are periodically written to memory and reloaded when required. This approach enables long-horizon reasoning tasks that would be impossible if all information remained inside a single context window.
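File-based note-taking can be as simple as reading and writing a JSON document between steps. This sketch is illustrative only: `write_notes`, `load_notes`, and the note structure are hypothetical, and a production agent would likely add locking, versioning, or a database.

```python
import json
from pathlib import Path


def write_notes(path: Path, notes: dict) -> None:
    """Persist structured notes outside the context window."""
    path.write_text(json.dumps(notes, indent=2), encoding="utf-8")


def load_notes(path: Path) -> dict:
    """Reload notes at the start of a later step; empty if none exist yet."""
    if not path.exists():
        return {}
    return json.loads(path.read_text(encoding="utf-8"))
```

An agent might store a task list and key decisions, then reload only those fields into a fresh context instead of replaying the full transcript.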

2. Select

Selecting is a context window optimization strategy that retrieves only relevant information instead of loading entire datasets into the context window. Selection ensures that models receive targeted knowledge for the current query, reducing token usage and improving reasoning accuracy.

How does Retrieval-Augmented Generation support context selection? Retrieval-Augmented Generation (RAG) retrieves relevant documents from external knowledge sources before injecting them into the context window. Instead of storing all knowledge inside the model prompt, RAG systems use vector search or semantic retrieval to locate the most relevant passages from databases, documents, or APIs.

Why does selective retrieval improve model performance? Selective retrieval improves performance by removing irrelevant tokens that dilute model attention. When a model processes excessive or unrelated context, its attention mechanism becomes less focused on important information. By selecting only relevant data, systems improve response quality and reduce hallucination risk.

How does selection help manage operational costs? Selection reduces operational costs because most AI providers charge based on token usage. Sending only relevant passages into the model lowers the number of processed tokens, which directly reduces API costs and computational overhead.

How does selection help realize the full capability of LLM systems? Selection allows LLM systems to operate effectively in complex applications that require access to large knowledge bases. Instead of loading millions of tokens into a prompt, systems dynamically retrieve only the necessary knowledge segments required for a task.

3. Compress

Compressing is a context window optimization strategy that reduces token count while preserving the semantic meaning of information. Compression techniques remove redundant tokens, shorten text structures, and summarize content so that more meaningful information fits within a fixed context window.

How does compression reduce computational cost? Compression reduces computational cost because transformer models perform attention calculations across all tokens in the context. Since attention complexity grows approximately as O(n²) with token count, halving the token count cuts attention compute to roughly a quarter, and an 80% reduction cuts it to roughly 4%. Reducing tokens by 50–80% therefore significantly lowers compute requirements and API costs.

Why does compression improve model focus? Compression improves model focus by removing redundant or irrelevant text that can dilute attention. When context contains unnecessary information, the model spends computational effort evaluating tokens that do not contribute to the task.

How does compression increase effective context capacity? Compression increases effective context capacity by allowing more meaningful information to fit inside the same token limit. Summarized or condensed representations allow models to process additional relevant knowledge without exceeding context boundaries.

How does compression address the “lost in the middle” problem? Compression helps mitigate the “lost in the middle” phenomenon, where important information buried inside long prompts becomes difficult for models to retrieve. By shortening prompts and preserving only essential information, compression keeps key knowledge closer to high-attention regions of the context.

4. Isolate

Isolating is a context window optimization strategy that separates workflows so each AI component receives only the information required for its task. Isolation prevents multiple systems or agents from sharing one large context that quickly exceeds token limits.

How does isolation reduce context rot and diminishing performance? Isolation reduces context rot by limiting each model call to a smaller and more focused context. As context size increases, model performance tends to decline due to attention dilution and token competition. Smaller, isolated contexts improve reliability and reasoning precision.

Why is managing attention budgets important in isolated contexts? Isolation preserves the model’s attention budget by ensuring only relevant tokens consume the model’s limited reasoning capacity. Similar to human attention limits, LLMs perform best when information is curated rather than overloaded.

How does isolation overcome the architectural constraints of transformer models? Isolation reduces the computational burden created by the transformer attention mechanism, which calculates relationships between every token in the sequence. By keeping contexts smaller, isolation improves processing speed and reduces memory requirements.

How does isolation optimize latency and operational cost? Isolation improves latency and cost efficiency because smaller contexts require fewer computations and fewer tokens processed per request. Systems that isolate tasks across multiple smaller model calls often achieve faster responses and significantly lower operational costs compared with a single large-context request.
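Isolation can be sketched as handing each sub-task only the slice of shared state it needs. This is a minimal illustration: `isolated_context`, `run_pipeline`, and the `run_agent` callable are hypothetical, and a real system would make a model call per task.

```python
from typing import Any, Callable


def isolated_context(shared_state: dict[str, Any], required_keys: list[str]) -> dict[str, Any]:
    """Return only the entries a given task needs."""
    return {k: shared_state[k] for k in required_keys if k in shared_state}


def run_pipeline(
    shared_state: dict[str, Any],
    tasks: dict[str, list[str]],
    run_agent: Callable[[str, dict[str, Any]], Any],
) -> dict[str, Any]:
    """Run each task with its own small context instead of one shared blob."""
    results = {}
    for name, keys in tasks.items():
        results[name] = run_agent(name, isolated_context(shared_state, keys))
    return results
```

A reviewer task might receive only the specification and the code, while a debugging task receives only the logs, so neither pays for tokens it does not use.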

How Does RAG Optimize Context Windows?

Retrieval-Augmented Generation (RAG) optimizes context windows by retrieving only the most relevant external information instead of loading entire knowledge bases into the prompt. RAG improves context efficiency by grounding model responses in specific documents, database records, or indexed passages that directly match the current query. This approach reduces irrelevant token usage, improves factual accuracy, and helps large language models focus on the highest-signal information inside a limited context window.

How does RAG technically manage context selection? RAG manages context selection through document chunking, embedding-based retrieval, reranking, filtering, and selective prompt injection. The system splits large documents into smaller chunks, converts those chunks into embeddings, and stores them in a retrieval system such as a vector database. At runtime, the retriever identifies the most relevant chunks for the user query and sends only those chunks into the model context. More advanced RAG pipelines improve this process with multi-query rewriting, metadata filters, contextual retrieval, hybrid BM25-plus-embedding search, and reranking layers that refine the final context set before generation.
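The chunk, embed, and retrieve flow can be sketched with a toy example. This sketch deliberately swaps in a bag-of-words vector for a learned embedding model and a sorted list for a vector database, so it only illustrates the shape of the pipeline. All function names are hypothetical.

```python
import math
from collections import Counter


def chunk(text: str, size: int = 50) -> list[str]:
    """Split text into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a real model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:top_k]
```

Only the chunks returned by `retrieve` would be injected into the prompt. Reranking and metadata filters would slot in between ranking and the final cut.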

Why does RAG improve performance, cost, and answer quality? RAG improves performance, cost, and quality because it reduces token waste while increasing the likelihood that the model receives correct supporting evidence. Well-optimized RAG pipelines usually operate much faster than brute-force long-context prompting, with vector retrieval adding only milliseconds to the request flow. RAG lowers cost because the model processes a few thousand high-value tokens instead of hundreds of thousands of raw tokens. RAG improves quality because responses are grounded in retrieved evidence, which reduces hallucination risk and increases source-based factual accuracy.

How should teams configure RAG for context window efficiency? Teams should configure RAG with explicit token budgets, controlled document counts, and context fill limits so retrieved information remains focused and useful. Practical context management in RAG includes allocating tokens across the system prompt, retrieved context, user query, and response space, while limiting document size and the number of inserted chunks. The strongest configurations prioritize quality over quantity, place the most relevant evidence closest to the query, and continuously evaluate retrieval recall, precision, and human-rated answer quality.
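Token budgeting for retrieved context can be sketched as a greedy packing step. The example assumes chunks arrive already ranked from most to least relevant, uses a whitespace word count as a stand-in tokenizer, and uses the hypothetical name `pack_chunks`. The budget numbers are placeholders.

```python
from typing import Callable


def pack_chunks(
    ranked_chunks: list[str],
    window: int,
    system_tokens: int,
    query_tokens: int,
    response_reserve: int,
    max_chunks: int = 5,
    count_tokens: Callable[[str], int] = lambda t: len(t.split()),
) -> list[str]:
    """Pick chunks that fit the remaining budget, best chunk placed last."""
    budget = window - system_tokens - query_tokens - response_reserve
    selected: list[str] = []
    for chunk in ranked_chunks:
        if len(selected) == max_chunks:
            break
        cost = count_tokens(chunk)
        if cost <= budget:  # skip chunks that do not fit
            selected.append(chunk)
            budget -= cost
    # Reverse so the most relevant chunk sits closest to the query,
    # which is assumed to follow the retrieved context in the prompt.
    return selected[::-1]
```

Reserving response tokens up front prevents retrieved context from crowding out the answer itself.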

How does RAG fit into production context engineering? RAG functions best as part of a hybrid context engineering system rather than as a stand-alone retrieval layer. Production systems combine retrieval with summarization, compression, reranking, and adaptive controls to balance relevance, latency, and token cost. This hybrid design allows long-context models to analyze deeper material when needed while keeping default interactions efficient and tightly scoped.

How Do Compression and Summarization Techniques Reduce Context Size?

Compression and summarization reduce context size by removing redundant tokens and condensing information into shorter, high-density representations that preserve essential meaning. These techniques lower token usage while keeping the model supplied with the facts, decisions, and relationships required for accurate reasoning. In practice, context compression can reduce token usage by 50% to 80% while preserving strong output quality, and some methods achieve much higher compression with limited quality loss.

What mechanisms do compression systems use to shrink context? Compression systems shrink context through summarization, relevance filtering, semantic deduplication, extractive selection, and sentence-level pruning. Summarization keeps the most important points and removes secondary details. Relevance filtering discards information that does not support the current query. Semantic deduplication removes repeated content across retrieved chunks. Extractive methods preserve exact original sentences with the highest information value, while sentence-level pruning cuts filler phrases, verbose clauses, and low-utility wording.
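Deduplication across retrieved chunks can be sketched with a simple overlap measure. This example substitutes Jaccard word overlap for true semantic similarity (which would normally use embeddings). The function names and the 0.8 threshold are assumptions for illustration.

```python
def jaccard(a: str, b: str) -> float:
    """Word-set overlap between two texts, from 0.0 to 1.0."""
    set_a, set_b = set(a.lower().split()), set(b.lower().split())
    union = set_a | set_b
    return len(set_a & set_b) / len(union) if union else 0.0


def deduplicate(chunks: list[str], threshold: float = 0.8) -> list[str]:
    """Keep a chunk only if it is not a near-duplicate of one already kept."""
    kept: list[str] = []
    for chunk in chunks:
        if all(jaccard(chunk, k) < threshold for k in kept):
            kept.append(chunk)
    return kept
```

Because the first occurrence wins, chunks should be passed in relevance order so the best version of repeated content is the one that survives.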

Why do extractive methods often outperform weaker abstractive compression? Extractive compression often performs better because it preserves original phrasing and factual details instead of rewriting the source into a potentially lossy abstraction. Weaker abstractive summarization can omit key details or introduce distortion, especially on technical or retrieval-heavy tasks. Extractive reranking methods have shown strong results across question-answering and long-context benchmarks because they keep the most relevant original evidence while staying within a tighter token budget.

How should teams apply compression without hurting answer quality? Teams should compress selectively, preserve recent or critical context verbatim, and use stronger compression only on lower-priority or older content. Good implementations protect core instructions, key facts, and recent conversation turns while summarizing older segments or repetitive observations. The goal is not maximum compression in isolation but the smallest useful context per task that still supports accurate reasoning.

How Do Caching Strategies Reduce Context Window Costs?

Caching strategies reduce context window costs by reusing previously processed prompt content instead of recomputing the same tokens on every request. When stable prompt sections repeat across requests, caching lets the provider or application skip repeated processing of those shared prefixes. This lowers input-token cost, improves throughput, and reduces latency for repeated workflows such as document Q&A, agents, coding assistants, and long-form chat systems.

What kinds of caching reduce LLM costs most effectively? The most effective caching methods include prompt caching, context caching, and request-response caching. Prompt caching reuses stable prompt prefixes such as tool definitions, system instructions, and project context. Context caching stores large reference material once and reuses it across interactions. Request-response caching bypasses the model entirely for previously answered or repeated requests. Together, these methods reduce redundant model work and cut repeated token charges.

Why does prompt structure determine cache performance? Prompt structure determines cache performance because even small changes in cached sections can break cache hits. Stable instructions and reusable context need to appear first, while dynamic content such as timestamps, session data, and user-specific changes should appear later. High-performing systems separate stable prompt prefixes from variable data so the cache can match repeated requests more reliably and deliver consistent savings.
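The stable-prefix layout and a request-response cache can be sketched together. This is an application-side illustration only: `STABLE_PREFIX`, `build_prompt`, and `ResponseCache` are hypothetical, and provider-side prompt caching is configured through each provider's own API rather than through code like this.

```python
import hashlib
from typing import Callable

# Hypothetical stable prefix: instructions and tool definitions that never change.
STABLE_PREFIX = (
    "System: You are a support assistant.\n"
    "Tools: search_docs, lookup_order\n"
)


def build_prompt(user_message: str, timestamp: str) -> str:
    """Put stable content first and dynamic content last so prefixes match."""
    return f"{STABLE_PREFIX}Time: {timestamp}\nUser: {user_message}"


class ResponseCache:
    """Request-response cache keyed by a hash of the full prompt."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    def get_or_call(self, prompt: str, call_model: Callable[[str], str]) -> str:
        """Return a cached answer, or call the model once and store the result."""
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        self._store[key] = call_model(prompt)
        return self._store[key]

    def hit_rate(self) -> float:
        """Fraction of lookups served from the cache."""
        total = self.hits + self.misses
        return self.hits / total if total else 0.0
```

Two prompts built with different timestamps still share the same prefix, which is what prefix-based caches match on. Had the timestamp been placed first, every request would start with different bytes and no prefix would repeat.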

How should teams implement caching in production? Teams should first identify repeated prompts, design stable prompt prefixes, choose provider-specific caching methods, and monitor cache hit rate as a core production metric. Effective implementations warm caches before launching parallel traffic, track latency and cached-token percentages, and define clear expiration rules for invalidation and freshness. Caching works best when the repeated content is truly stable and frequently reused across many requests.

How Do Sliding Window and Truncation Approaches Manage Context Overflow?

Sliding window and truncation approaches manage context overflow by keeping input within the model’s token limit while preserving the most important information for the next response. These approaches prevent explicit API errors, silent information loss, and degraded output quality that occur when prompts exceed the available context window. Both methods act as guardrails that decide what stays in context and what gets dropped, summarized, or compressed.

What is the sliding window approach in context management? The sliding window approach maintains a fixed-size buffer of recent context and drops older content as new content enters. In its basic form, this method works like a First-In, First-Out (FIFO) memory structure: the oldest messages are evicted first so the buffer stays within its token budget. More advanced versions keep the most recent messages plus historically relevant items selected through semantic similarity or priority scoring, which prevents useful early context from disappearing only because it is old.

How does truncation differ from a sliding window? Truncation differs from a sliding window because truncation explicitly cuts input to fit the model limit, while a sliding window continuously manages a rolling context budget over time. Basic truncation removes excess content once the token count exceeds the maximum input size. Intelligent truncation improves on this by preserving must-have content such as the current user message and core instructions, then discarding or summarizing lower-priority context only when necessary.

Why do naive overflow strategies create reliability problems? Naive overflow strategies create reliability problems because they often delete relevant information without regard to task importance or future reasoning needs. Blind truncation can remove constraints, earlier decisions, or critical evidence, which causes models and agents to answer with an incomplete context. Overflow can then appear as hallucination, drift, or failure to follow instructions, even when the logs show no obvious external system error.

How should teams use sliding windows and truncation safely? Teams should use sliding windows and truncation with token reserves, message prioritization, summarization thresholds, and explicit protection for critical instructions. Systems should reserve tokens for the model response, preserve the most recent turns, and summarize dropped sections when older context still matters. This approach keeps the active context compact while avoiding unnecessary loss of decision-critical information.
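These safeguards can be sketched as a single function that protects the system prompt and current user message, reserves response tokens, and fills the remainder with the newest history. The name `fit_to_window` and the whitespace-based token counter are assumptions for illustration.

```python
from typing import Callable


def fit_to_window(
    system: str,
    history: list[str],
    user_message: str,
    window: int,
    response_reserve: int,
    count_tokens: Callable[[str], int] = lambda t: len(t.split()),
) -> list[str]:
    """Build a prompt that fits the window, dropping the oldest history first."""
    budget = (
        window
        - response_reserve
        - count_tokens(system)
        - count_tokens(user_message)
    )
    if budget < 0:
        # Fail loudly instead of truncating protected content.
        raise ValueError("protected content alone exceeds the context window")

    kept: list[str] = []
    for message in reversed(history):  # newest first
        cost = count_tokens(message)
        if cost > budget:
            break  # everything older is dropped (or summarized elsewhere)
        kept.append(message)
        budget -= cost
    return [system] + kept[::-1] + [user_message]
```

Messages that fall outside the window are natural candidates for summarization rather than outright deletion when older context still matters.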

How Do Memory Systems Extend Context Beyond Single Interactions?

Memory systems extend context beyond single interactions by storing important information outside the immediate context window and retrieving it when relevant in future sessions or tasks. Large language models are stateless by default, which means they do not remember previous calls unless prior information is reintroduced. Memory systems solve this limitation by giving AI applications persistent structures for user preferences, historical facts, past decisions, and task-specific knowledge.

What types of memory systems support long-term AI context? Long-term AI context is supported through short-term memory, long-term memory, working memory, episodic memory, semantic memory, and procedural memory. Short-term memory holds the active session state. Long-term memory stores persistent knowledge across sessions. Working memory coordinates what the system needs for the current task. Episodic memory preserves interaction history, semantic memory stores generalized facts and concepts, and procedural memory preserves successful strategies or action patterns.

How do memory systems interact with context windows in practice? Memory systems interact with context windows by retrieving the most relevant stored knowledge and inserting only that subset into the active prompt. This retrieval process often uses scoring based on semantic relevance, recency, importance, or task alignment. Instead of replaying full conversation transcripts, the system rebuilds a smaller and more useful task context from stored memory components, which extends continuity without exhausting the live token budget.
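One way to picture this scoring step is the sketch below. The weights, the 24-hour half-life, and the `MemoryItem` fields are illustrative assumptions; `relevance` is assumed to be a similarity score already computed elsewhere (for example by an embedding search), and token counts use a whitespace split rather than a real tokenizer.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    text: str
    relevance: float   # assumed precomputed similarity to the query, 0..1
    importance: float  # assumed application-assigned weight, 0..1
    age_hours: float


def score(item, half_life_hours=24.0, weights=(0.6, 0.25, 0.15)):
    # Recency decays exponentially: halves every `half_life_hours`.
    recency = 0.5 ** (item.age_hours / half_life_hours)
    w_rel, w_rec, w_imp = weights
    return w_rel * item.relevance + w_rec * recency + w_imp * item.importance


def select_memories(items, token_budget, count_tokens=lambda t: len(t.split())):
    """Greedily pick the highest-scoring memories that fit the token budget."""
    chosen, used = [], 0
    for item in sorted(items, key=score, reverse=True):
        cost = count_tokens(item.text)
        if used + cost <= token_budget:
            chosen.append(item)
            used += cost
    return chosen
```

Only the selected items would then be inserted into the prompt; everything else stays in the external store.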

Why are memory systems important for production AI applications? Memory systems are important because production AI applications need continuity, personalization, and efficient reuse of prior information across many interactions. Without memory, support agents repeat questions, coding assistants forget earlier requirements, and personalized assistants lose user preferences from one session to the next. Effective memory infrastructure reduces repeated prompt overhead, improves user experience, and supports more stable multi-session behavior in agents and copilots.

How should teams design memory systems for reliable context extension? Teams should design memory systems with memory hierarchies, relevance scoring, conflict resolution, token budgeting, and cleanup mechanisms that prevent drift and contamination. Strong systems separate temporary session memory from durable long-term memory, retrieve only high-value items for the current task, and regularly consolidate, summarize, or expire stale content. This design keeps memory useful, bounded, and aligned with the current reasoning objective instead of turning stored history into uncontrolled context bloat.

What is the Lost in the Middle Problem?

The lost in the middle problem is a position bias in which large language models perform best on information placed at the beginning or end of a long context, while performance declines when relevant information appears in the center of the prompt. This context position bias creates a distinctive U-shaped performance curve, where primacy and recency outperform the middle of the sequence. Research in “Lost in the Middle: How Language Models Use Long Contexts” showed that long-context models often underuse relevant information placed in the middle, even when that information is fully inside the context window.

How does the lost in the middle problem appear in real model behavior? The lost in the middle problem appears as a measurable accuracy drop when the answer-bearing content sits in the middle of a long retrieved context. In multi-document question answering tests, GPT-3.5-Turbo showed materially lower accuracy when the relevant document appeared in middle positions than when it appeared at the beginning or end, and the same U-shaped pattern persisted across models with larger advertised context windows. This result shows that a larger context window does not remove the underlying attention distribution problem.

Why does this problem matter in real-world AI workflows? The lost in the middle problem matters because production agents can correctly use executive summaries and final conclusions while missing critical requirements buried in the middle of documents, threads, or tool outputs. In practical workflows, an agent may capture the opening business goal and the final pricing table but miss technical constraints, implementation details, or exceptions placed in the middle. This failure creates unreliable outputs in document-heavy tasks such as proposal generation, legal review, technical support, research synthesis, and multi-step agent execution.

What causes middle context performance to degrade? Middle context performance degrades because transformer models do not distribute attention uniformly across long sequences. Decoder-only architectures, causal masking, positional encoding behavior, and training patterns all contribute to a bias toward earlier and later tokens. Middle tokens sit too far from the strongest primacy and recency zones, which makes them easier for the model to overlook, even though they remain technically available in context.

How can teams reduce the lost in the middle problem? Teams reduce the lost in the middle problem by restructuring context around importance instead of original document order and by explicitly marking critical information. Effective methods include context reordering, moving the most important evidence closer to the front of the context, adding attention guidance markers such as [CRITICAL] or [REFERENCE], repeating essential constraints near the beginning or end, and using priority scoring systems that rank retrieved items before prompt assembly. Query-aware contextualization and long-context reordering techniques improve the chance that relevant evidence lands in positions the model uses more reliably.
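Context reordering can be sketched as follows, assuming the retrieved items are already ranked from most to least relevant. The function names are hypothetical, and the interleaving rule (best item first, second-best last, weakest items in the middle) is one simple reordering among several possible.

```python
def reorder_for_edges(docs_ranked):
    """Place top-ranked items at the edges and the weakest in the middle.

    `docs_ranked` is ordered most relevant first.
    """
    front, back = [], []
    for i, doc in enumerate(docs_ranked):
        # Even ranks fill the front, odd ranks fill the back.
        (front if i % 2 == 0 else back).append(doc)
    return front + back[::-1]


def mark_critical(text):
    # Attention guidance marker, as described in the text above.
    return f"[CRITICAL] {text}"
```

For five ranked items `a` through `e`, the resulting order is `a, c, e, d, b`, so the two least relevant items sit in the center of the prompt.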

How Does Context Isolation Work in Multi-Agent Systems?

Context isolation in multi-agent systems works by giving each agent only the information relevant to its role instead of sharing the full system context across all agents. This design prevents context bloat, improves reasoning quality, and reduces token consumption during complex workflows. In isolated systems, each agent operates with a focused context window tailored to its task, which improves attention efficiency and prevents unrelated information from interfering with decision-making.

Context isolation is commonly used in agent orchestration frameworks where tasks are decomposed and delegated across specialized agents. It is a foundational principle in modern Agentic AI architectures.

How does role-based context filtering improve multi-agent performance? Role-based context filtering improves multi-agent performance by assigning each agent a specialized context that matches its function within the workflow. For example, a research agent receives the user query, search tools, and evaluation criteria required to collect information. An analysis agent receives the summarized findings along with analytical frameworks for evaluating evidence. A synthesis agent receives the analyzed insights plus formatting rules for generating the final output. By separating information according to role, each agent focuses only on the data required to complete its task, which significantly improves reasoning clarity and reduces unnecessary token usage.

How do agents communicate without sharing entire contexts? Agents communicate through structured outputs rather than sharing full conversation histories or internal context windows. Instead of passing raw prompts between agents, systems exchange structured messages such as JSON objects, schema-defined responses, or task summaries. These outputs contain only the necessary results of the previous step, such as extracted facts, ranked evidence, or processed insights. Structured communication ensures that downstream agents receive distilled information rather than large volumes of intermediate reasoning, which keeps context windows small and prevents information overload.
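A structured handoff might look like the sketch below. The `ResearchHandoff` schema and its field names are hypothetical; the point is that only distilled results cross the agent boundary, and that unexpected fields are rejected rather than silently passed downstream.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ResearchHandoff:
    task_id: str
    facts: list[str]
    sources: list[str] = field(default_factory=list)


def to_message(handoff: ResearchHandoff) -> str:
    # Serialize only the schema fields; no raw prompts or reasoning traces.
    return json.dumps(asdict(handoff))


def from_message(raw: str) -> ResearchHandoff:
    # Unknown or missing keys raise TypeError, acting as a basic schema check.
    return ResearchHandoff(**json.loads(raw))
```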

How does state-based context isolation control information flow? State-based context isolation controls information flow by storing system data in structured state schemas where only specific fields are exposed to the LLM at each step. In this architecture, the application maintains a shared state object that stores information such as user goals, retrieved documents, reasoning outputs, and intermediate results. When an agent runs, the orchestration layer selectively exposes only the fields relevant to that agent’s task. This selective exposure prevents agents from accessing unrelated context while ensuring they still receive the information required to complete their responsibilities.
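The selective exposure described above can be illustrated with a small sketch. The agent names mirror the earlier research, analysis, and synthesis example; the state keys and the `AGENT_FIELDS` mapping are assumptions made for illustration, not the API of any particular orchestration framework.

```python
# Hypothetical mapping from agent role to the state fields it may see.
AGENT_FIELDS = {
    "research": ("user_goal", "search_results"),
    "analysis": ("user_goal", "findings_summary"),
    "synthesis": ("analysis", "format_rules"),
}


def view_for(agent: str, state: dict) -> dict:
    """Return only the state fields exposed to the given agent."""
    return {key: state[key] for key in AGENT_FIELDS[agent] if key in state}
```

The orchestration layer would build each agent's prompt from `view_for(agent, state)` rather than from the full state object.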

How do observation masking and LLM summarization manage agent context growth? Observation masking and LLM summarization are two techniques used to control context growth in multi-agent systems. Observation masking hides older or lower-priority information from the active context while preserving it in external memory or logs. This method reduces token usage without modifying the underlying data. LLM summarization compresses older context into shorter summaries that preserve important decisions and results while removing redundant details. In practice, many production systems combine both methods, masking routine observations while summarizing complex reasoning traces to maintain efficient context windows across long-running agent workflows.
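Observation masking in particular is simple to sketch. The step format (dictionaries with `type` and `content` keys), the placeholder text, and the `keep_last` parameter are assumptions for illustration; the original history is left untouched so it can still be written to logs or external memory.

```python
def mask_observations(history, keep_last=2,
                      placeholder="[observation omitted; stored in log]"):
    """Replace all but the most recent `keep_last` observations with a placeholder."""
    obs_idx = [i for i, step in enumerate(history) if step["type"] == "observation"]
    to_mask = set(obs_idx[:-keep_last]) if keep_last > 0 else set(obs_idx)
    # Build new dicts for masked steps so the input list is not modified.
    return [
        {**step, "content": placeholder} if i in to_mask else step
        for i, step in enumerate(history)
    ]
```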

What Are the Best Practices for Context Window Optimization in Production?

Context window optimization in production requires combining multiple techniques such as session control, token monitoring, retrieval pipelines, compression, and observability. No single method solves context limitations on its own. Production systems manage context by controlling how information enters the prompt, measuring token usage, and limiting unnecessary context expansion across conversations and agent workflows.

1. Start new sessions for each new task

Starting a new session for each task prevents unrelated context from entering the model prompt. Long conversations accumulate tokens from earlier requests, which can introduce irrelevant information and reduce reasoning accuracy. Resetting the session ensures that only task-specific instructions and data occupy the context window.

2. Monitor token consumption patterns

Monitoring token usage reveals how context is actually consumed across requests and workflows. Production teams track metrics such as average tokens per request, peak token usage, token distribution across conversation lengths, and which prompt components consume tokens (system prompt, retrieval context, chat history, tool outputs). These measurements identify where context growth occurs.
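A per-component breakdown can be computed with very little code. In this sketch the component names are examples, `token_breakdown` is a hypothetical helper, and the default counter is a whitespace split standing in for the model's actual tokenizer.

```python
def token_breakdown(components, count_tokens=lambda text: len(text.split())):
    """Return per-component token counts, the total, and each component's share."""
    counts = {name: count_tokens(text) for name, text in components.items()}
    total = sum(counts.values())
    shares = {name: (n / total if total else 0.0) for name, n in counts.items()}
    return counts, total, shares
```

Logging these three values per request is usually enough to see whether growth comes from chat history, retrieval context, or tool outputs.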

3. Benchmark effective context length

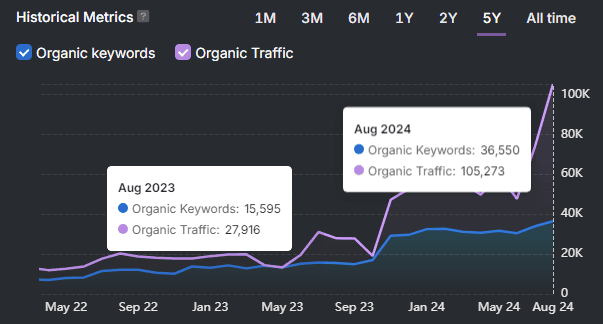

Benchmarking identifies the context length where model performance begins to decline. Many models advertise large context windows, but practical reasoning performance can decrease well before the advertised limit, in some reports once prompts exceed roughly 32K tokens, and the threshold varies by model and task. Testing real workloads helps determine the usable context range for a given model and application.
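A benchmark of this kind can start as a small "needle in filler" harness. The sketch below is an assumption-laden starting point: `ask` is a hypothetical callable that sends a prompt to whatever model is being tested and returns its text answer, the filler sentence and question are placeholders, and real evaluations should use representative workloads rather than synthetic filler.

```python
def build_prompt(needle, filler_sentence, n_filler, position):
    """Insert `needle` at a relative position (0.0 = start, 1.0 = end) in filler text."""
    filler = [filler_sentence] * n_filler
    idx = int(position * n_filler)
    return " ".join(filler[:idx] + [needle] + filler[idx:])


def benchmark(ask, lengths, positions, needle, expected,
              filler_sentence="The sky was grey that day.",
              question="\nQuestion: what is the access code?"):
    """Return accuracy per context length, averaged over needle positions."""
    results = {}
    for n in lengths:
        hits = 0
        for p in positions:
            prompt = build_prompt(needle, filler_sentence, n, p) + question
            hits += expected in ask(prompt)
        results[n] = hits / len(positions)
    return results
```

Plotting accuracy against length (and against position) shows where the usable range ends for a given model.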

4. Combine multiple context optimization strategies

Production systems typically combine retrieval pipelines, compression methods, and selective context injection. Retrieval-Augmented Generation (RAG) limits the amount of external knowledge inserted into prompts. Prompt compression reduces token usage. Selective context injection ensures agents or tasks receive only the information needed for the current step.

5. Implement context observability

Context observability is the practice of tracing what information enters the prompt at each step of a workflow. Systems log token counts, prompt composition, and context boundaries so teams can detect overflow conditions, irrelevant retrieved documents, or missing evidence during agent execution.

6. A/B test retrieval pipelines versus long-context approaches

A/B testing determines whether targeted retrieval or large prompt contexts produce better results. Teams measure accuracy, latency, and token cost across both approaches. In many applications, retrieval pipelines with smaller prompts outperform large-context prompting in both cost and reliability.

Context window optimization in production is an iterative process. Teams continuously measure token usage, evaluate retrieval quality, adjust compression thresholds, and refine context injection strategies as workloads and models evolve.

How Do You Balance Context Window Performance Against Token Costs?

Balancing context window performance against token costs requires controlling how much information enters the prompt while maintaining enough context for accurate reasoning. Token consumption directly determines API costs because providers charge for every input and output token processed. Longer prompts increase billed cost roughly in proportion to token count while also increasing computational load and latency. Production systems treat context size as a constrained resource, optimizing how context is selected, structured, and reused rather than simply increasing prompt length.

Why does token consumption strongly affect operational cost? Token consumption directly maps to billing and system throughput, which means longer prompts immediately increase cost per request. Every additional token in the prompt increases inference cost and memory usage during model execution. In many deployments, conversation history must be re-sent with each request because models are stateless, causing token usage to grow with every interaction. For example, document-heavy prompts or long chat histories can multiply token costs if the entire context is repeatedly transmitted.
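The effect of re-sending history can be made concrete with a short calculation. This sketch assumes a simplified session in which every turn adds a fixed number of tokens to the history and the whole history is re-sent each time; the price passed to `session_cost` is a placeholder, not any provider's actual rate.

```python
def session_input_tokens(system_tokens, tokens_per_turn, turns):
    """Total input tokens billed across a session when full history is re-sent."""
    total = 0
    history = 0
    for _ in range(turns):
        history += tokens_per_turn          # this turn's content joins the history
        total += system_tokens + history    # the whole prompt is sent again
    return total


def session_cost(input_tokens, price_per_million):
    # `price_per_million` is a hypothetical input-token price.
    return input_tokens / 1_000_000 * price_per_million
```

With a 500-token system prompt and 200 tokens added per turn, a 10-turn session sends 16,000 input tokens in total, compared with 2,500 if only the system prompt and the latest message were sent once. The history term grows with the square of the number of turns, which is why long sessions become disproportionately expensive.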

How should teams measure cost per conversation or user session? Teams balance cost and performance by tracking cost per conversation and cost per user session rather than evaluating individual requests in isolation. Measuring tokens per session reveals how context accumulates across multi-turn conversations and agent workflows. This allows teams to identify expensive patterns such as repeated document insertion, overly long system prompts, or unnecessary conversation replay.

What Are the Current Context Window Sizes Across Major LLMs?

Context window size refers to the maximum number of tokens a model can process in a single interaction, including the prompt, retrieved context, conversation history, and the generated response. Context windows vary significantly across models, depending on architecture and optimization techniques. While some models advertise extremely large windows, practical performance often depends on how effectively the model uses tokens inside that window rather than the raw maximum size.

What context window sizes are available in major commercial LLMs? Major LLM providers offer context windows ranging from tens of thousands to millions of tokens. The table below summarizes commonly referenced context limits across widely used models.

| Model | Provider | Approximate Context Window |
|---|---|---|
| GPT-4 Turbo | OpenAI | ~128K tokens |
| GPT-4o | OpenAI | ~128K tokens |
| Claude 3 Opus / Sonnet | Anthropic | ~200K tokens |
| Claude 3.5 / Claude 4 family | Anthropic | ~200K tokens |
| Gemini 1.5 Pro | Google DeepMind | Up to ~1M tokens (experimental up to ~2M) |
| Gemini 1.5 Flash | Google DeepMind | ~1M tokens |
| Llama 3 (8B / 70B) | Meta | ~8K tokens |
| Llama 3.1 | Meta | Up to ~128K tokens |
| Mistral Large | Mistral AI | ~32K tokens |
| Mixtral models | Mistral AI | ~32K tokens |

These numbers represent the maximum supported input context, not necessarily the effective context length for reliable reasoning.

Why does the advertised context window size differ from the effective context length? Advertised context window size indicates the technical maximum, but effective context length is the range where models maintain reliable reasoning and retrieval accuracy. Research benchmarks show that many models experience performance degradation as prompts approach the upper limit of the context window. Problems such as the lost-in-the-middle effect, attention dilution, and increased token noise reduce the model’s ability to identify relevant information in very long contexts.

How do large context windows influence AI system design? Large context windows allow models to process longer documents, larger codebases, and extended conversations without external retrieval systems. However, long contexts increase token costs, latency, and memory requirements. Because of these trade-offs, many production systems still rely on retrieval pipelines, compression strategies, and selective context injection rather than simply filling the entire context window.

Why do production systems rarely use the full context window? Production AI systems rarely fill the entire context window because longer prompts increase cost and often reduce reasoning quality. Instead, systems aim to keep prompts focused by retrieving only relevant information, compressing context when necessary, and structuring prompts so critical information appears in positions where models process it more reliably.

How do incremental loading and adaptive caching reduce token costs? Incremental loading and adaptive caching reduce token usage by sending only the necessary context instead of repeatedly transmitting the full prompt state. Incremental loading retrieves additional context only when required, such as pulling document sections on demand rather than inserting the entire file. Adaptive caching stores previously processed prompt segments or model outputs so identical or similar requests can reuse earlier results instead of triggering new model inference.
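The caching half of this idea can be sketched with an exact-match response cache. The `ResponseCache` class is hypothetical, and treating prompts as "similar" when they differ only in case and whitespace is a deliberately narrow assumption; semantic matching would need an embedding-based lookup instead.

```python
import hashlib


class ResponseCache:
    """Reuse earlier results for prompts that match after light normalization."""

    def __init__(self):
        self._store = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Assumption: case and whitespace differences do not change the request.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_or_compute(self, prompt, compute):
        key = self._key(prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = compute(prompt)  # only called on a cache miss
        self._store[key] = result
        return result
```

Here `compute` stands for the model call; tracking `hits` and `misses` shows how much inference the cache actually avoids.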

Why should costs be analyzed by application type? Breaking down token expenses by application type helps identify which workflows require context optimization. Different AI workloads use context windows differently. Chatbots accumulate conversation history, document analysis systems insert large text segments, and code generation tools often include codebases or tool outputs. Measuring token usage across these categories reveals where context growth occurs and where optimization will have the largest cost impact.

Why should cost monitoring be integrated into the evaluation pipeline from the beginning? Cost monitoring should be integrated into the evaluation pipeline so token usage can be measured at every workflow step. Tracking tokens per stage (retrieval, reasoning, tool output, and final generation) makes it possible to identify inefficiencies early. Production teams often log token counts per request, per tool call, and per agent step to ensure that context expansion does not silently increase operational costs as the system evolves.

What are the Limitations of Context Window Optimization?

Context window optimization has several limitations because it cannot overcome fundamental constraints of transformer architectures, token budgets, and retrieval systems. Even with techniques such as compression, retrieval, and context isolation, large language models still face limits related to attention scaling, cost growth, information loss, and reasoning degradation in long prompts.

The main limitations of context window optimization are listed below.

- Fixed token capacity. Context window optimization cannot exceed the maximum token limit defined by the model architecture. Each LLM has a fixed context window, which restricts how much text can be processed in a single interaction, regardless of optimization strategies.

- No inherent long-term memory. Large language models are stateless systems that forget information between requests unless context is reintroduced. Context optimization reduces token usage but does not create persistent memory without external systems such as vector databases or memory stores.

- Attention mechanism scaling limits. Transformer attention has quadratic complexity O(n²), meaning compute requirements increase rapidly as context grows. Larger prompts require significantly more processing power and memory.

- Performance degradation in long contexts. LLM accuracy often declines when prompts become very long. This occurs because attention becomes diluted across many tokens, which can reduce the model’s ability to identify the most relevant information.

- The “lost in the middle” problem. Language models show context position bias, where information at the beginning or end of the prompt receives more attention than content in the middle. This reduces the effective usefulness of large context windows.

- Increased computational costs. Token consumption directly increases inference costs because providers charge per token processed. Larger contexts lead to higher operational costs at scale.

- Slower response times. Processing long prompts increases inference latency because models must compute attention across more tokens, which slows response generation in real-time applications.

- Information loss from optimization methods. Optimization techniques introduce trade-offs. Summarization may remove important details, and chunking documents can break the global context across sections.

- Architectural complexity of retrieval systems. Retrieval-augmented generation improves context efficiency but introduces additional components such as vector databases, reranking pipelines, and metadata filtering, increasing system complexity.

- Tokenization inefficiencies. Tokenization can inflate token counts for structured data, code, or languages that the tokenizer's vocabulary covers poorly. This reduces the usable portion of the context window and increases costs.

- Training data limitations. Many LLMs are trained primarily on shorter documents. As a result, models may struggle to reason effectively across extremely long contexts even when technically supported.

- Context contamination and noise. Including too much information in a prompt introduces irrelevant content. This context pollution can distract the model and lead to inaccurate or incoherent responses.

Context window optimization improves efficiency and reliability but does not eliminate the architectural, computational, and reasoning limitations of current LLM systems.
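As a rough worked example of the attention scaling limit listed above: standard full self-attention compares every token with every other token, so for a sequence of $n$ tokens the score matrix has $n^2$ entries. The symbol $d$ (the per-head dimension) is introduced here for illustration, and the estimate ignores optimizations such as sparse attention as well as the parts of the model that scale linearly.

```latex
\[
\text{cost}_{\text{attn}}(n) \;\propto\; n^{2} d
\quad\Longrightarrow\quad
\frac{\text{cost}_{\text{attn}}(128\text{K})}{\text{cost}_{\text{attn}}(8\text{K})}
= \left(\frac{128\text{K}}{8\text{K}}\right)^{2} = 16^{2} = 256
\]
```

Under these assumptions, a prompt 16 times longer requires roughly 256 times the attention compute, which is why latency and hardware cost rise much faster than prompt length.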

What are Context Poisoning, Context Distraction, and Context Confusion?

Context poisoning, context distraction, and context confusion are failures in how large language models manage information inside the context window, where incorrect, excessive, or irrelevant context degrades reasoning quality and response accuracy. These failures occur when the information inside the prompt interferes with the model’s ability to interpret instructions or select relevant knowledge.

What is context poisoning in large language models? Context poisoning occurs when incorrect or hallucinated information enters the context window and is reused in later reasoning steps as if it were factual input. Because models treat prior context as authoritative within a session, an early hallucination can propagate through the workflow. When the poisoned information is repeatedly referenced in subsequent prompts, the model may reinforce the error and generate increasingly incorrect outputs.

What is context distraction in LLM systems? Context distraction occurs when the context window becomes so large that the model focuses excessively on the provided context rather than reasoning from its training knowledge. Extremely long prompts containing large conversation histories or documents can overwhelm the model’s attention. As attention spreads across thousands of tokens, models may repeat previous information or fail to synthesize new insights.

What is context confusion in AI agents? Context confusion occurs when irrelevant documents, tools, or instructions appear in the context window and compete for the model’s attention. When too many tools or sources are present, the model may follow incorrect instructions or select the wrong tool. This happens because unrelated context introduces competing signals during the model’s reasoning process.

How can AI systems reduce context poisoning, distraction, and confusion? AI systems reduce these contextual failures by validating inputs, filtering retrieved data, and limiting the amount of information placed in the context window. Techniques such as retrieval filtering, context summarization, and role-based context isolation help ensure that only relevant information enters the prompt, which improves reliability and reasoning quality.
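Retrieval filtering, for instance, can be sketched as a threshold plus de-duplication step before prompt assembly. The `(text, score)` tuple format, the 0.5 threshold, and the cap of five chunks are illustrative assumptions; relevance scores are assumed to come from the retriever or a reranker.

```python
def filter_retrieved(chunks, min_score=0.5, max_chunks=5):
    """Keep the highest-scoring unique chunks above a relevance threshold.

    `chunks` is a list of (text, score) pairs.
    """
    seen = set()
    kept = []
    for text, score in sorted(chunks, key=lambda c: c[1], reverse=True):
        normalized = " ".join(text.lower().split())
        if score < min_score or normalized in seen:
            continue  # drop low-relevance and duplicate content
        seen.add(normalized)
        kept.append((text, score))
        if len(kept) == max_chunks:
            break
    return kept
```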

What Does the Future of Context Window Optimization Look Like?

The future of context window optimization focuses on improving how large language models process, retrieve, and manage long contexts rather than only increasing maximum token limits. Advances in model architecture, memory systems, and orchestration layers aim to enable models to handle millions of tokens while maintaining reasoning accuracy and reducing computational cost.

What architectural innovations will improve long-context processing? New model architectures are being developed to reduce the computational burden of processing large contexts. Traditional transformer attention scales quadratically with sequence length, which limits efficiency for very long prompts. Techniques such as sparse attention and hybrid memory architectures reduce this complexity by allowing models to focus attention only on the most relevant tokens rather than every token in the sequence.

How will extremely large context windows change AI capabilities? More models are expected to support context windows measured in millions of tokens, enabling analysis of entire books, codebases, or datasets within a single prompt. Some experimental systems report high retrieval accuracy across millions of tokens, although strong retrieval results do not by themselves guarantee equally reliable reasoning over the same span. These capabilities enable long-form reasoning tasks such as multi-document analysis, large-scale software debugging, and scientific research synthesis.

How will orchestration layers improve context management? Future AI systems will increasingly manage context outside the model through orchestration frameworks and agent architectures. Instead of placing all information directly into the prompt, orchestration layers dynamically retrieve, compress, and structure relevant data before passing it to the model. Techniques such as rolling context windows, automatic summarization, and retrieval pipelines help maintain efficient prompts while preserving important information.

What role will standardized context protocols play in AI systems? Standardized interfaces for connecting AI models to external knowledge sources are expected to improve context management. Protocols such as the Model Context Protocol (MCP) allow models to retrieve structured information from databases, tools, and knowledge systems without embedding the entire dataset inside the prompt.

How will hardware advancements influence context window optimization? Future hardware developments will expand the practical limits of long-context processing. Improvements in high-bandwidth memory and specialized AI accelerators increase the amount of data that models can process during inference. These hardware advances enable larger context windows while reducing latency and energy consumption.

How will context engineering shape the next generation of AI systems? Context engineering will become a central discipline in AI system design as models rely on structured context management to maintain accuracy at scale. Future systems will combine retrieval pipelines, compression techniques, caching strategies, and multi-agent coordination to control how information enters and leaves the context window. This approach allows AI systems to handle complex tasks while maintaining efficient token usage and reliable reasoning.