AI content detection refers to the process by which automated systems analyze text patterns to determine whether artificial intelligence generated the content. Avoiding AI content detection means producing AI-assisted content that reads naturally, reflects human expertise, and provides genuine value rather than publishing raw machine output. The same practices that make AI-assisted writing difficult for detectors to flag improve readability, credibility, and search performance because high-quality content satisfies Google’s quality signals and user expectations.

AI detectors and search engines flag raw AI output because large language models (LLMs) often generate predictable linguistic patterns. AI content detection systems identify signals such as repetitive phrasing, limited sentence variation, shallow explanations, and statistical uniformity across paragraphs. Search engines run a different kind of evaluation: their ranking and spam systems assess whether content demonstrates expertise, originality, and helpfulness. Google’s policies focus on identifying scaled content abuse, which refers to publishing large volumes of low-effort content with little originality or added value, rather than penalizing AI use itself.

Avoiding AI detection does not mean bypassing integrity safeguards or hiding automated generation. Legitimate AI-assisted writing focuses on improving clarity, factual accuracy, structure, and brand voice so that the final content reflects human editorial oversight and authentic expertise. Editing AI drafts, adding domain knowledge, and refining narrative flow transform generic outputs into authoritative resources that both readers and search systems evaluate positively.

The core principle behind making AI content undetectable is the same principle behind strong editorial content. Writers reduce AI detection risk when they apply human editing, expand context, introduce original insights, and structure information around real user questions. These improvements create semantic richness, varied sentence construction, and authentic reasoning that detection algorithms struggle to classify as purely machine-generated. As a result, the most reliable ways to avoid AI detection are the same practices that strengthen E-E-A-T signals, improve reader engagement, and support sustainable search visibility.

How Do AI Content Detectors Work?

AI content detectors are machine learning systems that analyze statistical language patterns to estimate the probability that artificial intelligence generated a piece of text. AI content detectors do not prove authorship. Instead, they evaluate linguistic signals such as predictability, sentence variation, and stylistic repetition to calculate how closely the writing resembles patterns produced by large language models. These systems return probabilistic scores rather than definitive conclusions, which explains why AI content detector accuracy varies with text length, editing level, and writing style.

How do AI detectors work when analyzing written content? AI detectors work by applying natural language processing (NLP) and machine learning classification models that scan text for patterns commonly associated with AI-generated language. The analysis focuses on statistical structures such as word predictability, repeated transitions, and uniform sentence construction rather than identifying the author. Classification algorithms compare these patterns against datasets containing both human-written and AI-generated text, which allows the system to estimate the likelihood of machine generation.
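The final step can be illustrated with a minimal sketch. The snippet below combines a few feature scores into a probability using a logistic function, the way a simple logistic-regression classifier would. The feature names, weights, and bias are hypothetical placeholders. Real detectors learn their parameters from labeled human and AI text and use far richer features.

```python
import math

# Hypothetical learned parameters. A real detector would fit these on a labeled
# corpus of human-written and AI-generated text.
WEIGHTS = {"low_perplexity": 2.0, "low_burstiness": 1.5, "connector_density": 1.0}
BIAS = -2.5


def ai_probability(features: dict[str, float]) -> float:
    """Map feature scores (each scaled to 0..1) to a probability in (0, 1)."""
    z = BIAS + sum(weight * features.get(name, 0.0) for name, weight in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))  # logistic (sigmoid) function


uniform_draft = {"low_perplexity": 0.9, "low_burstiness": 0.8, "connector_density": 0.6}
edited_draft = {"low_perplexity": 0.2, "low_burstiness": 0.1, "connector_density": 0.0}
print(round(ai_probability(uniform_draft), 2), round(ai_probability(edited_draft), 2))
```

The output is a likelihood, not a verdict. The first draft scores about 0.75 and the second about 0.12, and neither number says who actually wrote the text.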

What is perplexity in AI detection, and why does it matter? Perplexity is a statistical metric that measures how predictable a sequence of words is to a language model. Lower perplexity means more predictable text. AI-generated writing typically produces highly predictable word combinations because language models favor high-probability tokens during generation. Human writing shows more variability because human authors introduce unexpected phrasing, creative wording, and irregular sentence flow. Absolute perplexity values depend on the model and tokenizer used for scoring. Ranges sometimes quoted, such as 20 to 50 for human writing and 5 to 10 for LLM output, are therefore rough illustrations rather than fixed thresholds. Detectors rely on the fact that AI text tends to score lower than comparable human text under the same model, which makes predictability a key signal in AI detection.
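Formally, perplexity is the exponential of the average negative log-probability that a scoring model assigns to each token: PP = exp(-(1/N) * sum of log p(token_i | preceding tokens)). The sketch below assumes you already have those per-token probabilities from some language model; obtaining them is out of scope here. The probability values are made up for illustration.

```python
import math


def perplexity(token_probs: list[float]) -> float:
    """Perplexity = exp(mean negative log-probability per token)."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    mean_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(mean_neg_log)


# Hypothetical probabilities a scoring model assigned to each token in two snippets.
predictable = [0.6, 0.5, 0.7, 0.4]   # every word was a likely choice
surprising = [0.05, 0.3, 0.02, 0.1]  # several unlikely word choices
print(round(perplexity(predictable), 1), round(perplexity(surprising), 1))
```

Here the predictable snippet scores about 1.9 and the surprising one about 13.5. A useful sanity check: if every token has probability 1/k, perplexity is exactly k. Perplexity can therefore be read as the model's effective number of equally likely choices per word.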

What is burstiness in AI detection, and how does it distinguish human writing? Burstiness is a metric that measures variation in sentence length, structure, and complexity across a text. Human writing usually displays high burstiness because authors naturally alternate between short sentences and longer explanatory statements. AI-generated text often produces consistent sentence patterns with similar lengths and structures, which creates low burstiness. Detection systems analyze this variation to determine whether the writing resembles natural human rhythm or algorithmic generation.
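Detectors do not share one standard burstiness formula. A simple proxy, used here as an assumption, is the coefficient of variation of sentence length: the standard deviation of words per sentence divided by the mean. The sentence splitter is a rough regex heuristic, not a production tokenizer.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., !, or ? followed by whitespace, then count words per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (population std dev / mean)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The tool checks text. The tool scores text. The tool flags text."
varied = ("It failed. We reran the whole crawl overnight with a fresh proxy pool "
          "and logged every response. Same result.")
print(burstiness(uniform), round(burstiness(varied), 2))
```

Three four-word sentences score 0.0. The 2-, 15-, and 2-word sequence scores about 0.97, which is the kind of variation this proxy treats as human-like rhythm.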

How do classification algorithms identify common AI writing patterns? AI detection algorithms classify text by scanning for repeated linguistic structures, overused transitions, and generic phrasing patterns that appear frequently in AI-generated outputs. These systems flag signals such as repeated connectors like “Moreover” and “Furthermore,” stock openers like “In today’s digital landscape,” repetitive paragraph structures, and a lack of personal perspective. When these patterns appear consistently across a document, classification models increase the probability score that the content was generated by artificial intelligence.
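One rough way to quantify the connector signal in your own drafts is to measure what share of sentences open with stock phrases. This is an editorial heuristic built on assumed inputs (the phrase tuple holds only the examples named above). It is not a reconstruction of how any particular detector scores text.

```python
import re

# Stock connectors and openers named above; extend with your own list.
CONNECTORS = ("moreover", "furthermore", "in today's digital landscape")


def split_sentences(text: str) -> list[str]:
    """Rough split on ., !, or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]


def connector_density(text: str) -> float:
    """Share of sentences that open with one of CONNECTORS (case-insensitive)."""
    sentences = split_sentences(text)
    if not sentences:
        return 0.0
    # Normalize curly apostrophes (U+2019) so they match the straight ones in CONNECTORS.
    opens = [s.lower().replace("\u2019", "'") for s in sentences]
    return sum(s.startswith(CONNECTORS) for s in opens) / len(sentences)


draft = ("Moreover, the tool is fast. Furthermore, it is accurate. "
         "In today\u2019s digital landscape, speed matters. We tested it on 40 pages.")
print(connector_density(draft))
```

Three of the four sentences open with a stock phrase, so the draft scores 0.75.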

Do AI detectors rely on metadata or digital watermarks? Some AI content detectors examine metadata traces or watermark signals embedded during AI text generation. Certain generative systems experiment with watermarking techniques that embed statistical markers in the tokens they generate. However, metadata rarely survives copying or reformatting, and statistical watermarks weaken after editing or paraphrasing, which limits their reliability for detection.
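Watermarking schemes differ by vendor. One published design (Kirchenbauer et al., 2023, “A Watermark for Large Language Models”) nudges generation toward a pseudo-random “green list” of tokens, seeded by the previous token and a secret key. A detector then counts green tokens and computes a z-score. The sketch below shows only that detection arithmetic, using a toy hash-based green list. The key, the 0.25 green fraction, and the function names are assumptions, not any production system's API.

```python
import hashlib
import math

GAMMA = 0.25  # assumed share of the vocabulary on the "green list" at each step


def is_green(prev_token: str, token: str, key: str = "demo-key") -> bool:
    """Toy green-list test: a keyed hash of (previous token, token) decides membership."""
    digest = hashlib.sha256(f"{key}|{prev_token}|{token}".encode()).digest()
    return int.from_bytes(digest[:8], "big") / 2**64 < GAMMA


def watermark_z(green: int, total: int, gamma: float = GAMMA) -> float:
    """z-score of the observed green count against the gamma * total expected by chance."""
    return (green - gamma * total) / math.sqrt(total * gamma * (1 - gamma))


def score_tokens(tokens: list[str]) -> float:
    """Count green tokens (each judged against its predecessor) and return the z-score."""
    if len(tokens) < 2:
        raise ValueError("need at least two tokens")
    green = sum(is_green(prev, tok) for prev, tok in zip(tokens, tokens[1:]))
    return watermark_z(green, len(tokens) - 1)


# Unwatermarked text should land near z = 0; watermarked generation pushes z far above it.
print(watermark_z(25, 100), round(watermark_z(70, 100), 1))
```

The sketch also shows why editing weakens watermarks. Every replaced word is re-judged against the green list essentially by chance. That pulls the green count back toward the expected value and the z-score toward zero.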

How does AI content detection differ from Google’s SpamBrain evaluation? AI content detectors estimate whether a text resembles machine-generated writing, while Google’s SpamBrain system evaluates content quality and usefulness rather than AI origin. SpamBrain is Google’s spam detection system that identifies low-quality or manipulative content patterns such as scaled content abuse and low-effort pages. Google’s ranking systems focus on whether content provides original value, expertise, and helpful information instead of penalizing content purely because artificial intelligence assisted its creation.

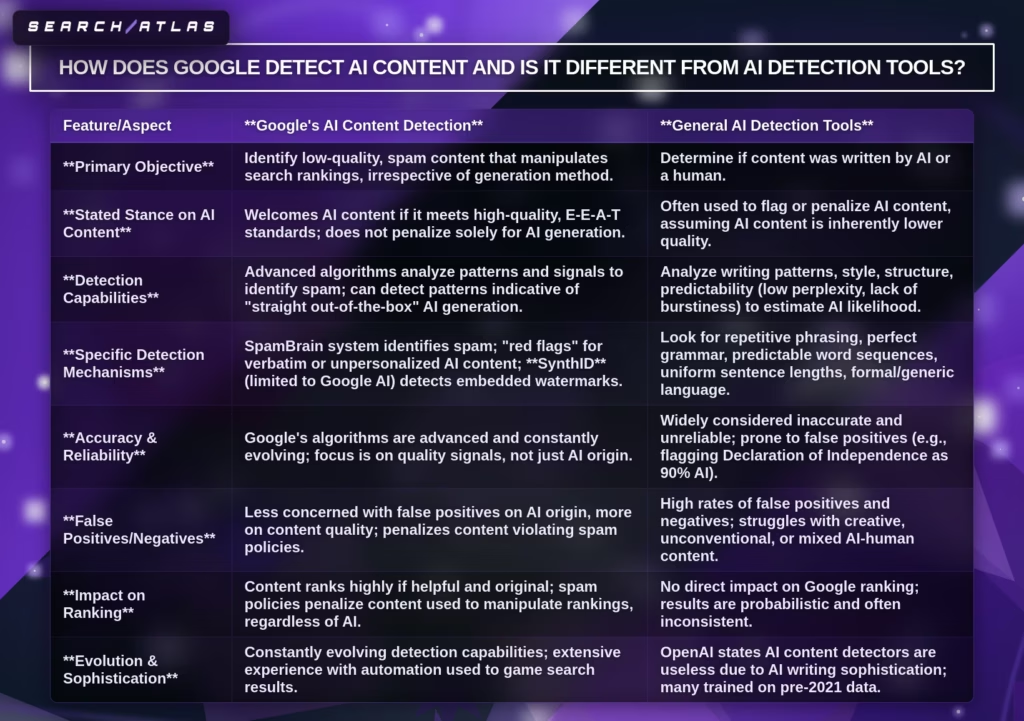

How Does Google Detect AI Content, and Is It Different from AI Detection Tools?

Google’s approach to detecting AI-generated content is fundamentally different from that of third-party AI detection tools: Google evaluates content quality and spam patterns rather than determining whether artificial intelligence created the text. Google’s systems focus on whether a page provides helpful, original information for users. Third-party AI detectors attempt to classify authorship by analyzing linguistic signals such as perplexity, burstiness, and repetitive phrasing.

What is the main difference between Google’s detection systems and AI detection tools? Google’s systems evaluate content usefulness and spam signals, while AI detection tools estimate the probability of machine-generated writing. AI detection tools analyze statistical language patterns to determine whether text resembles AI output. Google instead evaluates signals related to search quality, including originality, expertise, and whether the content attempts to manipulate rankings. Google’s spam detection system, known as SpamBrain, identifies patterns associated with low-quality or automated content created primarily to influence search results rather than to help users.

How does Google identify low-quality AI content if it does not use AI detection tools? Google identifies problematic AI content through spam and quality analysis rather than origin detection. Google’s ranking systems analyze signals such as duplicated text, thin content, repetitive publishing patterns, and pages created with little effort or added value. These signals indicate scaled content abuse, which refers to producing large volumes of low-value pages regardless of whether humans or AI generated them. Google penalizes these patterns because they degrade search quality.
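Google does not publish how its systems detect duplicated or templated pages. Site owners can still audit their own content with a standard near-duplicate technique. The technique breaks each page into overlapping word sequences (“shingles”) and compares the sets with Jaccard similarity. The shingle size and the example pages below are assumptions chosen for illustration.

```python
def shingles(text: str, k: int = 5) -> set[tuple[str, ...]]:
    """All overlapping k-word sequences in lowercased text."""
    words = text.lower().split()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: str, b: str, k: int = 5) -> float:
    """Shared shingles divided by total distinct shingles across both texts (0 to 1)."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa and not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


template = ("Our {city} plumbing team offers fast repairs, fair prices, "
            "and free quotes for every home in the area.")
page_a = template.format(city="Austin")
page_b = template.format(city="Dallas")
print(round(jaccard(page_a, page_b), 2))
```

Swapping only the city name leaves the two pages 75% identical by this measure. That is the kind of templated pattern worth rewriting before publishing at scale.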

What role does SpamBrain play in Google’s AI content evaluation? SpamBrain is Google’s machine learning spam detection system that identifies manipulative or low-quality content patterns across the web. Google has not published the exact signals SpamBrain uses. Its spam policies, however, target patterns that suggest automation at scale, such as mass-produced pages, template-driven articles, and content designed primarily for ranking manipulation. Google continues to update its spam systems to detect evolving techniques, including automated content generation.

Why are third-party AI detection tools considered unreliable? AI detection tools frequently produce false positives and false negatives because they rely on statistical probability rather than verified authorship signals. These tools classify writing as AI-generated when the text appears predictable or formulaic, which sometimes causes human-written documents to be flagged incorrectly. Published evaluations have found that detectors misclassify human writing more often when the text has a simple structure, formal tone, or consistent sentence patterns. A 2023 Stanford study, for example, reported that several popular detectors labeled essays by non-native English writers as AI-generated far more often than essays by native speakers.
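Base rates make the problem worse than a single error rate suggests. When most screened text is human-written, even a small false-positive rate produces a large share of wrong flags. The sketch applies Bayes' rule. All three input rates are hypothetical, not measurements of any real tool.

```python
def share_of_flags_that_are_human(human_share: float, false_positive_rate: float,
                                  true_positive_rate: float) -> float:
    """Fraction of flagged documents that were actually human-written (Bayes' rule)."""
    flagged_human = human_share * false_positive_rate
    flagged_ai = (1 - human_share) * true_positive_rate
    return flagged_human / (flagged_human + flagged_ai)


# Hypothetical: 90% of submissions are human, the detector wrongly flags 2% of
# human text, and it catches 95% of AI text.
print(round(share_of_flags_that_are_human(0.9, 0.02, 0.95), 2))
```

Under these assumptions, about 16% of flagged documents were written by humans, even though the detector errs on only 2% of human text.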

Does AI detection influence Google search rankings? No. AI detection scores from external tools have no direct impact on Google rankings because Google does not rely on those tools for evaluation. Google ranks content based on quality, relevance, and authority signals rather than whether AI assisted the writing process. Pages can perform well in search results when they provide accurate information, demonstrate expertise, and satisfy user intent, regardless of how the content was created.

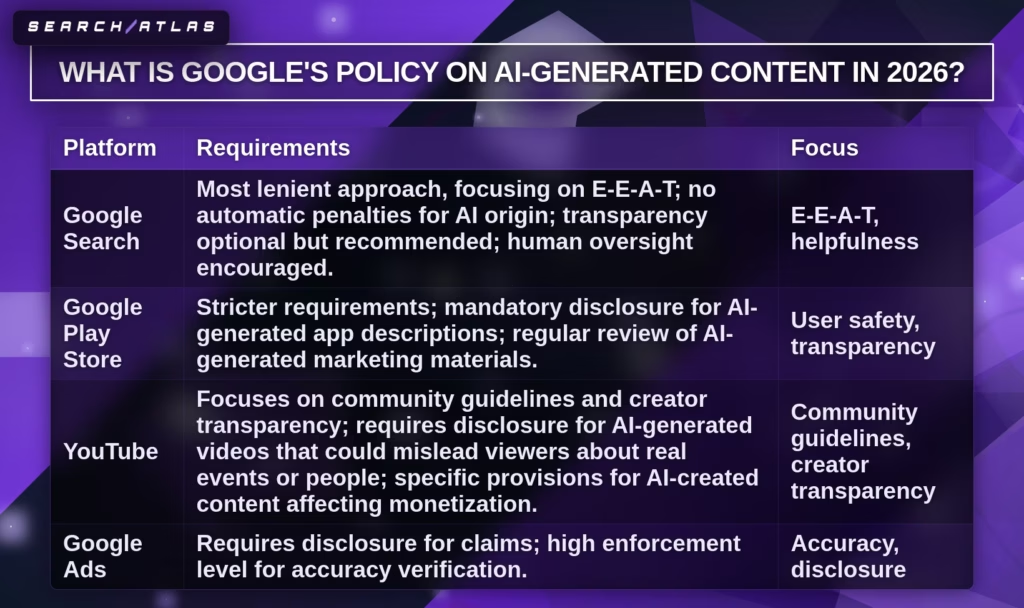

What Is Google’s Policy on AI-Generated Content in 2026?

Google’s policy on AI-generated content in 2026 states that Google does not penalize content simply because artificial intelligence generated it. Google’s ranking systems prioritize helpful, reliable, and people-first content regardless of the production method. Content created using AI can rank in search results when it demonstrates experience, expertise, authoritativeness, and trustworthiness (E-E-A-T) and provides real value to users.

What principles determine whether AI-generated content is acceptable to Google? Google accepts AI-assisted content when the content meets quality standards, serves user intent, and provides original value. Google’s official guidance emphasizes that content should help users rather than manipulate search rankings. The evaluation criteria behind this policy are explained in “Does Google Penalize AI Content,” which examines how Google applies spam policies and quality signals when evaluating AI-assisted pages.

What types of AI-generated content does Google penalize? Google penalizes AI-generated content when it violates spam policies such as scaled content abuse, thin pages, or manipulative ranking tactics. Pages that publish large quantities of repetitive or low-effort AI content without meaningful human input risk losing visibility or receiving manual actions. Google’s ranking systems target these patterns because they provide little value to users and degrade search quality.

Does using AI help or hurt search rankings by itself? Neither. Using artificial intelligence to create content neither helps nor harms rankings on its own because Google evaluates the final page quality rather than the writing method. Pages rank when they satisfy user intent, provide accurate information, and deliver unique insights. AI tools function best as editorial assistants that help writers research, structure, and refine content rather than replace human expertise.

Why Does Raw AI Content Get Flagged by Detectors and Google?

Raw AI content gets flagged by AI detection tools and Google systems because unedited AI outputs exhibit predictable linguistic, structural, and statistical patterns that differ from natural human writing. These signals include low perplexity, uniform sentence structure, repetitive phrasing, and limited originality. AI detection tools flag these signals to estimate machine-generated probability, while Google systems identify similar patterns when evaluating low-effort or scaled content that provides little value to users.

What patterns cause raw AI content to be flagged by AI detectors? AI detectors flag raw AI content because large language models generate statistically predictable language patterns that differ from human writing variability. Detection systems analyze linguistic signals such as low perplexity, low burstiness, repetitive transitions, and consistent syntax across paragraphs. Human writing typically shows greater unpredictability and variation in sentence rhythm, which makes highly uniform text appear algorithmic.

Why do predictable LLM patterns trigger AI detection systems? Predictable large language model (LLM) patterns trigger detection because AI models generate text by repeatedly predicting the next token and tend to favor high-probability choices. This probabilistic generation creates consistent syntax, clean transitions, and predictable word sequences. Detection systems identify these patterns because human authors more frequently introduce unexpected phrasing, stylistic shifts, and irregular sentence structures.

Why does the lack of originality or “information gain” cause Google systems to flag AI content? Google systems flag content when pages provide little originality or added value compared to existing search results. Google evaluates whether a page introduces new information, insights, or expertise beyond what already exists online. Pages that repeat generic explanations or summarize existing information without meaningful expansion risk being classified as low-effort or scaled content.

Why do repetitive phrasing and template structures signal automated content? Repetitive phrasing and templated structures can indicate automation because AI models frequently reuse common transitions, paragraph formats, and generic language patterns. Examples include repeated connectors such as “Furthermore,” “Moreover,” or “In today’s digital landscape.” When these patterns appear across multiple sections, detection systems interpret them as signals of automated text generation.
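Templated structure often shows up as sentences that start the same way. The sketch below counts repeated sentence openers in a draft. The two-word opener length and the threshold of three repeats are arbitrary assumptions to tune for your own content.

```python
import re
from collections import Counter


def repeated_openers(text: str, words: int = 2, min_count: int = 3) -> dict[str, int]:
    """Return sentence openers (first `words` words, lowercased) seen at least `min_count` times."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    openers = Counter(" ".join(s.lower().split()[:words]) for s in sentences)
    return {opener: n for opener, n in openers.items() if n >= min_count}


draft = "This tool tracks rankings. This tool audits links. This tool writes briefs. Teams like it."
print(repeated_openers(draft))
```

In this draft, three of the four sentences begin with “this tool,” so that opener is reported for rewriting.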

Why does uniform sentence structure make AI content easier to detect? Uniform sentence structure increases detection probability because AI-generated writing often maintains consistent sentence length and grammatical rhythm. Human writing normally alternates between short statements, complex explanations, and stylistic variations. Detection algorithms measure this variation through burstiness metrics, which reveal whether sentence patterns follow natural human rhythm or machine consistency.

Why does publishing AI content without human editing increase the risk of detection? Publishing AI content without human editorial refinement increases detection risk because raw AI outputs often lack personal voice, contextual reasoning, and domain expertise. Human editors introduce narrative variation, domain knowledge, and real-world examples that break statistical AI patterns. Editorial oversight reduces detection signals while simultaneously improving readability, credibility, and search performance.

How to Write AI-Assisted Content That Reads Naturally?

AI-assisted content reads naturally when it comes out of a human-led editorial workflow in which artificial intelligence supports research and drafting while human editors add expertise, verification, and voice. The content becomes natural when human writers restructure language, inject real experience, verify facts, and align the text with brand voice. This process removes predictable AI patterns and produces readable, authoritative content.

A natural AI content workflow is a structured process where AI produces a draft, and humans refine the text through voice alignment, factual validation, and manual rewriting. Human editors control tone, structure, and originality while AI accelerates research and draft generation. This workflow improves readability and reduces the statistical patterns that trigger AI content detection.

1. Build a Brand Voice Brief Before Prompting AI

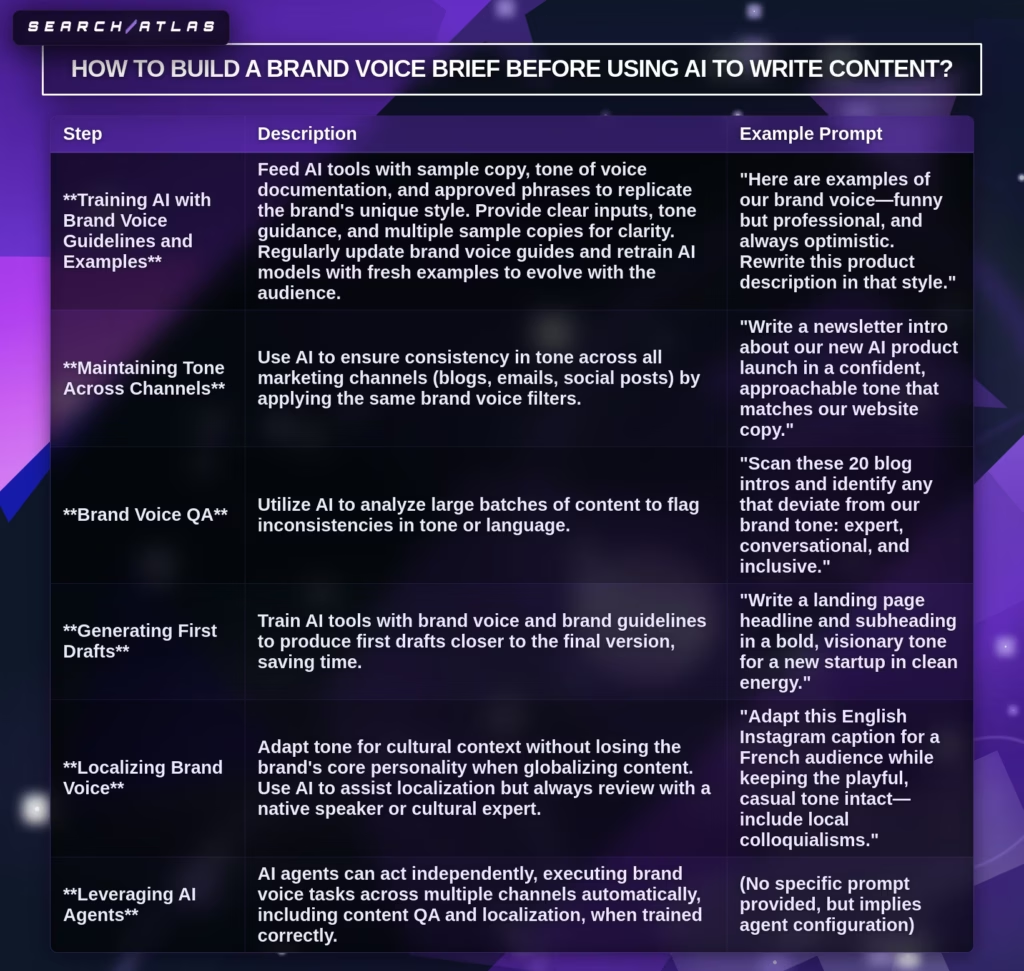

A brand voice brief is a documented specification that defines how a brand communicates through tone, vocabulary, sentence rhythm, and editorial perspective. Brand voice briefs guide AI systems so that generated drafts reflect a recognizable identity instead of generic language. A brand voice brief improves consistency across articles and prevents neutral AI phrasing.

How do writers create a brand voice brief for AI-assisted content? Writers create a brand voice brief by documenting personality, perspective, and tone rules that define the communication style of the brand. Personality explains how the brand speaks to the audience. Perspective explains the brand’s point of view within the industry. Tone defines grammar style, vocabulary preferences, and sentence structure.
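Keeping the brief as structured data makes it easy to reuse in every prompt. The sketch below is a minimal example. The field names, the prompt wording, and the sample brand are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class VoiceBrief:
    """Hypothetical brand voice brief: personality, perspective, and tone rules."""
    personality: str
    perspective: str
    tone_rules: list[str] = field(default_factory=list)
    avoid_terms: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the brief as plain-text instructions to place before a drafting prompt."""
        lines = [
            f"Personality: {self.personality}",
            f"Perspective: {self.perspective}",
            "Tone rules:",
            *(f"- {rule}" for rule in self.tone_rules),
        ]
        if self.avoid_terms:
            lines.append("Avoid these terms: " + ", ".join(self.avoid_terms))
        return "\n".join(lines)


brief = VoiceBrief(
    personality="Plain-spoken SEO practitioner talking to peers",
    perspective="Data beats opinion; every claim needs evidence",
    tone_rules=["Use second person", "Vary sentence length", "No exclamation marks"],
    avoid_terms=["delve into", "pivotal", "game-changing"],
)
print(brief.to_prompt())
```

Rendering the brief the same way every time keeps personality, perspective, and tone rules identical across writers and prompts.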

2. Use SERP-Informed, Voice-Specific AI Prompts

SERP-informed prompts are structured instructions that guide AI models using search intent, ranking patterns, and audience context. SERP-informed prompts help AI generate content that matches how users search and how search engines structure high-ranking pages. These prompts reduce generic AI output.

How do writers build effective SERP-informed prompts? Writers build SERP-informed prompts by defining the AI role, the target audience, and the required output structure. Role definition instructs the AI to respond as a subject expert. Audience context defines knowledge level and reading style. Output structure defines headings, paragraph length, and formatting expectations.
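A prompt builder keeps the three parts explicit and consistent. In this sketch, the function, its parameters, and the example SERP notes are hypothetical. The SERP observations are assumed to come from a person reviewing the current results, not from any particular tool.

```python
def build_prompt(topic: str, role: str, audience: str, headings: list[str],
                 serp_notes: list[str], max_sentences: int = 4) -> str:
    """Assemble a prompt from role, audience, SERP observations, and output structure."""
    parts = [
        f"You are {role}.",
        f"Topic: {topic}.",
        f"Audience: {audience}.",
        "What current top-ranking pages cover (gathered manually from the SERP):",
        *(f"- {note}" for note in serp_notes),
        "Use exactly these headings, in order:",
        *(f"## {heading}" for heading in headings),
        f"Keep every paragraph to {max_sentences} sentences or fewer.",
    ]
    return "\n".join(parts)


prompt = build_prompt(
    topic="crawl budget",
    role="a technical SEO consultant with ten years of site-audit experience",
    audience="in-house marketers who know basic SEO but not server logs",
    headings=["What Is Crawl Budget?", "How Do You Check Crawl Stats?"],
    serp_notes=["Top results define the term in the first paragraph",
                "Most include a checklist of fixes"],
)
print(prompt)
```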

3. Inject Original Data, Examples, and First-Person Experience

Original insights improve AI-assisted content because original information creates information gain that distinguishes the article from generic summaries. Large language models reproduce patterns from training data, but human authors provide real-world observations and tested knowledge.

What types of original information should writers add to AI drafts? Writers add original data, case studies, real campaign examples, and personal experience to strengthen expertise signals. These additions demonstrate practical knowledge and increase credibility for readers. Real examples also improve content clarity because they translate abstract explanations into practical outcomes.

4. Rewrite Manually for Burstiness, Perplexity, and Sentence Variety

Burstiness is the variation of sentence length and structure, while perplexity measures the unpredictability of word selection in text. Human writing shows natural variation between short and long sentences. AI writing often produces uniform sentence patterns that appear predictable.

How do writers manually increase burstiness in AI-generated drafts? Writers increase burstiness by mixing short statements with longer explanations and by replacing repetitive AI transitions with natural phrasing. Editing also removes overly formal connectors and introduces conversational rhythm. Reading the text aloud helps identify mechanical phrasing.
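Editors can speed up the rhythm pass by locating stretches where sentence length barely changes. The sketch only flags those runs for a human to rewrite. The tolerance of three words and the minimum run of three sentences are assumptions.

```python
import re


def flat_runs(text: str, tolerance: int = 3, min_run: int = 3) -> list[list[str]]:
    """Find runs of consecutive sentences whose word counts differ by at most `tolerance`."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    runs: list[list[str]] = []
    current = sentences[:1]
    for prev, cur in zip(sentences, sentences[1:]):
        if abs(len(cur.split()) - len(prev.split())) <= tolerance:
            current.append(cur)  # rhythm unchanged: extend the current run
        else:
            if len(current) >= min_run:
                runs.append(current)
            current = [cur]  # rhythm changed: start a new run
    if len(current) >= min_run:
        runs.append(current)
    return runs


draft = ("The platform tracks keyword rankings daily. The dashboard shows traffic trends clearly. "
         "The report lists technical issues found. Fix them.")
for run in flat_runs(draft):
    print(len(run), "similar-length sentences in a row, starting:", run[0])
```

The three six-word sentences form one flagged run. The short closing sentence breaks the pattern.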

5. Fact-Check Every Claim and Remove Hallucinations

AI hallucination refers to fabricated facts, statistics, or citations generated by artificial intelligence models during text generation. Large language models predict word sequences rather than verifying factual accuracy. This limitation creates convincing but incorrect statements.

How do editors verify claims in AI-assisted content? Editors verify AI-generated information by checking statistics, confirming sources, validating citations, and reviewing dates for accuracy. Each factual claim should match a credible external source. Subject matter experts should review technical content to ensure correctness.
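A simple triage step is to pull out every sentence that contains a number, a percentage, or an attribution, so that nothing checkable slips through. The regex patterns are assumptions. The function only lists candidates; an editor still has to match each one to a credible source.

```python
import re

# Heuristic markers of checkable claims: digits, percentages, and attribution words.
CLAIM_MARKERS = re.compile(
    r"\d|%|\baccording to\b|\bstud(?:y|ies)\b|\bsurvey\b|\breport(?:s|ed)?\b",
    re.IGNORECASE,
)


def claims_to_verify(text: str) -> list[str]:
    """Return sentences that likely contain a factual claim needing a source."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]
    return [s for s in sentences if CLAIM_MARKERS.search(s)]


draft = ("Page load time dropped by 40% after the migration. Clear headings help readers. "
         "According to one survey, most visitors skim.")
for claim in claims_to_verify(draft):
    print("CHECK:", claim)
```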

6. Run AI-Assisted Content Through a Detector as a Quality Checkpoint

AI detection tools act as diagnostic systems that identify machine-like language patterns in text. Detection systems highlight repetitive phrasing, predictable sentence structure, and overly consistent syntax. Editors use this feedback to locate sections that require rewriting.

Why should AI detectors not be used as pass or fail tests? AI detectors produce probabilistic estimates rather than definitive authorship verification. Detection scores vary between tools and datasets. Content teams should use detector feedback as an editing signal instead of a publishing decision rule.

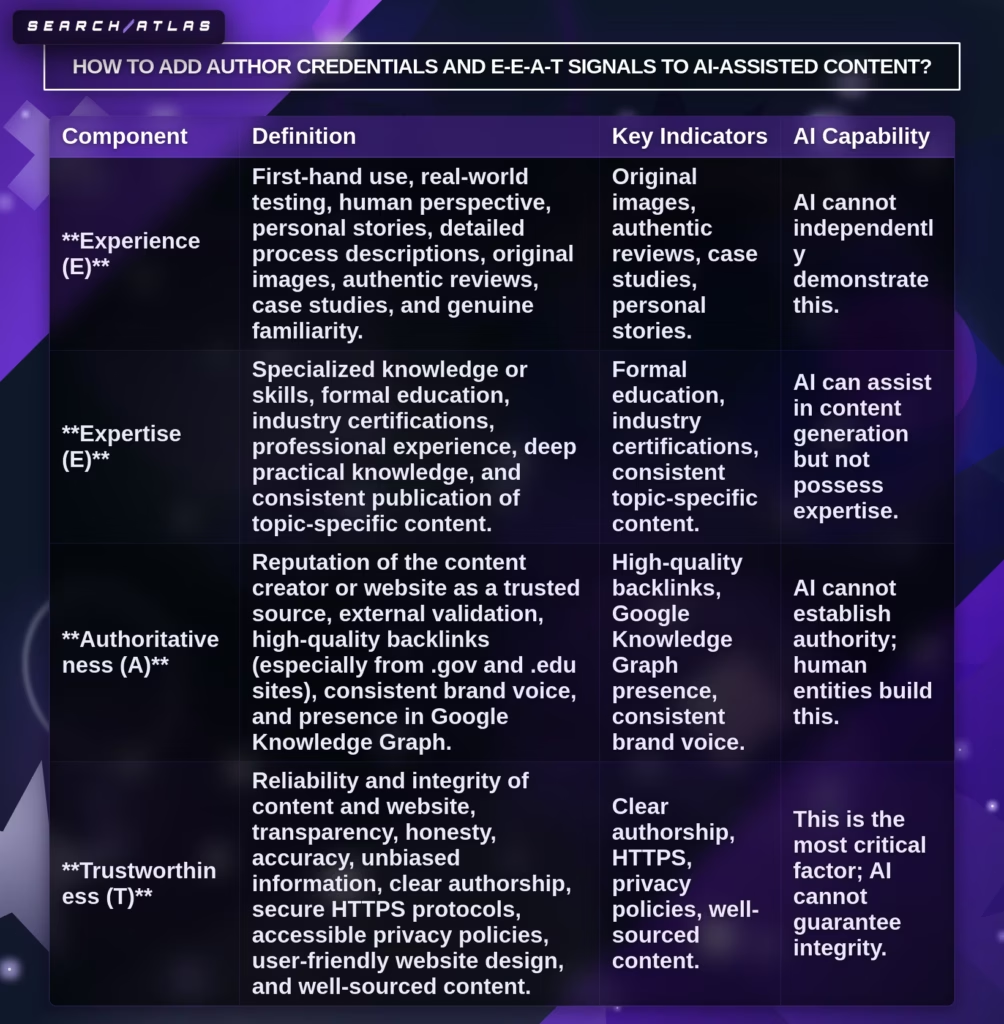

7. Add Author Byline, Credentials, and E-E-A-T Signals

Author bylines and E-E-A-T signals are transparency elements that identify the human expert responsible for creating and reviewing the content. E-E-A-T refers to experience, expertise, authoritativeness, and trustworthiness. These signals demonstrate that human knowledge supports the article.

How do writers add E-E-A-T signals to AI-assisted articles? Writers add E-E-A-T signals by including author names, professional credentials, expert citations, and updated publication dates. Author biography pages and professional profiles strengthen credibility signals. Verified sources and transparent authorship improve trust for readers and search engines.
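One common way to make authorship machine-readable is schema.org Article markup in JSON-LD, using properties such as author, datePublished, and dateModified. The sketch below uses placeholder names, URLs, and dates. The markup only describes credentials; it does not create E-E-A-T on its own.

```python
import json


def article_jsonld(headline: str, author: dict, published: str, modified: str) -> str:
    """Build a schema.org Article JSON-LD script tag with a Person author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published,
        "dateModified": modified,
        "author": {"@type": "Person", **author},
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


tag = article_jsonld(
    headline="How to Audit Crawl Budget",
    author={
        "name": "Jane Doe",  # placeholder author
        "jobTitle": "Head of Technical SEO",
        "url": "https://example.com/authors/jane-doe",
        "sameAs": ["https://example.org/profiles/jane-doe"],
    },
    published="2025-01-15",
    modified="2025-03-02",
)
print(tag)
```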

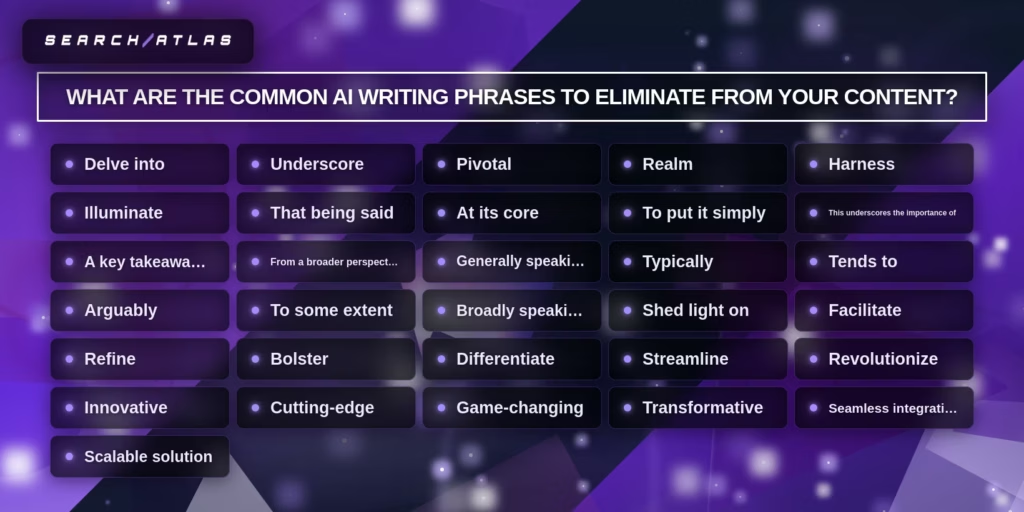

What Are the Common AI Writing Phrases to Eliminate From Your Content?

The common AI writing phrases to eliminate from content are listed below.

- Delve into (High-Frequency AI Word)

- Underscore (High-Frequency AI Word)

- Pivotal (High-Frequency AI Word)

- Realm (High-Frequency AI Word)

- Harness (High-Frequency AI Word)

- Illuminate (High-Frequency AI Word)

- That being said (Transition Phrase)

- At its core (Structured Phrase)

- To put it simply (Structured Phrase)

- This underscores the importance of (Structured Phrase)

- A key takeaway is (Structured Phrase)

- From a broader perspective (Structured Phrase)

- Generally speaking (Qualifier)

- Typically (Qualifier)

- Tends to (Qualifier)

- Arguably (Qualifier)

- To some extent (Qualifier)

- Broadly speaking (Qualifier)

- Shed light on (Analytical Word)

- Facilitate (Analytical Word)

- Refine (Analytical Word)

- Bolster (Analytical Word)

- Differentiate (Analytical Word)

- Streamline (Analytical Word)

- Revolutionize (Overused AI Buzzword)

- Innovative (Overused AI Buzzword)

- Cutting-edge (Overused AI Buzzword)

- Game-changing (Overused AI Buzzword)

- Transformative (Overused AI Buzzword)

- Seamless integration (Overused AI Buzzword)

- Scalable solution (Overused AI Buzzword)
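A small linter can surface these phrases during editing. The sketch flags matches by line for a human to reconsider rather than replacing them automatically, because several of these words are legitimate in context. The dictionary holds only a subset of the list, and the matching rule is an assumption.

```python
import re

# A subset of the list above, grouped by the same categories; extend as needed.
PHRASES = {
    "High-Frequency AI Word": ["delve into", "underscore", "pivotal", "realm", "harness", "illuminate"],
    "Transition Phrase": ["that being said"],
    "Structured Phrase": ["at its core", "to put it simply", "a key takeaway is"],
    "Overused AI Buzzword": ["game-changing", "cutting-edge", "seamless integration"],
}


def lint(text: str) -> list[tuple[str, str, int]]:
    """Return (phrase, category, line number) for every case-insensitive match.

    A match must start at a word boundary but need not end at one, so inflected
    forms such as "underscores" or "harnessing" are also flagged.
    """
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for category, phrases in PHRASES.items():
            for phrase in phrases:
                if re.search(r"\b" + re.escape(phrase), line, re.IGNORECASE):
                    hits.append((phrase, category, line_no))
    return hits


draft = "Let's delve into the data.\nThis underscores the point.\nNothing to flag here."
for phrase, category, line_no in lint(draft):
    print(f"line {line_no}: '{phrase}' ({category})")
```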

What is a Safe AI Content Workflow for Agencies?

A safe AI content workflow is a structured content production process where artificial intelligence assists research, drafting, and optimization while human editors control strategy, accuracy, and brand alignment. A safe AI content workflow reduces operational risk while preserving editorial quality, data security, and brand consistency across large content operations.

Why do agencies need a safe AI content workflow when using artificial intelligence for content production? Agencies need a safe AI content workflow because uncontrolled AI usage creates risks related to data privacy, factual accuracy, and brand inconsistency. AI models generate text quickly but require human oversight to ensure the content follows editorial guidelines and complies with legal and ethical standards. Agencies implement hybrid workflows where AI accelerates drafting while human editors approve strategy and final publication.

What components form a safe AI content workflow inside a digital agency? A safe AI content workflow includes AI models, editorial guardrails, monitoring systems, and human approval checkpoints that control the content lifecycle. AI models generate outlines, drafts, and research summaries. Editorial guardrails define acceptable prompts, tone requirements, and compliance rules. Monitoring and logging systems track AI usage and decision paths to ensure transparency and accountability.
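The approval checkpoints and logging described above can be sketched as a small state machine. The stage names, the rule that only a human can advance an item, and the log fields are hypothetical. A real agency system would persist the log and tie actors to authenticated accounts.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Stage(Enum):
    AI_DRAFT = "ai_draft"
    HUMAN_EDITED = "human_edited"
    FACT_CHECKED = "fact_checked"
    APPROVED = "approved"
    PUBLISHED = "published"


STAGES = list(Stage)  # Enum iteration follows definition order


@dataclass
class ContentItem:
    title: str
    stage: Stage = Stage.AI_DRAFT
    audit_log: list[dict] = field(default_factory=list)

    def advance(self, actor: str, is_human: bool) -> Stage:
        """Move to the next stage; every transition requires a human actor."""
        position = STAGES.index(self.stage)
        if position == len(STAGES) - 1:
            raise ValueError("item is already published")
        if not is_human:
            raise PermissionError(f"moving past {self.stage.value} requires human sign-off")
        next_stage = STAGES[position + 1]
        # Record who moved the item and when, for transparency and accountability.
        self.audit_log.append({
            "from": self.stage.value,
            "to": next_stage.value,
            "actor": actor,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        self.stage = next_stage
        return next_stage


item = ContentItem("Crawl budget guide")
item.advance("editor@agency.example", is_human=True)
print(item.stage, item.audit_log[-1]["actor"])
```

The AI produces only the starting draft. Every later move, up to publication, leaves a logged human decision.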

How does a hybrid AI and human workflow improve agency content production? Hybrid AI workflows improve agency content production because artificial intelligence handles repetitive tasks while human editors manage creativity, expertise, and decision-making. AI accelerates research, competitor analysis, and draft generation. Human writers refine arguments, add experience, verify facts, and maintain brand voice before publishing.

How to Maintain Brand Voice When Using AI for Content at Scale?

Maintaining brand voice when using AI for content at scale requires a structured editorial system that trains AI tools with brand guidelines and enforces human review before publication. Brand voice is the consistent communication style that expresses the company’s identity through tone, vocabulary, and perspective.

Why does AI-generated content often lose brand voice consistency? AI-generated content often loses brand voice consistency because large language models generate language based on averaged patterns from training data rather than a specific brand identity. This behavior produces generic phrasing and predictable structures that weaken brand differentiation. Content requires explicit voice training and editorial correction.

How can agencies enforce brand voice across AI-assisted content workflows? Agencies enforce brand voice by documenting tone rules, providing examples of approved language, and training AI systems with representative brand content. Editorial guidelines define vocabulary preferences, sentence structure, and positioning statements. These rules help ensure AI-generated drafts follow the brand identity before human editors refine the final content.
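Parts of those rules can be checked automatically before human review. The sketch encodes two hypothetical house rules: preferred terms and a maximum sentence length. Real brand rules would come from the agency's own style guide, and tone still needs a human editor.

```python
import re

# Hypothetical house rules: preferred replacements and a sentence-length ceiling.
PREFERRED_TERMS = {"utilize": "use", "leverage": "use", "in order to": "to"}
MAX_WORDS_PER_SENTENCE = 25


def voice_issues(text: str) -> list[str]:
    """List departures from the house style rules for an editor to fix."""
    issues = []
    for term, preferred in PREFERRED_TERMS.items():
        if re.search(rf"\b{re.escape(term)}\b", text, re.IGNORECASE):
            issues.append(f'Replace "{term}" with "{preferred}"')
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        if len(sentence.split()) > MAX_WORDS_PER_SENTENCE:
            issues.append(f"Shorten sentence starting: {sentence[:40]!r}")
    return issues


print(voice_issues("We utilize AI in order to draft faster."))
```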

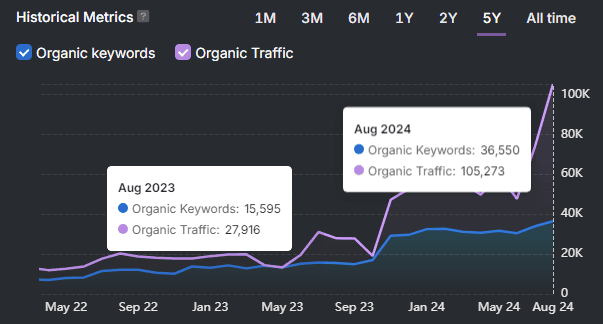

Search Atlas Content Genius is an AI content platform that analyzes SERP data, entity coverage, and topical authority to generate search-optimized drafts aligned with brand guidelines. Search Atlas Content Genius helps agencies maintain brand voice by combining content optimization with structured workflows that guide tone, keyword placement, and topical coverage. Agencies use Search Atlas Content Genius to generate outlines, build optimized content briefs, and produce drafts that match both search intent and brand messaging.

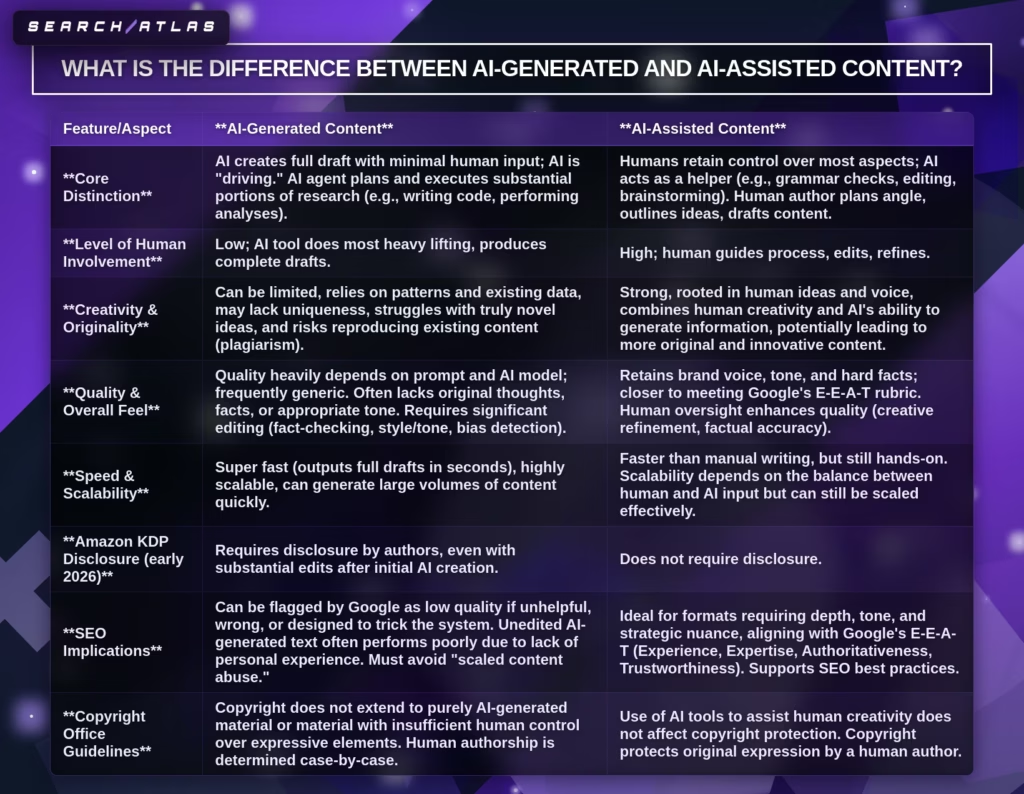

What Is the Difference Between AI-Generated and AI-Assisted Content?

AI-generated content refers to text produced primarily by artificial intelligence with minimal human involvement, while AI-assisted content refers to human-written content supported by AI tools for research, editing, and optimization. The difference determines content originality, editorial control, and compliance with platform policies.

How does AI-generated content differ from AI-assisted content in terms of human involvement? AI-generated content relies on artificial intelligence to create full drafts, while AI-assisted content relies on human authors who use AI tools to accelerate specific tasks. AI-generated workflows require limited human intervention beyond prompting. AI-assisted workflows involve significant human planning, editing, and strategic input.

Why does AI-assisted content usually produce higher-quality results? AI-assisted content usually produces higher-quality results because human editors introduce expertise, original insights, and contextual reasoning that AI models cannot supply on their own. Human oversight also improves factual accuracy, brand voice consistency, and narrative clarity.

Are AI Detection Tools Accurate in 2026?

No, AI detection tools are not fully accurate in 2026 because no detection system can reliably distinguish between human writing and edited AI-generated text. AI detectors produce probabilistic predictions rather than definitive authorship identification.

Why do AI detection tools struggle to achieve consistent accuracy? AI detection tools struggle with accuracy because human and AI writing overlap heavily in their statistical language patterns. Small edits, paraphrasing, or rewriting of AI drafts significantly reduce detection reliability.

Can Human Content Be Flagged as AI?

Yes, human-written content can be incorrectly flagged as AI-generated because detection tools rely on statistical pattern recognition rather than verified authorship signals. This limitation creates false positives when human writing resembles predictable language patterns.

Why do false positives occur in AI detection systems? False positives occur because detection algorithms classify text based on sentence predictability, structure uniformity, and vocabulary patterns rather than author identity. Human writing that uses simple language, consistent grammar, or academic structure can therefore trigger AI detection flags.