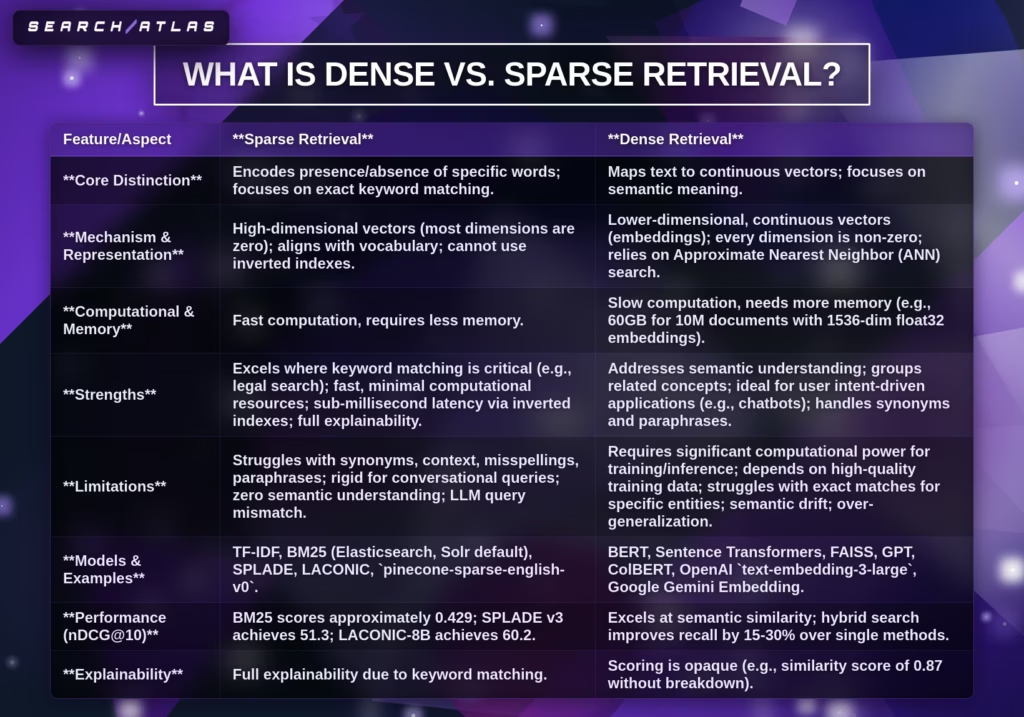

Dense vs sparse retrieval refers to two information retrieval methods where dense retrieval uses vector embeddings for semantic search and sparse retrieval uses term-based weighting for keyword search. Dense retrieval encodes queries and documents into dense vectors (768–1536 dimensions) and ranks results using semantic similarity, while sparse retrieval encodes text into high-dimensional sparse vectors (10,000–100,000 dimensions) and ranks results using the TF-IDF or BM25 algorithm based on exact term overlap. Semantic search vs keyword search defines the core difference, where dense retrieval captures meaning, and sparse retrieval enforces lexical precision.

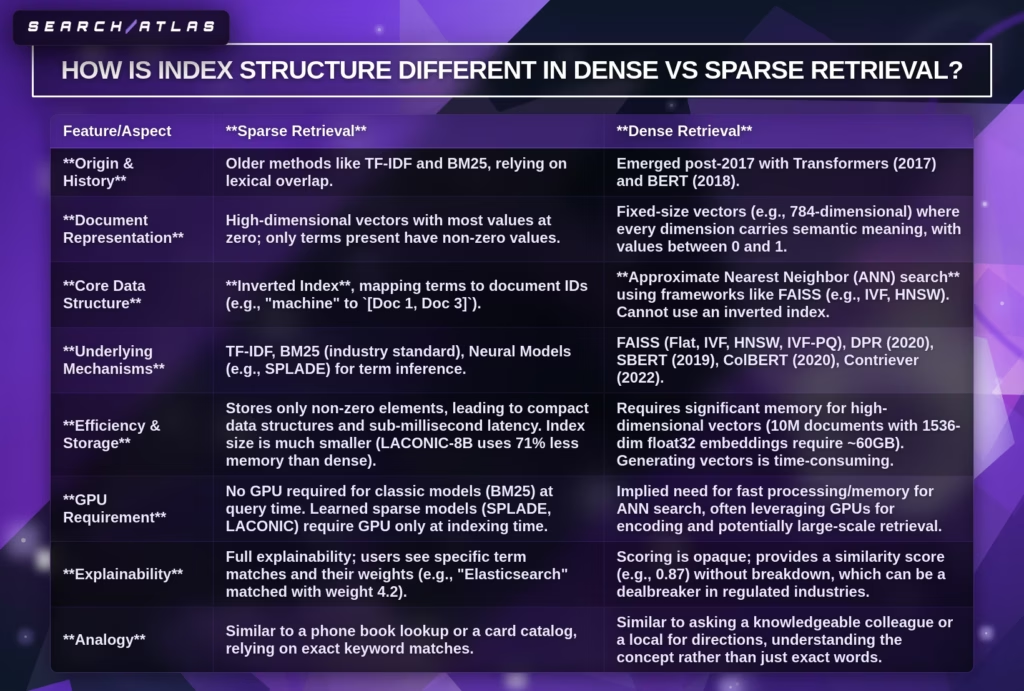

Dense vs sparse retrieval differs in representation, matching, indexing, and performance, which directly impacts retrieval accuracy and system cost. Dense retrieval uses approximate nearest neighbor indexes with 10–100 ms latency and achieves higher relevance (0.72 NDCG@10, 0.65 Recall@10), while sparse retrieval uses inverted index structures with sub-millisecond latency and achieves lower but stable performance (0.58 NDCG@10, 0.52 Recall@10). Dense retrieval requires GPU computation and ≈60GB memory for 10M documents, while sparse retrieval runs on CPU with ≈0.1GB for 100K documents. These differences define trade-offs between semantic understanding and efficiency.

Dense retrieval provides advantages in semantic similarity, recall, and contextual understanding, while sparse retrieval provides advantages in precision, speed, and cost-efficiency. Dense retrieval improves relevance by 10-20% on MS MARCO and integrates with Retrieval-Augmented Generation systems for 20-30% higher factual accuracy. Sparse retrieval ensures exact matching, full explainability, and costs $0.01-$0.10 per 1M queries, which fits keyword-critical and regulated environments. Dense retrieval fits semantic queries and LLM-generated inputs, while sparse retrieval fits exact queries (SKUs, error codes, legal terms).

Hybrid retrieval combines dense retrieval and sparse retrieval to achieve the best overall performance, which defines the current and future standard for search systems. Hybrid retrieval merges semantic similarity with lexical matching and improves recall by 15-30% and NDCG@10 to ≈0.85, outperforming dense-only (0.72) and sparse-only (0.58) systems. Optimization strategies include BM25 tuning, query rewriting, learned sparse models (SPLADE), dynamic negative sampling, ANN indexing (HNSW, IVF-PQ), and reranking pipelines. Dense vs sparse retrieval converges into hybrid, multimodal, and agent-driven systems, where retrieval aligns with intent, context, and scalability requirements.

What is Dense Retrieval?

Dense Retrieval is a neural retrieval method that represents queries and documents as dense vectors in a continuous embedding space, defined by deep neural networks that encode semantic meaning for similarity-based matching. Dense Retrieval definition centers on a neural embedding approach where Dense Retrieval converts text into fixed-length vector representations and compares vectors using semantic similarity instead of keyword overlap. Neural retrieval systems use embedding models to map semantically related phrases close together in vector space, which improves contextual understanding and retrieval precision. Similarity scoring follows cosine similarity: sim(q, d) = (q·d)/(||q||·||d||), where q and d represent query and document vectors.

Dense Retrieval emerged from neural information retrieval research that evolved from sparse methods (TF-IDF, BM25) into embedding-based systems after Word2Vec and GloVe introduced distributed representations between 2013 and 2017. Dense Retrieval architectures advanced with DSSM and bi-encoder models in 2018, followed by transformer-based systems (BERT, DPR) that increased retrieval accuracy and scalability (Karpukhin et al., 2020; Xiong et al., 2020). Dense Retrieval operates within semantic search systems, where Dense Retrieval replaces lexical matching with semantic similarity matching to retrieve contextually relevant results across large datasets.

What defines the neural embedding approach in Dense Retrieval? Neural embedding approach in Dense Retrieval refers to transforming text into dense numerical vectors using deep neural networks trained on query-document pairs. Dense Retrieval uses a query encoder and a passage encoder, where each encoder generates embeddings that capture contextual meaning. The query encoder processes user input into a vector, while the passage encoder converts documents into indexed vectors. This structure enables precomputed embeddings and fast similarity comparison during retrieval.

What are the vector representation principles in Dense Retrieval? Vector representation principles in Dense Retrieval refer to encoding each query and document into low-dimensional dense vectors that preserve semantic relationships. Dense Retrieval uses vectors with fixed dimensions (commonly 768) instead of sparse vectors with 10,000-100,000 dimensions. Dense Retrieval reduces dimensionality while preserving meaning, which increases efficiency in storage and retrieval. Vector proximity reflects semantic similarity, where closer vectors indicate higher relevance.

What is semantic similarity matching in Dense Retrieval? Semantic similarity matching in Dense Retrieval refers to ranking documents based on vector distance instead of exact keyword overlap. Dense Retrieval computes similarity using cosine similarity or dot product to identify top-k nearest vectors. Approximate Nearest Neighbor (ANN) search systems (FAISS) enable sub-linear retrieval time across billions of vectors. Semantic similarity matching improves relevance scores by 10-20% on benchmarks (MS MARCO) compared to keyword-based retrieval.

What is Sparse Retrieval?

Sparse retrieval is an information retrieval method that represents queries and documents as high-dimensional sparse vectors, defined by exact term matching and statistical weighting techniques (TF-IDF, BM25 algorithm) for keyword-based ranking. Sparse retrieval definition explains a lexical matching approach where sparse retrieval assigns weights to individual terms and retrieves documents based on shared terms between the query and the document. Sparse retrieval uses statistical scoring instead of semantic understanding, which makes sparse retrieval efficient and interpretable in large-scale search systems. TF-IDF scoring follows the formula: TF-IDF(t,d) = tf(t,d) × log(N/df(t)), where term frequency and inverse document frequency determine term importance.

What is TF-IDF in sparse retrieval? TF-IDF (Term Frequency-Inverse Document Frequency) is a statistical weighting method that measures how important a term is within a document relative to a corpus. TF-IDF increases scores for terms that appear frequently in a document and decreases scores for terms that appear across many documents. TF-IDF calculates term importance using tf(t,d) for frequency and log(N/df(t)) for rarity, which prioritizes distinctive terms in ranking.

What is lexical matching in sparse retrieval? Lexical matching in sparse retrieval refers to retrieving documents based on exact keyword overlap between the query and the document. Sparse retrieval compares query terms directly with indexed document terms, where higher overlap increases relevance scores. Lexical matching does not evaluate semantic meaning, which limits handling of synonyms and paraphrases but ensures precise matching for exact queries.

What is an inverted index architecture in sparse retrieval? Inverted index architecture in sparse retrieval refers to a data structure that maps terms to document locations for fast lookup and ranking. Sparse retrieval stores each term with a list of documents that contain that term, which enables rapid retrieval across large datasets. Inverted index systems process queries in milliseconds because the system retrieves only documents that contain matching terms instead of scanning the full corpus.

What is the BM25 algorithm in sparse retrieval? The BM25 algorithm is a probabilistic ranking function that improves TF-IDF by applying term frequency saturation and document length normalization for more accurate scoring (Robertson & Zaragoza, 2009). BM25 adjusts term importance using parameters k1 (1.2-2.0) and b (0.75), which balance frequency impact and document length. The BM25 algorithm serves as the default ranking method in systems (Elasticsearch, Solr) because the BM25 algorithm produces stable and interpretable relevance scores.

How Do Dense and Sparse Retrieval Differ?

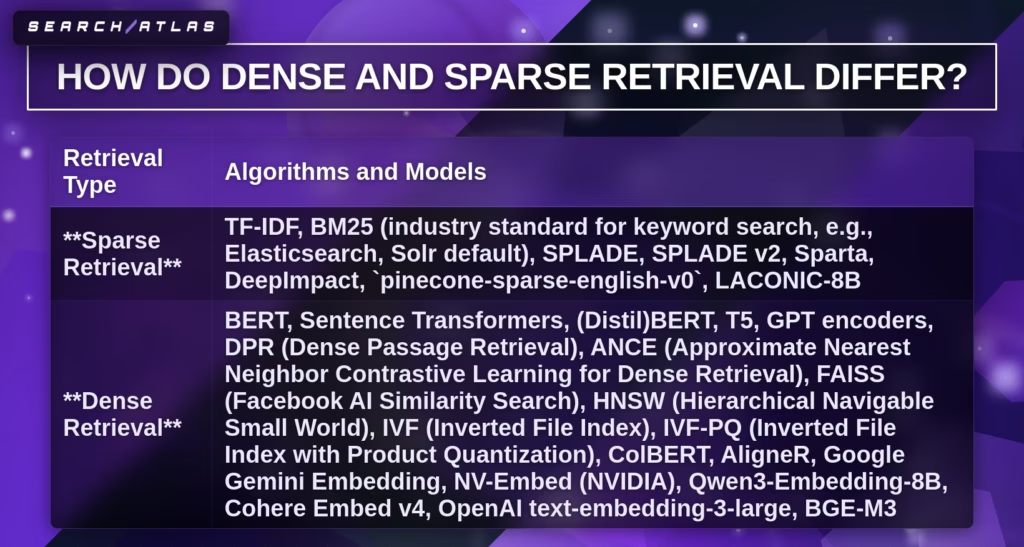

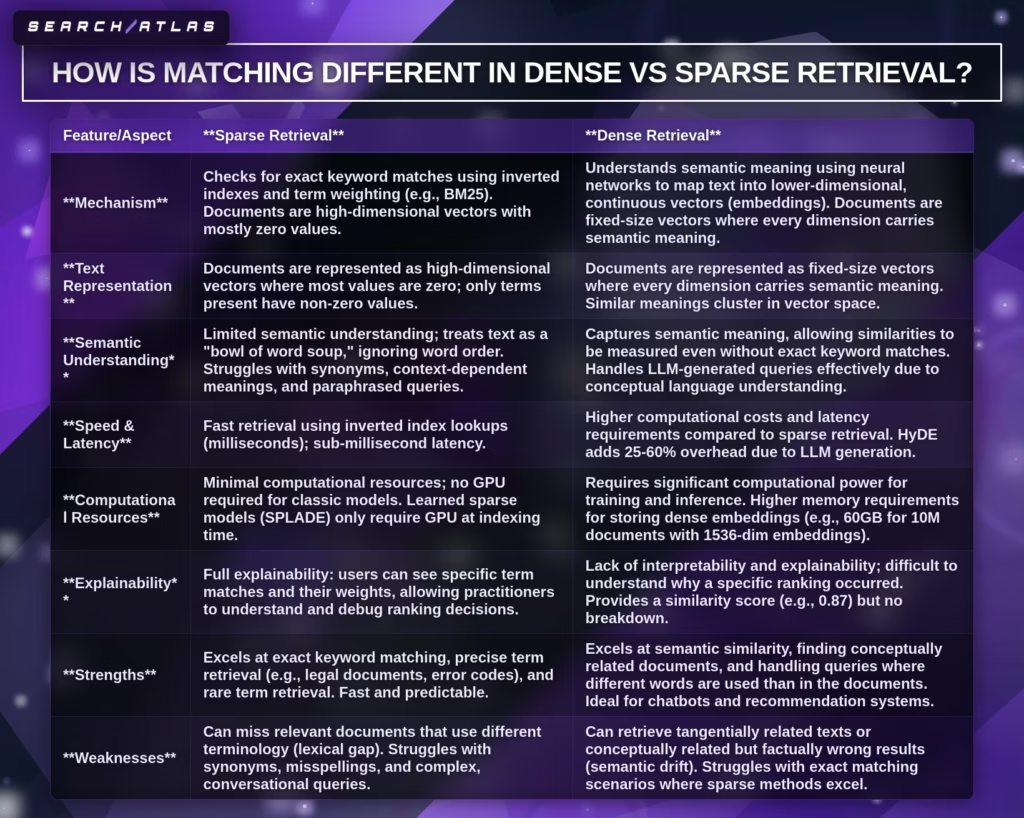

Dense vs sparse retrieval differs in representation, matching, indexing, computation, and performance, where dense retrieval uses semantic search and sparse retrieval uses keyword search. Dense vs sparse retrieval comparison shows that dense retrieval encodes meaning through neural vectors, while sparse retrieval encodes exact terms through weighted token vectors. Semantic search vs keyword search defines the core divide, where dense retrieval focuses on contextual similarity and sparse retrieval focuses on lexical overlap.

| Dimension | Dense Retrieval | Sparse Retrieval |

|---|---|---|

| Representation Method | Dense vectors (fixed 768–1536 dimensions, all values non-zero). | Sparse vectors (10,000–100,000 dimensions, mostly zero values). |

| Matching Approach | Semantic similarity using cosine similarity or dot product. | Lexical matching using exact keyword overlap (TF-IDF, BM25 algorithm). |

| Index Structure | ANN indexes (FAISS, HNSW) for vector search. | Inverted index for term-based lookup. |

| Computational Requirements | GPU required for training and often inference; high memory (≈60GB for 10M documents at 1536-dim). | CPU sufficient; low memory footprint; no neural training required for BM25. |

| Latency (quantified) | 10–100 ms with ANN search at scale. | <1 ms query latency with optimized inverted index. |

| Training Requirements | Requires labeled query-document pairs and neural training (DPR, ANCE). | No training required for BM25; optional training for learned sparse models (SPLADE). |

| Cost per 1M queries | $5–$15, depending on infrastructure and embedding storage. | $0.01–$0.10 with standard keyword indexing. |

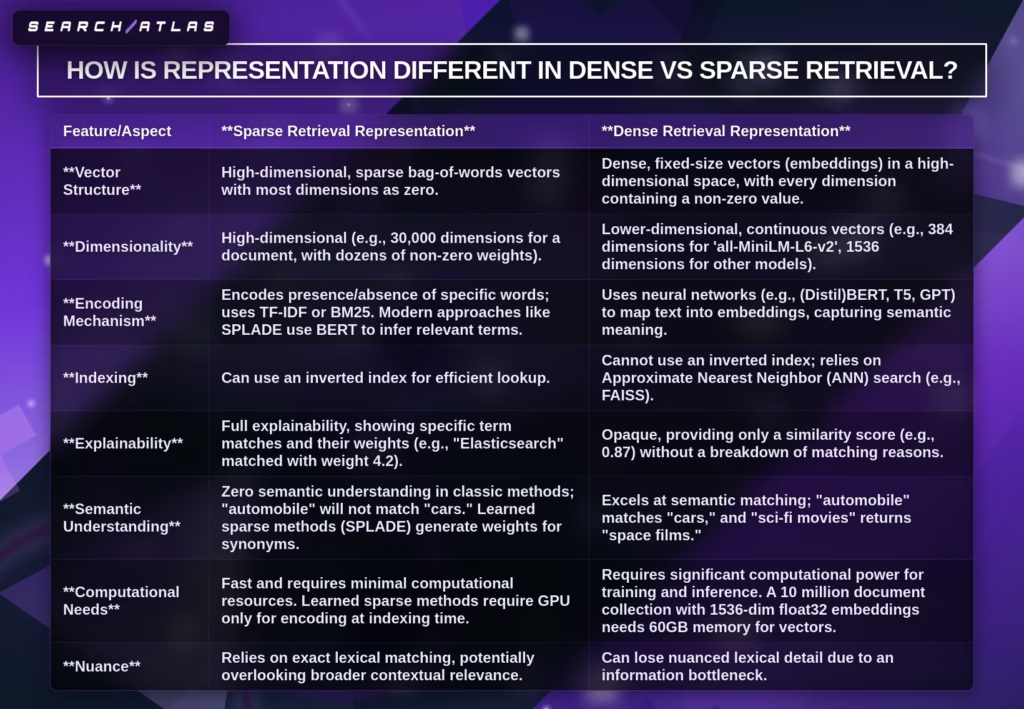

What is the difference in representation between dense and sparse retrieval? Dense retrieval uses low-dimensional dense vectors that encode semantic meaning, while sparse retrieval uses high-dimensional sparse vectors that encode term presence. Dense retrieval assigns a value to every dimension, which captures relationships between words. Sparse retrieval assigns values only to present terms, which preserves exact term importance. Dense vectors (768 dimensions) reduce storage complexity compared to sparse vectors (10,000+ dimensions), but dense vectors require specialized infrastructure.

How does matching differ in semantic search vs keyword search? Semantic search in dense retrieval matches meaning, while keyword search in sparse retrieval matches exact terms. Dense retrieval computes similarity using vector distance, which retrieves relevant results even with different wording. Sparse retrieval computes similarity using term overlap, which retrieves only documents that contain matching keywords. Dense retrieval improves relevance by 10-20% on MS MARCO, while sparse retrieval maintains precision for exact queries.

What is the difference in index structure between dense and sparse retrieval? Dense retrieval uses ANN index structures, while sparse retrieval uses inverted indexes. ANN indexes search nearest vectors efficiently in high-dimensional space. Inverted indexes map terms to document lists for direct lookup. ANN search achieves sub-linear scaling across billions of vectors, while inverted indexes achieve constant-time lookup for exact matches.

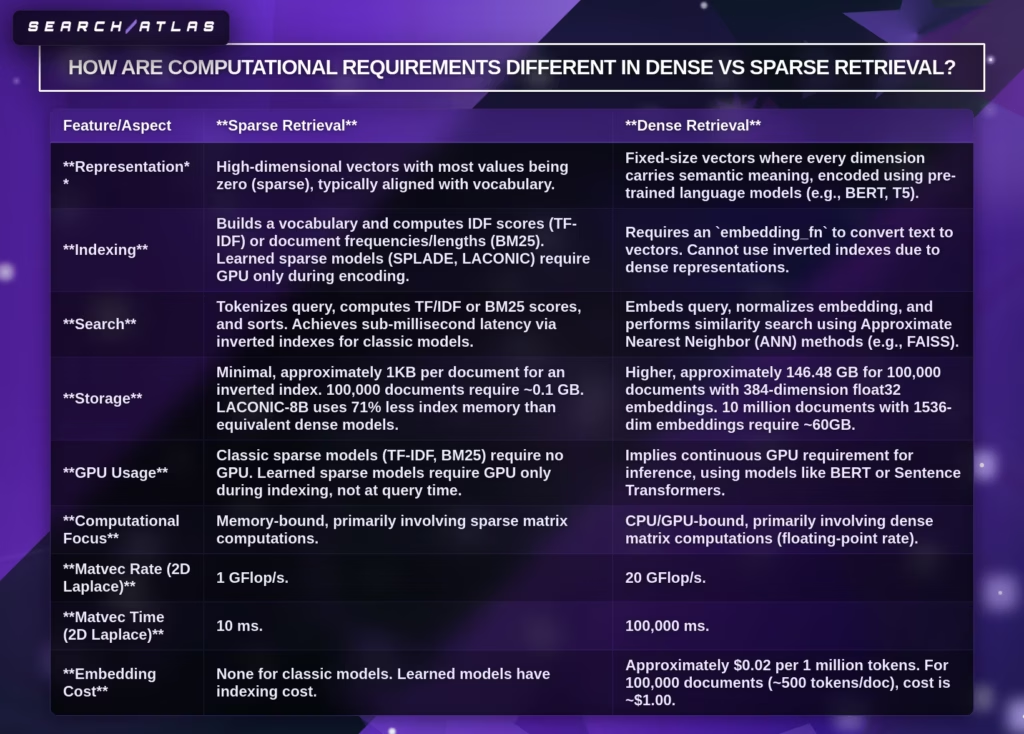

How do computational requirements differ between dense and sparse retrieval? Dense retrieval requires GPU computation and higher memory, while sparse retrieval operates efficiently on a CPU with minimal resources. Dense retrieval performs neural encoding and vector comparisons, which increases computational cost. Sparse retrieval performs term matching and scoring, which reduces infrastructure complexity. Learned sparse models introduce GPU usage during indexing but maintain efficient query execution.

How do latency and cost differ between dense and sparse retrieval? Dense retrieval has higher latency and cost, while sparse retrieval has ultra-low latency and minimal cost. Dense retrieval processes ANN search in 10 to 100 ms per query at scale. Sparse retrieval processes queries in under 1 ms using inverted indexes. Dense retrieval costs range from $5-$15 per 1M queries, while sparse retrieval costs range from $0.01-$0.10 per 1M queries.

How do training and benchmark performance differ between dense and sparse retrieval? Dense retrieval requires supervised training and achieves higher benchmark scores, while sparse retrieval requires no training and provides strong baseline performance. BM25 achieves 42.9 NDCG@10 on BEIR, while SPLADE reaches 51.3 and CSPLADE reaches 54.6. Dense models achieve higher scores, where OpenAI text-embedding-3-large reaches 64.6, and Qwen3-Embedding-8B reaches 70.6 on MTEB. Hybrid retrieval increases recall by 15% to 30% and reaches up to 95% overall retrieval quality in RAG.

1. Representation: Dense Vectors vs. Sparse Term Vectors

Dense vectors and sparse vectors differ in structure, where dense vectors encode semantic meaning in low-dimensional space and sparse vectors encode exact terms in high-dimensional space. Dense vectors use fixed dimensions (commonly 768), where every value carries meaning, while sparse vectors use 10,000 to 100,000 dimensions with mostly zero values representing term presence. Representation determines effectiveness because dense vectors enable semantic understanding and sparse vectors enforce exact matching. Dense vectors group related concepts in a vector space, while sparse vectors match only identical terms. Storage differs significantly, where dense vectors require continuous memory (≈60GB for 10M documents at 1536 dimensions) and sparse vectors use compressed inverted indexes. This distinction defines system design, where semantic search favors dense vectors and keyword precision favors sparse vectors.

2. Matching: Semantic Similarity vs. Exact Term Matching

Semantic similarity in dense retrieval matches meaning between queries and documents, while lexical matching in sparse retrieval matches exact terms based on keyword overlap. Semantic similarity uses vector distance (cosine similarity or dot product) to retrieve relevant content even without shared words, which increases recall for paraphrases and synonyms. Lexical matching uses the TF-IDF or BM25 algorithm to rank documents only when query terms appear explicitly, which increases precision for exact queries. Performance differs across benchmarks, where dense retrieval improves relevance by 10-20% on MS MARCO, while BM25 achieves 42.9 nDCG@10 on BEIR compared to 64.6-70.6 for dense models. This trade-off defines system behavior, where semantic similarity expands recall and lexical matching enforces precision.

3. Index Structure: ANN Indexes vs. Inverted Indexes

Approximate nearest neighbor in dense retrieval uses vector indexes to locate similar embeddings, while the inverted index in sparse retrieval maps terms to documents for exact lookup. Approximate nearest neighbor search (FAISS, HNSW) scans high-dimensional vector space and retrieves nearest vectors in 10-100 ms at scale, which supports semantic similarity search across billions of embeddings. Inverted index stores term-to-document mappings and retrieves results in under 1 ms through direct lookup, which ensures fast keyword matching. Index size differs significantly, where dense indexes require ≈60GB for 10M documents (1536 dimensions), while sparse indexes reduce memory by up to 71% through non-zero term storage. This structural difference defines performance, where ANN scales semantic retrieval, and the inverted index maximizes speed and efficiency.

4. Computational Requirements: GPU vs. CPU Processing

GPU retrieval in dense retrieval requires neural encoding and vector computation, while CPU indexing in sparse retrieval relies on term weighting and inverted index operations. Dense retrieval performs embedding generation using transformer models, which requires GPUs for training and often for inference, with a query latency of 10-100 ms and a memory usage of ≈60GB for 10M documents. Sparse retrieval performs tokenization and scoring (TF-IDF, BM25 algorithm) on CPUs, which achieves sub-millisecond latency and requires minimal memory (≈0.1GB for 100K documents). GPU retrieval increases computational cost due to floating-point operations, while CPU indexing reduces infrastructure requirements. This difference defines deployment strategy, where dense retrieval prioritizes semantic accuracy and sparse retrieval prioritizes efficiency and low cost.

What are the Advantages of Dense Retrieval?

Dense retrieval provides semantic understanding, higher relevance, and scalable performance, which makes dense retrieval effective for modern search and AI systems. Dense retrieval advantages include improvements in semantic similarity, recall, efficiency, and adaptability across multiple retrieval tasks.

The advantages of dense retrieval are listed below.

- Semantic Matching (Core Capability). Semantic matching is the ability of dense retrieval to identify relevant documents based on meaning rather than exact keyword overlap, which improves alignment between query intent and retrieved content.

- Improved Relevance (Performance Benefit). Improved relevance is the increase in result quality where retrieved documents better satisfy user intent, which leads to more accurate answers in search and AI systems.

- Lexical Gap Mitigation (Capability). Lexical gap mitigation is the ability to bridge differences between query wording and document wording, which allows systems to match synonyms and paraphrases effectively.

- Improved Recall (Performance Benefit). Improved recall is the ability to retrieve a higher percentage of relevant documents from the corpus, which ensures fewer important results are missed.

- Robustness to Vocabulary Drift (Performance Benefit). Robustness to vocabulary drift is the ability to remain effective as language usage evolves over time, which maintains retrieval quality despite changing terminology.

- Contextual Alignment (Performance Benefit). Contextual alignment is the ability to interpret queries within their full context, which improves precision when queries contain ambiguity or multiple meanings.

- Broad Semantic Similarity (Capability). Broad semantic similarity is the capability to identify relationships between concepts across different contexts, which expands retrieval beyond exact matches.

- Advanced Reasoning Capabilities (Capability). Advanced reasoning capabilities refer to the ability to support multi-hop and inference-based retrieval, which enables systems to connect related concepts across documents.

- Optimized Embedding Space (Performance Benefit). An optimized embedding space is a structured vector representation where similar meanings cluster together, which improves retrieval accuracy and efficiency.

- Improved Query Representations (DANCE) (Performance Benefit). Improved query representations refer to enhanced encoding methods such as Dense Approximate Nearest Neighbor Contextual Embeddings (DANCE), which refine how queries are represented in vector space.

- Efficient Scaling (Efficiency Benefit). Efficient scaling is the ability to handle large datasets without linear increases in computational cost, which supports enterprise-level search systems.

- Efficient Retrieval (Efficiency Benefit). Efficient retrieval is the ability to quickly identify nearest-neighbor vectors, which reduces latency in real-time search applications.

- Orders-of-Magnitude Improvements (Efficiency Benefit). Orders-of-magnitude improvements refer to significant performance gains compared to traditional retrieval methods, which increase both speed and accuracy.

- Indexing Strategies Efficiency (Efficiency Benefit). Indexing strategies efficiency is the use of vector indexes such as FAISS or HNSW, which optimize storage and retrieval speed.

- Practical Viability with Fine-Grained Retrieval (Efficiency Benefit). Practical viability with fine-grained retrieval is the ability to operate at the passage or sentence level, which improves precision in answer extraction systems.

- Amortized Cost for Load-Time Enhancements (Efficiency Benefit). Amortized cost refers to performing heavy computation during indexing instead of query time, which reduces the runtime cost per query.

- Improved Robustness (Robustness Benefit). Improved robustness is the ability to maintain performance under noisy or incomplete queries, which ensures consistent retrieval quality.

- Enhanced Generalizability (Robustness Benefit). Enhanced generalizability is the ability to perform well across different domains without retraining, which supports broader applicability.

- Low-Resource and Domain Adaptation (Training Capability). Low-resource adaptation is the ability to perform effectively with limited labeled data, which enables deployment in niche domains.

- Integration with LLMs (Adaptability). Integration with Large Language Models (LLMs) is the ability to supply relevant context to generative systems, which improves answer accuracy in Retrieval-Augmented Generation (RAG).

- Application-Specific Performance (Adaptability). Application-specific performance is the ability to fine-tune retrieval for tasks such as QA, recommendation, or summarization, which increases task effectiveness.

- End-to-End Learning (Adaptability). End-to-end learning is the ability to train retrieval models jointly with downstream tasks, which improves overall system performance.

- Interpretability (Model Feature). Interpretability is the ability to analyze vector relationships and similarity scores, which helps diagnose retrieval behavior.

- Superior Retrieval Performance (Proposition-Based Benefit). Superior retrieval performance is the consistent outperformance of dense retrieval over traditional methods on benchmarks, which validates its effectiveness.

- Improved Downstream QA Tasks (Proposition-Based Benefit). Improved downstream QA tasks refer to better answer generation when dense retrieval supplies relevant context, which enhances system outputs.

- Consistent Outperformance (Proposition-Based Benefit). Consistent outperformance is the repeated success across benchmarks and datasets, which demonstrates reliability.

- Cross-Task Generalization (Proposition-Based Benefit). Cross-task generalization is the ability to perform well across multiple retrieval tasks, which reduces the need for separate systems.

- Improved Downstream QA Performance (Retrieve-then-Read) (Proposition-Based Benefit). Improved QA performance in retrieve-then-read pipelines is the enhancement of answer accuracy when retrieval quality increases.

- Higher Density of Question-Related Information (Proposition-Based Benefit). A higher density of relevant information is the concentration of useful context in retrieved passages, which improves answer synthesis.

- Addressing Limitations of Other Granularities (Proposition-Based Benefit). Addressing granularity limitations is the ability to work across document, passage, and sentence levels, which increases flexibility.

- Retrieval-Augmented Language Models (LLaMA-2-7B) (Proposition-Based Benefit). Retrieval-augmented language models refer to systems like LLaMA-2-7B that use dense retrieval for grounding, which improves factual accuracy.

- Initial Filtering in RAG (RAG Role). Initial filtering is the role of dense retrieval in selecting candidate documents, which reduces the search space for generation.

- Foundation for RAG Pipelines (RAG Role). The Foundation for RAG pipelines is the role of dense retrieval as the core retrieval layer, which enables effective generation.

- Gatekeeper Role in RAG (RAG Role). The gatekeeper role is the function of controlling which information enters the generation stage, which directly impacts output quality.

Dense retrieval advantages establish dense retrieval as a core component in semantic search, RAG pipelines, and AI-driven retrieval systems, where meaning-based matching outperforms keyword-based methods in complex queries.

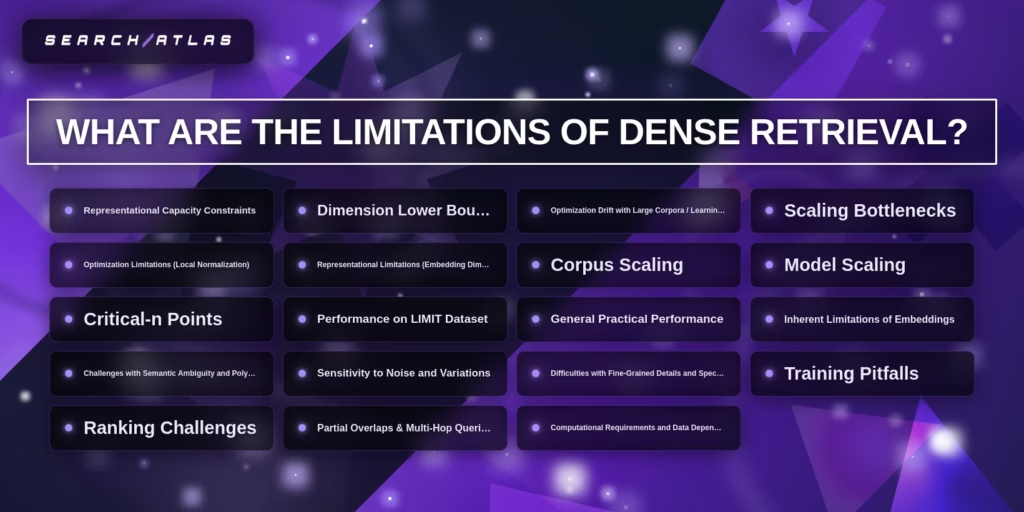

What are the Limitations of Dense Retrieval?

Dense retrieval has limitations in representation, scalability, training, and precision, which restrict dense retrieval performance in exact matching and large-scale systems. Dense retrieval limitations arise from embedding constraints, computational cost, and semantic ambiguity in neural retrieval systems.

The limitations of dense retrieval are listed below.

- Representational Capacity Constraints. Representational capacity constraints refer to the limited ability of dense retrieval embeddings to encode all semantic nuances within fixed-size vectors, which reduces precision for complex queries.

- Dimension Lower Bound. The dimension lower bound is the minimum embedding size required to preserve semantic information, which increases memory and computation requirements as dimensionality grows.

- Optimization Drift with Large Corpora. Optimization drift with large corpora is the degradation of embedding quality as the dataset size increases, which causes inconsistent similarity relationships across documents.

- Scaling Bottlenecks. Scaling bottlenecks refer to the computational and infrastructure limits when indexing and searching large vector spaces, which slow down retrieval at scale.

- Optimization Limitations (Local Normalization). Optimization limitations from local normalization refer to training approaches that focus on limited negative samples, which reduces global ranking accuracy.

- Representational Limitations (Embedding Dimensionality). Representational limitations from embedding dimensionality refer to the trade-off between vector size and information density, which restricts how much meaning a vector can encode.

- Corpus Scaling. Corpus scaling is the challenge of maintaining retrieval efficiency and accuracy as the number of indexed documents grows, which increases latency and storage cost.

- Model Scaling. Model scaling is the requirement for larger neural models to improve performance, which increases training cost and deployment complexity.

- Critical-n Points. Critical-n points refer to thresholds where adding more data or dimensions no longer improves retrieval performance, which creates diminishing returns.

- Performance on the LIMIT Dataset. Performance on the LIMIT dataset refers to observed weaknesses in benchmark evaluations designed to test retrieval limits, which highlight failure cases in dense retrieval systems.

- General Practical Performance. General practical performance refers to inconsistencies between benchmark results and real-world applications, which reduce reliability in production systems.

- Inherent Limitations of Embeddings. Inherent limitations of embeddings refer to the inability of vector representations to capture exact symbolic relationships, which affects tasks requiring precision.

- Challenges with Semantic Ambiguity and Polysemy. Challenges with semantic ambiguity and polysemy refer to the difficulty in distinguishing words with multiple meanings, which leads to incorrect matches.

- Sensitivity to Noise and Variations. Sensitivity to noise and variations refers to performance degradation when queries contain typos, irrelevant tokens, or structural inconsistencies.

- Difficulties with Fine-Grained Details and Specificity. Difficulties with fine-grained details refer to the inability to capture exact facts, numbers, or rare entities, which reduces accuracy for specific queries.

- Training Pitfalls. Training pitfalls refer to issues such as poor negative sampling, overfitting, or biased datasets, which degrade retrieval quality.

- Ranking Challenges. Ranking challenges refer to the difficulty of ordering retrieved documents precisely, which impacts the top-k result quality.

- Partial Overlaps & Multi-Hop Queries. Partial overlaps and multi-hop query limitations refer to the difficulty of handling queries that require combining information from multiple sources, which reduces reasoning capability.

- Computational Requirements and Data Dependency. Computational requirements and data dependency refer to the need for large-scale labeled data and high-performance hardware, which increases cost and limits accessibility.

Dense retrieval limitations show that dense retrieval trades precision, transparency, and cost-efficiency for semantic understanding, which requires hybrid retrieval strategies to balance performance.

What are the Advantages of Sparse Retrieval?

Sparse retrieval provides exact matching, high efficiency, and low-cost scalability, which makes sparse retrieval reliable for keyword-based search systems. The advantages of sparse retrieval focus on lexical precision, transparency, and operational performance in large-scale information retrieval tasks.

The advantages of sparse retrieval are listed below.

- Lexical Precision and Exact Matching (Retrieval Advantage). Lexical precision and exact matching refer to the ability of sparse retrieval to match query terms exactly with document terms, which ensures high accuracy for keyword-based searches.

- Transparency and Interpretability (System Characteristic). Transparency and interpretability refer to the ability to understand how scores are computed using term frequency and inverse document frequency, which makes retrieval decisions explainable.

- Production Efficiency and Scalability (Operational Advantage). Production efficiency and scalability refer to the ability of sparse retrieval systems to operate efficiently on inverted indexes, which supports large-scale deployment with stable performance.

- Competitiveness with Dense Retrieval (Performance Advantage). Competitiveness with dense retrieval refers to the ability of sparse methods such as BM25 to perform strongly on many benchmarks, which keeps them relevant in modern retrieval systems.

- Cost-Effectiveness (Infrastructure) (Financial Advantage). Cost-effectiveness refers to the low infrastructure requirements of sparse retrieval, which reduces compute, storage, and maintenance costs.

- Semantic Enrichment and Expansion (Capability). Semantic enrichment and expansion refer to the use of techniques such as query expansion and synonym mapping, which improve recall without changing the core sparse framework.

- Contextual Meaning and Term Importance (Semantic Capability). Contextual meaning and term importance refer to weighting mechanisms such as TF-IDF and BM25, which prioritize important terms within documents.

- Storage Efficiency (Resource Advantage). Storage efficiency refers to the compact representation of documents using sparse vectors or inverted indexes, which minimizes memory usage.

- Effectiveness for Keyword Queries (Scenario Performance). Effectiveness for keyword queries refers to strong performance when users provide clear, term-based queries, which aligns with traditional search behavior.

- Hybrid Retrieval Systems Contribution (System Integration Benefit). Hybrid retrieval systems’ contribution refers to the role of sparse retrieval as a complementary method to dense retrieval, which improves overall system performance.

- Recall Metric Improvements (Hybrid Sparse-Dense) (Performance Metric). Recall metric improvements refer to increased coverage of relevant documents when sparse retrieval is combined with dense methods, which enhances hybrid retrieval results.

- Query Characteristics Suitability (Query Type Suitability). Query characteristics suitability refers to strong alignment with structured and explicit queries, which improves precision for well-defined searches.

- Small, Specialized Datasets Effectiveness (Dataset Suitability). Small, specialized datasets’ effectiveness refers to reliable performance without requiring large training data, which suits niche domains.

- Extremely Short User Inputs Benefit (Input Type Suitability). Extremely short user inputs benefit refers to the ability to handle short queries effectively, which ensures accurate retrieval even with minimal input.

- Enhanced Knowledge Retrieval in RAG (Application Benefit). Enhanced knowledge retrieval in Retrieval-Augmented Generation (RAG) refers to precise document filtering before generation, which improves factual grounding.

- Reduced False Positives (Accuracy Benefit). Reduced false positives refer to fewer irrelevant matches due to strict term matching, which increases precision.

- Throughput and Latency Enhancement (Performance Improvement). Throughput and latency enhancement refer to fast query processing using optimized index structures, which support real-time applications.

- First-Stage Retrieval Role (System Role). First-stage retrieval role refers to the use of sparse retrieval for initial candidate selection, which reduces the search space for later ranking stages.

- Complementary Information Retrieval (System Integration Benefit). Complementary information retrieval refers to combining lexical and semantic signals, which improves robustness across query types.

- “Missing Link” between IR and Dense Retrieval (System Role). The “missing link” role refers to sparse retrieval acting as a bridge between traditional information retrieval and neural retrieval, which enables hybrid architectures.

- Bridging Semantic Gap (Semantic Capability). Bridging the semantic gap refers to improvements through expansion techniques that connect related terms, which enhance retrieval without full semantic modeling.

- Addressing Dense Retriever Limitations (System Integration Benefit). Addressing dense retriever limitations refers to compensating for weaknesses in semantic models, which ensures balanced retrieval performance across systems.

The advantages of sparse retrieval establish it as a core component of keyword search, hybrid retrieval pipelines, and cost-efficient large-scale systems, where precision and efficiency are critical.

What are the Limitations of Sparse Retrieval?

Sparse retrieval has limitations in semantic understanding, recall, scalability, and query handling, which restrict sparse retrieval performance in modern search systems. Sparse retrieval limitations arise from strict lexical matching, resource constraints in advanced models, and gaps in handling complex queries.

The limitations of sparse retrieval are listed below.

- Vocabulary Mismatch Problem (Retrieval Limitation). Vocabulary mismatch problem refers to the inability of sparse retrieval to match semantically related terms that do not share exact wording, which reduces recall for synonym-based queries.

- Insufficient Contextual Meaning Capture (Semantic Limitation). Insufficient contextual meaning capture is the limitation where sparse retrieval cannot interpret full sentence context, which leads to a shallow understanding of queries.

- Difficulty Differentiating Term Importance (Semantic Limitation). Difficulty differentiating term importance refers to reliance on statistical weighting methods, which fail to fully capture semantic significance beyond frequency signals.

- Inability to Handle Negation, Sarcasm, or Implied Meaning (Semantic Limitation). Inability to handle negation or implied meaning refers to the lack of deep language understanding, which causes incorrect matches when intent depends on subtle linguistic cues.

- Unnecessarily Complex Training Stages (Complexity Limitation). Unnecessarily complex training stages refer to multi-step processes in learned sparse models, which increase system complexity without consistent performance gains.

- Reliance on Generative Models (Dependency Limitation). Reliance on generative models refers to dependence on external systems for query expansion, which introduces variability and additional cost.

- Long Inference Time for External Expansion Methods (Performance Limitation). Long inference time refers to delays caused by expansion techniques such as doc2query, which increase query processing time.

- Lower Retrieval Effectiveness Compared to Dense Retrievers (Performance Limitation). Lower retrieval effectiveness refers to weaker performance on semantic benchmarks, which limits competitiveness in modern AI search.

- Massive Memory Overhead (Resource Limitation). Massive memory overhead refers to increased storage requirements in advanced sparse models, which offset traditional efficiency advantages.

- Large Model Sizes (Resource Limitation). Large model sizes refer to the growth of neural sparse retrieval models, which increases infrastructure requirements.

- Suboptimal Query Formulation with Context (Query Limitation). Suboptimal query formulation refers to the difficulty of incorporating long or contextual queries, which reduces retrieval accuracy.

- Introduction of Noise into Queries (Query Limitation). Introduction of noise refers to expansion methods adding irrelevant terms, which decreases precision.

- Limited Scope of Mention Context (Query Limitation). Limited scope of mention context refers to focusing on isolated terms rather than the full context, which restricts the understanding of complex queries.

- Growing Gap with LLM-Generated Queries (Query Limitation). The growing gap with LLM-generated queries refers to misalignment between natural language queries and keyword-based retrieval, which reduces effectiveness.

- Limited Language Support (English Only) (Deployment Limitation). Limited language support refers to sparse systems being optimized primarily for English, which limits multilingual deployment.

- Deployment Complexity in Cloud Services (Deployment Limitation). Deployment complexity refers to challenges in integrating advanced sparse models into scalable cloud environments.

- Increased Latency with Bi-encoder Mode (Deployment Limitation). Increased latency refers to slower performance when combining sparse retrieval with neural components, which affects real-time systems.

- Inability to Reach Highest Recall Levels Alone (Effectiveness Limitation). Inability to reach the highest recall levels refers to missing relevant documents that lack exact keyword overlap, which limits completeness.

- Missing Relevant Items Surfaced by Dense Retrievers (Completeness Limitation). Missing relevant items refers to the failure to retrieve semantically relevant documents, which dense models would capture.

- Doc2Query Backfiring on Minor Details (Specific Model Limitation). Doc2Query backfiring refers to query expansion generating misleading terms, which harms precision for specific queries.

- DeepImpact Tokenization Issues (Specific Model Limitation). DeepImpact tokenization issues refer to inconsistencies in token weighting, which affect retrieval accuracy.

- TILDEv2 Single Scalar Importance Score Insufficiency (Specific Model Limitation). TILDev2 limitation refers to using a single importance score per token, which oversimplifies semantic contribution.

- UniCOIL Loss of Deeper Semantic Understanding (Specific Model Limitation). UniCOIL limitation refers to reduced semantic depth due to term reweighting approaches, which limits contextual understanding.

- SPARTA Not Sparse Enough by Construction (Specific Model Limitation). SPARTA limitation refers to generating dense-like representations, which reduces the efficiency benefits of sparsity.

- SPARTA: Lack of Additional Neural Networks (Specific Model Limitation). SPARTA’s limitation also includes the absence of deeper modeling layers, which restricts learning capacity.

- SPLADE Unsuitability for Tight Latency Constraints (Specific Model Limitation). SPLADE limitation refers to the high computational cost during inference, which increases latency.

- SPLADE Not Fixing Cold-Start Problems (Specific Model Limitation). SPLADE limitation refers to reliance on existing data distributions, which limits performance for new or unseen content.

- SPLADE Less Necessary in Limited Vocabulary Domains (Specific Model Limitation). SPLADE limitation refers to reduced advantage in domains with controlled vocabularies, which makes simpler methods sufficient.

Sparse retrieval limitations show that sparse retrieval prioritizes precision and efficiency but requires hybrid integration with dense retrieval to achieve semantic coverage and high recall.

When Should You Use Dense Retrieval vs. Sparse Retrieval?

Dense retrieval vs sparse retrieval selection depends on query type, resource constraints, and retrieval goals, where dense retrieval fits semantic search and sparse retrieval fits keyword search. Dense vs sparse retrieval decision-making aligns with semantic search vs keyword search requirements, which determine accuracy, cost, and system complexity.

The use cases for dense retrieval vs sparse retrieval are listed below.

- Use Sparse Retrieval for Exact Matching (Keyword Search Scenario).

Sparse retrieval fits queries that require exact term matching (error codes, product SKUs, API names). Sparse retrieval uses the BM25 algorithm scoring, which ensures high precision and sub-millisecond latency with inverted index lookup. - Use Sparse Retrieval for Low-Cost, High-Speed Systems (Efficiency Scenario).

Sparse retrieval fits systems with strict latency and cost constraints. Sparse retrieval runs on CPU indexing and requires minimal memory (≈0.1GB for 100K documents), which reduces cost per 1M queries to $0.01-$0.10. - Use Sparse Retrieval for Explainability (Compliance Scenario).

Sparse retrieval fits environments that require transparent ranking (legal, finance, healthcare). Sparse retrieval exposes term-level weights, which enables full auditability and deterministic debugging. - Use Dense Retrieval for Semantic Search (Meaning-Based Scenario).

Dense retrieval fits queries that require semantic similarity and contextual understanding. Dense retrieval encodes meaning through dense vectors, which improves relevance by 10-20% on MS MARCO. - Use Dense Retrieval for Complex or LLM-Generated Queries (AI Search Scenario).

Dense retrieval fits natural language and paraphrased queries. Dense retrieval captures conceptual meaning, which increases recall and reduces vocabulary mismatch in conversational search. - Use Dense Retrieval for Modern AI Systems (RAG and NLP Scenario).

Dense retrieval fits Retrieval-Augmented Generation pipelines and recommendation systems. Dense retrieval provides relevant context to language models, which increases factual accuracy by 20-30%. - Use Hybrid Retrieval for Maximum Performance (Combined Scenario).

Hybrid retrieval combines dense retrieval and sparse retrieval. Hybrid retrieval increases recall by 15-30% and achieves up to 95% retrieval performance by merging semantic similarity with keyword precision.

Dense retrieval vs sparse retrieval selection requires aligning the retrieval method with query intent, system constraints, and performance goals, where hybrid retrieval provides the highest overall effectiveness across semantic and keyword search scenarios.

What is Hybrid Retrieval?

Hybrid retrieval is a retrieval method that combines dense retrieval and sparse retrieval to improve both semantic similarity and keyword matching, which increases recall and precision in search systems. Hybrid retrieval merges neural retrieval (dense vectors) with lexical retrieval (BM25 algorithm) in a single pipeline, where hybrid retrieval balances semantic search vs keyword search to overcome individual limitations. Dense retrieval captures meaning through embeddings, while sparse retrieval ensures exact term matching through inverted index structures, which creates a more robust retrieval process.

What components define hybrid retrieval systems? Hybrid retrieval systems consist of dense semantic retrieval and sparse lexical retrieval operating together with a fusion mechanism. Dense retrieval encodes queries and documents into vectors and retrieves results using approximate nearest neighbor search (HNSW, FAISS). Sparse retrieval indexes terms using inverted index structures (Elasticsearch, OpenSearch) and ranks results with the BM25 algorithm. Fusion methods (Reciprocal Rank Fusion, weighted scoring) combine outputs into a unified ranking.

What performance improvements does hybrid retrieval provide? Hybrid retrieval improves recall by 15-30% and increases relevance by 15-25% compared to single-method retrieval systems. Hybrid retrieval achieves higher NDCG@10 scores by combining semantic similarity with lexical precision. Benchmarks show hybrid retrieval increases recall from 70-80% (dense only) to higher levels and produces up to 18% more actionable results in domain-specific tasks.

What role does hybrid retrieval play in modern AI systems? Hybrid retrieval acts as the foundation for Retrieval-Augmented Generation systems, where hybrid retrieval supplies accurate context to large language models. Hybrid retrieval reduces hallucination risk and improves factual accuracy by combining precise keyword matches with semantically relevant context. Hybrid retrieval integrates into frameworks (LangChain, Haystack, Weaviate) and supports production-grade AI search systems.

Hybrid retrieval establishes a balanced retrieval strategy that outperforms dense retrieval and sparse retrieval alone, which makes hybrid retrieval the default approach for high-quality semantic and keyword search systems.

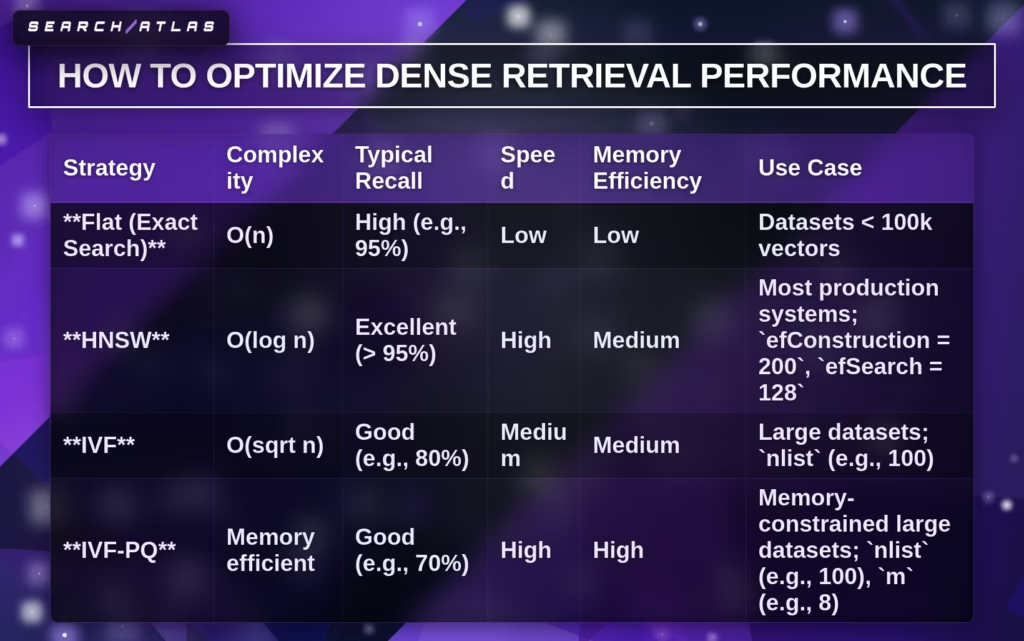

How to Optimize Dense Retrieval Performance?

Dense retrieval performance optimization refers to improving retrieval accuracy, efficiency, and scalability through training strategies, indexing methods, and query optimization techniques. Dense retrieval optimization focuses on embedding quality, negative sampling, ANN indexing, and system-level performance tuning, which directly impact ranking quality and latency.

What are the most effective training strategies for dense retrieval? Effective training strategies for dense retrieval include dynamic negative sampling, STAR, ADORE, and LTRe, which improve ranking performance and training efficiency. Dynamic hard negatives replace static negatives, which stabilizes training and improves top-k ranking. STAR increases MRR@10 by 13% and delivers 20x faster training compared to ANCE. ADORE improves ranking performance by 20-22% and achieves 179x speed improvement. LTRe removes negative sampling and provides over 170x training speed-up with better retrieval accuracy.

What embedding optimization techniques improve dense retrieval? Embedding optimization techniques in dense retrieval include model selection, fine-tuning, and dimensionality control, which improve semantic representation quality. High-performance models (text-embedding-3-large, BGE-base-en-v1.5) increase accuracy through higher-dimensional embeddings (768-3072). Fine-tuning with triplet loss and hard negatives aligns embeddings with task-specific relevance. Dimensionality reduction (Matryoshka representation) reduces memory by 4x-12x while preserving 90-95% accuracy.

What indexing strategies optimize dense retrieval performance? Indexing strategies in dense retrieval use approximate nearest neighbor algorithms (FAISS: HNSW, IVF, IVF-PQ) to balance recall, speed, and memory usage. HNSW achieves >95% recall with logarithmic complexity, which fits production systems. IVF reduces search space with cluster-based indexing, while IVF-PQ compresses vectors for high memory efficiency. Parameter tuning (efSearch=128, nlist=100) increases retrieval accuracy with controlled latency.

What query and retrieval optimizations improve performance? Query optimization in dense retrieval includes query expansion, caching, and instruction tuning, which increase retrieval relevance and efficiency. Query expansion generates multiple semantic variations, which improves recall. Query caching stores up to 10,000 embeddings, which reduces redundant computation. Instruction prefixes improve embedding alignment for asymmetric retrieval tasks.

What relevance tuning methods improve dense retrieval accuracy? Relevance tuning in dense retrieval uses re-ranking and score calibration to improve top-k result quality. Two-stage retrieval applies bi-encoder retrieval (top 100) followed by cross-encoder re-ranking (top 10), which increases NDCG@10. Score normalization (Z-score, sigmoid) standardizes similarity values for consistent ranking.

What system-level optimizations improve dense retrieval scalability? System-level optimization in dense retrieval includes GPU acceleration, batching, and distributed retrieval, which increase throughput and reduce latency. Batched retrieval processes multiple queries simultaneously, which improves throughput. GPU acceleration (FAISS GPU) speeds up vector search operations. Distributed retrieval uses shard-based search with parallel execution, which enables large-scale deployment.

How to Optimize Sparse Retrieval Performance?

Sparse retrieval performance optimization focuses on query expansion, ranking function tuning, neural sparse models, and index acceleration, which improve recall, latency, and precision in keyword-based retrieval systems. Sparse retrieval optimization strengthens lexical matching through BM25 tuning, learned sparse expansion, pruning methods, and hybrid retrieval. Sparse retrieval performance increases when the system adjusts term weighting, reduces index overhead, and refines queries for better token overlap. OpenSearch reported Recall@4 improvement from 0.215 with BM25 to 0.362 with hybrid dense-sparse retrieval on BEIR/fiqa.

What are the main ways to optimize sparse retrieval performance? There are 5 main ways to optimize sparse retrieval performance. Firstly, tune ranking functions (BM25, TF-IDF). Secondly, improve query formulation (rewriting, fuzzy matching, synonym dictionaries). Thirdly, use learned sparse retrieval models (DeepCT, SparTerm, SPLADE). Fourthly, accelerate retrieval with pruning and compact index structures. Fifthly, combine sparse retrieval with reranking or hybrid retrieval. Each method targets a specific bottleneck, where term weighting improves ranking quality, query reformulation improves lexical coverage, and indexing strategies reduce latency.

What ranking functions improve sparse retrieval performance? The BM25 algorithm and TF-IDF improve sparse retrieval performance through stronger term weighting and document length normalization. BM25 extends TF-IDF with term frequency saturation and length control through k1 and b. BM25 tuning starts with k1 = 1.5 and b = 0.75, then uses grid search on a validation set. TF-IDF uses TF-IDF(t,d) = tf(t,d) × log(N/df(t)), where rare terms receive higher weight. BM25 remains the standard baseline because BM25 produces strong keyword relevance with low computational cost.

What query optimization methods improve sparse retrieval performance? Query rewriting, fuzzy queries, synonym dictionaries, and metadata filtering improve sparse retrieval performance by increasing lexical overlap between queries and documents. Query rewriting rephrases the original query into terms that match indexed text more directly. Fuzzy queries recover spelling variations and minor token differences. Synonym dictionaries expand exact-match coverage across equivalent terms. Metadata filtering narrows the candidate set with structured fields (source, category, type), which reduces noise and increases ranking precision.

What learned sparse models improve sparse retrieval performance? DeepCT, Doc2Query, SparTerm, and SPLADE improve sparse retrieval performance by adding contextual term importance and learned expansion to lexical retrieval. Doc2Query generates synthetic queries per document and appends them before indexing, which increases first-stage retrieval performance on MS MARCO and TREC-CAR. DeepCT predicts token importance from context and inserts weighted terms into the inverted index. SparTerm combines importance prediction with gating for better term selection. SPLADE applies learned expansion with sparsity regularization, which produced the strongest results across multiple datasets while retaining inverted index retrieval.

What makes SPLADE important in sparse retrieval optimization? SPLADE is important in sparse retrieval optimization because SPLADE combines semantic expansion with sparse index efficiency. SPLADE uses learned term weights and sparsity regularization to expand document and query vocabulary without abandoning lexical indexing. FLOPS regularization reduces frequently activated terms, which lowers the runtime cost and balances the postings distribution. SPLADE-v2 improves pooling through max aggregation, which produced stronger retrieval effectiveness than earlier summation-based variants. SPLADE reached effectiveness close to state-of-the-art dense retrieval while preserving interpretability.

What performance bottlenecks limit neural sparse retrieval? Model inference is the main bottleneck in neural sparse retrieval performance. BM25 showed a constant latency of 5.4 ms, document-only sparse retrieval showed 8.7 ms, and bi-encoder sparse retrieval showed 265.4 ms because model inference adds major overhead. Inverted index search cost rose from 2.80 ms per 1M documents for BM25 to 5.15 ms for document-only sparse retrieval and 20.58 ms for bi-encoder sparse retrieval. Neural sparse search still improved NDCG@10 by 12.7% to 20% over baseline setups, but inference cost remains the main trade-off.

What acceleration methods improve sparse retrieval speed? Horizontal scaling, Lucene upgrades, and GPU inference improve sparse retrieval speed. Horizontal scaling distributes documents across shards and nodes, which reduces per-node search load. OpenSearch 2.12 with Lucene 9.9 reduced P99 search latency by 72% in document-only mode and 76% in bi-encoder mode versus OpenSearch 2.11 with Lucene 9.7. GPU inference reduced P99 bi-encoder latency by 73% and increased neural sparse ingestion throughput by 234%. Mixed-precision inference and batch processing increase throughput further.

What pruning and indexing methods optimize sparse retrieval latency? Lightweight Superblock Pruning, Block-Max Pruning, and compact index structures optimize sparse retrieval latency by reducing postings traversal and index size. LSP/0 ran 1.8x-17x faster than Superblock Pruning and 1.8x to 12x faster than Block-Max Pruning on MS MARCO. BMP delivered 2x-60x faster safe retrieval than earlier dynamic pruning methods. Flat-Inv reduced storage by up to 6.2x versus BMP-Inv, while Forward Index reduced storage by up to 9x in larger block settings. These methods improve speed without major relevance loss.

What system design improves sparse retrieval quality the most? Hybrid retrieval with sparse first-stage retrieval and reranking improves sparse retrieval quality the most. Sparse retrieval identifies high-precision candidates through BM25 or learned sparse indexing, and then the rerankers refine the final order. Reranking improves top-result quality, while hybrid dense-sparse retrieval improves recall. A practical sparse-first architecture uses chunking, sparse retrieval, reranking, and response generation in sequence, where each stage narrows error and increases relevance.

Sparse retrieval performance optimization requires ranking tuning, query refinement, learned sparse expansion, and index acceleration, because sparse retrieval improves most when lexical precision and system efficiency improve together.

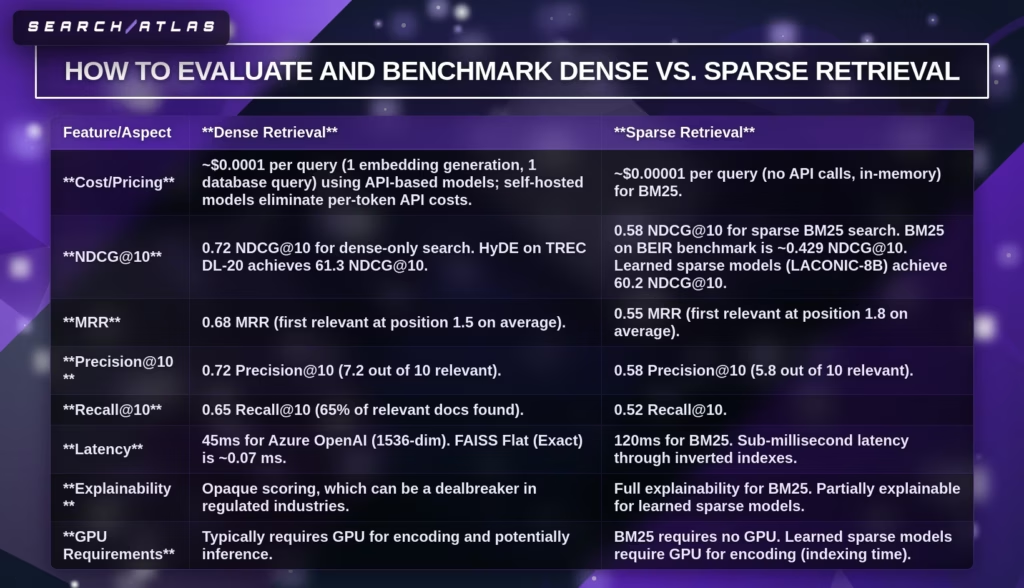

How to Evaluate and Benchmark Dense vs. Sparse Retrieval?

Evaluating and benchmarking dense vs sparse retrieval measures, retrieval quality, efficiency, and cost using standardized metrics and datasets, which determine system performance across semantic search vs keyword search. Dense vs sparse retrieval evaluation compares ranking quality, latency, and infrastructure requirements through benchmarks (BEIR, MS MARCO) and metrics (NDCG@10, MRR, Recall@k).

The evaluation methods for dense vs sparse retrieval are listed below.

- Measure Ranking Quality (NDCG@10, MRR, Precision@k).

- Measure Recall and Coverage (Recall@k).

- Measure Latency and Throughput (ms/query).

- Measure Cost per Query ($ per 1M queries).

- Evaluate Explainability and Transparency.

- Benchmark Across Standard Datasets (BEIR, MS MARCO).

- Compare Hybrid vs Single Retrieval Performance.

What metrics measure ranking quality in dense vs sparse retrieval? NDCG@10, MRR, and Precision@k measure ranking quality in dense vs sparse retrieval by evaluating result relevance and ranking order. Dense retrieval achieves ~0.72 NDCG@10 and 0.68 MRR, while sparse retrieval achieves ~0.58 NDCG@10 and 0.55 MRR. Precision@10 shows 0.72 for dense retrieval and 0.58 for sparse retrieval. These metrics quantify how well top-ranked results match user intent.

What metrics measure recall in dense vs sparse retrieval? Recall@k measures how many relevant documents retrieval systems return within the top-k results. Dense retrieval achieves ~0.65 Recall@10, while sparse retrieval achieves ~0.52. Hybrid retrieval improves recall by 15-30%, which increases coverage of relevant documents in mixed-query environments.

What metrics measure latency and efficiency in dense vs sparse retrieval? Latency and throughput measure system speed and scalability in dense vs sparse retrieval. Dense retrieval processes queries in ~45 ms with embedding generation, while sparse retrieval achieves sub-millisecond latency through inverted index lookup. ANN search adds 10-100 ms latency at scale, while BM25 remains near constant time.

What metrics measure cost in dense vs sparse retrieval? Cost per query measures infrastructure and API expenses in dense vs sparse retrieval systems. Dense retrieval costs ~$0.0001 per query due to embedding generation, while sparse retrieval costs ~$0.00001 per query with in-memory indexing. Hybrid retrieval combines both costs but delivers higher ROI through improved accuracy.

What benchmarks validate dense vs sparse retrieval performance? BEIR and MS MARCO benchmarks validate dense vs sparse retrieval performance across diverse datasets and query types. BM25 achieves ~0.429 NDCG@10 on BEIR, while dense models reach 64.6 to 70.6 on MTEB benchmarks. Learned sparse models (LACONIC-8B) reach 60.2 NDCG@10, which narrows the gap.

What role do hybrid benchmarks play in evaluation? Hybrid retrieval benchmarks measure the combined performance of dense and sparse retrieval systems. Hybrid retrieval achieves ~0.85 NDCG@10 and ~0.82 MRR, which outperforms dense-only (0.72 NDCG@10) and sparse-only (0.58 NDCG@10). Hybrid systems provide consistent performance across semantic and keyword queries.

Dense vs sparse retrieval evaluation requires combining ranking metrics, recall analysis, latency measurement, and cost benchmarking, which ensures accurate comparison across semantic and keyword search systems.

What is the Future of Dense and Sparse Retrieval?

The future of dense and sparse retrieval is hybrid retrieval systems that combine semantic similarity and lexical matching to maximize recall, precision, and efficiency. Dense and sparse retrieval evolve toward unified pipelines, where hybrid retrieval becomes the default architecture for production search and Retrieval-Augmented Generation systems. Hybrid retrieval improves recall by 15-30% and increases business value by ≈$1,500 per month for systems with 100,000 documents and 1,000 daily queries.

What architecture defines the future retrieval systems? Future retrieval systems use a two-stage hybrid architecture where sparse retrieval generates candidates and dense retrieval reranks results. Sparse retrieval (BM25 algorithm) retrieves hundreds to thousands of documents through inverted index lookup with sub-millisecond latency. Dense retrieval encodes semantic meaning through embeddings and refines top-k results using approximate nearest neighbor search. Fusion methods (Reciprocal Rank Fusion) combine both outputs into a single ranked list.

How do dense and sparse retrieval roles evolve in hybrid systems? Sparse retrieval remains the first-stage retriever for speed and precision, while dense retrieval becomes the semantic reranking layer for contextual relevance. Sparse retrieval ensures exact term coverage and low-cost execution. Dense retrieval improves semantic understanding and resolves vocabulary mismatch. This division of roles creates stable and scalable retrieval pipelines.

What challenges shape the future of dense retrieval? Dense retrieval faces challenges in scalability, cost, latency, and interpretability, which drive innovation in compression and indexing. Dense retrieval requires large memory (≈60GB for 10M documents) and GPU processing, which increases cost. Dense retrieval struggles with exact matching and explainability. Sparse embedding compression reduces memory usage while preserving retrieval quality.

What technological trends define future retrieval systems? Future retrieval systems integrate agentic search, multimodal retrieval, and cognitive search capabilities powered by large language models. Retrieval systems expand beyond text into images and multimodal data using vector representations. Agentic retrieval performs multi-step search workflows to achieve user goals. Cognitive search shifts from relevance metrics to intent satisfaction and task completion.

What scalability strategies define future hybrid retrieval? Future hybrid retrieval systems scale through ANN indexing strategies (HNSW, IVF-PQ) and distributed architectures. HNSW supports datasets of 100,000-10 million vectors with high recall. IVF-PQ enables compression for datasets above 10 million vectors. Distributed retrieval uses shard-based architectures with parallel search and global reranking.

Dense and sparse retrieval converge into hybrid, multimodal, and agent-driven systems, where retrieval evolves from keyword and semantic matching into goal-oriented, context-aware information access.