The landscape of ChatGPT alternatives is characterized by a diverse array of models, with 32 direct competitors identified across major tech companies, aggregators, and open-source platforms. Key alternatives like Search Atlas, Claude, Gemini, and Meta AI demonstrate distinct advantages. Claude, for instance, offers a 500,000-token context window (up to 1 million tokens for Sonnet 4), significantly exceeding ChatGPT’s 128,000 tokens, and its Sonnet 4 model took first place in coding tests. Gemini, integrated with the Google ecosystem, provides 2 TB of storage and a 2 million-token context window with Gemini 1.5 Pro for $19.99/month, and in a July 2024 study it achieved 100% accuracy on academic research tasks, outperforming ChatGPT-3.5. Meta AI, built on Llama 3/4, reported nearly 600 million monthly users as of December, compared with the 400 million weekly active users OpenAI reported for ChatGPT in February (one figure is monthly and the other weekly, so they are not directly comparable), and offers basic functionalities for free.

Further analysis reveals specialized strengths among alternatives. Amazon Nova Pro is reported to be 65.26% more cost-effective than GPT-4o and 97% faster, with an average latency of 1.68 seconds. Microsoft Phi-3.5, a smaller model, rivals GPT-4o-mini in STEM and social sciences, with Phi-4-Reasoning outperforming models 50 times its size in Olympiad-grade math. Grok provides real-time information from X and scored 74.9% in coding (SWE-bench Verified). DeepSeek offers API input at $0.07/million tokens, substantially less than OpenAI GPT-4.1’s $2/million tokens, and is cited as 10x better for PySpark code generation. Kimi K2.5 is 96.5% cheaper than GPT-4 for 1 million tokens, costing $3.10, and features a 262K-token context window. Pi, a personal AI, focuses on emotional support and conversational quality, while Llama, an open-source model, is 30 times cheaper than GPT-4 and has over 65,000 derived models in its community.

Aggregator platforms like Nexos AI, i10x AI, and Writingmate.ai consolidate access to over 200 AI models, offering significant cost savings (e.g., i10x AI claims 90% savings) and enhanced workflows. Saner.AI provides ADHD-friendly workflows and integrates multiple models; its “Skai” assistant is claimed to be 60% smarter and to make 80% fewer errors as of December 2025. Zapier Agents and Chatbots offer automation-first solutions, integrating with over 8,000 apps. Privacy-focused alternatives like Jan, Lumo by Proton, and Internxt AI emphasize local-first operation, zero-access encryption, and GDPR compliance, and Internxt AI is currently free and unlimited. HuggingChat, a 100% open-source platform, prioritizes user privacy and transparency. Users seek alternatives because of ChatGPT’s perceived decline in search quality, a reported 15-20% chance of incorrect information, and the daily limits on its free tier, as reported on July 21, 2024.

What are the ChatGPT Alternatives?

Direct alternatives to ChatGPT are listed below.

- Search Atlas (All-in-One SEO tool)

- Claude (Major Tech Company Model)

- Gemini (Major Tech Company Model)

- Meta AI (Major Tech Company Model)

- Copilot (Major Tech Company Model)

- Amazon Nova (Major Tech Company Model)

- Amazon Nova Pro (Major Tech Company Model)

- Microsoft Phi (Major Tech Company Model)

- Grok (Major Tech Company Model)

- ChatOn (Major Tech Company Model)

- DeepSeek (Major Tech Company Model)

- Kimi (Major Tech Company Model)

- Pi (Major Tech Company Model)

- Llama (Major Tech Company Model)

- Nexos AI (Aggregator/Specialized Platform)

- Sintra AI (Aggregator/Specialized Platform)

- Poe (Aggregator/Specialized Platform)

- i10x AI (Aggregator/Specialized Platform)

- Writingmate.ai (Aggregator/Specialized Platform)

- Saner.AI (Aggregator/Specialized Platform)

- Zapier Agents (Aggregator/Specialized Platform)

- Zapier Chatbots (Aggregator/Specialized Platform)

- Jan (Open-Source/Privacy-Focused Model)

- Mistral.ai / Mistral (Open-Source/Privacy-Focused Model)

- NLP Cloud (Open-Source/Privacy-Focused Model)

- Lumo by Proton (Open-Source/Privacy-Focused Model)

- Internxt AI (Open-Source/Privacy-Focused Model)

- YouChat by You.com (Open-Source/Privacy-Focused Model)

- HuggingChat (Open-Source/Privacy-Focused Model)

- NanoGPT (Open-Source/Privacy-Focused Model)

- Maple AI (Open-Source/Privacy-Focused Model)

- Naga AI (Open-Source/Privacy-Focused Model)

1. Search Atlas

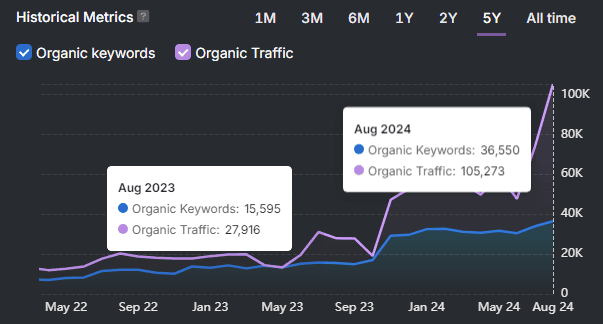

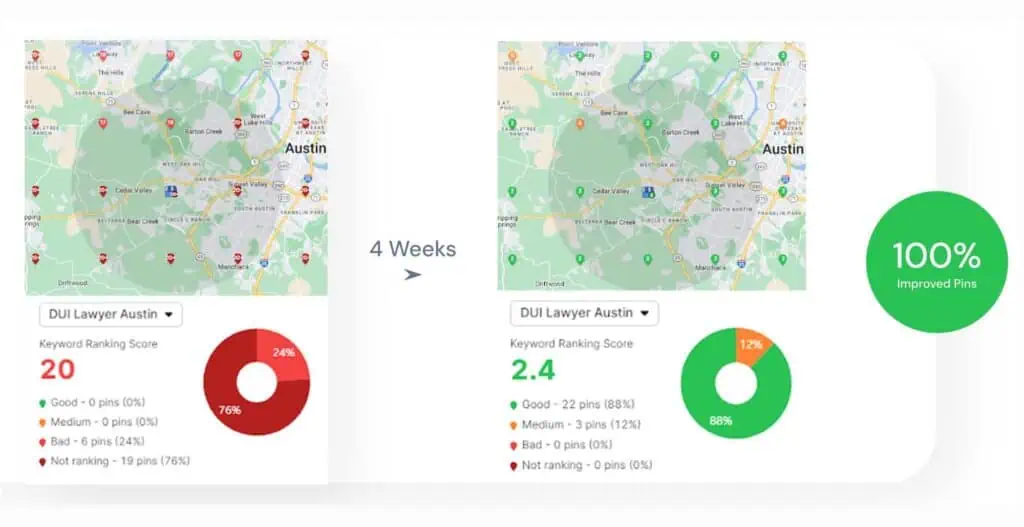

Search Atlas is a ChatGPT alternative because it combines AI content generation with SEO intelligence, automates optimization through OTTO SEO, integrates keyword research and SERP analysis into content workflows, replaces multiple SEO tools within one unified platform, and focuses on governing search and AI visibility rather than only conversational responses.

How does Search Atlas combine AI generation with SEO intelligence? Search Atlas integrates keyword research, competitor analysis, and SERP intelligence directly into content creation workflows. The platform analyzes search intent, top-ranking pages, and entity coverage to guide content strategy. ChatGPT primarily generates responses and text, while Search Atlas connects AI generation with SEO data and search performance signals.

Why is OTTO SEO automation a major differentiator? Search Atlas includes OTTO SEO, an automation engine that identifies SEO opportunities and deploys improvements directly on websites. OTTO SEO applies schema markup, strengthens internal linking structures, and optimizes on-page elements automatically. ChatGPT generates insights and content but does not implement optimization changes on websites.
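
For readers unfamiliar with schema markup, the sketch below shows the kind of structured data involved: a schema.org `Article` object serialized as JSON-LD and wrapped in the script tag that search engines read. It is a generic illustration built with Python’s standard `json` module, not OTTO SEO’s actual output or implementation; the function name, headline, author, and date are hypothetical placeholders.

```python
import json


def article_jsonld(headline: str, author: str, date_published: str) -> str:
    """Build a schema.org Article object and wrap it in a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date string
    }
    # application/ld+json is the script type used for embedding JSON-LD in HTML.
    return '<script type="application/ld+json">' + json.dumps(data, indent=2) + "</script>"


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    print(article_jsonld("ChatGPT Alternatives", "Jane Doe", "2025-01-15"))
```

An automation engine would generate and inject blocks like this per page; the sketch only shows the shape of the markup, not how a given platform decides what to emit.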

What role does Content Genius play in content creation? Content Genius generates structured SEO-ready content drafts aligned with keyword targets and search intent. The system analyzes top-ranking SERP pages and recommends headings, semantic terms, and internal linking opportunities. ChatGPT produces general text, while Search Atlas generates content specifically designed for ranking in search engines.

How does Search Atlas replace multiple SEO tools? Search Atlas consolidates keyword tracking, backlink intelligence, technical SEO auditing, competitor analysis, and content optimization within one operational system. This unified architecture eliminates the need for multiple SEO tools and fragmented workflows. ChatGPT operates primarily as a general-purpose AI assistant rather than a complete SEO platform.

Why is Search Atlas designed for search and AI visibility strategy? Search Atlas focuses on managing visibility across traditional search engines and AI-generated answers. The platform connects content generation, technical SEO automation, backlink intelligence, and SERP monitoring to control how websites appear in search results and AI responses. ChatGPT focuses on conversational AI rather than search performance management.

2. Claude

Claude is a ChatGPT alternative because it prioritizes humanity and ethics in its design, offers superior coding and development capabilities, excels in technical knowledge and long-form analysis, provides a more expressive and natural writing style, features an industry-leading context window, and demonstrates unmatched logic and reasoning.

How does Claude’s ethical design contribute to its role as an alternative? Anthropic, founded by former OpenAI researchers, designed Claude as an alternative to ChatGPT’s approach, with a stated mission to prioritize humanity and ethics. This focus aims to create an AI that is more predictable, safer, and aligned with user values, offering a distinct philosophical approach compared to other AI tools.

Why are Claude’s coding capabilities superior? Users describe Claude as “amazing” for writing code and recommend Claude Code with a Claude Pro or Max subscription. Many programmers report that GPT-4o lags behind Claude Sonnet 4 in coding ability and that even o1-mini does not match it. In one test, Claude took first place in coding, with its Sonnet 4 model winning on both design and code when creating a simple e-shop. Claude is also preferred for coding because it remembers instructions and conversation context, whereas ChatGPT “keeps forgetting” and requires constant reminders. One user reported that Claude made only 2 errors in a coding session, compared with at least 4 from ChatGPT.

What makes Claude excel in technical knowledge and long-form analysis? Claude’s “writing and understanding skills are exceptional,” making it better for “technical writing” and “writing for LinkedIn/Substack.” For academic literature analysis, Claude interpreted everything correctly, while ChatGPT was “about 80% correct but the rest was completely inaccurate.” Claude Opus 4.5 is “so much better than ChatGPT at analyzing dense material,” even with mis-transcribed lecture transcripts. Claude is also the winner for summarizing long documents: it broke a 130-page PDF down into clear sections, bullet points, and suggested headlines within seconds, through a less clunky process and with a less generic summary than ChatGPT’s.

How does Claude’s writing style offer a distinct alternative? Claude is “the thoughtful one,” trained to be a better listener, with a “gentle, less flashy vibe.” Claude Sonnet 4 sounds more natural and human out of the box than GPT-4o, which can feel generic and overuse certain phrases, and Claude is more expressive overall than ChatGPT, whose writing can be dry and academic. Its responses read more like a human’s, offering concise answers without excessive jargon or repetition. It writes in a variety of styles (conversational, casual, professional, humorous) with largely successful results.

Why is Claude’s context window a significant advantage? Claude offers a context window of up to 500,000 tokens, significantly larger than ChatGPT’s 128,000 tokens. Claude Sonnet 4 supports up to 1 million tokens (roughly 750,000 words or 75,000 lines of code). This allows for the analysis of entire novels, multiple novels, or dozens of research papers in a single request, including works like “Infinite Jest” in its entirety. Claude reads the entire conversation history every time it generates a reply, helping it maintain long-term context and refer back to earlier parts reliably, minimizing errors due to “forgetting.”
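
The token-to-word equivalence above (1 million tokens for about 750,000 words) can be turned into a quick estimate of what fits in each context window. This is a rough sketch that assumes a flat ratio of 0.75 English words per token, taken from that equivalence; real tokenizers vary by model, language, and content (code in particular tokenizes differently). The helper names are hypothetical.

```python
# Ratio implied by "1,000,000 tokens ≈ 750,000 words"; real tokenizers vary.
WORDS_PER_TOKEN = 0.75

CONTEXT_WINDOWS = {
    "ChatGPT (128K)": 128_000,
    "Claude (500K)": 500_000,
    "Claude Sonnet 4 (1M)": 1_000_000,
}


def tokens_to_words(tokens: int) -> int:
    """Approximate how many words fit in a given number of tokens."""
    return round(tokens * WORDS_PER_TOKEN)


def words_to_tokens(words: int) -> int:
    """Approximate how many tokens a text of `words` words will consume."""
    return round(words / WORDS_PER_TOKEN)


def fits(words: int, window_tokens: int) -> bool:
    """True if a document of `words` words is estimated to fit in the window."""
    return words_to_tokens(words) <= window_tokens


if __name__ == "__main__":
    for name, window in CONTEXT_WINDOWS.items():
        print(f"{name}: ~{tokens_to_words(window):,} words")
```

Under this ratio, a 128K window holds roughly 96,000 words and a 500K window roughly 375,000 words, so book-length inputs are where the larger windows matter. Any such estimate should leave headroom for the prompt and the model’s reply, which share the same window.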

What demonstrates Claude’s unmatched logic and reasoning? Users report that Claude’s “logic is unmatched.” Claude 4 significantly improved reasoning with hybrid models (Opus 4.1 and Sonnet 4) that can switch between fast responses and extended thinking, excelling at complex problem-solving, advanced coding, and multi-step reasoning. In LiveBench reasoning, Sonnet 4 Thinking (95.25) and o3 High (93.33) scored highest, with Opus 4 Thinking third at 90.47, while GPT-4.1 scored 44.39. Even when Claude fails, it “usually gives me something valuable: an insight I hadn’t considered that I can use to figure things out myself.”

3. Gemini

Gemini is a ChatGPT alternative because of its superior integration with the Google ecosystem, advanced coding capabilities, enhanced image and video generation, competitive pricing and value, robust deep research abilities, and less restrictive content policies.

How does Gemini’s integration with the Google ecosystem make it an alternative? Gemini connects seamlessly with Google products, including Drive, Docs, Calendar, Gmail, Maps, Keep, Photos, and YouTube Music, alongside the 15 GB of free cloud storage that comes with a Google account. This “defining feature” allows users to pull information from emails, documents, and calendars, and AI Studio can develop apps with Drive access. ChatGPT does not offer similar bundled benefits or integrations.

Why are Gemini’s coding capabilities a significant advantage? Gemini excels in coding assistance, with a game made “99% Gemini” reaching nearly 80,000 lines of code and working “just fine.” Google AI Studio is used for coding, and solutions are “much more efficient than ChatGPT with less bugs and quicker,” often including many fine comments for beginners. ChatGPT struggled to process 2,400 lines of Google AI code and lacked comments.

What makes Gemini’s image and video generation competitive? Gemini, integrated with Nano Banana, generates “nearly perfect pictures so quick” and “photoshopped images so damn quick,” often with “more detail” and “higher resolution.” Google released Veo and Imagen 3 models in May 2024, with Veo 3.1 generating “realistic videos with audio” and a Flow tool for extending clips. While conflicting research exists, Ethan Mollick concluded Gemini’s premium Imagen 3 “leads the pack.” ChatGPT’s images are often “out of what they should be” and “super slow.”

How does Gemini offer competitive pricing and value? Gemini’s premium plans start at $8 per month, with Gemini Advanced at $19.99 per month, including 2 TB of storage and Gemini 1.5 Pro with a 2 million-token context window. This makes Gemini the “top pick overall due to incredible value and wide variety of different features,” especially with bundled Google Drive cloud storage and Workspace integrations. ChatGPT Plus costs $20 monthly and does not offer similar bundled benefits.

Why are Gemini’s deep research abilities a strong alternative? Gemini often accesses “far more sources” than ChatGPT during research. In a study published in the Journal of Academic Ethics in July 2024, Gemini delivered 100% accurate results for academic research, outperforming ChatGPT-3.5 (70%). Its report interface is “easier to navigate” and includes a “one-click way to export reports to Google Docs.” Other recent research also found Gemini’s responses more relevant, more thorough, and better supported by references.

4. Meta AI

Meta AI is a ChatGPT alternative because it offers similar core functionalities like text and image generation, provides unique features such as real-time image updates and voice mode, leverages a more cost-efficient underlying AI model (Llama 3/4), benefits from extensive integration across Meta’s platforms, boasts a massive existing user base of nearly 600 million monthly users, and offers basic functionalities for free.

How does Meta AI’s core functionality compare to ChatGPT? Meta AI is designed as a direct competitor to ChatGPT, offering similar capabilities such as summarizing news articles, rewriting emails, and generating images and text. Users can ask Meta AI to write blog posts or create images, directly mirroring the primary functions of OpenAI’s offering. Meta launched a standalone AI app to directly compete in the “larger AI race.”

What unique features differentiate Meta AI? Meta AI includes real-time image generation, updating output as prompts are typed, and the ability to animate generated images into GIFs. Its voice mode is described as “slicker,” allowing interruption mid-sentence and continued understanding, even while using other apps, contrasting with ChatGPT’s “more turn-based” voice chat. Meta AI also features a Discover Feed displaying celebrity memes and practical advice, showing diverse usage.

Why is Meta AI’s underlying model a competitive advantage? Meta AI runs mostly on Llama 3, with Llama 4 powering some features, and the standalone app uses the Llama 4 model released in April 2025. Meta touts Llama 4 as more cost-efficient than competitor models like Gemini, GPT, and DeepSeek, potentially allowing for broader accessibility and scalability compared to ChatGPT’s varied premium subscription options.

How does Meta’s integration strategy position Meta AI? Meta AI has been integrated into Facebook, WhatsApp, and Instagram, allowing users to chat with the AI assistant directly within these platforms, similar to ChatGPT’s private message functionality. This deep integration leverages Meta’s existing social media infrastructure, where Meta AI has long been used for comment moderation and news feed personalization.

What user base advantage does Meta AI possess? Meta AI had nearly 600 million monthly users as of December, while OpenAI reported 400 million weekly active users for ChatGPT in February; because one figure counts monthly users and the other weekly users, the two are not directly comparable. Meta’s access to “rich and diverse data” from its billions of users across Facebook and Instagram allows it to train “highly optimized models across a unique data set,” potentially leading to more personalized responses. Users linking Facebook and Instagram profiles can create a more personalized experience, drawing on their activity across Meta platforms.

Is Meta AI accessible and cost-effective? Meta AI’s basic functionalities are currently free to use, making it readily accessible to a broad audience. This contrasts with ChatGPT, which has a range of options depending on premium subscriptions. While future premium features may incur costs, the current free access lowers the barrier to entry for new users.

5. Copilot

Copilot is a ChatGPT alternative because both utilize the latest OpenAI models (currently GPT-4o and GPT-5.2), Copilot offers a free tier with image generation and GPT-4 access during off-peak hours, Microsoft has invested heavily in OpenAI and integrated Copilot across its 365 ecosystem, both are multimodal chatbots capable of generating text, images, and code, Copilot provides robust safety and data handling features for enterprise use, and Copilot is accessible across multiple platforms including Windows, macOS, and mobile apps.

How do underlying AI models contribute to Copilot being an alternative? Both Copilot and ChatGPT are built on the same foundational GPT models from OpenAI, including GPT-4 and the latest GPT-4o and GPT-5.2. Microsoft, a major investor in OpenAI, also claims to use proprietary models alongside OpenAI’s technology. This shared core ensures both tools offer comparable advanced AI capabilities in understanding and generating content.

Why is Copilot’s free tier a significant factor? Copilot’s free version provides access to GPT-4 during off-peak hours and includes limited boosting for image generation using DALL-E 3. While ChatGPT also offers a free tier, Copilot’s free plan “maybe has the edge” by giving more access to advanced features, making it a strong alternative for users seeking powerful AI without a subscription.

What role does Microsoft’s ecosystem integration play? Copilot is Microsoft’s AI tool, deeply integrated across the Microsoft 365 ecosystem, including Word, Excel, PowerPoint, Outlook, and Teams. This integration allows Copilot to summarize emails, draft replies, generate presentation slides, and analyze data within these applications. For businesses heavily invested in Microsoft 365, Copilot’s seamless workflow automation is a compelling alternative to a standalone chatbot.

How do multimodal capabilities make Copilot an alternative? Both Copilot and ChatGPT are multimodal chatbots, capable of understanding and generating text, images, and code. Copilot specifically offers DALL-E 3 integration for realistic AI images, image-based search for charts and diagrams, and code visualization. These broad capabilities allow Copilot to perform diverse tasks, from creating documents to analyzing code, similar to ChatGPT.

Why are safety and data handling important for Copilot as an alternative? Microsoft developed Copilot with user safety and security in mind. According to Seneca, prompts and generated responses are not visible to Microsoft personnel, are not saved in the system, and are not used for training the underlying GenAI models, and Seneca recommends Microsoft Copilot over ChatGPT for student and employee use because of these safety features.

How does accessibility contribute to Copilot’s status as an alternative? Copilot is widely accessible across desktop (Windows, macOS), web interfaces, and mobile apps (iOS, Android). It is included with Windows, integrated into the Edge browser sidebar, and available through Microsoft 365 apps. This broad availability ensures users can access Copilot’s AI assistance virtually anywhere, mirroring ChatGPT’s widespread reach.

6. Amazon Nova

Amazon Nova is a ChatGPT alternative because it offers a family of multimodal generative AI models handling text, images, and videos, provides a cost-effective solution with Nova Pro being 65.26% more cost-effective than GPT-4o, demonstrates superior efficiency and speed with Nova Pro operating 97% faster than GPT-4o, integrates seamlessly with AWS infrastructure for enterprise-grade security and scalability, includes specialized agents like Nova Act for browser-level automation, and supports over 200 languages with built-in safety features.

How does Nova’s multimodal capability position it as an alternative? Amazon Nova is the latest generative AI offering from AWS: a family of multimodal models that handle text, images, and video. Its newer family, Nova 2, rivals OpenAI’s GPT-5 and Google’s Gemini 3 Pro and combines text, image, audio, and video inputs in a single model, allowing it to analyze multiple data types together, such as summarizing a report or generating a slide deck from a PDF or YouTube clip.

Why is Nova considered a cost-effective alternative? Nova Pro is priced at $0.80 per million input tokens, making it 65.26% more cost-effective than GPT-4o. For an AI assistant service handling 10 million queries per month, Nova Pro could save an organization $5,674.88 per month ($68,098 per year) compared to GPT-4o. Nova Micro and Nova Lite were 73.10% and 56.59% cheaper than GPT-4o mini, respectively, offering significant savings for basic and everyday tasks.
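
Savings figures like these depend heavily on how many tokens each query consumes. A minimal cost model, assuming a fixed number of input and output tokens per query, multiplies monthly token volume by per-million-token prices. The Nova Pro prices below are the $0.80 input and $3.20 output figures quoted in this article; the 100-input/50-output token profile and the helper names are hypothetical, not the assumptions behind the savings quoted above, which the article does not state.

```python
from dataclasses import dataclass


@dataclass
class Pricing:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


def monthly_cost(queries: int, in_tokens: int, out_tokens: int, price: Pricing) -> float:
    """Estimated monthly spend in USD for a fixed per-query token profile."""
    input_millions = queries * in_tokens / 1_000_000
    output_millions = queries * out_tokens / 1_000_000
    return input_millions * price.input_per_million + output_millions * price.output_per_million


# Nova Pro prices as quoted in this article; the token profile below is hypothetical.
NOVA_PRO = Pricing(input_per_million=0.80, output_per_million=3.20)

if __name__ == "__main__":
    cost = monthly_cost(queries=10_000_000, in_tokens=100, out_tokens=50, price=NOVA_PRO)
    print(f"Nova Pro: ${cost:,.2f} per month, ${cost * 12:,.2f} per year")
```

Savings against another model come from running the same function with a second `Pricing` instance and subtracting. Annualizing is a multiplication by 12, which is how the $5,674.88 monthly figure becomes about $68,098 per year ($68,098.56).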

What makes Nova’s efficiency and speed a compelling alternative? Nova Pro was reported to operate 97% faster than GPT-4o, with an average latency of 1.68 seconds compared to GPT-4o’s 2.16 seconds. Nova Micro processes up to 210 tokens per second, surpassing some existing AI models. Nova Micro and Nova Lite demonstrated faster response times than GPT-4o mini, with 20.48% and 26.60% improvements, respectively. Nova 2 is reported to handle video analysis and OCR faster than any other model released in 2025.
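
The two latency averages can be checked with simple arithmetic. The sketch below computes both the relative latency reduction and the speedup ratio from the quoted 1.68 s and 2.16 s; these two averages alone imply roughly 22% lower latency (about 1.29 times as fast), so the headline 97% figure cannot be derived from them by themselves. The function names are illustrative.

```python
def latency_reduction(baseline_s: float, candidate_s: float) -> float:
    """Fractional reduction in average latency relative to the baseline."""
    return (baseline_s - candidate_s) / baseline_s


def speedup(baseline_s: float, candidate_s: float) -> float:
    """How many times faster the candidate is (1.0 means equal speed)."""
    return baseline_s / candidate_s


if __name__ == "__main__":
    gpt4o, nova_pro = 2.16, 1.68  # average latencies in seconds, as quoted above
    print(f"latency reduction: {latency_reduction(gpt4o, nova_pro):.1%}")
    print(f"speedup: {speedup(gpt4o, nova_pro):.2f}x")
```

Keeping the two measures separate matters because “X% faster” is sometimes used for either one, and they diverge quickly as the gap widens.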

How does Nova’s integration with AWS infrastructure provide an advantage? Nova integrates seamlessly with Amazon Web Services (AWS) tools like SageMaker, S3, and Lambda, enhancing scalability and operational efficiency. It is part of AWS infrastructure, enabling it to run on existing infrastructure with zero setup time and scale from one agent to a thousand instantly using the Amazon Bedrock API. All data stays within the AWS environment, ensuring enterprise-grade security and privacy.
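
Access through the Amazon Bedrock API can be sketched with the AWS SDK for Python (boto3) and Bedrock’s Converse API. This is a minimal example under stated assumptions, not AWS’s reference implementation: it assumes configured AWS credentials, that Nova Pro access is enabled in the chosen region, and that `amazon.nova-pro-v1:0` is the right model ID there (some regions or account setups require a cross-region inference-profile ID instead). The helper names, region default, and inference settings are hypothetical.

```python
# Assumes AWS credentials are configured and Nova Pro is enabled for the account/region.
MODEL_ID = "amazon.nova-pro-v1:0"  # some setups need an inference-profile ID, e.g. "us.amazon.nova-pro-v1:0"


def build_converse_request(prompt: str, max_tokens: int = 512) -> dict:
    """Keyword arguments for the bedrock-runtime Converse API (single user turn)."""
    return {
        "modelId": MODEL_ID,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": 0.3},
    }


def ask_nova(prompt: str, region: str = "us-east-1") -> str:
    """Send one prompt to Nova Pro on Amazon Bedrock and return the reply text."""
    import boto3  # imported lazily so the request builder works without boto3 installed

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_request(prompt))
    # The reply text sits in the first content block of the output message.
    return response["output"]["message"]["content"][0]["text"]


# Example (makes a real, billable API call):
# print(ask_nova("Summarize the benefits of serverless architectures in two sentences."))
```

Because Converse uses one message format across Bedrock models, switching between Nova Micro, Lite, and Pro is mostly a matter of changing the model ID.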

What specialized features and agents does Nova offer? Nova includes specialized agents like Nova Act, a browser-level agent built on Nova 2 that can browse the web, fill out forms, collect leads, and interact with online dashboards. AWS positions Amazon Nova Act, an agent framework, as a rival to OpenAI’s Operator and Anthropic’s Claude agents. Nova Forge, a no-code AI builder inside Bedrock, allows users to create custom Nova models trained on company documents and workflows, similar to ChatGPT’s “Custom GPTs” but fully integrated with AWS.

Why are Nova’s language support and safety features important? Amazon Nova AI supports over 200 languages, making it versatile for global applications. It includes built-in watermarking and moderation tools to ensure safe AI use, addressing critical concerns for enterprise adoption.

7. Amazon Nova Pro

Amazon Nova Pro is a ChatGPT alternative because it offers direct competitive performance against leading models like GPT-4o and Sonnet 3.5, provides significant cost advantages with up to 78% lower output token pricing, boasts advanced multimodal capabilities handling text, images, and video, integrates seamlessly with the AWS ecosystem for enhanced scalability, and supports long-context work (a 300,000-token context window for Nova Pro and one million tokens for the related Nova Premier model) for extensive data analysis.

How does Amazon Nova Pro offer direct competitive performance? Nova Pro performs very close to Anthropic’s Sonnet 3.5 and keeps pace with OpenAI’s GPT-4o, demonstrating capabilities similar to premium models. For specific tasks, Nova Pro is considered as good as 4o or Sonnet 3.5. Even the smallest Nova model, Micro, achieves exceptional results in instruction following and multi-step reasoning, suggesting that the larger Nova Pro is more capable still. Nova Pro achieved 26.3% on FrontierMath and a 78.4% average on MMMU Pro, directly positioning it against popular competitors like ChatGPT, Grok 2.0, and Gemini.

Why does Nova Pro provide significant cost advantages? Nova Pro is 73% cheaper than Anthropic’s Sonnet 3.5 on input tokens ($0.80 per 1M tokens) and 78% cheaper on output tokens ($3.20 per 1M tokens). Nova Pro is roughly a quarter of the cost compared to competitors with similar capabilities and about 70% cheaper than Sonnet 3.5. It is also 36% cheaper than Gemini 1.5 Pro, which is noted as the cheapest of the premium tier models.
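
The percentage discounts above follow from per-million-token list prices. The sketch below recomputes them, assuming list prices of $3.00 input / $15.00 output for Claude 3.5 Sonnet and $1.25 / $5.00 for Gemini 1.5 Pro (prompts up to 128K tokens). Those two price pairs are assumptions added here, not figures from this article, and should be checked against current pricing pages. Under them, Nova Pro comes out about 73.3% cheaper on input and 78.7% cheaper on output than Sonnet 3.5, and 36% cheaper than Gemini 1.5 Pro on both, consistent with the figures quoted.

```python
def percent_cheaper(candidate: float, reference: float) -> float:
    """How much cheaper `candidate` is than `reference`, as a percentage."""
    return (1 - candidate / reference) * 100


# USD per 1M tokens. Nova Pro figures are from this article; the other two
# price pairs are assumed list prices (verify against current pricing).
NOVA_PRO = {"input": 0.80, "output": 3.20}
REFERENCES = {
    "Claude 3.5 Sonnet": {"input": 3.00, "output": 15.00},
    "Gemini 1.5 Pro (<=128K prompts)": {"input": 1.25, "output": 5.00},
}

if __name__ == "__main__":
    for name, ref in REFERENCES.items():
        for kind in ("input", "output"):
            pct = percent_cheaper(NOVA_PRO[kind], ref[kind])
            print(f"vs {name}, {kind}: {pct:.1f}% cheaper")
```

Computing input and output discounts separately matters because output tokens usually cost several times more, so the blended saving for a real workload depends on its input-to-output ratio.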

What makes Nova Pro’s advanced multimodal capabilities a compelling alternative? Nova Pro is the flagship multimodal model with vision capability in the Amazon Nova family of generative AI models. Integrated with Amazon Bedrock, Nova Pro handles text, image, and video inputs, so it can analyze video directly alongside text and images. Nova offers broad versatility for tasks like creating product visuals, automating production line tasks, and analyzing production line videos to identify inefficiencies. Nova includes specialized tools like Nova Canvas for image editing and Nova Reel for video creation.

How does seamless integration with the AWS ecosystem enhance Nova Pro’s appeal? Nova Pro integrates seamlessly with Amazon Web Services (AWS) tools like SageMaker, enhancing scalability and operational efficiency. Built on Amazon’s cloud infrastructure, Nova Pro manages large-scale operations and high volumes of data. Because Nova’s effectiveness and integration depend on the AWS ecosystem, it is best suited to organizations that already deploy on AWS, where it provides a robust platform for enterprise deployment.

Why is a one-million token context window significant? Nova Pro itself supports a 300,000-token context window, while Amazon Nova Premier, the most capable model in the family and a teacher model for distilling custom versions of smaller Nova models such as Pro, supports one million tokens, which is suitable for analyzing massive documents or expansive code bases. Both exceed the 32k-token context window cited for ChatGPT Enterprise, allowing Nova models to handle significantly larger inputs for tasks like document analysis and parsing unstructured data. This capability is crucial for sophisticated tasks and complex reasoning.

8. Microsoft Phi

Microsoft Phi is a ChatGPT alternative because its performance rivals larger models like GPT-3.5 and GPT-4o-mini, it offers significant cost and efficiency advantages with up to an order of magnitude reduction in TCO, Microsoft strategically positions Phi to gain leverage against OpenAI and compete with Llama 3, it boasts broad availability across Azure, Hugging Face, and local execution platforms, the models are built on advanced technical specifications including multimodal capabilities and large context windows, and they are designed for diverse use cases from edge devices to enterprise applications.

How does Phi’s performance rival larger models? Phi-3’s overall performance rivals models such as Mixtral 8x7B and GPT-3.5, which then powered the free version of ChatGPT. Phi-3-mini’s quality of results is comparable to that of models 4x larger. Benchmarks suggest the larger Phi-3.5 mixture-of-experts model’s output will be of similar quality to OpenAI’s GPT-4o-mini (which later replaced GPT-3.5 in the free version of ChatGPT), and it seems to outperform GPT-4o-mini in STEM and social sciences. Phi-4 series SLMs now rival far larger LLMs on math, coding, and reasoning, with Phi-4-Reasoning outperforming models 50 times its size on Olympiad-grade math. Phi-4 also outperforms larger models like Google’s Gemini Pro and GPT-4o-mini on challenging benchmarks such as MATH and MGSM, scoring over 80%.

Why are Phi models more efficient and cost-effective? Phi models operate at a fraction of the cost of larger LLMs, reducing AI latency to milliseconds and cutting Total Cost of Ownership (TCO) by an order of magnitude. Phi-3’s smaller size makes it significantly cheaper than larger models and easier to deploy, with fewer approvals required. Phi-3-mini runs comfortably with less than 8GB of RAM, produces tokens at a reasonable speed even on a regular CPU, and works well on a $55 Raspberry Pi. Phi-4 requires significantly fewer computational resources, reducing costs and lowering energy consumption.
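
Why a small model like Phi-3-mini (3.8 billion parameters) fits in under 8GB follows from simple arithmetic: weight memory is roughly parameters × bits per parameter ÷ 8. The sketch below is a back-of-the-envelope estimate, not a measured figure; it counts weights only (the KV cache, activations, and runtime overhead come on top) and assumes the 16-, 8-, and 4-bit formats commonly used by local runtimes.

```python
def weight_memory_gb(params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (1 GB = 1e9 bytes).

    Ignores the KV cache, activations, and runtime overhead, which add more on top.
    """
    return params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    phi3_mini = 3.8e9  # Phi-3-mini parameter count
    for bits in (16, 8, 4):
        print(f"{bits}-bit: ~{weight_memory_gb(phi3_mini, bits):.1f} GB")
```

At 16 bits the weights alone take about 7.6 GB, just under 8 GB; quantizing to 4 bits cuts that to about 1.9 GB, which is what makes very small devices plausible hosts.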

What is Microsoft’s strategic positioning for Phi? Commentators see the release of Phi-3 as a way for Microsoft to gain additional leverage against OpenAI instead of handing all the keys to Sam Altman. One suggested that Microsoft wants these models as leverage in its dealings with OpenAI, paraphrasing the message as “renew the deal or we’ll cut you off of Azure and serve our own models,” followed by “good luck getting Google Cloud or Amazon AWS.” On this view, Microsoft is perfectly happy to have a bunch of OpenAI alternatives, and Phi-3 also serves as Microsoft’s answer to the Llama 3 threat.

How accessible are the Phi models? Phi-3 Mini is available in Azure AI Services alongside Mistral, Mixtral, Llama 3, and GPT-3.5/4, making it a viable option for production. Phi-3 is immediately available on Microsoft’s Azure, Hugging Face, and Ollama for local Mac/PC execution. Phi-3.5 comes in 3.8 billion, 4.15 billion, and 41.9 billion parameter versions, all of which are free to download and can be run using a local tool like Ollama. Phi-4 was released as a fully open-source project with downloadable weights on Hugging Face under a permissive MIT License, allowing commercial applications.
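
Running Phi locally through Ollama can be sketched with only Python’s standard library by calling Ollama’s local REST endpoint. This is a minimal sketch, assuming Ollama is installed and running on its default port (11434) and that the model has been pulled under the `phi3.5` tag; the tag and helper names are assumptions for illustration, so check the tag in your Ollama library listing.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_payload(prompt: str, model: str = "phi3.5") -> bytes:
    """JSON body for a single, non-streaming generate request."""
    return json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")


def generate(prompt: str, model: str = "phi3.5") -> str:
    """Send a prompt to a locally running Ollama server and return the completion."""
    request = urllib.request.Request(
        OLLAMA_URL,
        data=build_payload(prompt, model),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=120) as resp:
        # With streaming disabled, the full completion arrives in the "response" field.
        return json.loads(resp.read())["response"]


# Example (requires `ollama pull phi3.5` and a running Ollama server):
# print(generate("Explain mixture-of-experts models in one paragraph."))
```

Disabling streaming keeps the example short; an interactive app would usually stream and print tokens as they arrive.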

What are the technical specifications that enable Phi’s capabilities? Phi-3.5 comes in a vision model version that can understand images and text, and in a mixture-of-experts version for efficient processing. The mixture-of-experts model has a large 128K context window, matching ChatGPT’s. The smallest Phi-3.5 model (3.8 billion parameters) was trained on 3.4 trillion tokens of data using 512 Nvidia H100 GPUs over 10 days. Phi-4 is a 14-billion-parameter dense, decoder-only transformer model, trained on 9.8 trillion tokens of curated and synthetic datasets, including multilingual content (8%). Phi-1.5, a 1.3 billion parameter model, is now multimodal, capable of understanding images in its inputs, a capability previously associated with larger models like ChatGPT’s GPT-4V.

What are the diverse use cases for Phi models? Phi models run locally on CPUs, NPUs, and edge devices, including natively on consumer NPUs. Enterprises embrace SLMs like Phi for privacy, latency, and TCO. They are suitable for routine or domain-constrained work and can be embedded where privacy, bandwidth, or real-time interaction demand local processing. Phi-3.5’s small size allows it to be bundled with an application or installed on Internet of Things devices (e.g., a smart doorbell) for on-device processing like facial recognition without transferring data to the cloud. The Phi-X series of small models is ultimately aimed at running on edge devices.

9. Grok

Grok is a ChatGPT alternative because it offers real-time information access from X, provides uncensored and direct responses with fewer content restrictions, demonstrates strong coding and developer integration with high performance, excels in analytical capabilities and problem-solving, delivers faster performance and speed in its UI and responses, and features a distinct tone and personality that is more casual and unfiltered.

How does real-time information access contribute to Grok’s alternative status? Grok provides real-time access to information from X (formerly Twitter), which is a significant advantage for live news, current events, and trending topics. xAI’s announcement claims a “unique and fundamental advantage of Grok is that it has real-time knowledge of the world via the 𝕏 platform.” ChatGPT’s underlying models, in contrast, rely on static training data and need web search, plugins, or other external data sources for live information.

Why are uncensored and direct responses a key differentiator? Grok is perceived as “less censored” and “unfiltered,” providing “in depth blunt answers” and “cutting through the BS.” It operates with fewer constraints, engaging with taboo or controversial prompts and even generating explicit content that ChatGPT rejects. Grok’s default “Fun Mode” offers sarcastic, blunt, or joke-filled answers, treating users “like an adult” and not telling them “what you can or can’t ask of it.”

What makes Grok’s coding and developer integration noteworthy? Grok is described as “really good at coding” and as having “slightly better dev integration for coders.” Grok 4 is considered one of the best AI models for coding, scoring 74.9% on SWE-bench Verified compared with GPT-5.1’s 76.3%. According to Latenode, Grok also outperforms ChatGPT on STEM-related tasks and coding speed.

How do Grok’s analytical capabilities and problem-solving skills compare? Grok 3 mini is “quite impressive in the level of analysis it can give,” producing “useful and very accurate” information for psychological and sociological analysis. Users see Grok as “aggregating info to connect logical dots” more accurately and in more depth than other AI models. One user found that Grok “won hands down” at preparing professional report documents and analyzing a CSV file with 28,000 rows of data. On the Humanity’s Last Exam benchmark, Grok 4 scored 25.4%, beating Google’s Gemini 2.5 Pro at 21.6%, and a tool-enabled configuration reached 44.4%.

Why is Grok’s performance and speed a significant factor? Grok’s UI is “faster and smoother,” and users call it “way fast and responsive” compared with ChatGPT, which “became too slow with long chats.” According to Android Police, Grok’s responses are quick and confident and beat ChatGPT on response time. Grok 4.1 immediately shot to #1 on LMArena’s Text Arena leaderboard with an Elo rating of 1483, ahead of Claude Sonnet 4.5 (1445) by 38 points and GPT-5.1 (1437) by 46 points.
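To put those rating gaps in perspective, the standard Elo expected-score formula turns a rating difference into an expected head-to-head win rate. The sketch below rests on an assumption: that LMArena’s scores can be read on the conventional Elo scale (base 10, 400-point spread). LMArena fits its ratings statistically rather than updating them match by match, so treat the output as approximate.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Expected probability that A is preferred over B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# Leaderboard ratings quoted in the text
grok_41 = 1483
rivals = {"Claude Sonnet 4.5": 1445, "GPT-5.1": 1437}

for name, rating in rivals.items():
    p = expected_score(grok_41, rating)
    print(f"Grok 4.1 vs {name}: gap {grok_41 - rating}, expected win rate {p:.1%}")
```

On that reading, the quoted ratings imply Grok 4.1 would be preferred in roughly 55% of head-to-head votes against Claude Sonnet 4.5 and about 57% against GPT-5.1: a clear lead, though far from a sweep.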

What defines Grok’s distinct tone and personality? Grok has a “more casual” voice mode and sounds “the most human” in its use of language. It is noted for its “edgier” and “unhinged personality,” providing “short answers without the Here why that works and you’re superior to Steve Jobs bullshit from ChatGPT or claude’s you’re culturally appropriating topics that will offend some niche group of liberals and therefore I cannot assist crap.” Grok “just gets it done like a real assistant without over-explaining, judging or imposing its moral framework.”

10. ChatOn

ChatOn is a ChatGPT alternative because it combines text and image generation on a single platform, provides real-time internet search, syncs seamlessly between web and mobile devices, includes dedicated document management features, and offers a free-forever option alongside budget-friendly premium plans starting at $6.99/week.

How does ChatOn’s image generation capability position it as an alternative? ChatOn AI generates both text and images within a single platform, eliminating the need for external integrations like DALL-E. This integrated approach offers a significant advantage over ChatGPT for content creators, streamlining workflows by 15-20% according to user feedback.

Why are real-time internet search capabilities important for an AI assistant? ChatOn AI is equipped with real-time internet search for up-to-date information retrieval. This is a key differentiator from ChatGPT, where real-time search was initially limited to specific versions (Plus, GPT-4 Turbo), making ChatOn more accessible for current information needs.

What makes web-mobile sync a valuable feature for users? ChatOn AI provides seamless access across various devices, including mobile, computer, tablet, and laptop, while maintaining user subscriptions and chat histories. This multi-device accessibility ensures a consistent user experience, with 90% of users reporting improved productivity due to uninterrupted workflow.

How do ChatOn’s document management features enhance its utility? ChatOn AI includes dedicated features for document-related natural language processing tasks. These include CV building, email generation, content summarization, language translation, and insight extraction. This “Document Master” functionality offers a distinct advantage over ChatGPT for users focused on document-intensive tasks, reducing manual effort by up to 40%.

Why is ChatOn’s pricing model a compelling alternative? ChatOn AI offers a free forever option, making it accessible to a broad user base. Additionally, its premium plans, such as ChatOn Premium Weekly at $6.99/week and ChatOn Premium Weekly PRO at $7.99/week, are positioned as a “budget-friendly writing tool” compared to ChatGPT, which Reddit users have noted as being more expensive.

11. Deepseek

DeepSeek is a ChatGPT alternative because it offers significant cost advantages with free access and lower API rates (DeepSeek V3 API input at $0.07/million tokens vs. OpenAI GPT-4.1 at $2/million tokens), demonstrates superior performance in coding and technical tasks (often providing workable solutions “straight up”), excels in complex reasoning and problem-solving with “deeper and better written” responses, provides enhanced content generation and communication with “10 times more in depth” answers, leverages advanced technological achievements like its Mixture-of-Experts (MoE) model, and offers extensive customization and fine-tuning capabilities due to its open-source nature.

How does DeepSeek offer significant cost advantages? DeepSeek is free to use, while ChatGPT requires a paid plan for advanced features (e.g., ChatGPT Plus with GPT-4 at $20/month). DeepSeek’s V3 API costs $0.07 for input and $1.10 for output per million tokens, substantially less than OpenAI’s GPT-4.1 API at $2 for input and $8 for output per million tokens. DeepSeek is also described as “10 to 20 times cheaper to run” and “less expensive AND better for the environment” because it uses less energy and fewer chips. Its R1 model reportedly cost $12 million to train, significantly less than ChatGPT’s estimated $100 million development cost.
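A quick way to see what those per-million-token rates mean for a real workload is to price the input and output sides separately. This is a minimal sketch using the list prices quoted above; the 50M-input/10M-output monthly workload is a hypothetical example, and actual rates vary with caching and change over time.

```python
# Per-million-token API prices quoted in the text (USD)
PRICES = {
    "DeepSeek V3": {"input": 0.07, "output": 1.10},
    "GPT-4.1": {"input": 2.00, "output": 8.00},
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for a month's input and output tokens."""
    price = PRICES[model]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


# Hypothetical workload: 50M input tokens and 10M output tokens per month
workload = (50_000_000, 10_000_000)
for model in PRICES:
    print(f"{model}: ${monthly_cost(model, *workload):,.2f}/month")
```

For this mix the script prints $14.50 versus $180.00 a month, about 12 times cheaper, in line with the “10 to 20 times cheaper” estimate. Output-heavy workloads narrow the gap, because the output price ratio (about 7x) is much smaller than the input ratio.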

Why does DeepSeek demonstrate superior performance in coding and technical tasks? DeepSeek is frequently cited as superior for code generation and debugging, often providing workable solutions “straight up” or in a way that “one shots it.” For R or Python prompts, DeepSeek R1 gives exactly what is needed, whereas ChatGPT (o1) may miss dependencies. One user found DeepSeek 10x better for PySpark code generation, and it consistently gets calculus questions right, whereas ChatGPT “almost always fails” at mathematical calculations. DeepSeek fixed buggy code in one pass and produced a working Python script for interactive 3D point movement on the first try, while ChatGPT took 3-4 iterations.

What makes DeepSeek excel in complex reasoning and problem-solving? DeepSeek provides “deeper and better written” responses and is well suited to “complex reasoning tasks,” including PhD research. It is better at logical thinking and working out theories: in complex itinerary planning it consistently respected the user’s restrictions and preferences, whereas ChatGPT forgot them by the third or fourth prompt. On an online nursing exam, DeepSeek answered 80-100% of questions correctly, compared with ChatGPT’s 40-50%. DeepSeek R1 displays an “internal monologue” showing its thought process, producing answers that mirror human reasoning; for one user it produced an article outline “remarkably similar to how I would approach the topic,” including “very relevant points like ethical considerations for bias, fairness, and transparency” that ChatGPT “entirely skipped.”

How does DeepSeek provide enhanced content generation and communication? DeepSeek is better for creative writing and research on niche topics, hallucinating less and respecting user knowledge more than ChatGPT. Its answers are “10 times more in depth and intellectually stimulating” and provide more information. DeepSeek can generate text undetectable by AI detectors with a formal tone, which ChatGPT struggled with. DeepSeek offers “much better non-censored conversation” on politics compared to ChatGPT, which refuses to discuss certain topics. DeepSeek is also described as “way funnier” and capable of “playful banter.”

What are DeepSeek’s advanced technological achievements? DeepSeek shocked the AI scene in early 2025 with significant technological achievements, with its models essentially as good as OpenAI’s by 2026. DeepSeek uses a Mixture-of-Experts (MoE) architecture that activates only 37B of its 671B parameters for each token, which makes it more economical to run. Rather than using every parameter for every token, as a dense model does, it routes each token to a small subset of specialized expert networks, achieving comparable results more cheaply and with far less processing power. DeepSeek-V3 is comparable to GPT-4o for quick text responses, and DeepSeek V3.2 in thinking mode performs comparably to GPT-5.2 with medium-effort thinking.
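The routing idea behind a Mixture-of-Experts layer can be shown in a few lines: a small router scores every expert for each token, but only the top-k experts actually run, and their outputs are blended using gate weights renormalized over the chosen experts. The toy below is a hypothetical illustration in plain Python, not DeepSeek’s code; the real model uses far more experts, shared experts, and load-balancing refinements that are omitted here.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


class ToyMoELayer:
    """Toy Mixture-of-Experts layer with top-k routing (illustrative only)."""

    def __init__(self, num_experts: int, top_k: int, dim: int, seed: int = 0):
        rng = random.Random(seed)
        self.num_experts = num_experts
        self.top_k = top_k
        # Router: one randomly initialized score vector per expert
        self.router = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(num_experts)]
        # Each toy "expert" just scales each input dimension by its own factors
        self.experts = [[rng.uniform(0.5, 1.5) for _ in range(dim)] for _ in range(num_experts)]

    def forward(self, token):
        # 1. Score every expert (cheap: one dot product each)
        logits = [sum(w * x for w, x in zip(weights, token)) for weights in self.router]
        # 2. Keep only the k highest-scoring experts
        chosen = sorted(range(self.num_experts), key=lambda i: logits[i], reverse=True)[: self.top_k]
        # 3. Renormalize the gate weights over the chosen experts
        gates = softmax([logits[i] for i in chosen])
        # 4. Run only the chosen experts and blend their outputs
        out = [0.0] * len(token)
        for gate, i in zip(gates, chosen):
            expert_out = [s * x for s, x in zip(self.experts[i], token)]
            out = [o + gate * e for o, e in zip(out, expert_out)]
        return out, chosen


layer = ToyMoELayer(num_experts=16, top_k=2, dim=4)
output, active = layer.forward([0.3, -1.2, 0.8, 0.5])
print(f"active experts: {sorted(active)} ({len(active)} of {layer.num_experts})")
print(f"DeepSeek-V3 active share: {37 / 671:.1%}")
```

Only 2 of the 16 toy experts do any work for this token. DeepSeek-V3’s ratio follows the same principle: 37B active out of 671B total parameters is about 5.5% of the model per token.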

Why does DeepSeek offer extensive customization and fine-tuning capabilities? DeepSeek’s models are open-source, so anyone can download, run, and customize them locally, avoiding API costs and privacy concerns; this is “simply not possible with ChatGPT.” DeepSeek is more customizable than ChatGPT, offering full control over fine-tuning. It tends to perform better on specialized datasets because it was designed with domain-specific fine-tuning in mind, making it suitable for niche applications that demand high accuracy (e.g., finance). Its open-source model also gives more control over AI-generated content: it is completely customizable, allows data to be hosted on private servers, permits changes to the underlying training methodology, and imposes no API or third-party limits.

12. Kimi

Kimi is a ChatGPT alternative because it offers significantly lower costs with a 96.5% reduction compared to GPT-4, provides an expanded context window of 262K tokens, features advanced Agent Swarm Technology managing up to 100 sub-agents, demonstrates superior document processing and real-time web search capabilities, matches or exceeds frontier models on reasoning and coding benchmarks, and is an open-source, open-weight model with native Chinese language support.

How does Kimi’s cost-effectiveness make it a viable alternative? Kimi K2.5 is 50 times cheaper for input tokens ($0.60/M tokens vs. $30/M tokens) and 24 times cheaper for output tokens ($2.50/M tokens vs. $60/M tokens) than GPT-4. For 1 million input plus 1 million output tokens, Kimi K2.5 costs $3.10 versus GPT-4’s $90, a 96.5% cost reduction. A startup processing 100 million input and 100 million output tokens per month would therefore save $104,280 annually using Kimi K2.5 ($310/month) instead of the GPT-4 API ($9,000/month). Kimi K2 also costs 10x less than other frontier models and is free to use with no usage limits.
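The pricing arithmetic above is easy to check. The sketch below assumes, as the $310 and $9,000 monthly figures imply, that “100 million tokens” means 100 million input tokens plus 100 million output tokens; the prices are the per-million-token rates quoted in this section.

```python
# Per-million-token prices quoted in this section (USD)
KIMI_K25 = {"input": 0.60, "output": 2.50}
GPT_4 = {"input": 30.00, "output": 60.00}


def cost_per_million_each(prices: dict) -> float:
    """Cost of 1M input tokens plus 1M output tokens."""
    return prices["input"] + prices["output"]


kimi = cost_per_million_each(KIMI_K25)   # 3.10
gpt4 = cost_per_million_each(GPT_4)      # 90.00
reduction = 1 - kimi / gpt4

# Assumption: 100M input + 100M output tokens per month
millions_per_month = 100
annual_savings = (gpt4 - kimi) * millions_per_month * 12

print(f"input price ratio:  {GPT_4['input'] / KIMI_K25['input']:.0f}x")
print(f"output price ratio: {GPT_4['output'] / KIMI_K25['output']:.0f}x")
print(f"cost reduction:     {reduction:.2%}")
print(f"monthly: ${kimi * millions_per_month:,.0f} vs ${gpt4 * millions_per_month:,.0f}")
print(f"annual savings:     ${annual_savings:,.0f}")
```

It prints input and output price ratios of 50x and 24x, a cost reduction of 96.56% (which the text rounds to 96.5%), monthly costs of $310 versus $9,000, and annual savings of $104,280.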

Why is Kimi’s expanded context window a key differentiator? Kimi K2.5 offers a 262K-token context window, approximately 200,000 words or 400 pages, about twice ChatGPT’s 128K tokens (96,000 words or 192 pages). Kimi.ai is also reported to accept up to 200,000 characters in a single query, far more than ChatGPT’s 32,000 characters per prompt, enabling more comprehensive analysis of long documents and conversations.
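The word and page figures follow from two rules of thumb that the text uses implicitly: roughly 0.75 English words per token and about 500 words per page. Both ratios are assumptions that vary with language and formatting (Chinese text, for example, tokenizes very differently), so the sketch below is only a back-of-the-envelope converter.

```python
WORDS_PER_TOKEN = 0.75  # rule of thumb for English text (assumption)
WORDS_PER_PAGE = 500    # page size implied by the figures in the text (assumption)


def context_capacity(tokens: int) -> tuple[int, int]:
    """Approximate (words, pages) that fit in a context window of `tokens`."""
    words = int(tokens * WORDS_PER_TOKEN)
    pages = words // WORDS_PER_PAGE
    return words, pages


for name, window in [("Kimi K2.5", 262_000), ("ChatGPT", 128_000)]:
    words, pages = context_capacity(window)
    print(f"{name}: {window:,} tokens ~ {words:,} words ~ {pages} pages")
```

It reproduces the 96,000 words and 192 pages quoted for ChatGPT and gives about 196,500 words and 393 pages for Kimi K2.5, which the text rounds to 200,000 words and 400 pages.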

What makes Kimi’s Agent Swarm Technology a powerful alternative? Kimi K2.5 features Agent Swarm Technology that can manage up to 100 sub-agents simultaneously, in contrast to ChatGPT’s limited tools and single agent. Kimi K2 is optimized for agentic tasks, designed to interact with tools, learn from external feedback, and adapt behavior, capable of running 200-300 tool calls in one session.
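Coordinating many sub-agents is, at its core, a bounded fan-out: an orchestrator splits the goal into subtasks, runs them concurrently up to a cap, and gathers the results. The sketch below shows that generic pattern with Python’s asyncio; it is not Moonshot’s implementation, and `sub_agent` is a hypothetical stand-in for a model call with tools.

```python
import asyncio

MAX_SUB_AGENTS = 100  # concurrency cap, mirroring the "up to 100 sub-agents" figure
stats = {"running": 0, "peak": 0}  # tracks how many sub-agents run at once


async def sub_agent(task_id: int, goal: str, subtask: str, limiter: asyncio.Semaphore) -> str:
    """Hypothetical sub-agent; a real one would call a model and its tools."""
    async with limiter:
        stats["running"] += 1
        stats["peak"] = max(stats["peak"], stats["running"])
        await asyncio.sleep(0.01)  # stand-in for model and tool latency
        stats["running"] -= 1
        return f"[{task_id}] {goal}: {subtask} done"


async def orchestrate(goal: str, subtasks: list[str]) -> list[str]:
    limiter = asyncio.Semaphore(MAX_SUB_AGENTS)  # extra subtasks wait for a free slot
    jobs = [sub_agent(i, goal, s, limiter) for i, s in enumerate(subtasks)]
    return await asyncio.gather(*jobs)  # results come back in subtask order


subtasks = [f"research vendor {n}" for n in range(250)]
results = asyncio.run(orchestrate("compare vendors", subtasks))
print(len(results), "results; peak concurrency:", stats["peak"])
print(results[0])
```

With 250 subtasks, no more than 100 run at the same time; the rest queue on the semaphore until a slot frees up.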

How do Kimi’s document processing and search capabilities compare? In one user’s test with a 60-page legal agreement PDF, Kimi K2 “destroyed ChatGPT” and outperformed Gemini. Kimi K2 is also considered “the best especially with respect to search and comparisons for financial scenarios,” with real-time web search that can draw on over 1,000 websites.

In what ways does Kimi’s performance match or exceed other models? Kimi K2.5 matches or exceeds GPT-4 on math (96.2% vs 95%), coding, and reasoning. Kimi K2 achieves 53.7% accuracy on LiveCodeBench v6 (coding) compared to GPT-4.1’s 44.7% and demonstrates 97.4% accuracy on MATH-500. It uses Interleaved Reasoning (Plan → Act → Verify → Reflect → Refine) to catch and correct failures step-by-step.
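The Plan → Act → Verify → Reflect → Refine cycle can be pictured as a retry loop in which each failed verification leaves a note that shapes the next plan. The sketch below is a generic illustration with hypothetical toy functions, not Kimi’s internal mechanism, which runs inside the model’s own reasoning and tool calls.

```python
from typing import Any, Callable, Optional


def interleaved_reasoning(
    plan: Callable[[str, list[str]], Any],
    act: Callable[[Any], Any],
    verify: Callable[[Any], Optional[str]],
    task: str,
    max_rounds: int = 5,
) -> tuple[Any, list[str]]:
    """Plan -> Act -> Verify -> Reflect -> Refine, repeated until verification passes."""
    notes: list[str] = []  # reflections carried from one round to the next
    for round_no in range(1, max_rounds + 1):
        step = plan(task, notes)   # Plan (and Refine: the plan sees earlier notes)
        result = act(step)         # Act
        error = verify(result)     # Verify: None means the result passed
        if error is None:
            return result, notes
        notes.append(f"round {round_no}: {error}")  # Reflect on what went wrong
    raise RuntimeError(f"no verified result after {max_rounds} rounds: {notes}")


# Toy task: add up the integers 1..10
def toy_plan(task: str, notes: list[str]) -> int:
    # The first plan has an off-by-one bug; any reflection triggers the fix
    return 11 if notes else 10


def toy_act(upper: int) -> int:
    return sum(range(1, upper))


def toy_verify(result: int) -> Optional[str]:
    return None if result == 55 else f"expected 55, got {result}"


answer, log = interleaved_reasoning(toy_plan, toy_act, toy_verify, "sum 1..10")
print(answer, log)
```

The first round produces 45, verification catches the off-by-one error, and the refined plan returns the correct 55 on the second round.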

Why is Kimi’s open-source nature and language support significant? Kimi K2.5 operates under a “Modified MIT” license, making it open-source, unlike ChatGPT which is proprietary. Kimi K2 is also an open-weight AI model, meaning its trained parameters are publicly available for download, fine-tuning, and deployment on private infrastructure. Furthermore, Kimi K2.5 offers “Native” and “Excellent (10/10)” Chinese language capabilities, outperforming ChatGPT’s “Good (7/10).”

13. Pi

Pi is a ChatGPT alternative because its core identity focuses on personal assistance and emotional support, it offers superior conversational quality with a “human-like” interaction, it excels in specific use cases like emotional support and everyday interactions, it provides a refreshing interactive experience with real-time data, it is free and easily accessible, and it is backed by significant development and funding from Inflection AI.

How does Pi’s core identity position it as an alternative? Pi introduces itself as “your personal AI” and stands for ‘personal intelligence’, aiming to be a “kind and supportive companion assistant.” Its stated goals are to be “useful, friendly, and fun,” offering advice, answers, or general conversation, unlike ChatGPT, which focuses on understanding and generating text for a wide range of applications like stories, articles, and code.

Why is Pi’s conversational quality considered superior? Pi is consistently described as a superior conversationalist, “fun, naturally creative,” making users “feel like I’m talking to a real person” or “a friend companion.” Users perceive Pi as having a higher “Emotional Quotient” and being “more ‘human’ in its interaction” compared to ChatGPT, which is likened to talking to a “Scientist, or a University Professor.” Pi talks at a much quicker pace, seems to understand the user better, and offers a slightly more emotional response.

What specific use cases highlight Pi’s strengths? Pi leads in the arena of conversational AI for emotional support, serving as “one to talk to when we are down.” It is described as being “a little more responsive, a little more intimate, and a little more conversational,” and can help improve users’ empathy and emotional intelligence skills. Pi is useful for “everyday interactions” and can provide recommendations, such as for enhancing badminton skills.

How does Pi’s interactive experience and data differentiate it? Pi provides “refreshing back-and-forth communication,” remembers everything discussed, and offers insightful feedback. It helps users learn to ask new and relevant questions, makes decisions together with them, and asks excellent follow-up questions, always seeking the user’s opinion. Pi is “beyond awesome at asking questions,” which is valuable for designers interested in research and human communication. Its UI/UX is “much better than ChatGPT,” and it responds more quickly. Pi draws on real-time data sources and “seems to be updated very recently,” so users can ask about recent events, unlike the version of ChatGPT available at the time, whose knowledge cutoff was September 2021.

Why is Pi’s accessibility a key factor? Pi is free and accessible at heypi.com. Unlike ChatGPT, it does not initially require an account; users are asked to create one only after a “certain number of interactions.” According to one article, Pi was still unavailable in Spain a couple of weeks before publication but became available there during the week the article was published.

Who is behind Pi’s development and funding? Pi was created by Inflection AI, which at the time was a one-year-old startup that had recently raised $1.3 billion in funding. Inflection AI is backed by tech giants such as Microsoft and Nvidia and by billionaires including Reid Hoffman and Bill Gates. It was founded in 2022 by LinkedIn co-founder Reid Hoffman and Google DeepMind co-founder Mustafa Suleyman, and its chatbot technology was developed in-house with a focus on “human-like conversations with high emotional intelligence.”

14. Llama

Llama is a ChatGPT alternative because its open-source nature allows for extensive customization and control over data, it offers significantly higher computational efficiency and lower costs, it provides enhanced privacy and data sovereignty for local deployments, it fosters a vibrant open-source community for continuous innovation, it supports specialized use cases like coding and technical text generation, and its cost structure is more predictable with no licensing fees.

How does Llama’s open-source nature enable customization and control? Llama is an open-source language model developed by Meta AI, available for both research and commercial use. This allows developers to customize the model for specific activities or domains, fine-tune it on private data, and run it locally for total data control. Users can modify the model architecture or combine it with other models, which is not possible with ChatGPT’s core model.

Why is Llama more computationally efficient and cost-effective? Llama is 30 times cheaper than GPT-4 and more computationally efficient, making it suitable for applications with limited hardware. It needs fewer resources than many other models and is noted to outperform ChatGPT in efficiency, in part because it has far fewer parameters than the 1.7 trillion or more reportedly behind ChatGPT’s GPT-4. In practice, this efficient design makes Llama more lightweight to deploy than GPT-4.

What makes Llama a better choice for privacy and data sovereignty? A Llama-based application built and run on your own hardware offers complete privacy. Organizations can deploy it in a private cloud or on-premises, keeping all data in-house and retaining full sovereignty over their AI, including data and responses. This contrasts with ChatGPT, where data is processed on OpenAI’s servers even with strong privacy commitments for business users.

How does Llama’s open-source community contribute to its viability? Llama benefits from a vibrant open-source community that offers pre-trained models and research articles. As of late 2024, over 65,000 Llama-derived models are circulating in the community. Tools like Hugging Face’s Transformers library provide high-level APIs for Llama, and methods like LoRA allow efficient fine-tuning.
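LoRA’s efficiency comes from freezing the original weight matrix W and training only two thin matrices B and A whose product is added back, scaled by alpha / r. The plain-Python sketch below illustrates that math and the resulting parameter savings; libraries such as Hugging Face PEFT implement the real thing, and the 4096 x 4096 layer size is just an illustrative example.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def lora_merge(W, B, A, alpha: float, r: int):
    """Effective weight W + (alpha / r) * (B @ A); W itself is never updated."""
    scale = alpha / r
    delta = matmul(B, A)  # d x k update built from d x r and r x k factors
    return [[w + scale * dv for w, dv in zip(w_row, d_row)] for w_row, d_row in zip(W, delta)]


def lora_trainable_params(d: int, k: int, r: int) -> int:
    """B has d*r entries and A has r*k entries."""
    return r * (d + k)


# Tiny example: a frozen 3x3 identity weight plus a rank-1 update.
# B and A hold values as if after some training (real LoRA starts B at zero).
W = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
B = [[1.0], [0.0], [2.0]]   # d x r, with r = 1
A = [[0.5, 0.5, 0.0]]       # r x k
merged = lora_merge(W, B, A, alpha=2, r=1)
print(merged)

# Parameter savings for one 4096 x 4096 projection at rank 8
d = k = 4096
full = d * k
lora = lora_trainable_params(d, k, r=8)
print(f"full fine-tune: {full:,} params; LoRA r=8: {lora:,} ({lora / full:.2%})")
```

At rank 8, LoRA trains 65,536 parameters for that layer instead of 16,777,216 (about 0.39%), which is why fine-tuning fits on modest hardware.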

For which specialized use cases does Llama excel? Llama 3.1 405B outperforms GPT-4 on coding and programming tasks, providing more accurate responses and corrected output. Llama is also highly effective for tasks requiring precision, such as generating concise, business-focused summaries and producing highly technical text. It scores 82% on the 5-shot MMLU (Massive Multitask Language Understanding) benchmark.

Why is Llama’s cost structure more predictable? Llama models are free to download and use, with costs associated primarily with computing power and infrastructure (GPU servers). For high volumes, hosting an open model like Llama can be over 19 times more cost-effective than proprietary models via API providers. The marginal cost of additional usage for Llama is very low once infrastructure is set up, offering more predictable, mostly fixed costs.
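The fixed-versus-marginal cost argument boils down to a break-even volume: self-hosting wins once monthly usage is large enough that API charges exceed the fixed infrastructure bill. Every dollar figure in the sketch below is a hypothetical placeholder, not a quoted price, and the model ignores capacity limits (a fixed GPU fleet can only serve so many tokens).

```python
def api_cost(tokens_millions: float, price_per_million: float) -> float:
    """Pure pay-per-use cost of an API provider."""
    return tokens_millions * price_per_million


def self_host_cost(tokens_millions: float, fixed_monthly: float, marginal_per_million: float) -> float:
    """Mostly fixed infrastructure cost plus a small marginal cost per token."""
    return fixed_monthly + tokens_millions * marginal_per_million


def break_even(fixed_monthly: float, marginal_per_million: float, api_price_per_million: float) -> float:
    """Monthly volume (millions of tokens) above which self-hosting is cheaper."""
    if api_price_per_million <= marginal_per_million:
        return float("inf")  # the API is never pricier per token, so no break-even
    return fixed_monthly / (api_price_per_million - marginal_per_million)


# Hypothetical placeholder figures, for illustration only
FIXED = 2_000.0     # USD per month for GPU servers
MARGINAL = 0.50     # USD per million tokens (power, maintenance)
API_PRICE = 10.00   # USD per million tokens from an API provider

threshold = break_even(FIXED, MARGINAL, API_PRICE)
print(f"break-even: ~{threshold:,.0f}M tokens/month")
for volume in (50, 500, 5_000):
    print(f"{volume:>5}M tokens: API ${api_cost(volume, API_PRICE):,.0f}"
          f" vs self-hosted ${self_host_cost(volume, FIXED, MARGINAL):,.0f}")
```

With these placeholder numbers, break-even is about 211 million tokens a month: below it the API is cheaper, and above it the mostly fixed self-hosting cost pulls ahead.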

15. Nexos AI

Nexos AI is a ChatGPT alternative because it provides a unified layer over more than 200 AI models, including ChatGPT; offers an AI Workspace where teams can chat with multiple LLMs in one interface; enables effortless switching between models in the same window; and was identified as a ChatGPT alternative by user younesfaid on 10/21/2025.

How does providing a unified layer for over 200 AI models make Nexos AI an alternative? Nexos AI offers access to a vast array of AI models, including ChatGPT, the Claude model, the DeepSeek model, and Gemini, all from a single platform. This centralized access allows users to leverage diverse AI capabilities without managing individual subscriptions or APIs, effectively serving as a comprehensive hub for AI interactions.

Why is an AI Workspace for teams significant? The AI Workspace allows teams to engage with multiple large language models (LLMs) through a unified interface. This functionality streamlines collaboration and experimentation, enabling users to interact with various AI tools, including ChatGPT, within a single, managed environment. This contrasts with using individual LLM platforms separately.

What is the significance of this user endorsement? User younesfaid explicitly named Nexos AI as a ChatGPT alternative on 10/21/2025 at 5:12:51 PM. This highlights how some individuals see Nexos AI as a direct substitute for, or a comparable option to, ChatGPT for their AI interaction needs.

How does effortless model switching enhance Nexos AI’s role as an alternative? Nexos AI’s interface allows users to effortlessly switch between different AI models within the same chat window. This capability provides flexibility and efficiency, enabling users to leverage the strengths of various LLMs for different tasks without leaving the platform, offering a dynamic alternative to being locked into a single model like ChatGPT.
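Switching models “within the same chat window” essentially means keeping one shared transcript and letting whichever model the user picks answer the next turn with the full history. This is a minimal sketch of that idea; `MultiModelChat` and the stub models are hypothetical and do not reflect Nexos AI’s actual API.

```python
from typing import Callable

Message = dict[str, str]
Model = Callable[[list[Message]], str]  # takes the transcript, returns a reply


class MultiModelChat:
    """Hypothetical chat window: one shared transcript, any registered model answers."""

    def __init__(self, models: dict[str, Model]) -> None:
        self.models = models
        self.history: list[Message] = []

    def ask(self, model_name: str, prompt: str) -> str:
        self.history.append({"role": "user", "content": prompt})
        reply = self.models[model_name](self.history)  # model sees the whole transcript
        self.history.append({"role": "assistant", "content": reply, "model": model_name})
        return reply


def stub_model(name: str) -> Model:
    """Stand-in for a real model API call."""
    return lambda history: f"{name} saw {len(history)} message(s)"


chat = MultiModelChat({"gpt": stub_model("gpt"), "claude": stub_model("claude")})
print(chat.ask("gpt", "Draft a summary of the Q3 report"))  # gpt saw 1 message(s)
print(chat.ask("claude", "Now make it shorter"))            # claude saw 3 message(s)
```

The second model sees all three messages so far, including the first model’s reply, so switching does not restart the conversation.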

16. Sintra AI

Sintra AI is a ChatGPT alternative because it offers specialized capabilities in specific domains, provides a personalized approach with customized solutions, boasts superior accuracy with 26,749 additional data points, delivers faster response times averaging 190ms, offers proactive AI assistance through “helpers,” and supports more languages with over 150 options.

How do Sintra AI’s specialized capabilities differentiate it? Sintra AI excels in tasks requiring deep knowledge within narrow fields, such as medical diagnostics or legal analysis, providing nuanced and precise responses. This contrasts with ChatGPT’s general-purpose design, which engages across a wide array of topics without being confined to one niche. Because it focuses on specific domains, Sintra AI integrates seamlessly into existing business processes, whereas ChatGPT offers more general recommendations.

Why is Sintra AI’s personalized approach significant? Sintra AI offers customized solutions and a suite of AI assistants tailored to specific company operations, including data analysis, SEO optimization, and sales automation. This personalized approach, exemplified by Vizzy, an Executive AI Assistant managing over 10,000 tasks simultaneously, allows Sintra AI to learn and adapt alongside a company, offering proactive solutions across essential business sectors like customer care automation and email marketing.

What makes Sintra AI’s accuracy superior? Sintra AI is reported to be three times more accurate than ChatGPT, incorporating 26,749 additional data points to enhance results. This addresses ChatGPT’s tendency to give misleading and erroneous information, implying Sintra AI provides more reliable information.

How do faster response times improve the user experience? Sintra AI boasts a 190ms average response time, significantly faster than ChatGPT, which can slow down under heavy server traffic. Sintra AI is also framed as more dynamic and current than ChatGPT, which such comparisons describe as one that “can’t access the internet” and “essentially just repeats its replies.”

Why are proactive AI helpers a key alternative feature? Sintra AI’s “helpers” proactively suggest business tasks, such as improving web copy or creating content calendars, by asking simple questions and reviewing websites. This eliminates the need for direct user prompts, making AI “fun and easy for overwhelmed business owners” and requiring no prompt engineering. This contrasts with ChatGPT, which requires significant effort in structuring processes and defining requests.

How does language support enhance Sintra AI’s appeal? Sintra AI supports 150+ languages, compared to ChatGPT’s 80+. This broader language capability makes Sintra AI accessible to a wider global audience, enhancing its utility for diverse business operations.

17. Poe

Poe is a ChatGPT alternative because it offers unified access to multiple cutting-edge AI models for a single subscription fee (around $19.99/month), allows users to create and monetize custom bots with flexible server-side interactions, provides an API with context management that avoids “stonewalling” issues reported in standalone Claude Pro, supports multi-bot collaboration mixing different models and modalities (e.g., Claude for prompts, Flux for images), and is accessible across multiple platforms including iOS, Android, MacOS, and web.

How does unified access contribute to Poe being an alternative? Poe aggregates various AI models like GPT (including GPT-4o-mini), Claude (e.g., Claude 4 Sonnet), Gemini (2.5 Pro and Flash), and Llama 3.1/3.2, providing a single point of access. This allows users to chat with and directly compare responses from different models without switching sites or apps, a feature not natively available in standalone ChatGPT. A single subscription fee, similar to ChatGPT Plus, covers “EVERYTHING that comes out.”

Why is custom bot creation significant? Poe enables users to create and save custom bots by writing their own system prompts, tailoring how the underlying AI models behave. These bots are shareable, even from free Poe accounts. Compared with OpenAI’s custom GPTs, Poe offers a better experience for server-based applications, allowing dynamic response updates and more flexible timeout mechanisms that move beyond the “one-question-one-answer” interaction. Users can also monetize their custom bots.

What makes Poe’s API and context handling a strong alternative? Poe offers an API that uses a “compute point system” for requests, varying by model. Users report not being “stonewalled using Claude in Poe,” which can happen with standalone Claude Pro after just two messages with long code. Poe also allows users to clear context within the UI to manage the context window, enabling longer conversations.
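A compute-point system works like a prepaid balance in which each request is charged according to the model it uses. The sketch below is a hypothetical illustration; the model names, point costs, and monthly allowance are placeholders, not Poe’s real prices, and it refuses a request rather than overdrawing the balance.

```python
# Hypothetical point costs per message; Poe's real costs differ by model and length
POINT_COSTS = {"small-model": 10, "large-model": 300, "image-model": 1_500}


class PointBudget:
    """Prepaid balance of compute points, charged per request."""

    def __init__(self, monthly_points: int) -> None:
        self.remaining = monthly_points

    def charge(self, model: str) -> bool:
        """Deduct the model's cost if affordable; refuse instead of overdrawing."""
        cost = POINT_COSTS[model]
        if cost > self.remaining:
            return False
        self.remaining -= cost
        return True


budget = PointBudget(monthly_points=2_000)
for model in ["large-model", "large-model", "image-model", "small-model"]:
    sent = budget.charge(model)
    status = "sent" if sent else "refused (insufficient points)"
    print(f"{model}: {status}, {budget.remaining:,} points left")
```

Both large-model requests go through, the expensive image request is refused because only 1,400 points remain, and the small-model request still fits, leaving 1,390 points.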

How does multi-bot collaboration enhance Poe’s appeal? Poe supports collaboration between different AI models and modalities. For example, a user can have Claude generate a prompt, then Flux generate an image based on that prompt, and finally Pika create a video. This integrated workflow for diverse tasks, from text to image and video generation, offers a broader creative toolkit than a single-model platform.

Why is cross-platform accessibility important for an alternative? Poe is available on iOS, Android, MacOS, and web, ensuring users can access the platform from virtually any device. This broad accessibility allows for seamless transitions between devices, catering to a wide range of user preferences and work environments, similar to or exceeding the availability of other leading AI platforms.

18. i10x AI

i10X AI is a ChatGPT alternative because it consolidates multiple top AI models into one platform (including GPT-4o mini, GPT 5, and Claude 3.5 Haiku), offers a comprehensive suite of 500+ tools beyond basic chat, provides significant cost savings of up to 90% compared to separate subscriptions, features a unique single memory across all AI agents (shipped 9/30/2025), and includes robust image and video generation capabilities.

How does consolidating multiple AI models make i10X an alternative? i10X integrates access to leading AI models such as GPT (ChatGPT-4o mini, ChatGPT 5, o3, o4, 4.1, 4.0), Claude (3.5 Haiku, 4/3.7/3.5), Gemini (2.5), Grok (3 Mini, 4/3/2/Vision), and Perplexity under a single subscription and login. This eliminates the need for separate accounts, addressing the user pain point of having “separate subscriptions” for various AI services.

Why is a comprehensive suite of 500+ tools significant? i10X extends beyond basic chat functionality by offering an extensive library of over 500 ready-made AI agents and tools. These cover diverse workflows including marketing and SEO (50+ tools for plans, keywords, social posts), business tools (contract generation, business plans), and PDF & document management (ChatPDF, translation, summarization). This broad functionality positions i10X as an “all-in-one AI solution” rather than just a chat tool.

How does i10X provide significant cost savings? i10X claims users can “Save 90% on AI Costs” by replacing “costly subscriptions” like ChatGPT, Perplexity, and Midjourney. The platform offers predictable costs with fixed plans and credits, avoiding per-token surprises. For example, the Basic Plan costs $10/month for 5,000 basic model uses, while the Unlimited Plan is $25/month for unlimited access to all models and 7,500 i10X Credits. An additional 15% saving is available using the code “DDT15.”

What is the impact of a single memory across all AI agents? A key feature, shipped on 9/30/2025, allows all models within i10X to share a single memory, remembering user preferences across different agents. This means “switching models doesn’t mean starting from scratch,” a significant improvement over traditional platforms where context is lost. DavidK777 was “sold” on i10X due to this conversation memory feature.
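A shared memory can be modeled as a single store of user preferences that is injected into every agent’s prompt, so a newly selected agent starts with the same context as the last one. Everything in the sketch below (the `SharedMemory` class, the stub agents, the stored preferences) is hypothetical and not i10X’s implementation.

```python
from typing import Callable

Agent = Callable[[str, str], str]  # (system_prompt, user_prompt) -> reply


class SharedMemory:
    """One preference store shared by every agent."""

    def __init__(self) -> None:
        self.facts: dict[str, str] = {}

    def remember(self, key: str, value: str) -> None:
        self.facts[key] = value

    def as_system_prompt(self) -> str:
        lines = [f"- {key}: {value}" for key, value in sorted(self.facts.items())]
        return "Known user preferences:\n" + "\n".join(lines)


def run(agent: Agent, memory: SharedMemory, prompt: str) -> str:
    # Every agent gets the same memory, so switching agents loses nothing
    return agent(memory.as_system_prompt(), prompt)


# Stub agents standing in for different underlying models
def writer(system: str, user: str) -> str:
    return f"[writer]\n{system}\nTask: {user}"


def coder(system: str, user: str) -> str:
    return f"[coder]\n{system}\nTask: {user}"


memory = SharedMemory()
memory.remember("tone", "concise")
memory.remember("language", "British English")

print(run(writer, memory, "Draft a product blurb"))
print(run(coder, memory, "Write the changelog"))
```

Because the preferences live outside any single agent, the coder starts with the same tone and language settings the writer used.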

How do image and video generation capabilities enhance i10X as an alternative? i10X integrates advanced image and video generation tools, supporting models like Flux, GPT-Image-1, DALL·E, Kling, Google Veo, and MiniMax. It also includes features for background removal, ad creation, watermarking, filters, and upscaling. This consolidates the functionality of services like Midjourney, making i10X a comprehensive creative solution.

19. Writingmate.ai

Writingmate.ai is a ChatGPT alternative because it integrates over 200 popular and niche AI models, offers comprehensive file-centric workflows for PDFs and documents, provides text and image generation in a single workspace, addresses ChatGPT’s frequent downtime and UX limitations, enables side-by-side comparison of multiple LLM outputs, and offers a single subscription with “much less limits” compared to individual chatbot subscriptions.

How does the integration of over 200 AI models make Writingmate.ai an alternative? Writingmate.ai provides access to advanced models like GPT-4o (full version), Claude 3.7 Sonnet, Llama 3.2, Gemini, and Mistral Large, unlike ChatGPT’s free version, which limits access to GPT-4o and other OpenAI models. A paid ChatGPT Plus subscription ($20/month) only grants access to certain OpenAI models, requiring additional subscriptions for other LLMs. Writingmate.ai also includes a Web Search plugin, allowing AI models to directly access the internet for literature reviews and fact-checking.

Why are comprehensive file-centric workflows significant? Writingmate.ai supports direct interaction with PDFs, Docs, Slides, and Sheets, enabling extraction of key points, quotes with page references, tables from PDFs, and conversion of text to structured data (JSON/CSV). While ChatGPT Plus allows file uploads, multi-file projects often require users to manually copy results into other applications, making Writingmate.ai more efficient for complex, file-heavy tasks.

What makes combined text and image generation a strong alternative? Writingmate.ai offers both text generation and image creation using models like Stable Diffusion, DALL-E, and Flux.ai in one place. ChatGPT’s free version has limitations when adding images or documents, positioning Writingmate.ai as a more versatile “all-in-one AI tool” for both text and images.

How does Writingmate.ai address ChatGPT’s operational limitations? Writingmate.ai aims to solve issues like ChatGPT being “almost always down” and having a “far from ideal” UX in a separate tab. Its Chrome browser extension is accessible on “every browser tab with one click” via a crystal ball icon or Cmd/Ctrl+M. The ability to switch between models in one click ensures continuity of work even if a preferred model is temporarily unavailable.

Why is the ability to compare multiple LLM outputs important? Writingmate.ai allows users to run the same prompt through multiple models (e.g., for emails, legal summaries, bulk tasks) and select the best result without needing multiple subscriptions. This contrasts with ChatGPT Plus, which locks users into the OpenAI universe and makes it difficult to compare answers from different LLMs side-by-side, such as Claude or Gemini.
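Running one prompt through several models and keeping the best answer is a simple fan-out followed by a ranking step. In the sketch below the three models are stubs and the scoring rule (keyword coverage first, then brevity) is an arbitrary assumption; in practice the user, or another model, would judge the outputs.

```python
from typing import Any, Callable


def compare(prompt: str, models: dict[str, Callable[[str], str]],
            score: Callable[[str], Any]) -> list[tuple[str, str]]:
    """Run the same prompt through every model and rank outputs, best first."""
    outputs = {name: model(prompt) for name, model in models.items()}
    return sorted(outputs.items(), key=lambda item: score(item[1]), reverse=True)


def keyword_then_brevity(prompt: str) -> Callable[[str], tuple[int, int]]:
    """Hypothetical scoring rule: more prompt keywords first, then shorter text."""
    keywords = prompt.lower().split()

    def score(text: str) -> tuple[int, int]:
        hits = sum(word in text.lower() for word in keywords)
        return (hits, -len(text))

    return score


# Stub models standing in for real API calls
models = {
    "model-a": lambda p: f"Summary: {p}",
    "model-b": lambda p: f"Here is a detailed summary of the request: {p}",
    "model-c": lambda p: "I can't help with that.",
}

prompt = "quarterly sales email"
ranked = compare(prompt, models, keyword_then_brevity(prompt))
for name, text in ranked:
    print(f"{name}: {text}")
```

Here model-a ranks first: it covers every keyword, like model-b, but does so more briefly, while model-c covers none.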

How does the subscription model offer a compelling alternative? Writingmate.ai offers a single subscription (Pro starts at $16.60/month billed annually; Ultimate costs $200/month for heavy users) that includes access to Perplexity AI and over 200 other AI models, with “much less limits” than individual chatbot subscriptions. Many of Writingmate.ai’s models and features are also available for free, and the company has committed to “always keep the free version.”

20. Saner.AI

Saner.AI is a ChatGPT alternative because it offers an all-in-one productivity workspace, provides specialized ADHD-friendly workflows, integrates multiple advanced AI models, delivers proactive task and planning assistance, and offers a more targeted ROI for specific user needs.

How does Saner.AI function as an all-in-one productivity workspace? Saner.AI centralizes notes, tasks, emails, and calendars in a single searchable hub, reducing context switching and letting users manage work through natural chat. It combines notes, calendar, and tasks seamlessly, whereas ChatGPT requires setup or manual copy-and-paste to fit into a workflow. Saner.AI also integrates with Google Drive and Slack; Google Docs sync became available by September 26, 2024, and a Smart Universal Inbox preview by November 19, 2024.

Why is Saner.AI’s specialized ADHD-friendly workflow significant? Saner.AI is custom-built by and for ADHD-prone minds, offering a distraction-free workspace that adapts to user habits. It automatically breaks down large projects, turns brain dumps into actionable tasks, and learns the user’s notes and context to give relevant answers. This contrasts with ChatGPT, a generic AI tool whose effective use requires exploration and prompting skill.

What makes Saner.AI’s integration of multiple advanced AI models a key differentiator? As of March 9, 2024, Saner.AI integrates ChatGPT alongside GPT-4, Gemini Pro, and Claude 3 within its chat interface, letting users work with several AI models and internet browsing on one screen. Its “Skai – AI Knowledge Assistant” finds information, summarizes, answers questions, and surfaces insights. As of July 1, 2024, “Advanced Skai’s Retrieval and Reasoning” learns from notes milliseconds after they are saved and refreshes every 5 minutes.

How does Saner.AI provide proactive task and planning assistance? Saner.AI’s AI automatically scans information every morning to create an optimal day plan, pulls tasks directly from emails or meeting notes, and provides automatic follow-through reminders. It is designed to understand priorities and deadlines, delivering high ROI by surfacing the right task at the right time. Proactive planning and a Focus Box are slated for December 3, 2025.
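
Ordering tasks by deadline and priority is one simple way to build a day plan. This Python sketch is a hypothetical illustration, not Saner.AI’s algorithm: the `Task` fields, the 1-is-highest priority scale, and the fixed daily `capacity` are all assumptions.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class Task:
    title: str
    due: date
    priority: int  # hypothetical scale: 1 is the highest priority


def plan_day(tasks: List[Task], capacity: int = 3) -> List[str]:
    """Pick up to `capacity` tasks: earliest deadline first, ties broken by priority."""
    ranked = sorted(tasks, key=lambda task: (task.due, task.priority))
    return [task.title for task in ranked[:capacity]]


# Hypothetical backlog for illustration.
backlog = [
    Task("Draft report", date(2025, 1, 10), 2),
    Task("Reply to client email", date(2025, 1, 9), 3),
    Task("Call supplier", date(2025, 1, 10), 1),
    Task("Prepare slides", date(2025, 2, 1), 1),
]

if __name__ == "__main__":
    for title in plan_day(backlog):
        print("-", title)
```

A real assistant would also weigh effort, meetings, and context pulled from emails; the sort key is the single place where such rules would plug in.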

Why does Saner.AI offer a more targeted ROI for specific user needs? Saner.AI knows the user’s niche from day one, is pre-built to slot into existing tools, and is optimized for specific task types. According to Saner.AI’s internal testing, as of December 3, 2025, Skai is 60% smarter with 80% fewer errors. While ChatGPT offers broad utility, Saner.AI’s focused design helps users follow through, reduces decision fatigue, and converts ideas into tasks automatically.

21. Zapier Agents

Zapier Agents is a ChatGPT alternative because users create custom AI agents by describing desired actions in plain language, the agents proactively monitor for triggers and take automatic action across more than 8,000 other apps, they function as “mini-teammates” handling specific tasks such as data analysis or drafting customer responses, and users can train them by demonstrating desired behaviors without coding.

How does plain language customization make Zapier Agents an alternative? Zapier Agents allows users to create custom AI agents by describing desired actions in plain language, similar to how users interact with ChatGPT. This capability is highlighted as the “best ChatGPT alternative for creating an AI agent” in “The 9 best ChatGPT alternatives in 2025 – Zapier.” This approach enables non-technical users to build sophisticated automation without requiring programming expertise.

Why is proactive automation across 8,000+ apps a key differentiator? Unlike chat-based agents, Zapier Agents proactively monitor for triggers and take automatic action across more than 8,000 other apps. This extensive integration allows agents to perform specific actions in response to triggers from various applications, such as summarizing new lead data from Facebook Lead Ads and notifying a sales team. This broad automation capability extends beyond conversational AI.
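
The trigger-and-action loop described above can be sketched in Python as a polling pass that acts only on leads it has not seen before. The `Lead` fields and the `fetch` and `notify` callables are hypothetical; real Facebook Lead Ads payloads and Zapier’s internals differ, and the summary string stands in for an AI summarization step.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Set


@dataclass(frozen=True)
class Lead:
    # Hypothetical fields; real lead-form payloads differ.
    lead_id: str
    name: str
    company: str
    budget: int


def summarize(lead: Lead) -> str:
    """Stand-in for an AI summarization step: one line per lead."""
    return f"New lead: {lead.name} at {lead.company}, budget ${lead.budget:,}"


def poll_once(
    fetch: Callable[[], Iterable[Lead]],
    seen: Set[str],
    notify: Callable[[str], None],
) -> int:
    """One monitoring pass: act on leads not seen before and return how many were new."""
    new_count = 0
    for lead in fetch():
        if lead.lead_id in seen:
            continue  # already handled on an earlier pass
        seen.add(lead.lead_id)
        notify(summarize(lead))  # the automatic action, e.g. a message to the sales team
        new_count += 1
    return new_count


if __name__ == "__main__":
    inbox = [Lead("L1", "Ada", "Acme", 12000)]
    handled: Set[str] = set()
    poll_once(lambda: inbox, handled, print)
    poll_once(lambda: inbox, handled, print)  # second pass finds nothing new
```

Tracking handled IDs is what keeps a repeatedly running monitor from notifying the team twice about the same lead.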

What makes Zapier Agents function as “mini-teammates”? Zapier Agents act as “mini-teammates” that handle specific tasks such as data analysis, web searching, or drafting customer responses. This specialization lets businesses create agents for different functions, such as lead qualification for Sales or ticket routing for Support, and for jobs like streamlining inboxes, creating content, managing projects, tracking expenses, and managing schedules. This task-oriented approach offers a functional alternative to general-purpose AI.

How does no-code training contribute to its alternative status? Users can train agents by demonstrating desired behaviors without coding, which makes creating and refining AI agents accessible to a wider audience. Although some customization and setup is still required, users do not need technical expertise to use powerful AI automation. A free plan is available alongside a premium plan at $50/month, which keeps the tool widely accessible.

22. Zapier Chatbots

Zapier Chatbots is a ChatGPT alternative because it is an automation-first tool designed for easy integration with over 8,000 business applications, it allows website embedding for 24/7 customer and lead support, it facilitates automated follow-up actions like lead qualification and personalized outreach, it enables users to build AI-powered workflows (Zaps) in seconds using natural language, and it offers extensive customization for chatbot personality and branding.

How does Zapier Chatbots’ automation-first approach differentiate it? Zapier Chatbots is built as an “automation-first” tool, integrating AI capabilities directly into workflows and apps. This contrasts with ChatGPT’s primary function as a conversational AI. Zapier AI connects with over 7,000 apps, including Gmail, Slack, and HubSpot, allowing for comprehensive productivity beyond conversational interaction.

Why is website embedding a key differentiator? While ChatGPT’s GPT builder offers custom chatbot creation, Zapier Chatbots can be embedded directly on a user’s website. This enables the creation of low-cost customer support channels that answer FAQs 24/7 and lead management channels that gather prospect information around the clock.

What makes automated follow-up actions a significant alternative? Zapier Chatbots facilitates automated follow-up actions, such as collecting and qualifying leads, then automating personalized follow-ups. This multi-step automation capability is not inherently offered by ChatGPT, which primarily focuses on conversational interaction.

How do AI-powered workflows (Zaps) provide an alternative? Zapier AI allows users to build AI-powered workflows (Zaps) in seconds using natural language commands or a few clicks. These workflows enable multi-step automations across thousands of apps, making Zapier AI a comprehensive productivity tool that goes beyond ChatGPT’s conversational focus.

Why is extensive customization important for an alternative? Zapier Chatbots offers robust customization options. Users can train chatbots on existing business information by scraping web pages, uploading documents, or connecting Zapier Tables. Users can also edit the AI prompt to define the chatbot’s personality, update colors and logos to match branding, and add logic to gather information during conversations for follow-up. The Pro plan for chatbots limits users to 10 custom knowledge sources per chatbot, with the Advanced plan accommodating 18 CSV files.

23. Jan

Jan is a ChatGPT alternative because it offers local-first, privacy-focused operation ensuring data stays on the user’s machine, provides extensive model flexibility supporting 9 major online LLMs and local models, grants full user control and customization with connectors and custom assistants, operates as an open-source, cross-platform solution with over 4 million downloads, and demonstrates diverse AI capabilities in tasks like code generation and creative writing.

How does a local-first, privacy-focused operation make Jan an alternative? With local models, Jan runs entirely offline on the desktop, keeping conversations private without “phoning home.” It runs a local server on the user’s computer that acts as an API endpoint, and it stores everything locally. Because it works 100% offline and needs no internet connection after installation, Jan directly addresses the privacy concerns users may have with cloud-based AI services like ChatGPT.
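
Because Jan exposes a local server as an API endpoint, a script can talk to it much like a cloud chat API. The sketch below assumes the local API server is enabled at `http://localhost:1337/v1` with an OpenAI-style `/chat/completions` route; verify the address in Jan’s settings, and treat the model id and optional API key as placeholders. It builds the request without sending it, so it runs even when Jan is not open.

```python
import json
import urllib.request
from typing import Optional

# Assumption: Jan's local API server is enabled at this address; check Jan's settings.
JAN_BASE_URL = "http://localhost:1337/v1"


def build_chat_request(
    prompt: str,
    model: str,
    base_url: str = JAN_BASE_URL,
    api_key: Optional[str] = None,
) -> urllib.request.Request:
    """Build (without sending) an OpenAI-style chat completion request."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    headers = {"Content-Type": "application/json"}
    if api_key:  # only if the local server has been configured to require a key
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 120.0) -> str:
    """Send the request to the local server and return the first reply's text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Placeholder model id; replace it with a model you have loaded in Jan.
    request = build_chat_request("Explain GDPR in one sentence.", model="your-model-id")
    print(request.get_method(), request.full_url)
    print(request.data.decode("utf-8"))
```

With Jan’s server running and a model loaded, `send(build_chat_request("Hello", model="<your model id>"))` should return the reply text, and the request never leaves the machine.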

Why is extensive model flexibility significant? Jan allows users to choose from open models or plug in their favorite online models, supporting direct integration with ChatGPT (OpenAI), Claude (Anthropic), Gemini (Google), Llama (Meta), and Mistral (Mistral AI), among others. It is also compatible with Hugging Face, GPT-4, and local models, powered by llama.cpp (via “Nitro”), a fast local AI engine. This broad compatibility offers users more choice than a single proprietary model.

What makes full user control and customization a key differentiator? Jan’s tagline, “All the tools you need to make Jan yours,” highlights its focus on user empowerment. Users can select from a variety of AI models, add custom assistants and prompts, and integrate with platforms like Gmail, Amazon, and Google Drive using “Connectors.” Upcoming “Memory” functionality will remember user context and preferences, further tailoring the experience.

How does its open-source, cross-platform nature contribute to its appeal? Jan is open source under the Apache 2.0 license and runs on Mac (including M1 chips), Windows, and Linux. It has more than 4 million downloads and over 7,500 GitHub stars, indicating strong community adoption and transparent development. This accessibility and community-driven approach contrast with proprietary alternatives.

What diverse AI capabilities does Jan demonstrate? Jan can perform a wide range of tasks, including generating creative writing, summarizing text, answering questions, and responding to coding queries. For example, it successfully generated Python Flask web app code to convert Fahrenheit to Celsius in approximately 15 seconds using the jan-nano-128K model, and the accompanying HTML file was tested and confirmed functional.
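
The source does not reproduce the code Jan generated, so as a hedged illustration of what such an app does, here is a stdlib-only Python sketch. It swaps Flask for a plain WSGI app (`wsgiref`) so it runs without third-party packages; the `?f=` query parameter and the plain-text response format are assumptions.

```python
from typing import Callable, Iterable, List, Tuple
from urllib.parse import parse_qs
from wsgiref.simple_server import make_server
from wsgiref.util import setup_testing_defaults


def fahrenheit_to_celsius(fahrenheit: float) -> float:
    """Convert degrees Fahrenheit to degrees Celsius."""
    return (fahrenheit - 32) * 5 / 9


def app(environ: dict, start_response: Callable) -> Iterable[bytes]:
    """WSGI app: GET /?f=212 returns the Celsius equivalent as plain text."""
    headers = [("Content-Type", "text/plain; charset=utf-8")]
    params = parse_qs(environ.get("QUERY_STRING", ""))
    try:
        fahrenheit = float(params["f"][0])
    except (KeyError, ValueError):
        start_response("400 Bad Request", headers)
        return [b"Pass a numeric ?f= value, e.g. /?f=98.6\n"]
    celsius = fahrenheit_to_celsius(fahrenheit)
    start_response("200 OK", headers)
    return [f"{fahrenheit:g} F = {celsius:.2f} C\n".encode("utf-8")]


def call(query: str) -> Tuple[str, str]:
    """Invoke the app in-process (no network) and return (status, body)."""
    environ: dict = {}
    setup_testing_defaults(environ)
    environ["QUERY_STRING"] = query
    statuses: List[str] = []
    body = b"".join(app(environ, lambda status, hdrs: statuses.append(status)))
    return statuses[0], body.decode("utf-8")


def serve(port: int = 8000) -> None:
    """Serve the app on localhost until interrupted with Ctrl+C."""
    with make_server("127.0.0.1", port, app) as server:
        server.serve_forever()


if __name__ == "__main__":
    print(call("f=212"))
```

Calling `serve()` starts a local server; visiting `http://127.0.0.1:8000/?f=212` should then return `212 F = 100.00 C`.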

24. Mistral.ai / Mistral

Mistral AI is a ChatGPT alternative because it offers comparable performance and quality, with some users reporting “better quality of responses”; it provides cost-effective options, including a free tier and a cheaper Pro plan; it emphasizes data sovereignty and transparency in line with EU standards; it takes a flexible, open approach with downloadable models; it offers robust features, including an API-first design; and it has secured significant financial backing and strategic partnerships, reaching a €11.7 billion valuation by September 2025.

How does Mistral AI’s performance compare to ChatGPT? Mistral Large is described as achieving “GPT-4 quality like” performance, and professional users who run the pro versions side by side daily often find “hardly any difference between the quality of their responses.” Mistral is also described as “a lot faster” than ChatGPT and Gemini, sometimes answering in under one second with its Flash Answers feature. In coding, Mistral scores well on HumanEval (92.1%) and MultiPL-E (81.4%); in math, it reaches 91% accuracy on Math500 Instruct.

Why is Mistral AI considered a cost-effective alternative? Mistral AI offers a free version of Le Chat with generous daily limits, and Le Chat Pro costs $15/month, less than ChatGPT Plus. Its open-weight models are free to run on user hardware, so the only cost is computing power (e.g., a GPU server at $500 to $2,000 per month). API pricing is also competitive, at roughly $0.25 to $6 per million tokens, which promises a lower cost per use than heavy proprietary models.
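
The trade-off between API pricing and self-hosting comes down to simple arithmetic. The Python sketch below uses the figures quoted above and assumes a single flat price per million tokens (real APIs typically price input and output tokens differently) and a fixed monthly server cost that ignores staffing and utilization.

```python
def api_cost(tokens: int, usd_per_million: float) -> float:
    """Monthly API spend at a single flat price per million tokens (a simplification)."""
    return tokens / 1_000_000 * usd_per_million


def break_even_tokens(server_usd_per_month: float, usd_per_million: float) -> float:
    """Monthly token volume at which a fixed-cost GPU server costs the same as the API."""
    return server_usd_per_month / usd_per_million * 1_000_000


if __name__ == "__main__":
    # Ranges quoted in the text: API ~$0.25 to $6 per million tokens, GPU server $500 to $2,000/month.
    for price in (0.25, 6.0):
        for server in (500.0, 2000.0):
            tokens = break_even_tokens(server, price)
            print(f"${server:,.0f}/mo server vs ${price}/M tokens: break-even at {tokens:,.0f} tokens")
```

With these ranges, a $500/month server matches the $6-per-million API price at about 83 million tokens a month, while a $2,000/month server only matches the $0.25-per-million price at 8 billion tokens, which suggests self-hosting pays off mainly at high volume or when data must stay in-house.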

What makes Mistral AI’s focus on data sovereignty and transparency appealing? As an “up-and-coming European player” and an “EU-based company,” Mistral AI builds its services to comply with GDPR. Its ambition is to offer “frontier” AI that is “respectful of data sovereignty,” letting users control their data and host models on their own servers. This keeps sensitive information inside a company’s perimeter, as Stellantis demonstrated by 2025 when it integrated Mistral AI models directly into its internal infrastructure to avoid transferring data outside Europe.

How does Mistral AI’s flexible and open approach differentiate it? Mistral AI offers a mixed approach with both open-weight and proprietary models, with some open models (e.g., Mistral Small, Pixtral) downloadable and runnable on user hardware. These open-source (or open-weight) models, distributed under the Apache 2.0 license, encourage adoption, experimentation, and customization, allowing organizations to download, deploy, and modify them for compliance or fine-tuning.

What robust features and capabilities does Mistral AI offer? Mistral AI includes web search, canvas, and a vision model (Pixtral) that is “almost as good as Gemini.” It supports local deployment, full control, and an API-first design philosophy, making it well-suited for programmatic access. By July 2025, Le Chat was updated with “deep research” mode and advanced image editing, and by September 2025, it included a “Memories” feature. Mistral also offers a suite of foundational LLMs, including Mistral Large 2 and Pixtral Large, and introduced Mistral OCR in March 2025 and Mistral Code in June 2025.

Why are Mistral AI’s financial backing and partnerships significant? Mistral AI secured a record $113 million seed round in June 2023, valuing the startup at $260 million. It had raised approximately €1 billion by February 2025, and by September 2025 it was valued at €11.7 billion following a Series C round led by ASML. It has partnerships with Microsoft (including a €15 million investment), Agence France-Presse (AFP), France’s army and job agency, Luxembourg, CMA, Helsing, IBM, Orange, and Stellantis. Mistral is also launching Mistral Compute, a European AI platform powered by Nvidia processors, in 2026.

25. NLP Cloud