AI content detectors are systems that analyze text patterns, predictability, and linguistic structure to estimate whether artificial intelligence generated the content. Also called AI checkers, AI detectors, or AI scanners, they evaluate writing based on statistical signals instead of source matching. AI content detectors matter because they create transparency, enforce academic integrity, and support content authenticity across education, publishing, and business workflows.

AI content detectors differ from plagiarism checkers because AI content detectors analyze how text is written, while plagiarism checkers analyze where text comes from. AI content detectors focus on predictability, structure, and semantic patterns, while plagiarism checkers match text against existing sources. This distinction defines how AI detectors work, since AI content detectors rely on Natural Language Processing, machine learning, and statistical modeling instead of database comparison.

AI content detectors work through 4 core techniques that measure predictability, variation, classification, and semantic meaning. Perplexity measures how predictable text is, while burstiness measures variation in sentence structure. AI classifiers process tokenized text through trained models to assign probabilities, and context and embeddings map meaning into a vector space for deeper analysis. These techniques combine to form probabilistic outputs that estimate AI generation likelihood.

AI content detectors require structured usage because best practices and limitations define their reliability. Effective use includes cross-checking tools, recognizing AI writing patterns, evaluating context and intent, and integrating detection into broader originality checks. Limitations include false positives, easy evasion, bias, rapid AI advancement, context constraints, and lack of definitive evidence. AI content detectors remain useful as indicators, but not as proof, which defines their role in modern content verification systems.

What Are AI Detectors?

AI detectors are programs that analyze text patterns, language structures, and metadata to estimate whether artificial intelligence generated the content. AI detectors identify predictable wording, repeated structures, and machine-generated signals to separate AI-written text from human-written text. AI detectors belong to the broader category of digital authenticity tools.

Why did AI detectors emerge? AI detectors emerged after OpenAI released GPT-2 in February 2019, as AI text generation became more advanced. The main purpose was content authenticity verification, plagiarism control, and misinformation reduction. GPTZero popularized early detection methods through perplexity and burstiness analysis.

How do AI detectors differ from plagiarism checkers? AI detectors examine how the text was written, while plagiarism checkers examine where the text came from. AI detectors focus on predictability, structure, and writing patterns. Plagiarism checkers focus on source matching against existing content. The International Center for Academic Integrity recommended combining both tools in 2024 for stronger content verification.

There are 4 main detection mechanisms in AI detectors. The mechanisms are listed below.

- Perplexity measures word predictability. Raw perplexity has no fixed upper bound, so a 0 to 100 figure is a score that a tool has normalized for display. Lower perplexity often signals AI text because AI text follows more predictable word sequences.

- Burstiness measures sentence variation, which some tools also report on a 0 to 100 scale. Higher burstiness often signals human writing because human writing shifts more between short and long sentences.

- Metadata traces identify hidden markers from some AI systems. Metadata traces provide a direct signal, but not every model inserts them.

- Natural Language Processing analyzes context, syntax, and semantic coherence. Natural Language Processing examines whether the language pattern matches common AI-generated output.

There are 3 main attributes of AI detectors. The attributes are listed below.

- AI detectors produce probabilistic results, not proof. Scribbr reported up to 84% accuracy for premium tools and 68% for top free tools, while GPTZero reported 99% accuracy for AI versus human samples and 96.5% for mixed documents.

- AI detectors lose reliability as AI models evolve. Strong results in 2023 do not guarantee strong results in 2026 because newer models produce more human-sounding text.

- AI detectors create false positives. Formal writing, second-language English writing, and neurodivergent writing patterns face a higher risk of incorrect AI flags.

Who uses AI detectors, and why do they matter? Educators use AI detectors to review academic integrity. Content moderation teams use AI detectors to review authenticity and misinformation risks. Businesses use AI detectors for hiring, policy compliance, and document review. The market for AI detectors continues to grow as AI-generated content becomes more common. Future systems are moving toward multimedia detection for text, images, and video.

Why Are AI Detectors Important?

AI detectors are important because they create transparency, deter cheating, assess learning, combat misconduct, uphold academic standards, and maintain content authenticity. AI detectors establish visibility into content creation patterns and enforce accountability across education and professional environments. AI detectors influence how institutions evaluate originality, integrity, and authorship.

There are 6 main reasons AI detectors matter. The reasons are listed below.

- AI detectors create transparency in student AI use. AI detectors track writing activity, revisions, and AI-generated inputs to reveal how content was produced. This visibility allows educators to define AI usage rules and evaluate submissions with consistent standards.

- AI detectors deter cheating by introducing risk. AI detectors increase uncertainty for unauthorized AI usage, which reduces the likelihood of submitting AI-generated work as original. This risk exposure reinforces academic integrity policies.

- AI detectors assess whether learning occurs. AI detectors identify patterns that indicate independent thinking versus automated generation. This distinction ensures that grades reflect actual knowledge and cognitive ability.

- AI detectors combat academic misconduct. AI detectors identify contract cheating, collusion, essay mill usage, and AI paraphrasing patterns. This detection process exposes unauthorized external contributions in submitted work.

- AI detectors uphold academic standards. AI detectors identify AI-generated text and allow pre-submission checks for originality. This process reduces manual review time and enforces consistent evaluation criteria.

- AI detectors maintain content authenticity across industries. AI detectors identify AI-generated material in journalism, marketing, and publishing to reduce misinformation and automated content risks. Search engines evaluate originality signals, and ranking losses occur when content lacks uniqueness, as shown by a GoCardless article that dropped 5 positions after a March 2024 update.

How do AI detectors create transparency in student AI use? AI detectors create transparency by exposing writing patterns, revisions, and AI-generated inputs within a document. AI detectors record structural changes and generation signals to show how the content evolved. This visibility allows accurate evaluation of authorship and enforces fair grading standards.

Why do AI detectors deter cheating? AI detectors deter cheating because detection introduces a measurable risk for unauthorized AI usage. AI detectors identify machine-generated patterns, which discourages submission of unoriginal work. This mechanism reinforces compliance with academic policies.

What role do AI detectors play in assessing learning? AI detectors assess learning by distinguishing between human reasoning patterns and automated text generation. AI detectors analyze structure and predictability to determine whether independent thinking occurred. This distinction ensures that academic outcomes reflect actual skill levels.

How do AI detectors combat misconduct? AI detectors combat misconduct by identifying patterns linked to contract cheating, collusion, and AI rewriting. AI detectors evaluate structural consistency and generation signals to detect external content creation. This process strengthens institutional control over academic violations.

Why are AI detectors essential for upholding academic standards? AI detectors uphold academic standards by identifying AI-generated text and enforcing originality checks before evaluation. AI detectors automate detection workflows, which reduces manual review effort and increases consistency. This system preserves fairness in grading and certification.

What makes AI detectors important for maintaining content authenticity? AI detectors maintain content authenticity by identifying AI-generated material across journalism, marketing, and publishing. AI detectors reduce risks linked to misinformation, deepfakes, and automated writing. Search engines prioritize original content, and ranking declines occur when AI-generated content lacks uniqueness.

What Are the Differences Between AI Detectors and Plagiarism Checkers?

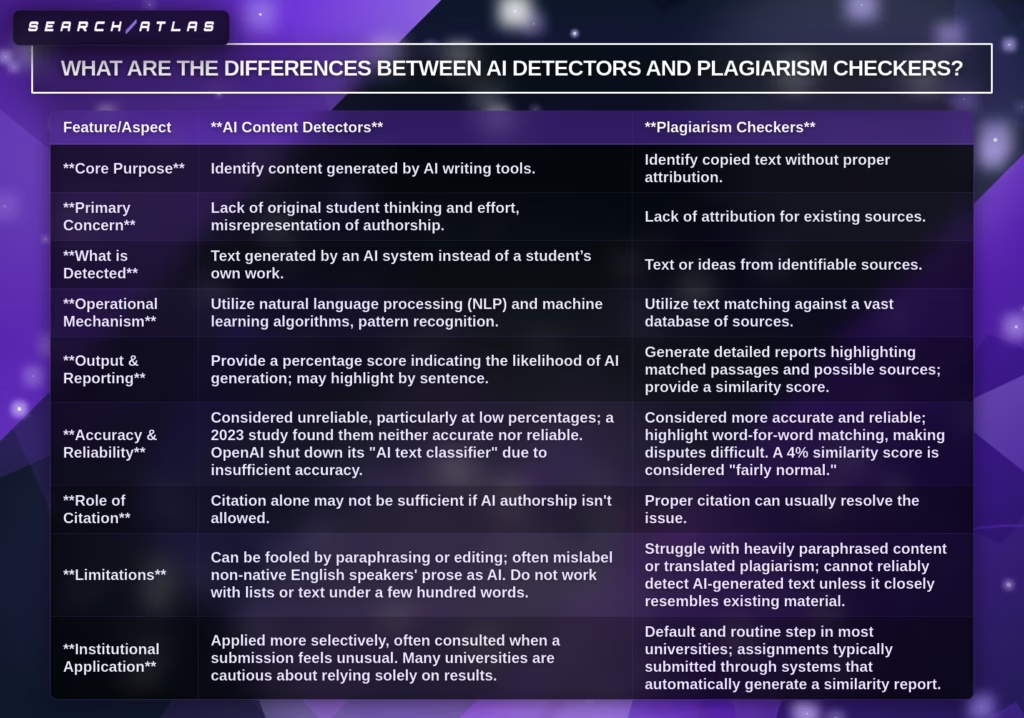

AI detectors and plagiarism checkers differ in purpose, detection method, output, and reliability. AI detectors identify AI-generated text based on writing patterns and predictability. Plagiarism checkers identify copied or paraphrased text based on source matching and attribution gaps.

What is the core purpose of AI detectors vs plagiarism checkers? AI detectors identify content generated by artificial intelligence, while plagiarism checkers identify copied content without proper attribution. AI detectors focus on authorship authenticity and writing origin. Plagiarism checkers focus on source attribution and content reuse.

What does each tool detect? AI detectors detect machine-generated text, while plagiarism checkers detect text matched to existing sources. AI detectors analyze structure, predictability, and linguistic signals. Plagiarism checkers compare text against databases of academic papers, websites, and documents.

How do AI detectors and plagiarism checkers differ across key features? AI detectors and plagiarism checkers differ across 9 main features. The features are listed below.

| Feature/Aspect | AI Content Detectors | Plagiarism Checkers |
|---|---|---|
| Core Purpose | Identify AI-generated text. | Identify copied text without attribution. |
| Primary Concern | Authorship authenticity and lack of original thinking. | Missing attribution from existing sources. |
| What is Detected | AI-generated writing patterns. | Text or ideas from identifiable sources. |
| Operational Mechanism | Natural Language Processing, machine learning, and pattern recognition. | Text matching against large source databases. |
| Output and Reporting | Probability score of AI generation with sentence-level highlights. | Similarity score with matched passages and source links. |
| Accuracy and Reliability | Lower reliability due to probabilistic estimation. | Higher reliability due to exact text matching. |
| Role of Citation | Citation does not resolve AI authorship concerns. | Citation resolves most attribution issues. |
| Limitations | Fails with edited AI text, short content, and non-native writing. | Fails with heavy paraphrasing or translated content. |
| Institutional Application | Used selectively during investigations. | Used routinely in submission workflows. |

How accurate are AI detectors compared to plagiarism checkers? Plagiarism checkers are more accurate and reliable than AI detectors. Plagiarism checkers rely on direct text matching, which produces verifiable evidence. AI detectors produce probabilistic estimates, and a 2023 study found them unreliable at low percentages, while OpenAI discontinued its AI classifier due to low accuracy.

When do AI detectors need to be used? AI detectors are used when authorship authenticity is the primary concern. AI detectors provide probability scores that indicate AI generation and trigger a deeper investigation into the writing’s origin. AI detectors are applied when the writing style appears inconsistent or synthetic.

When do plagiarism checkers need to be used? Plagiarism checkers are used when attribution and source matching are the primary concern. Plagiarism checkers generate similarity reports with direct evidence of copied or reused content. Plagiarism checkers are standard in academic submission systems.

Can AI detectors and plagiarism checkers work together? Yes, AI detectors and plagiarism checkers work together in a combined integrity workflow. AI detectors evaluate authorship patterns, while plagiarism checkers verify source attribution. Human review validates flagged results because automated scores do not confirm misconduct.

What Are Key AI Detection Techniques?

Key AI detection techniques are methods that analyze text predictability, variation, classification signals, and semantic structure to identify AI-generated content. AI detection techniques evaluate linguistic patterns, statistical probability, and contextual meaning to distinguish machine-generated writing from human writing.

There are 4 main AI detection techniques. The techniques are listed below.

- Perplexity measures text predictability.

- Burstiness measures sentence variation.

- AI classifiers identify machine-generated patterns.

- Context and embeddings analyze semantic meaning.

1. Perplexity (Predictability)

Perplexity is a key detection technique because it measures text predictability, which directly exposes differences between AI-generated writing and human writing. Perplexity quantifies how likely each word appears in sequence, which aligns with how large language models optimize output.

There are 5 main reasons perplexity is a key detection technique. The reasons are listed below.

- AI-generated text consistently shows lower perplexity scores.

- Human writing naturally shows higher perplexity.

- Detection tools use this gap to classify text with high accuracy.

- Perplexity measures predictability, which is a core LLM output behavior.

- Perplexity identifies abnormal or contaminated data patterns.

How does AI-generated text’s lower perplexity enable detection? AI-generated text produces lower perplexity scores because language models optimize for predictable and fluent output. GPT-4o records perplexity scores of 15 to 20 on benchmarks, while Claude 3.5 records scores of 12 to 18. Human-written abstracts show a median perplexity of 35.9, while AI-generated abstracts show 21.2. This gap creates a measurable signal for detection.

Why does human writing show higher perplexity? Human writing shows higher perplexity because human language includes unpredictable word choices and stylistic variation. Human writing selects from a wider range of possible words at each step. A perplexity score of 50 reflects selection from 50 likely word options, while a score of 5 reflects limited variation and high predictability.
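
The "50 likely word options" reading can be checked with a short calculation. The sketch below is illustrative only: it assumes per-token probabilities are already available from some language model, and the function name `perplexity` is a hypothetical helper, not part of any detector's API.

```python
import math


def perplexity(token_probs):
    """Return exp of the average negative log-probability per token.

    token_probs: probabilities a language model assigned to each
    observed token (assumed to be given; no model is called here).
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


# A model that is always choosing among 50 equally likely words.
uniform_50 = perplexity([1 / 50] * 10)
# A model that is always choosing among 5 equally likely words.
uniform_5 = perplexity([1 / 5] * 10)
```

When every token has probability 1/50 the result is 50 (up to floating-point rounding), and when every token has probability 1/5 the result is 5, which matches the interpretation of perplexity as an effective number of word choices.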

Why does predictability make perplexity effective? Perplexity measures predictability, which defines how AI models generate text token by token. Large language models prioritize the most probable next word, which reduces variability and lowers perplexity. Human writing introduces irregular patterns, which increases perplexity.

How does perplexity identify data issues and anomalies? Perplexity identifies abnormal patterns because extremely low or high scores signal data quality problems. Very low perplexity indicates training data leakage or memorization. Very high perplexity indicates corrupted text, encoding errors, or incoherent content. This diagnostic function improves model evaluation and dataset validation.

2. Burstiness (Variation)

Burstiness is a key detection technique because it measures variation in sentence length and structure, which clearly separates human writing patterns from AI-generated patterns. Burstiness captures how text shifts between short and long sentences, which reflects natural human expression versus algorithmic consistency.

There are 4 main reasons burstiness is a key detection technique. The reasons are listed below.

- Human writing shows high variation in sentence length and rhythm.

- AI-generated text shows uniform and structured patterns.

- Detection tools analyze sentence length distributions to identify patterns.

- Burstiness combines with perplexity to improve detection accuracy.

How does human writing variation make burstiness effective? Human writing produces high burstiness because sentence length and rhythm change based on thought flow and emphasis. Human writing shifts between short sentences of 5 words and long sentences of 50 words. This variation creates irregular patterns that reflect personal style and cognitive processing.

Why does AI-generated text uniformity make burstiness effective? AI-generated text produces low burstiness because sentence structures follow consistent patterns. Early AI models generate sentences with similar length and structure due to probability-based token selection. This consistency creates a uniform distribution that signals machine generation.

How do detectors use sentence length distribution for burstiness analysis? AI detectors analyze the probability distribution of sentence lengths to classify text as human or AI-generated. Narrow and uniform distributions indicate AI-generated content. Irregular and wide distributions indicate human writing. GPTZero uses burstiness as a core statistical signal in detection systems.
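
One simple way to turn a sentence length distribution into a single number is the coefficient of variation (standard deviation divided by mean). This is an assumed stand-in for illustration. The source does not say which formula GPTZero or any other tool uses, and `sentence_lengths` and `burstiness` are hypothetical names.

```python
import re
import statistics


def sentence_lengths(text):
    """Split on ., ! or ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Coefficient of variation of sentence lengths (0.0 means uniform)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    mean = statistics.mean(lengths)
    return statistics.pstdev(lengths) / mean if mean else 0.0


uniform_text = "The cat sat down. The dog ran off. The bird flew up."
varied_text = (
    "Stop. The storm that had been building over the hills "
    "all afternoon finally broke."
)
```

Here `uniform_text` has three sentences of equal length, so its score is 0.0, while `varied_text` mixes a one-word sentence with a long one and scores higher. The naive regex splitter will mishandle abbreviations and decimals, so a production system would need a proper sentence tokenizer.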

Why does combining burstiness with perplexity improve detection? Burstiness and perplexity form a composite detection model that increases classification accuracy. Perplexity measures predictability, while burstiness measures variation. Low perplexity with low burstiness signals AI-generated text, while higher variability with occasional spikes signals human writing.
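
The composite rule described above can be sketched as a two-signal decision. The cutoffs (`ppl_cutoff`, `burst_cutoff`) are invented placeholders, not values published by any detector, and the function name `combined_signal` is hypothetical.

```python
def combined_signal(perplexity, burstiness, ppl_cutoff=25.0, burst_cutoff=0.3):
    """Combine two signals into a coarse label.

    Both cutoffs are illustrative assumptions. Real tools calibrate
    thresholds on labeled data and return probabilities, not labels.
    """
    low_ppl = perplexity < ppl_cutoff
    low_burst = burstiness < burst_cutoff
    if low_ppl and low_burst:
        return "likely AI"
    if not low_ppl and not low_burst:
        return "likely human"
    # The two signals disagree, so the rule declines to pick a side.
    return "inconclusive"
```

Returning "inconclusive" when the signals disagree reflects the point that either metric alone is a weak indicator.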

3. AI Classifiers

AI classifiers are a key detection technique because they use machine learning models and Natural Language Processing to classify text as AI-generated or human-written with high accuracy. AI classifiers process linguistic patterns, statistical signals, and semantic structures to produce a classification outcome.

There are 5 main reasons AI classifiers are a key detection technique. The reasons are listed below.

- AI classifiers use advanced machine learning models to detect patterns.

- AI classifiers apply Natural Language Processing to analyze linguistic nuances.

- AI classifiers use deep learning and interpretability techniques for classification.

- AI classifiers train on labeled datasets through supervised learning.

- AI classifiers achieve high accuracy on benchmark evaluations.

How do machine learning models make AI classifiers effective? AI classifiers use neural networks and ensemble models trained on human and AI-generated text to identify structural and stylistic patterns. AI classifiers convert text into tokens, transform tokens into embeddings, and process embeddings through models that output a classification score between 0 and 1. This pipeline enables pattern recognition at scale.
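
The token, embedding, and classification stages can be illustrated with a deliberately tiny pipeline. Everything in this sketch is an assumption made for illustration: the two-dimensional `EMBEDDINGS` table, the `WEIGHTS`, and the word choices are invented, and real classifiers use learned subword tokenizers and neural networks with far more parameters.

```python
import math

# Hypothetical 2-dimensional embeddings for a handful of words.
EMBEDDINGS = {
    "moreover": [0.9, 0.1],
    "furthermore": [0.8, 0.2],
    "honestly": [0.1, 0.9],
    "weird": [0.2, 0.8],
}
UNKNOWN = [0.5, 0.5]   # fallback vector for words not in the table
WEIGHTS = [2.0, -2.0]  # invented classifier weights
BIAS = 0.0


def tokenize(text):
    """Stage 1: split lowercase text on whitespace."""
    return text.lower().split()


def embed(tokens):
    """Stage 2: look up each token and mean-pool into one vector."""
    vectors = [EMBEDDINGS.get(t, UNKNOWN) for t in tokens]
    if not vectors:
        return list(UNKNOWN)
    dim = len(UNKNOWN)
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def ai_probability(text):
    """Stage 3: logistic layer mapping the pooled vector to a 0-1 score."""
    pooled = embed(tokenize(text))
    score = sum(w * x for w, x in zip(WEIGHTS, pooled)) + BIAS
    return 1.0 / (1.0 + math.exp(-score))
```

With these made-up weights, `ai_probability("moreover furthermore")` lands above 0.5 and `ai_probability("honestly weird")` lands below it. The output is a score, not a verdict.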

Why is Natural Language Processing critical for AI classifiers? Natural Language Processing enables AI classifiers to analyze syntax, context, and word relationships in text. Natural Language Processing evaluates sentence structure, word choice, and stylistic consistency to detect signals linked to machine-generated writing. This analysis improves classification precision.

What role do deep learning and interpretability play in AI classifiers? Deep learning enables complex pattern recognition, while interpretability explains classification decisions. AI classifiers detect signals such as repetition, uniform structure, and low perplexity. Interpretability techniques expose which features influenced the classification outcome.

How does supervised learning improve AI classifier performance? Supervised learning trains AI classifiers on labeled datasets to distinguish human-written text from AI-generated text. AI classifiers learn from features such as token frequency, syntactic structure, perplexity scores, and semantic embeddings. Diverse training data improves robustness across writing styles.
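
A minimal sketch of supervised training on labeled examples follows. It assumes each document has already been reduced to two features (a scaled perplexity and a burstiness value) and fits a logistic regression by batch gradient descent. The eight samples are invented, and production classifiers use far larger datasets and models.

```python
import math

# Toy labeled data: (scaled perplexity, burstiness) -> 1 for AI, 0 for human.
# All values are invented for illustration.
SAMPLES = [
    ((0.15, 0.10), 1), ((0.20, 0.15), 1), ((0.18, 0.20), 1), ((0.12, 0.05), 1),
    ((0.40, 0.70), 0), ((0.50, 0.80), 0), ((0.36, 0.60), 0), ((0.45, 0.90), 0),
]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, features):
    """Return the model's estimated probability that the sample is AI."""
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)


def train(samples, learning_rate=1.0, epochs=5000):
    """Fit logistic regression with full-batch gradient descent."""
    weights = [0.0, 0.0]
    bias = 0.0
    n = len(samples)
    for _ in range(epochs):
        grad_w = [0.0, 0.0]
        grad_b = 0.0
        for features, label in samples:
            error = predict(weights, bias, features) - label
            for i, x in enumerate(features):
                grad_w[i] += error * x
            grad_b += error
        weights = [w - learning_rate * g / n for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / n
    return weights, bias


weights, bias = train(SAMPLES)
```

After training, `predict(weights, bias, features)` scores the AI-labeled samples above 0.5 and the human-labeled samples below it. Fitting the training set says nothing about performance on unseen writing styles, which is why the diversity of training data matters.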

What accuracy levels do modern AI classifiers achieve? Modern AI classifiers achieve accuracy rates above 95% on benchmark tests. GPTZero reports 99% accuracy with a 1% false positive rate for AI versus human classification. Advanced models use precision, recall, and F1 score to evaluate performance consistency.
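
Precision, recall, and F1 score follow directly from confusion-matrix counts. The sketch below uses invented counts purely to show the arithmetic; they are not results from GPTZero or any other tool.

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall, and F1 from confusion-matrix counts.

    tp: AI texts correctly flagged
    fp: human texts wrongly flagged
    fn: AI texts that were missed
    """
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical evaluation: 90 AI texts caught, 10 human texts
# wrongly flagged, 30 AI texts missed.
precision, recall, f1 = precision_recall_f1(tp=90, fp=10, fn=30)
```

With these counts precision is 0.9 and recall is 0.75. A headline accuracy figure hides this split, which is why false positive rate deserves separate attention when the cost of a wrong accusation is high.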

4. Context and Embeddings

Context and embeddings are a key detection technique because they represent text meaning as numerical vectors that capture semantic relationships and contextual variation. Context and embeddings encode how words function within sentences and across documents, which enables precise detection of AI-generated patterns.

There are 6 main reasons context and embeddings are a key detection technique. The reasons are listed below.

- Context and embeddings capture nuanced word meanings based on surrounding text.

- Context and embeddings improve model accuracy through vector representation.

- Context and embeddings enhance retrieval by modeling document relationships.

- Context and embeddings improve generalization for unseen queries.

- Context and embeddings drive major gains in information retrieval performance.

- Context engineering based on embeddings is becoming a core AI skill.

How do context and embeddings capture nuanced word meanings? Context and embeddings generate different vectors for the same word depending on the surrounding text. Context and embeddings assign dynamic representations, so the word “bank” receives one vector for finance and another for a river edge. This approach replaces static representations with context-aware meaning.
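
The "bank" example can be made concrete with toy vectors. This sketch is a heavy simplification: it assumes a hand-written `STATIC` table of two-dimensional vectors and builds a context-aware vector by averaging a word with its neighbors, whereas real contextual models compute the mixing with learned attention layers.

```python
import math

# Invented static vectors: dimension 0 ~ "finance", dimension 1 ~ "nature".
STATIC = {
    "bank": [0.5, 0.5],
    "loan": [1.0, 0.0],
    "deposit": [0.9, 0.1],
    "river": [0.0, 1.0],
    "muddy": [0.1, 0.9],
}


def contextual_vector(word, neighbors):
    """Average the word's static vector with its neighbors' vectors."""
    vectors = [STATIC[word]] + [STATIC[n] for n in neighbors]
    dim = len(STATIC[word])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


bank_finance = contextual_vector("bank", ["loan", "deposit"])
bank_river = contextual_vector("bank", ["river", "muddy"])
```

The same word ends up with two different vectors: `bank_finance` sits closer to `STATIC["loan"]` than `bank_river` does, which is the behavior a static lookup table cannot produce.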

Why do context and embeddings improve model accuracy? Context and embeddings improve accuracy by converting language into numerical vectors that enable precise pattern recognition. Context and embeddings allow models to process ambiguity, complex syntax, and semantic relationships. Models using deep contextual embeddings outperform traditional methods in tasks with complex language.

What impact do context and embeddings have on information retrieval performance? Context and embeddings have significantly improved information retrieval benchmarks since 2019. Context and embeddings enable semantic search systems to understand language relationships more effectively. This advancement improves tasks like search, summarization, and translation.

Why is context engineering becoming a core skill? Context engineering is becoming a core skill because embedding-driven systems rely on structured context rather than prompt phrasing. Context and embeddings shift optimization from prompt design to context design. Industry projections indicate this shift will define AI system development by 2026.

What Are the Best Practices For Using AI Detectors?

Best practices for using AI detectors are structured methods that improve accuracy, reduce false positives, and ensure fair evaluation of content authenticity. Best practices define how AI detectors integrate into verification workflows and how results are interpreted responsibly.

There are 7 main best practices for using AI detectors. The best practices are listed below.

- Acknowledge the limitations of AI detectors.

- Cross-check results with multiple tools.

- Recognize AI writing patterns.

- Consider context and intent.

- Maintain transparency in detection use.

- Integrate AI detection into a broader originality process.

- Engage in dialogue during evaluation.

1. Acknowledge the Limitations of AI Detectors

Acknowledging the limitations is the best practice because AI detectors produce high error rates, bias, and unreliable results that require careful interpretation. Acknowledging the limitations ensures that AI detector outputs are treated as signals, not proof, which prevents incorrect conclusions.

There are 5 main reasons acknowledging the limitations is the best practice. The reasons are listed below.

- AI detectors produce high error rates and false accusations.

- AI detectors show bias against underrepresented groups.

- AI-generated text is easy to modify to evade detection.

- AI detectors rely on probabilistic models with structural limitations.

- Over-reliance leads to unjust outcomes and loss of trust.

How do high error rates impact AI detector reliability? High error rates reduce reliability because AI detectors misclassify both AI-generated and human-written text. OpenAI discontinued its AI detection tool after it correctly identified only 26% of AI text and falsely labeled 9% of human text. Some detectors report error rates of 10% to 30%, which creates inconsistent results.
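
The practical effect of those two rates depends on how much of the submitted text is actually AI-generated. The sketch below works through the arithmetic using the 26% and 9% figures quoted above. The 20% share of AI-written submissions is an assumption chosen for illustration, and `flag_precision` is a hypothetical helper name.

```python
def flag_precision(true_positive_rate, false_positive_rate, ai_share):
    """Fraction of flagged documents that are really AI-generated.

    ai_share is the assumed proportion of AI-written documents in
    the pool being checked (a value between 0 and 1).
    """
    flagged_ai = true_positive_rate * ai_share
    flagged_human = false_positive_rate * (1.0 - ai_share)
    total_flagged = flagged_ai + flagged_human
    return flagged_ai / total_flagged if total_flagged else 0.0


# 26% detection rate, 9% false positive rate, and an assumed
# pool in which 20% of documents are AI-written.
share_correct = flag_precision(0.26, 0.09, ai_share=0.20)
```

Under that assumption, roughly 42% of flagged documents would be AI-written and the remaining flags would land on human authors. A different `ai_share` changes the result, which is one reason a flag alone cannot serve as proof.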

Why do AI detectors show bias against underrepresented groups? AI detectors show bias because detection models misinterpret structured or formulaic writing as AI-generated text. Research shows that over 61% of essays by non-native English speakers were misclassified as AI-generated. Up to 97% of TOEFL essays were flagged by at least one detector. This bias stems from reliance on perplexity and burstiness signals.

Why does AI-generated text evade detection so easily? AI-generated text evades detection easily because small edits change detection signals significantly. Paraphrasing, adding variation, and inserting personal tone reduce detection accuracy. One study showed accuracy dropped from 74% to 42% after minor edits. Simple prompt changes allow bypass rates of 80% to 90%.

What are the fundamental flaws in AI detection systems? AI detection systems rely on probabilistic algorithms that produce variable and non-definitive outputs. Each AI detector trains on different datasets, which leads to inconsistent classification results. As large language models improve, the gap between AI and human writing decreases, which weakens detection signals.

How does over-reliance on AI detectors create unjust outcomes? Over-reliance creates unjust outcomes because false positives lead to incorrect accusations and penalties. Cases show historical documents and human-written work flagged as AI-generated. Institutions like UCLA disabled AI detection features due to trust concerns and accuracy limitations.

2. Cross-check With Multiple Tools

Cross-checking with multiple tools is the best practice because single AI detectors produce inconsistent results, while comparison across tools improves reliability and reduces error. Cross-checking with multiple tools creates a stronger verification process because different AI detectors use different models, datasets, and scoring systems.

There are 5 main reasons why cross-checking with multiple tools is the best practice. The reasons are listed below.

- Single AI detectors produce inconsistent and limited results.

- Cross verification reduces errors and improves reliability.

- Human review adds fairness and contextual judgment.

- Integrated suites and hybrid models create stronger detection systems.

- Different training data across models exposes gaps missed by one detector.

How do single AI detectors show inconsistency and limitations? Single AI detectors show inconsistency because the same text often receives very different scores across platforms. One self-written research paper received 23% AI from Originality.AI and 82% AI from GPTZero. Verified ChatGPT text received scores ranging from 40% to 100% AI, while some tools marked the same text as human-written. Error rates average 15% to 25% for text detection.

Why does cross verification improve reliability? Cross verification improves reliability because comparing outputs from several tools reduces dependence on one probabilistic score. A flagged result from one detector gains meaning only after comparison with other detectors. This comparison reduces false positives and creates a stronger basis for review.
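
A cross-check can be as simple as collecting each tool's score and measuring how far apart they are. The sketch below assumes the scores have been gathered manually as percentages. It does not call any detector's API, and the 20-point `max_spread` tolerance is an invented placeholder.

```python
import statistics


def cross_check(scores, max_spread=20.0):
    """Summarize AI-likelihood percentages from several detectors.

    scores: mapping of detector name -> percentage (0 to 100).
    max_spread: assumed tolerance before results count as conflicting.
    """
    values = list(scores.values())
    spread = max(values) - min(values)
    return {
        "mean": statistics.mean(values),
        "spread": spread,
        "detectors_agree": spread <= max_spread,
    }


# The two scores reported for one self-written research paper.
report = cross_check({"Originality.AI": 23.0, "GPTZero": 82.0})
```

For the research paper example, the spread is 59 points, so `detectors_agree` is false and the result should go to human review rather than being averaged into a single number.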

Why is human review necessary during cross-checking? Human review is necessary because AI detector scores do not evaluate intent, context, or writing history. Human review examines the full submission, checks contextual relevance, and validates whether the flagged patterns actually indicate AI generation. This process improves fairness and prevents automatic conclusions.

Why do integrated suites and hybrid models strengthen detection? Integrated suites and hybrid models strengthen detection because they combine multiple signals with human oversight. Hybrid systems join machine learning analysis with expert review, which creates stronger resistance against rapidly changing AI writing patterns. This structure improves reliability more than a single detector.

Why does different training data across models matter? Different training data across models matters because one detector often identifies patterns that another detector misses. Each model learns from different text samples, architectures, and classification signals. Agreement across multiple models creates a stronger confidence signal than reliance on one model alone.

3. Learn to Recognize AI Writing Patterns

Learning to recognize AI writing patterns is the best practice because pattern recognition improves evaluation accuracy, editing precision, and control over AI-assisted content. Learning to recognize AI writing patterns allows direct identification of machine-generated signals beyond detector scores.

There are 5 main reasons learning to recognize AI writing patterns is the best practice. The reasons are listed below.

- Pattern recognition enables self-evaluation and iterative improvement.

- Pattern recognition prevents AI-sounding text.

- Pattern recognition enables targeted editing based on intensity.

- Pattern recognition signals when the writing approach requires adjustment.

- Pattern recognition preserves human voice and cognitive control.

How does pattern recognition enable self-evaluation and improvement? Pattern recognition enables self-evaluation because identifying AI signals allows iterative editing and score reduction. In one example, a text scored at 93% AI dropped to 79% after two editing cycles. Pattern recognition isolates detectable structures, which allows focused revisions and measurable improvement.

Why does pattern recognition prevent AI-sounding text? Pattern recognition prevents AI-sounding text because it identifies uniform structure, predictable phrasing, and low variation. Human detection accuracy is 53%, which is close to random at 50%. Pattern recognition compensates for this limitation by exposing detectable signals that reduce machine-like tone.

How does pattern recognition enable targeted editing? Pattern recognition enables targeted editing because AI detectors highlight sections based on intensity levels. High-intensity sections require structural changes, while low-intensity sections require minor variation. In a document with 25% AI-patterned content, the highlights isolate the specific segments that need revision.

Why do consistent scores indicate a need to adjust the writing approach? Consistent high scores indicate structural dependence on AI-generated patterns. A score near 25% reflects mostly human-written content with detectable AI influence. Repeated high scores require changes in prompting, drafting, and editing workflow.

How does pattern recognition preserve human voice? Pattern recognition preserves human voice because editing focuses on removing AI signals without removing original reasoning. This process maintains unique expression and critical thinking while refining structure. Iterative editing and rescoring confirm alignment with human writing patterns.

4. Consider Context and Intent

Considering context and intent is a best practice because detection results require meaning, purpose, and usage conditions to produce accurate and fair decisions. Considering context and intent ensures that AI detector outputs align with real authorship, allowed usage, and evaluation criteria.

There are 6 main reasons why considering context and intent is a best practice. The reasons are listed below.

- Context and intent improve detection accuracy.

- Context and intent increase evaluation efficiency.

- Context and intent enable scalable analysis.

- Context and intent generate actionable data insights.

- Context and intent strengthen model confidence and training.

- Context and intent improve interaction quality and outcomes.

How do context and intent improve detection accuracy? Context and intent improve accuracy because they reduce misclassification between AI-generated and human-written text. Natural Language Processing analyzes purpose and semantic relationships instead of isolated keywords. Context-aware evaluation increases prediction precision by 5.3x compared to non-contextual analysis.

Why do context and intent increase efficiency? Context and intent increase efficiency by reducing processing time and eliminating irrelevant analysis. Intent classification identifies the purpose of text and aligns evaluation criteria faster. Systems using intent recognition reduce response time by 50%.

Why do context and intent enable scalability? Context and intent enable scalability because structured intent classification processes large volumes of content consistently. Context-driven systems maintain accuracy across increasing input size without degradation. This structure prevents bottlenecks that occur in keyword-based evaluation.

How do context and intent generate data insights? Context and intent generate insights because categorized intent data reveals recurring patterns and issues. Intent classification groups content into categories, which exposes trends and anomalies. This analysis supports decision-making and process improvement.

Why do context and intent strengthen model confidence? Context and intent strengthen confidence because models assign probability scores to intent classification. Confidence levels prevent incorrect classification when prediction certainty is low. This mechanism improves reliability through controlled decision thresholds and continuous learning.
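The controlled decision threshold described above can be sketched in a few lines of Python. This is a minimal illustration, not the logic of any specific tool: the function name `decide`, the label strings, and the 0.20 and 0.80 cutoffs are assumptions chosen for the example.

```python
def decide(ai_probability: float, low: float = 0.20, high: float = 0.80) -> str:
    """Map a detector probability to a label, abstaining when certainty is low.

    The cutoffs are hypothetical; a real deployment would calibrate them.
    """
    if not 0.0 <= ai_probability <= 1.0:
        raise ValueError("ai_probability must be between 0 and 1")
    if ai_probability >= high:
        return "likely AI: escalate to human review"
    if ai_probability <= low:
        return "likely human"
    # Scores in the middle band are reported as inconclusive instead of
    # being forced into either class.
    return "inconclusive"


for score in (0.05, 0.50, 0.93):
    print(score, "->", decide(score))
```

The middle band is the point of the design: a score that is neither clearly high nor clearly low produces no classification at all.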

How do context and intent improve outcomes? Context and intent improve outcomes because evaluation aligns with real usage, purpose, and communication goals. Context-driven analysis produces more relevant interpretations and accurate conclusions. This alignment improves trust, consistency, and decision quality.

5. Be Transparent About Detection

Being transparent about detection is a best practice because transparency replaces unreliable scores with process-based evaluation, which improves fairness and trust. Being transparent about detection ensures that AI detector results are interpreted within clear rules, documented workflows, and visible authorship signals.

There are 4 main reasons why being transparent about detection is a best practice. The reasons are listed below.

- Transparency shifts evaluation toward the learning and writing process.

- Transparency builds trust between evaluators and writers.

- Transparency protects against arbitrary or incorrect decisions.

- Transparency defines ethical AI usage standards.

How does transparency shift focus to learning and process? Transparency shifts focus because AI detection scores do not prove authorship, while process evidence shows how the content was created. AI detectors generate probabilistic outputs, and OpenAI discontinued its classifier due to low reliability. Process-based evaluation reviews drafts, revisions, and reasoning steps, which aligns the assessment with learning outcomes.

Why does transparency build trust between evaluators and writers? Transparency builds trust because clear rules define acceptable AI usage and remove uncertainty. Explicit policies distinguish brainstorming, outlining, and full generation. Visibility into drafts and sources replaces hidden usage, which creates open evaluation conditions and consistent expectations.

How does transparency prevent arbitrary decisions? Transparency prevents arbitrary decisions because evaluation includes multiple evidence points instead of a single detector score. AI detectors misclassify structured or non-native writing, which creates a risk of false positives. A transparent workflow reviews citation quality, argument depth, and writing history before conclusions.

How does transparency define ethical AI usage? Transparency defines ethical usage because disclosure requirements document how AI contributed to the content. AI use statements and revision logs show where AI assisted with idea generation or drafting. This structure separates acceptable assistance from full automation and preserves accountability in content creation.

6. Use AI Detection as Part of a Wider Originality Check

Using AI detection as part of a wider originality check is a best practice because combining multiple verification methods improves accuracy, reduces errors, and ensures complete evaluation of content authenticity. Using AI detection as part of a wider originality check integrates authorship analysis, source verification, and human review into a single workflow.

There are 5 main reasons why using AI detection as part of a wider originality check is a best practice. The reasons are listed below.

- Combined methods create transparency in AI usage.

- Combined methods provide defense against evolving AI threats.

- Combined methods reduce the limitations of standalone detectors.

- Combined methods strengthen academic integrity evaluation.

- Combined methods enable responsible AI integration.

How does combining methods create transparency in AI usage? Combining methods creates transparency because AI detection signals are supported by authorship evidence and source validation. AI detectors indicate possible AI generation, while plagiarism checkers verify attribution and originality. This combination reveals how content was created and whether it follows defined policies.

Why do combined methods defend against evolving AI threats? Combined methods defend against evolving threats because different tools detect different risk types. AI detectors identify synthetic text that does not match existing sources. Plagiarism checkers identify copied material. This layered approach captures both generated and reused content that single tools miss.

How do combined methods reduce the limitations of standalone detectors? Combined methods reduce limitations because multiple systems offset false positives and false negatives. AI detectors produce probabilistic results with error margins of 10% to 20%. Cross-validation with plagiarism detection, citation checks, and authorship tracking reduces misclassification risk.
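A short calculation shows why requiring agreement between checks lowers the false positive risk. The sketch below assumes the checks make errors independently, which real detectors trained on similar signals may not, and the 12% and 14% rates are illustrative inputs. The function name `joint_false_positive_rate` is hypothetical.

```python
def joint_false_positive_rate(rates: list[float]) -> float:
    """False positive rate when a text is flagged only if every check flags it.

    Assumes the checks err independently, which real detectors may not.
    """
    joint = 1.0
    for rate in rates:
        if not 0.0 <= rate <= 1.0:
            raise ValueError("each rate must be between 0 and 1")
        joint *= rate
    return joint


# Illustrative per-tool false positive rates of 12% and 14%.
print(f"{joint_false_positive_rate([0.12, 0.14]):.4f}")  # 0.0168
```

The tradeoff is that requiring agreement also lowers the share of AI text that gets flagged, so the combined result still serves as an indicator and not as proof.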

How do combined methods strengthen academic integrity evaluation? Combined methods strengthen integrity because evaluation includes multiple evidence points beyond a single score. Review processes examine writing history, citation accuracy, and argument structure. Decisions require multiple indicators instead of relying only on detection output.

Why do combined methods enable responsible AI integration? Combined methods enable responsible AI integration because clear workflows define acceptable AI usage and verification standards. Policies require disclosure, attribution, and process documentation. This structure ensures ethical use of AI while maintaining content authenticity.

7. Engage in Dialogue

Engaging in dialogue is a best practice because conversation adds context, validates intent, and replaces automated judgment with evidence-based evaluation. Engaging in dialogue ensures that AI detector results are interpreted through communication, which improves fairness and accuracy.

There are 6 main reasons why engaging in dialogue is a best practice. The reasons are listed below.

- Dialogue builds trust through transparency.

- Dialogue strengthens learning and critical thinking.

- Dialogue enables nuanced human judgment.

- Dialogue addresses bias and equity risks.

- Dialogue shifts focus from detection to prevention.

- Dialogue enables collaborative problem solving.

How does dialogue build trust and transparency? Dialogue builds trust because clear communication explains how AI detection works and how results are used. Disclosure of AI detection in advance removes uncertainty and prevents hidden evaluation. This clarity creates consistent expectations and reduces conflict.

Why does dialogue strengthen learning and critical thinking? Dialogue strengthens learning because discussion clarifies appropriate and inappropriate AI usage. Structured conversations explain how AI fits into drafting, research, and revision. This process reinforces independent thinking and controlled AI usage.

How does dialogue enable nuanced human judgment? Dialogue enables nuanced judgment because evaluators interpret AI detector signals alongside explanations from the writer. AI detectors provide scores without reasoning, while dialogue reveals intent, workflow, and decision-making. This combination produces accurate conclusions.

How does dialogue address bias and equity risks? Dialogue addresses bias because discussion allows evaluation beyond patterns that trigger false positives. AI detectors misclassify non-native English writing and structured text. Direct communication introduces context that prevents incorrect classification.

Why does dialogue shift focus to prevention? Dialogue shifts focus to prevention because early communication defines rules and reduces misuse before submission. Clear expectations guide behavior and reduce reliance on post-submission detection. This approach improves compliance and reduces violations.

How does dialogue enable collaborative problem solving? Dialogue enables problem-solving because discussion identifies causes of flagged results and defines corrective actions. Conversations reveal misunderstanding, misuse, or process gaps. This interaction leads to improved workflows and stronger academic integrity systems.

What are the Limitations of AI Detectors?

Limitations of AI detectors are constraints that reduce accuracy, reliability, and fairness when identifying AI-generated content. Limitations of AI detectors affect how detection results are interpreted and why AI detectors require supporting verification methods.

There are 6 main limitations of AI detectors. The limitations are listed below.

- High false positives.

- Easy evasion leading to false negatives.

- Bias and reduced accuracy across user groups.

- Rapidly advancing AI technology.

- Context and length constraints.

- Lack of definitive evidence.

1. High False Positives

High false positives are a limitation because AI detectors incorrectly classify human-written content as AI-generated, which reduces reliability and creates harmful consequences. High false positives distort evaluation outcomes and weaken trust in AI detection systems.

There are 6 main reasons high false positives are a limitation. The reasons are listed below.

- AI detectors frequently misclassify human writing.

- False positives create serious academic and professional consequences.

- False positives reveal bias across writing styles and groups.

- False positives prove low accuracy and reliability.

- False positives persist despite simple evasion techniques.

- False positives result from flawed detection mechanisms.

How frequently do AI detectors misclassify human writing? AI detectors misclassify human writing at rates of 12% to 27%, depending on the tool and dataset. Tests show Originality.AI flagged 18% of human text, GPTZero flagged 14%, and Turnitin flagged 12%. A behavioral health study reported over 27% of human academic texts flagged as AI-generated.

Why do false positives create serious consequences? False positives create consequences because incorrect classification leads to accusations of misconduct. Students receive penalties and records of academic violations. Around 10% of students report false accusations, and professional cases include job rejection based on incorrect AI detection results.

How do false positives reveal bias in AI detectors? False positives reveal bias because detection models misinterpret structured or non-native writing as AI-generated. Studies show that over 61% of non-native English essays were misclassified. Up to 97% of TOEFL essays were flagged by at least one detector, and 20% of Black students faced false accusations compared to 7% of white students.

Why do false positives prove low accuracy and reliability? False positives prove low reliability because AI detectors fail to consistently distinguish human and AI text. OpenAI discontinued its classifier after it correctly identified only 26% of AI-generated text. Studies confirm that detectors are neither accurate nor reliable across datasets.

Why do false positives persist despite evasion techniques? False positives persist because detection systems fail to separate edited AI text from human writing. Detection accuracy drops from 74% to 42% after minor edits. Prompt changes reduce detection rates from 100% to 0%, which shows instability in classification.

What causes high false positives in detection mechanisms? High false positives occur because detectors rely on perplexity and burstiness signals that overlap with human writing patterns. Polished and structured writing matches AI-generated patterns, which leads to misclassification. Even historical documents like the U.S. Constitution have been flagged as AI-generated.
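The two signals can be sketched in Python to show why polished human writing overlaps with AI patterns. This is a simplified illustration under stated assumptions: the per-token probabilities passed to `perplexity` would normally come from a language model and are supplied directly here, and `burstiness` uses the coefficient of variation of sentence lengths as one simple proxy. Commercial detectors may define both signals differently.

```python
import math
import re
import statistics


def perplexity(token_probs: list[float]) -> float:
    """Perplexity from per-token probabilities: exp of the mean negative log-probability.

    In practice the probabilities come from a language model; here they are
    supplied directly (an assumption of this sketch).
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    mean_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(mean_neg_log)


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence lengths, measured in words.

    One simple proxy for burstiness; detectors may define it differently.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat down. The dog ran off. The bird flew away."
varied = "Stop. The committee debated the proposal for three long hours before voting. Why?"
print(perplexity([0.5, 0.5, 0.5]))
print(burstiness(uniform), burstiness(varied))
```

The `uniform` passage is ordinary human writing, yet its sentences are all the same length, so its burstiness is zero. A detector that reads low variation as an AI signal would score it the same way it scores machine output.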

2. Easy Evasion (False Negatives)

Easy evasion is a limitation because AI detectors fail to identify AI-generated text after minor edits, which leads to false negatives and unreliable results. Easy evasion allows AI-generated content to appear human-written, which weakens detection accuracy.

There are 6 main reasons easy evasion is a limitation. The reasons are listed below.

- AI models evolve faster than detection systems.

- Human intervention bypasses detection systems.

- Detection relies on statistical patterns, not authorship proof.

- Short text lacks sufficient data for detection.

- Watermarking lacks adoption and is removable.

- Text structure limits reliable detection signals.

How do evolving AI models reduce detection effectiveness? Evolving AI models reduce detection effectiveness because newer models produce highly human-like text that detectors cannot identify. GPT-4-level models generate outputs that match human writing patterns. Detection systems trained on older models fail to recognize these newer outputs.

Why does human intervention bypass AI detection? Human intervention bypasses detection because edits remove detectable AI patterns. Paraphrasing, translation, and mixed human input reduce detection signals. Studies show that over 30% of lightly edited AI content avoids detection systems.

Why is reliance on statistical patterns a limitation? Statistical pattern reliance is a limitation because AI detectors estimate likelihood instead of confirming authorship. AI detectors analyze token distribution, entropy, and structure, which produces inconsistent results. Different detectors generate different scores for the same text.

Why does short text create false negatives? Short text creates false negatives because limited content reduces detectable statistical signals. AI detectors require sufficient text length to measure perplexity and burstiness. Brief passages lack enough data for accurate classification.

Why is watermarking ineffective for detection? Watermarking is ineffective because it is not universally implemented and can be removed. Not all AI models embed watermarks, and removal techniques bypass detection. Adversarial methods further reduce watermark reliability.

Why does text as a domain limit detection accuracy? Text limits detection accuracy because language is a discrete system without fixed markers of authorship. AI-generated text and human writing share overlapping structures. This overlap makes consistent detection difficult as evasion techniques continue to evolve.

3. Bias and Reduced Accuracy

Bias and reduced accuracy are limitations because AI detectors produce inconsistent results, misclassify specific groups, and fail to reliably distinguish AI-generated text from human writing. Bias and reduced accuracy weaken trust in detection systems and create unfair evaluation outcomes.

There are 6 main reasons bias and reduced accuracy are limitations. The reasons are listed below.

- AI detectors show low overall accuracy.

- AI detectors exhibit bias against non-native English speakers.

- AI detectors vary in accuracy across disciplines.

- AI detectors struggle with hybrid human-AI text.

- AI detectors produce inconsistent results across AI models.

- AI detectors rely on flawed and outdated systems.

How does low overall accuracy limit AI detectors? Low accuracy limits AI detectors because performance rates approach random classification levels. Studies show top tools achieve around 50% accuracy, while commercial detectors identify AI content at about 63% with false positive rates near 25%. Large-scale research confirms AI detectors are neither accurate nor reliable.
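These rates can be turned into the question an evaluator actually cares about: how likely is a flagged text to be AI-generated? The sketch below applies Bayes' rule to the 63% detection rate and 25% false positive rate quoted above. The 20% share of AI-written submissions is a hypothetical input, and the function name `flag_precision` is illustrative.

```python
def flag_precision(detection_rate: float, false_positive_rate: float, ai_share: float) -> float:
    """Probability that a flagged text is actually AI-generated (Bayes' rule)."""
    true_flags = detection_rate * ai_share
    false_flags = false_positive_rate * (1.0 - ai_share)
    if true_flags + false_flags == 0:
        raise ValueError("no texts would be flagged with these inputs")
    return true_flags / (true_flags + false_flags)


# 63% detection and 25% false positives come from the figures above;
# the 20% share of AI-written submissions is a hypothetical input.
print(round(flag_precision(0.63, 0.25, 0.20), 3))  # 0.387
```

Under these assumptions, fewer than 4 in 10 flagged texts would be AI-generated, because the human-written majority produces more false flags than the AI-written minority produces true ones.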

Why do AI detectors show bias against non-native English speakers? Bias occurs because detection models misinterpret structured or predictable language patterns as AI-generated text. Over 61% of non-native English essays were misclassified, and 97% of TOEFL essays were flagged by at least one detector. Detection scores for non-native writers average 44.61% compared to 30.68% for native writers.

Why does accuracy vary across academic disciplines? Accuracy varies because writing structure differs across disciplines, which affects detection signals. Interdisciplinary abstracts show false accusation rates up to 62.50%, while performance improves in the social sciences. Technology and engineering content shows lower detection consistency.

Why do AI detectors struggle with hybrid text? Hybrid text creates limitations because AI detectors cannot separate human-written and AI-generated sections. Detection systems show underdetection rates above 50% for mixed content. This overlap prevents accurate classification of blended writing.

How does the AI model used affect detection accuracy? Detection accuracy changes because different AI models produce different linguistic patterns. Text enhanced with Gemini 2.0 Pro is 2.8 times more likely to be flagged than text enhanced with ChatGPT. GPTZero assigns 55.50% probability to Gemini text compared to 19.79% for ChatGPT text.

Why do flawed and outdated systems reduce reliability? Reliability decreases because AI detectors rely on older models and static training data. Detection systems become obsolete as new AI models evolve. Prompt changes and paraphrasing reduce detection rates from 100% to 0%, which confirms structural weaknesses.

4. Rapidly Advancing Technology

Rapidly advancing technology is a limitation because AI generation improves faster than detection systems, which reduces accuracy and increases undetectable AI content. Rapidly advancing technology creates a gap where detectors rely on outdated models while newer AI produces human-level writing.

There are 5 main reasons rapidly advancing technology is a limitation. The reasons are listed below.

- AI models evolve beyond detection capabilities.

- Generative AI produces highly human-like text.

- Detection systems lag behind new AI models.

- Evasion techniques improve alongside AI systems.

- Human detection accuracy remains low.

How do evolving AI models reduce detection effectiveness? Evolving AI models reduce detection effectiveness because newer systems generate text that matches human writing patterns. GPT-4 and GPT-4o produce outputs with high fluency and variation, which removes detectable signals used by older detectors.

Why does human-like AI output limit detection? Human-like AI output limits detection because the similarity between AI and human writing removes clear classification boundaries. Detection accuracy drops from 74% to 42% after minor edits to AI-generated text. AI-assisted human writing often becomes indistinguishable from fully human content.

Why do detection systems lag behind AI advancements? Detection systems lag because they train on older models and static datasets. OpenAI discontinued its AI classifier after it identified only 26% of AI-generated text. This lag creates a mismatch between current AI capabilities and detection methods.

How do improving evasion techniques increase false negatives? Improving evasion techniques increases false negatives because users modify AI text to remove detectable patterns. Prompt engineering, paraphrasing, and AI humanizer tools bypass detection systems. Some techniques reduce detection rates from 100% to 0% and achieve bypass rates of 80% to 90%.

Why do human limitations reinforce this problem? Human limitations reinforce this problem because humans detect AI-generated text at only 53% accuracy. This rate is close to random guessing at 50%, which shows that even human judgment struggles with modern AI output.

5. Context and Length Constraints

Context and length constraints are limitations because AI detectors rely on sufficient text and shallow pattern analysis, which reduces accuracy in short or nuanced content. Context and length constraints prevent AI detectors from capturing meaning, intent, and reliable statistical signals.

There are 5 main reasons context and length constraints are limitations. The reasons are listed below.

- AI detectors show bias against non-native English writing.

- AI detectors fail to understand nuance and context.

- AI detectors are easy to evade with small changes.

- AI detectors fall behind rapidly evolving AI models.

- AI detectors require sufficient text length for analysis.

How does bias affect context and length limitations? Bias affects detection because limited context increases reliance on simplified patterns that misclassify non-native writing. Studies show 61% of non-native English essays were misclassified, and 97% of TOEFL essays were flagged. Reduced context amplifies reliance on surface-level signals.

Why do AI detectors fail to understand nuance and context? AI detectors fail because they analyze statistical patterns instead of semantic meaning. Complex vocabulary, formal tone, and structured arguments trigger AI signals even in human writing. Detection systems evaluate style, not factual accuracy or intent.

Why are AI detectors easy to evade under these constraints? AI detectors are easy to evade because small edits disrupt pattern-based signals. Detection accuracy drops from 74% to 42% after minor modifications. Prompt adjustments reduce detection rates from 100% to 0%, which shows instability.

How does rapid AI evolution worsen context limitations? Rapid AI evolution worsens limitations because newer models generate context-aware and human-like text. Detection systems trained on older models cannot identify these outputs. This gap reduces effectiveness over time.

Why does text length affect detection accuracy? Text length affects accuracy because AI detectors require enough data to calculate reliable patterns. Short passages lack sufficient tokens for perplexity and burstiness analysis. Word limits in free tools restrict analysis scope, which reduces detection reliability.

6. Lack of Evidence

Lack of evidence is a limitation because AI detectors produce probabilistic scores instead of verifiable proof of authorship, which makes results unreliable and non-definitive. Lack of evidence prevents AI detectors from confirming whether a human or an AI system created the content.

There are 6 main reasons lack of evidence is a limitation. The reasons are listed below.

- AI detectors do not outperform random classification.

- AI detection tools show extremely low verified accuracy.

- Large-scale studies confirm unreliable performance.

- Minor text changes invalidate detection results.

- Detection tools acknowledge limited reliability.

- Detection results are inconsistent across repeated tests.

Why does failure to outperform random classification limit AI detectors? Failure to outperform random classification limits AI detectors because results do not reliably distinguish AI-generated text from human writing. Studies show detection systems perform near random chance as of 2024, which removes confidence in classification outcomes.

Why does low accuracy prevent reliable evidence? Low accuracy prevents reliable evidence because detection tools fail to correctly identify AI-generated text consistently. OpenAI discontinued its AI Classifier after achieving only 26% success in detecting AI text and falsely flagging 9% of human writing.

What do large-scale studies prove about detection reliability? Large-scale studies prove that AI detectors are neither accurate nor reliable across multiple tools and datasets. Research covering 14 detection tools found that the best system performed correctly only about 50% of the time, which confirms weak evidence value.

Why do small text changes invalidate detection results? Small text changes invalidate detection results because AI detectors rely on unstable pattern signals. Accuracy drops significantly when AI text is edited to appear more human, which removes consistency in detection outcomes.

Why do detection tools acknowledge limited reliability? Detection tools acknowledge limited reliability because their scoring systems include uncertainty and error margins. Turnitin states its AI detection is not always accurate and that scores at or below 20% carry low confidence, which confirms a lack of definitive evidence.

Why are AI detection results inconsistent? AI detection results are inconsistent because the same content produces different scores across multiple tests. A 2025 study showed identical files receiving different AI probability scores without any changes, which undermines credibility and repeatability.

How reliable are AI detectors?

AI detectors are not highly reliable because they perform near random accuracy levels, produce inconsistent results, and rely on probabilistic estimation instead of proof. AI detectors estimate the likelihood of AI-generated text, but results vary widely across tools, datasets, and writing styles.

What is the reliability of AI-generated content detectors? AI-generated content detectors have not demonstrated reliability beyond random chance as of 2024. AI detectors assign probability scores, not definitive classifications, which leads to inconsistent outputs. The same document can receive 0% AI from one tool and 100% AI from another. A 2025 study confirmed that repeated tests on the same file produce different scores.
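Disagreement between tools or repeated runs can be measured directly before any single score is trusted. The sketch below summarizes the spread of several AI-probability scores for one document. The tool names and scores are hypothetical, and `score_spread` is an illustrative helper, not part of any detector's API.

```python
import statistics


def score_spread(scores: dict[str, float]) -> dict[str, float]:
    """Summarize how far apart several AI-probability scores are for one text."""
    if len(scores) < 2:
        raise ValueError("need scores from at least two tools or runs")
    values = list(scores.values())
    return {
        "min": min(values),
        "max": max(values),
        "range": max(values) - min(values),
        "stdev": statistics.pstdev(values),
    }


# Hypothetical tool names and scores for a single document.
summary = score_spread({"tool_a": 0.0, "tool_b": 0.55, "tool_c": 1.0})
print(summary["range"])  # 1.0
```

A range of 1.0 means the tools span the entire scale for the same text, so the scores carry no usable signal until other evidence is added.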

What issues exist with false positives and false negatives? AI detectors produce high false positives and false negatives, which leads to incorrect classification. Human-written content is flagged as AI-generated, while AI-generated text passes as human. Studies show false positive rates of 24.5% to 25%. Cases include human essays flagged as 100% AI and independent writing flagged at 70%.

How do different AI detection tools perform? AI detection tools show inconsistent performance across platforms and models. Turnitin reports 98% confidence with a ±15% margin of error. Compilatio reached 70% accuracy in 2023 and 99% in late 2024. GPTZero flagged the U.S. Constitution as AI-generated, which shows inconsistency. Free tools show mixed results, with some detecting 100% of AI text while others detect none.

What limitations reduce AI detector reliability? AI detector reliability decreases due to model lag, evasion techniques, and lack of transparency. New AI models produce human-like text that detectors cannot identify. Paraphrasing and translation bypass detection systems. Short text and mixed content reduce accuracy, and detectors do not explain classification decisions.

How are AI detectors used in academic settings? AI detectors are used as supportive tools, not definitive decision systems. No universal standard exists for AI detection in education. Some institutions use detection scores for initial review, while final decisions rely on human evaluation. The primary function remains educational assessment, not automated enforcement.

How Does the Future of AI Detectors Look?

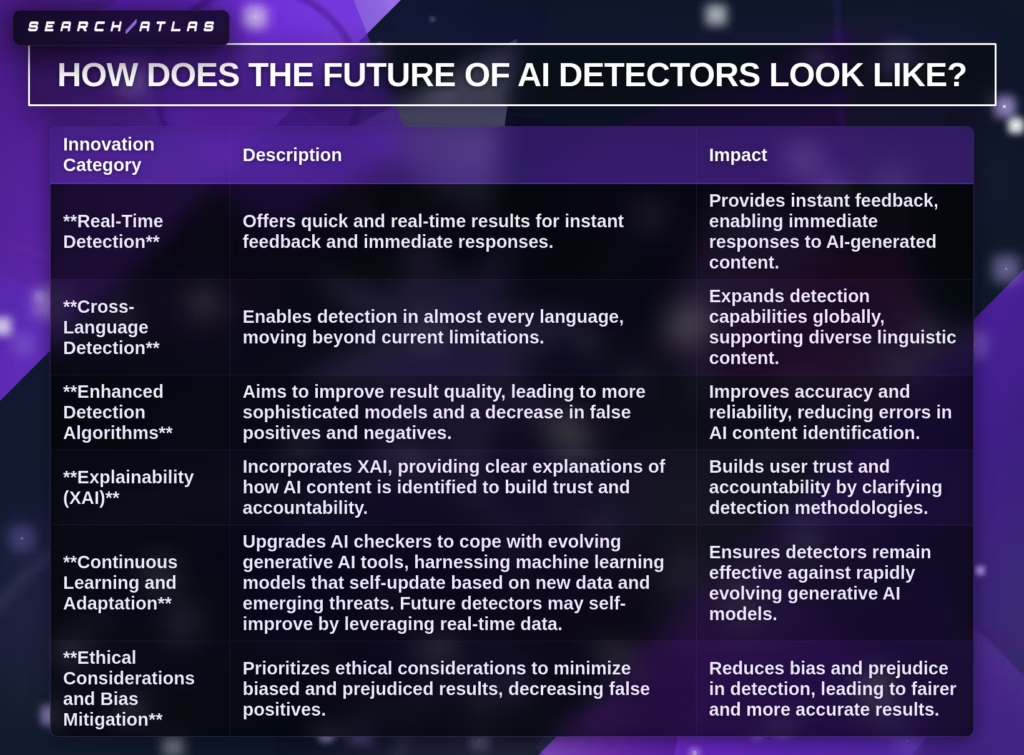

The future of AI detectors shows rapid market growth, increasing sophistication, and a shift from standalone detection tools to integrated evaluation systems. AI detectors are evolving into systems that combine detection, analysis, and contextual validation across multiple content formats.

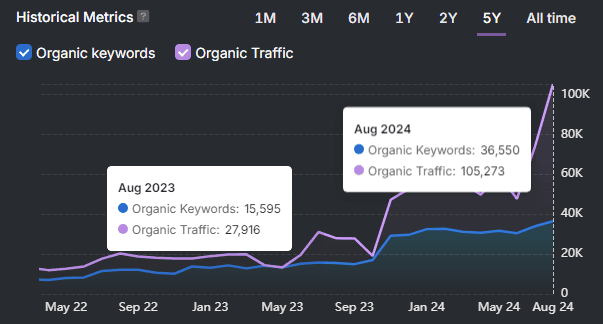

What is the projected market growth of AI detectors? The AI detector market is projected to grow from USD 0.98 billion in 2026 to USD 7.84 billion in 2035 and USD 31.37 billion by 2040. This growth represents a CAGR of 25.99% from 2026 to 2035. Market expansion reflects increased demand for content verification across education, media, and enterprise systems.
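The growth rate can be checked against the 2026 and 2035 projections with the standard compound annual growth rate formula. The helper name `cagr` is illustrative, and the sketch only reproduces arithmetic on the figures quoted above.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# USD 0.98 billion (2026) to USD 7.84 billion (2035) spans 9 years.
print(f"{cagr(0.98, 7.84, 2035 - 2026):.2%}")  # 25.99%
```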

How is the role of AI detectors evolving? AI detectors are shifting from enforcement tools to educational and analytical systems. AI detectors function as conversation starters in education and as quality filters in content workflows. Institutions prioritize transparency, AI literacy, and process-based evaluation instead of relying only on detection scores.

What new features will future AI detectors include? Future AI detectors will include advanced integrations, multimodal detection, and source identification capabilities. AI detectors will analyze text, images, and video within unified systems. Integration with content platforms will allow real-time validation and continuous monitoring.

What are the key innovations shaping AI detection? There are 6 main innovation categories shaping AI detection. The categories are listed below.

- Real-time detection enables instant analysis and feedback.

- Cross-language detection expands coverage across global content.

- Enhanced algorithms improve accuracy and reduce errors.

- Explainability provides transparent reasoning behind results.

- Continuous learning enables adaptation to new AI models.

- Bias mitigation improves fairness and reduces misclassification.

What challenges will continue to limit AI detectors? AI detectors will remain limited because detection systems react to AI models instead of leading them. Detection tools lag behind generation systems, which creates a continuous arms race. Accuracy ceilings remain around 90% for specific use cases, and results change as models evolve.

How will watermarking influence the future of AI detection? Watermarking will influence detection by embedding identifiable patterns directly into AI-generated content. Watermarking methods use statistical frameworks to detect machine-generated text without altering readability. Adoption by major AI providers and regulatory pressure will determine effectiveness.
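One published family of text watermarks biases generation toward a pseudo-random "green list" of tokens, then tests whether a text contains more green tokens than chance would predict. The sketch below shows only that detection-side statistic. It is a simplified assumption, not the method of any specific AI provider: real schemes use a secret key and the model's own tokenizer, while `is_green` here hashes plain strings without a key.

```python
import hashlib
import math


def is_green(prev_token: str, token: str, gamma: float = 0.5) -> bool:
    """Pseudo-randomly assign `token` to the green list, seeded by the previous token."""
    digest = hashlib.sha256(f"{prev_token}\x00{token}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    return int.from_bytes(digest[:8], "big") / 2**64 < gamma


def watermark_z_score(green_count: int, total: int, gamma: float = 0.5) -> float:
    """z-score of the observed green-token count against the no-watermark expectation."""
    if total <= 0:
        raise ValueError("total must be positive")
    expected = gamma * total
    return (green_count - expected) / math.sqrt(total * gamma * (1 - gamma))


def score_tokens(tokens: list[str], gamma: float = 0.5) -> float:
    """Count green tokens over consecutive pairs and return the z-score."""
    pairs = list(zip(tokens, tokens[1:]))
    green = sum(is_green(prev, tok, gamma) for prev, tok in pairs)
    return watermark_z_score(green, len(pairs), gamma)


print(watermark_z_score(green_count=75, total=100))  # 5.0
```

Unwatermarked text should score near zero, while a large positive z-score suggests the generator favored green tokens. Paraphrasing replaces tokens and pulls the count back toward chance, which is why removal remains a practical weakness.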

Are AI Detectors Accurate?

No, AI detectors are not consistently accurate because they rely on probabilistic models, produce inconsistent results, and often perform near random classification levels. AI detectors estimate likelihood instead of proving authorship, which creates variability across tools and use cases.

Why are AI detectors not consistently accurate? AI detectors are not consistently accurate because studies show performance often aligns with random chance and varies across tools. A 2025 study confirmed that the same content receives different scores across repeated checks. This inconsistency prevents reliable classification.

What accuracy ranges do AI detectors report? AI detectors report accuracy ranges of 80% to 99%, but these figures exclude the impact of false positives and vary by content type. High accuracy claims often reflect detection of obvious AI text, not real-world mixed or edited content. Tuning a detector to produce fewer false positives reduces how much AI text it catches.

Why do false positives affect perceived accuracy? False positives distort accuracy because detectors inflate success rates by over-flagging human content. A system can detect 100% of AI content simply by labeling most text as AI-generated. This tradeoff reduces practical reliability.
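The over-flagging effect is easy to reproduce with a toy test set. The sketch below computes the detection rate and the false positive rate for a hypothetical detector that flags every text. The data and the helper name `detector_metrics` are assumptions for illustration.

```python
def detector_metrics(labels: list[bool], flags: list[bool]) -> dict[str, float]:
    """Detection rate and false positive rate from ground truth and detector flags.

    labels[i] is True when text i is AI-generated; flags[i] is True when flagged.
    """
    if len(labels) != len(flags):
        raise ValueError("labels and flags must have the same length")
    ai_total = sum(labels)
    human_total = len(labels) - ai_total
    if ai_total == 0 or human_total == 0:
        raise ValueError("need at least one AI and one human text")
    true_pos = sum(1 for is_ai, flagged in zip(labels, flags) if is_ai and flagged)
    false_pos = sum(1 for is_ai, flagged in zip(labels, flags) if not is_ai and flagged)
    return {
        "detection_rate": true_pos / ai_total,
        "false_positive_rate": false_pos / human_total,
    }


# Hypothetical test set: 2 AI texts, 8 human texts, and a detector that flags everything.
labels = [True] * 2 + [False] * 8
print(detector_metrics(labels, [True] * 10))
# {'detection_rate': 1.0, 'false_positive_rate': 1.0}
```

A headline figure of "100% of AI text detected" is therefore meaningless without the false positive rate reported next to it.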

Why do simple edits reduce detection accuracy? Simple edits reduce accuracy because AI detectors rely on unstable pattern signals. Minor paraphrasing and structure changes remove detectable features, which causes detection failure.

What is the overall conclusion about AI detector accuracy? AI detectors are unreliable for definitive decisions because accuracy varies, results conflict, and outputs lack proof of authorship. AI detectors function as indicators, not evidence, which requires human validation and additional verification methods.

Can Human-Written Text Be Flagged as AI-Generated?

Yes, human-written text can be flagged as AI-generated because AI detectors produce false positives and misclassify legitimate writing. AI detectors rely on pattern recognition, and the patterns they look for overlap with many forms of human writing.

Why do AI detectors flag human-written text as AI-generated? AI detectors flag human text because structured, formal, and error-free writing matches AI detection patterns. Writing with a consistent tone, clear grammar, and predictable structure triggers low perplexity and low burstiness signals, which leads to misclassification.
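The two signals named above can be sketched in a few lines. This is a simplified illustration, not the scoring used by any specific detector: perplexity is computed from per-token probabilities that some language model is assumed to have supplied, and burstiness is approximated as the coefficient of variation of sentence lengths. Both function names and the sentence-splitting rule are assumptions for this example.

```python
import math
import re
import statistics


def perplexity(token_probs: list[float]) -> float:
    """exp of the mean negative log-probability assigned to each token.

    `token_probs` is assumed to come from a language model; lower values
    mean the text was more predictable to that model.
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    mean_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(mean_neg_log)


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence lengths, measured in words."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        raise ValueError("need at least two sentences")
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat down. The dog ran off. The sun came up."
varied = ("Stop. The storm rolled in over the hills before anyone "
          "had noticed the darkening sky. We ran.")

print(burstiness(uniform), burstiness(varied))
```

The uniform passage has identical sentence lengths, so its burstiness is zero, which is the kind of evenness that polished, formal human writing can also produce. That overlap is why careful human prose can trip the same signals.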

What evidence shows human writing is misclassified? Multiple cases show that AI detectors incorrectly flag human-written content. Turnitin misidentified 4% of human papers as AI-generated. QuillBot flagged a fully human essay as 42% AI. The U.S. Constitution and the Old Testament were flagged as 100% AI-generated.

Which types of human writing are most affected? Certain writing styles are more likely to be flagged due to pattern similarity. Formal academic writing, ESL writing, and grammar-corrected text show higher false positive rates. Neurodivergent writing patterns can also trigger detection signals.

What are the real-world consequences of false positives? False positives create academic and institutional risks because incorrect flags lead to penalties and distrust. A graduate thesis was flagged at 92% AI, and essays written in supervised environments were flagged as 100% AI. Universities like MIT, Yale, and Vanderbilt reduced reliance on AI detectors due to these issues.

What is the overall conclusion? Human-written text is flagged as AI-generated because AI detectors cannot reliably distinguish authorship. AI detectors function as indicators, not proof, which requires human review and additional validation.

Will AI Content Detectors Improve Over Time?

No, AI content detectors will not consistently improve because performance remains unstable, inconsistent, and dependent on rapidly changing AI models. AI content detectors show temporary gains, but overall reliability does not improve in a sustained or predictable way.

Why do AI detectors fail to show consistent improvement? AI detectors fail to improve consistently because performance fluctuates across time, tools, and test conditions. In January 2023, top detectors achieved 66% accuracy, while in 2025, some tools reached perfect scores in isolated tests. Later evaluations showed fewer tools maintaining those results, which points to instability, not steady progress.

Why does inconsistency limit long-term improvement? Inconsistency limits improvement because the same content produces different results across tools and repeated tests. A 2025 study showed identical files receiving different AI scores without changes. This variability prevents standardization and reliability.

Why does accuracy remain near random levels? Accuracy remains near random levels in many real-world tests because detection methods cannot reliably separate AI-generated and human-written text. Studies as of 2024 reported detectors that failed to outperform random chance on mixed or edited content. This limitation prevents consistent progress.

Why do AI advancements prevent detector improvement? AI advancements prevent improvement because generation models evolve faster than detection systems. New AI models produce more human-like text, which removes detectable signals. Detection systems trained on older models become obsolete quickly.

Why did OpenAI discontinue its AI detection tool? OpenAI discontinued its AI detection tool because it failed to achieve reliable accuracy. The system identified only 26% of AI-generated text correctly, which confirms the difficulty of improving detection systems.

What is the overall conclusion about future improvement? AI content detectors will remain unreliable because structural limitations, rapid AI evolution, and inconsistent performance prevent stable progress. AI detectors function as indicators, not definitive solutions, which limits long-term improvement.